OCZ's Fastest SSD, The IBIS and HSDL Interface Reviewed

by Anand Lal Shimpi on September 29, 2010 12:01 AM EST

Take virtually any modern day SSD and measure how long it takes to launch a single application. You’ll usually notice a big advantage over a hard drive, but you’ll rarely find a difference between two different SSDs. Present day desktop usage models aren’t able to stress the performance high end SSDs are able to deliver. What differentiates one drive from another is really performance in heavy multitasking scenarios or short bursts of heavy IO. Eventually this will change as the SSD install base increases and developers can use the additional IO performance to enable new applications.

In the enterprise market however, the workload is already there. The faster the SSD, the more users you can throw at a single server or SAN. There are effectively no limits to the IO performance needed in the high end workstation and server markets.

These markets are used to throwing tens if not hundreds of physical disks at a problem. Even our upcoming server upgrade uses no less than fifty two SSDs across our entire network, and we’re small beans in the grand scheme of things.

The appetite for performance is so great that many enterprise customers are finding the limits of SATA unacceptable. While we’re transitioning to 6Gbps SATA/SAS, for many enterprise workloads that’s not enough. Answering the call, many manufacturers have designed PCIe based SSDs that do away with SATA as a final drive interface. The designs range from a bunch of SATA based devices paired with a PCIe RAID controller on a single card to native PCIe controllers.

The OCZ RevoDrive, two SF-1200 controllers in RAID on a PCIe card

OCZ has been toying in this market for a while. The zDrive took four Indilinx controllers and put them behind a RAID controller on a PCIe card. The more recent RevoDrive took two SandForce controllers and did the same. The RevoDrive 2 doubles the controller count to four.

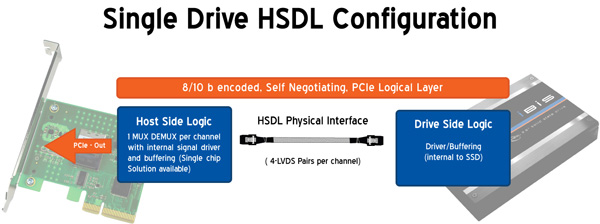

Earlier this year OCZ announced its intention to bring a new high speed SSD interface to the market. Frustrated with the slow progress of SATA interface speeds, OCZ wanted to introduce an interface that would allow greater performance scaling today. Dubbed the High Speed Data Link (HSDL), OCZ’s new interface delivers 2 - 4GB/s (that’s right, gigabytes) of aggregate bandwidth to a single SSD. It’s an absolutely absurd amount of bandwidth, definitely more than a single controller can feed today - which is why the first SSD to support it will be a multi-controller device with internal RAID.

Instead of relying on a SATA controller on your motherboard, HSDL SSDs feature a 4-lane PCIe SATA controller on the drive itself. HSDL is essentially a PCIe cable standard that uses a standard SAS cable to carry four PCIe lanes between an SSD and your motherboard. On the system side you’ll just need a dumb card with some amount of logic to grab the cable and fan the signals out to a PCIe slot.
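As a rough sanity check on those bandwidth figures, the sketch below computes the usable bandwidth of a four-lane PCIe link. The function name is made up for illustration, and reading the 2 - 4GB/s range as PCIe 1.x versus PCIe 2.0 signaling counted in both directions is an assumption; the article does not spell that out. The 8b/10b line encoding is what both of those PCIe generations use.

```python
def pcie_usable_mb_s(lanes, gigatransfers_per_s, payload_bits=8, line_bits=10):
    """Usable bandwidth in MB/s for ONE direction of a PCIe link.

    Hypothetical helper: 8b/10b encoding means only 8 of every 10 bits
    on the wire carry data, so raw signaling rate overstates throughput.
    """
    raw_bits_per_s = lanes * gigatransfers_per_s * 1e9
    usable_bits_per_s = raw_bits_per_s * payload_bits / line_bits
    return usable_bits_per_s / 8 / 1e6  # bits -> bytes -> megabytes


# Assumption: the low end of the quoted range is PCIe 1.x (2.5 GT/s per lane),
# the high end is PCIe 2.0 (5 GT/s per lane), both on four lanes.
gen1_x4_one_way = pcie_usable_mb_s(lanes=4, gigatransfers_per_s=2.5)
gen2_x4_one_way = pcie_usable_mb_s(lanes=4, gigatransfers_per_s=5.0)

# "Aggregate" is taken here to mean both directions added together.
gen1_x4_aggregate = 2 * gen1_x4_one_way
gen2_x4_aggregate = 2 * gen2_x4_one_way

print(f"x4 Gen1: {gen1_x4_one_way:.0f} MB/s each way, {gen1_x4_aggregate:.0f} MB/s aggregate")
print(f"x4 Gen2: {gen2_x4_one_way:.0f} MB/s each way, {gen2_x4_aggregate:.0f} MB/s aggregate")
```

Under those assumptions the aggregate numbers land at roughly 2GB/s and 4GB/s, while the figure that matters for a one-way sequential transfer is half of that.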

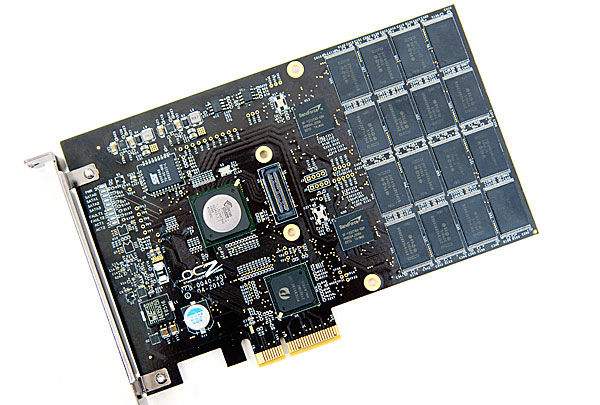

The first SSD to use HSDL is the OCZ IBIS. As the spiritual successor to the Colossus, the IBIS incorporates four SandForce SF-1200 controllers in a single 3.5” chassis. The four controllers sit behind an internal Silicon Image 3124 RAID controller. This is the same controller used in the RevoDrive which is natively a PCI-X controller, picked to save cost. The 1GB/s of bandwidth you get from the PCI-X controller is routed to a Pericom PCIe x4 switch. The four PCIe lanes stemming from the switch are sent over the HSDL cable to the receiving card on the motherboard. The signal is then grabbed by a chip on the card and passed through to the PCIe bus. Minus the cable, this is basically a RevoDrive inside an aluminum housing. It's a not-very-elegant solution that works, but the real appeal would be controller manufacturers and vendors designing native PCIe-to-HSDL controllers.
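The data path described above is a chain, and a chain is only as fast as its slowest stage. The sketch below makes that explicit. The per-stage numbers are assumptions for illustration: about 300MB/s per controller is the ceiling of a 3Gbps SATA link, 1000MB/s is the PCI-X figure quoted in the text, and the PCIe figure assumes first generation signaling on four lanes. None of these are measured values.

```python
# Each stage: (description, assumed peak throughput in MB/s, one direction).
stages = [
    ("4x SF-1200 on 3Gbps SATA (assumed ~300 MB/s each)", 4 * 300),
    ("Silicon Image 3124 on PCI-X (about 1GB/s per the article)", 1000),
    ("PCIe x4 over the HSDL cable (assumed Gen1, ~1000 MB/s)", 1000),
]


def find_bottleneck(stages):
    """Return the (description, MB/s) pair of the slowest stage.

    On a tie, min() keeps the first slowest stage in the chain.
    """
    return min(stages, key=lambda stage: stage[1])


name, ceiling = find_bottleneck(stages)
print(f"Ceiling: {ceiling} MB/s, set by: {name}")
```

With these assumed figures the four controllers could collectively outrun the PCI-X link, which is consistent with the point that a native PCIe design is where the real appeal lies.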

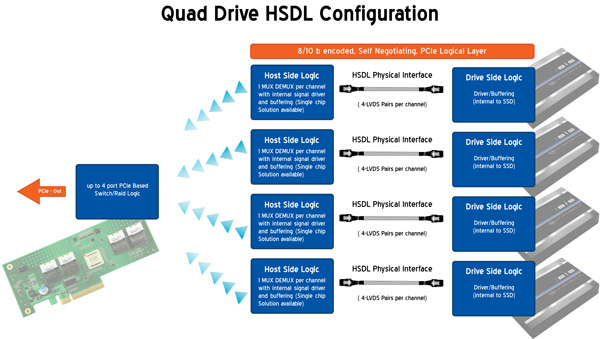

OCZ is also bringing to market a 4-port HSDL card with a RAID controller on board ($69 MSRP). You’ll be able to RAID four IBIS drives together on a PCIe x16 card for an absolutely ridiculous amount of bandwidth. The attainable bandwidth ultimately boils down to the controller and design used on the 4-port card, however. I'm still trying to get my hands on one to find out for myself.

74 Comments


MRFS - Wednesday, September 29, 2010 - link

> A mini-SAS2 cable has four lanes of 6 Gbps for a total of 24 Gbps.

That was also my point (posted at another site today):

What happens when 6G SSDs emerge soon to comply

with the current 6G standard? Isn't that what many of

us have been waiting for?

(I know I have!)

I should think that numerous HBA reviews will tell us which setups

are best for which workloads, without needing to invest

in a totally new cable protocol.

For example, the Highpoint RocketRAID 2720 is a modest SAS/6G HBA

we are considering for our mostly sequential workload

i.e. updating a static database that we upload to our Internet website,

archiving 10GB+ drive images + lots of low-volume updates.

http://www.newegg.com/Product/Product.aspx?Item=N8...

That RR2720 uses an x8 Gen2 edge connector, and

it provides us with lots of flexibility concerning the

size and number of SSDs we eventually will attach

i.e. 2 x SFF-8087 connectors for a total of 8 SSDs and/or HDDs.

If we want, we can switch to one or two SFF-8087 cables

that "fan out" to discrete HDDs and/or discrete SSDs

instead of the SFF-8087 cables that come with the 2720.

$20-$30 USD, maybe?

Now, some of you will likely object that the RR2720

is not preferred for highly random high I/O environments;

so, for a little more money there are lots of choices that will

address those workloads nicely e.g. Areca, LSI etc.

Highpoint even has an HBA with a full x16 Gen2 edge connector.

What am I missing here? Repeating, once again,

this important observation already made above:

"A mini-SAS2 cable has four lanes of 6 Gbps for a total of 24 Gbps."

If what I am reading today is exactly correct, then

the one-port IBIS is limited to PCI-E 1.1: x4 @ 2.5 Gbps = 10 Gbps.

p.s. I would suggest, as I already have, that the SATA/6G protocol

be modified to do away with the 10/8 transmission overhead, and

that the next SATA/SAS channel support 8 Gbps -- to sync it optimally

with PCI-E Gen3's 128/130 "jumbo frames". At most, this may

require slightly better SATA and SAS cables, which is a very

cheap marginal cost, imho.

MRFS
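To put numbers on the encoding overhead raised in the comment above, here is a small sketch comparing line encodings. The helper name is made up for illustration, and the 8 Gbps link with 128b/130b encoding is the commenter's proposal, not an existing SATA or SAS standard. The 6 Gbps and 2.5 Gbps lane rates are the raw signaling rates quoted in the comment.

```python
def payload_mb_s(raw_gbps, payload_bits, line_bits, lanes=1):
    """Payload bandwidth in MB/s after line-encoding overhead (hypothetical helper)."""
    return lanes * raw_gbps * 1e9 * payload_bits / line_bits / 8 / 1e6


# Current 6 Gbps SATA/SAS lane with 8b/10b encoding (20% of bits are overhead).
sata_6g = payload_mb_s(6, 8, 10)

# The commenter's proposed 8 Gbps lane with 128b/130b encoding (about 1.5% overhead).
proposed_8g = payload_mb_s(8, 128, 130)

# Four-lane comparisons from the comment, both with 8b/10b encoding.
mini_sas2_x4 = payload_mb_s(6, 8, 10, lanes=4)     # 24 Gbps raw
pcie_1_1_x4 = payload_mb_s(2.5, 8, 10, lanes=4)    # 10 Gbps raw

print(f"6 Gbps, 8b/10b:          {sata_6g:.0f} MB/s")
print(f"8 Gbps, 128b/130b:       {proposed_8g:.0f} MB/s")
print(f"mini-SAS2 x4 (24 Gbps):  {mini_sas2_x4:.0f} MB/s")
print(f"PCIe 1.1 x4 (10 Gbps):   {pcie_1_1_x4:.0f} MB/s")
```

These are link ceilings only; they say nothing about what a given controller or HBA can sustain.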

blowfish - Wednesday, September 29, 2010 - link

I for one found this to be a great article, and I always enjoy Anand's prose - and I think he surely has a book or two in him. You certainly can't fault him for politeness, even in the face of some fierce criticism. So come on critics, get real, look what kind of nonsense you have to put up with at Tom's et al!

sfsilicon - Wednesday, September 29, 2010 - link

Hi Anand,

Thank you for writing the article and shedding some light on this new product and standard from OCZ. Good to see you addressing the objectivity questions posted by some of the other posters. I enjoyed reading the additional information and analysis from some of the more informed posters. I do wonder if the source of the bias of your article is based on your not being aware of some of the developments in the SSD space or just being exposed to too much vendor Kool-Aid. Not sure which one is the case, but hopefully my post will help generate a more balanced article.

---

I agree with several of the previous posters that both the IBIS drives and the HSDL interface are nothing new (no matter how hard OCZ marketing might want to make it look that way). As one of the previous posters said, it is an SSD specific implementation of a PCIe extender solution.

I'm not sure how HSDL started, but I see it as a bridging solution because OCZ does not have 6G technology today. Recently released 6G dual port solutions will allow single SSD drives to transfer up to a theoretical 1200 MB/s per drive.

It does allow higher scaling beyond 1200 MB/s per drive through channel bundling, but the SAS standardization committee is already looking into that option in case 12Gbps SAS ends up becoming too difficult to do. Channel bundling is inherent to SAS and addresses the bandwidth threat brought up by PCIe.

The PCIe channel bundling / IBIS Drive solution from OCZ also looks a bit like an uncomfortable balancing act. Why do you need to extend the PCIe interface to the drive level? Is it just to maintain the more familiar "Drive" based use model? Or is it really a way to package 2 or more 3Gbps drives to get higher performance? Why not stick with a pure PCIe solution?

Assuming you don't buy into the SAS channel bundling story, or you need a drive today that has more bandwidth, why another proprietary solution? The SSD industry is working on NVMHCI, which will address the concern of proprietary PCIe card solutions and will allow addressing of PCIe based cards as a storage device (Intel backed and evolved from AHCI).

While OCZ's efforts are certainly to be applauded, especially given their aggressive roadmap plans, a more balanced article should include references to the above developments to put the OCZ solution into perspective. I'd love to see some follow-up articles on multi-port SAS and NVMHCI as a primer on how the SSD industry is addressing the technology limitations of today's SSDs. In addition it might be interesting to talk about the recent SNIA performance spec (soon to include client specific workloads) and JEDEC's endurance spec.

---

I continue to enjoy your in-depth reporting on the SSD side.

don_k - Wednesday, September 29, 2010 - link

"..or have a very fast, very unbootable RAID."

Not quite true actually. PCIe drives of this type, meaning drives that are essentially multiple ssd drives attached to a raid controller on a single board, show up on *nix OS's as individual drives as well as the array device itself.

So under *nix you don't have to use the onboard raid (which does not provide any performance benefits in this case, since there is no battery backed cache) and you can then create a single RAID0 across all the individual drives on all your PCIe cards, however many those are.
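For readers unfamiliar with what a single RAID0 across many drives means in practice, the sketch below shows generic block striping: consecutive stripes of logical blocks are dealt round-robin across the member drives. The function name, the eight-drive count (say, two cards exposing four drives each) and the stripe size are all assumptions for illustration; this is not the on-disk layout of any particular software RAID tool.

```python
def raid0_locate(logical_block, num_drives, stripe_blocks):
    """Map a logical block number to (drive index, block number on that drive).

    Hypothetical helper illustrating plain round-robin striping.
    """
    stripe_index, offset_in_stripe = divmod(logical_block, stripe_blocks)
    drive = stripe_index % num_drives             # stripes rotate across drives
    stripe_on_drive = stripe_index // num_drives  # how many stripes deep on that drive
    return drive, stripe_on_drive * stripe_blocks + offset_in_stripe


# Assumed setup: 8 member drives, 16 blocks per stripe.
NUM_DRIVES = 8
STRIPE_BLOCKS = 16

for block in (0, 15, 16, 133):
    drive, physical = raid0_locate(block, NUM_DRIVES, STRIPE_BLOCKS)
    print(f"logical block {block:>3} -> drive {drive}, block {physical}")
```

Because neighbouring stripes sit on different drives, a large sequential transfer keeps every member busy at once, which is where the bandwidth scaling comes from.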

eva2000 - Thursday, September 30, 2010 - link

would love to see some more tests on non-windows platform i.e. centos 5.5 64bit with mysql 5.1.50 database benchmarks - particularly on writes.

bradc - Thursday, September 30, 2010 - link

With the PCI-X converter this is really limited to 1066MB/s, but minus overhead is probably in the 850-900MB/s range, which is what we see on one of the tests, just above 800MB/s.

While this has the effect of being a single SSD for cabling and so on, I really don't see the appeal. Why didn't they just use something like this http://www.newegg.com/Product/Product.aspx?Item=N8...

I hope the link works; if it doesn't, I linked to an LSI 8204ELP for $210. It has a single 4x SAS connector for 1200MB/s; OCZ could link that straight into a 3.5" bay device with 4x SandForce SSDs on it. This would be about the same price or cheaper than this IBIS device, while giving 1200MB/s, which is going to be about 40% faster than the IBIS.

It would make MUCH MUCH more sense to me to simply have a 3.5" bay device with a 4x SAS port on it which can connect to just about any hard drive controller. The HSDL interface thing is completely unnecessary with the current PCI-X converter chip on the SSD.

sjprg2 - Friday, October 1, 2010 - link

Why are we concerning ourselves with memory controllers instead of just going directly to DMA? Just because these devices are static rams doesn't change the fact they are memory. Just treat them as such. It's called KISS.

XLV - Friday, October 1, 2010 - link

One clarification: these devices and controllers use multi-lane SAS internal ports and cables, but are electrically incompatible. What will happen if one by mistake attaches an IBIS to a SAS controller, or a SAS device to the HSDL controller? Do the devices get fried, or is there at least some keying to the connectors, so such a mistake can be avoided?

juhatus - Sunday, October 3, 2010 - link

"Even our upcoming server upgrade uses no less than fifty two SSDs across our entire network, and we’re small beans in the grand scheme of things."

Why didn't you go for a real SAN? Something like EMC CLARiiON?

You just like fiddling with disks, don't you? :)

randomloop - Tuesday, October 5, 2010 - link

In the beginning, we based our aerial video and imaging recording system on OCZ SSD drives, based on their specs.

They've all failed after several months of operation.

Aerial systems endure quite a bit of jostling. Hence the desire to use SSD's.

We had 5 of 5 OCZ 128G SSD drives fail during our tests.

We now use other SSD drives.