Apple 27-inch LED Cinema Display Review

by Anand Lal Shimpi on September 28, 2010 12:15 AM EST

Posted in: Displays, Mac, Apple, Cinema Display

Power Consumption

With the U2711, Dell opted for a CCFL backlight to deliver a wider color gamut. Apple moved to LED to reduce the size of the display's chassis and cut power consumption. Even while charging a MacBook Pro and running at full brightness, the 27-inch LED Cinema Display never got more than warm. Part of this is due to the vent in the back of the display:

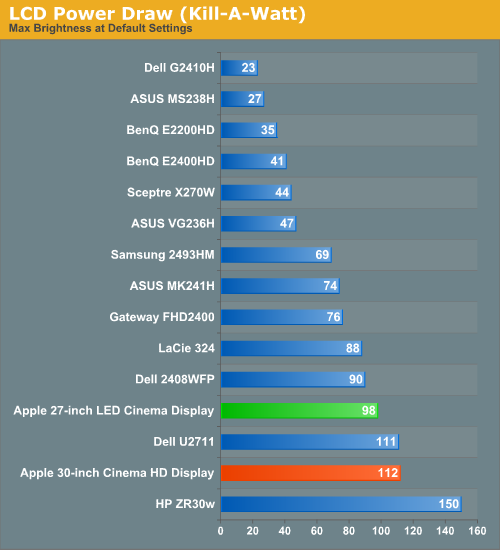

The display is also just generally power efficient:

The 27-inch LED Cinema Display tops out at 98W at full brightness, only saving about 14W compared to my old 30-inch display. Power efficiency is greatly improved, however: at 98W you get a brighter display than almost anything on the list. Note that this is peak power consumption without a notebook attached to the MagSafe port. I plugged in a 2010 15-inch MacBook Pro with a nearly dead battery and measured a maximum power draw of 166W at the wall for the display plus charging the notebook.

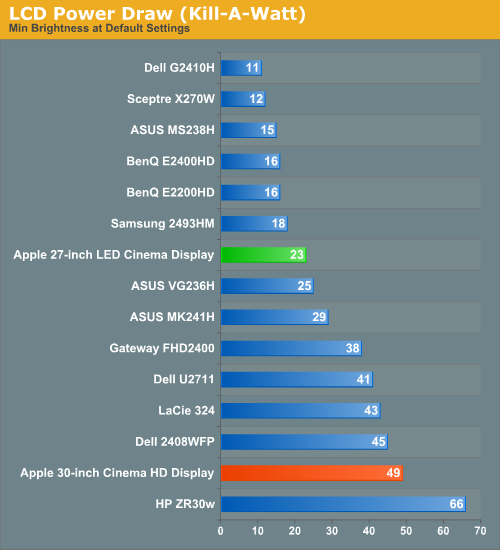

At the lowest brightness setting the new Cinema Display sips power, 23W to be exact. You can even go up to 50% brightness (~100 nits) and never pull more than 40W at the wall.
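The figures quoted above can be combined with a little arithmetic. The sketch below is only illustrative: the constants are the wall-power numbers from the text, the function names are hypothetical, and the "implied" draw of the older 30-inch display is an assumption derived from the approximate 14W saving rather than a measured value.

```python
# Wall-power figures quoted in the review, in watts.
DISPLAY_PEAK_W = 98            # full brightness, no notebook attached
DISPLAY_PLUS_CHARGING_W = 166  # full brightness while charging a 15-inch MacBook Pro
DISPLAY_MIN_W = 23             # lowest brightness setting
SAVING_VS_OLD_30_W = 14        # approximate saving versus the older 30-inch display


def charging_overhead_w(total_w: int, display_w: int) -> int:
    """Power attributable to charging the notebook (total minus display alone)."""
    return total_w - display_w


def implied_old_display_w(new_w: int, saving_w: int) -> int:
    """Draw of the older display implied by the quoted saving (an assumption)."""
    return new_w + saving_w


def reduction_fraction(high_w: int, low_w: int) -> float:
    """Fractional drop in power when going from high_w to low_w."""
    return (high_w - low_w) / high_w


if __name__ == "__main__":
    print("Charging overhead (W):",
          charging_overhead_w(DISPLAY_PLUS_CHARGING_W, DISPLAY_PEAK_W))
    print("Implied old 30-inch draw (W):",
          implied_old_display_w(DISPLAY_PEAK_W, SAVING_VS_OLD_30_W))
    print("Full-to-minimum brightness reduction:",
          f"{reduction_fraction(DISPLAY_PEAK_W, DISPLAY_MIN_W):.1%}")
```

By these numbers, charging the notebook accounts for roughly 68W of the 166W measured at the wall.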

93 Comments


burgerace - Tuesday, September 28, 2010

Wide color gamut is, for most non-professional users, a horrible drawback. Operating systems, web browsers and sites, images from my SLR camera, games, movies -- content is created for traditional color gamut!

At the recommendation of tech sites like this one, I bought two WCG Dell monitors, a 2408 and a 2410. They exhibit garish red push, and distorted colors in general. ATI drivers can use EDID to adjust the color temperature, reducing red push to a manageable level. But when I "upgraded" to an NVIDIA 460, I lost that option.

Anand, do you actually look at the monitors with your eyes? Can you see how bad WCG looks? Forget the tables full of misleading numbers from professional image editing software, please.

7Enigma - Tuesday, September 28, 2010

I think your problem is that most people spending this chunk of change on an LCD also have them properly calibrated. As mentioned in this exact review the uncalibrated picture was quite bad. This LCD might have even been cherry-picked for the review unit (don't know if this was sent by Apple for review or Anand purchased it for personal use). So WYSIWYG doesn't apply when calibration is performed.

burgerace - Tuesday, September 28, 2010

WCG monitors are NOT capable of displaying a greater number of colors than a traditional monitor. They display the same 24 bit color, but it's spread over a greater range of wavelengths.

ALL mainstream content is designed to use only the 73% gamut. There is no way to "calibrate" a monitor to make mainstream content look good. Either the monitor displays the content within the correct, limited gamut -- thereby using less than 24bit color to render the image and throwing out visual information -- or it spreads it out over the wide gamut, causing inaccurate colors.

Pinkynator - Tuesday, September 28, 2010

Finally someone who knows what they're talking about!

I've finally registered here to say the exact same thing as you, but instead I'll give you my full support.

People just don't seem to understand that wide gamut is probably the second worst thing that happened to computer displays, right after TN monitors. It's bad - it's seriously bad.

Things might change a very long time from now, in a distant future, *IF* we get graphics cards with more bits per channel and monitors capable of understanding that (along with proper software support), but right now it's just something that is being pushed by marketing. Even tech review sites like Anandtech managed to fall for that crap, misleading monitor buyers into thinking that bigger gamut equals a better picture. In fact, it's exactly the opposite.

To go into a serious theoretical hyperbole for those who do not understand the implications of a stretched wide gamut with 8BPC output, a monitor with a 1000000000% gamut would only be capable of displaying one single shade of red, green or blue. Everything at 0 would be black, and everything from 1..255 would be eye-scorchingly red, green or blue. (Actually, the shades would technically differ, but the human eye would not be able to discern them.)

Your options with wide gamut are as follows:

1) Display utterly inaccurate colours

2) Emulate sRGB and throw out colour information, lowering the dynamic range and picture quality

That's it. Nothing else. Wide gamut, as it stands right now, DESTROYS the displayed image.

If you like wide gamut, that's fine - there are people who like miss Justine Bieber, too, but that doesn't make her good.

vlado08 - Tuesday, September 28, 2010

I don't understand sRGB emulation.

But probably on the input of the monitor you have 8 bits per color, and through processing they change it to 10 bits to drive the panel? This way you may not lose dynamic range. Well, the color information will be less than 10 bits per color, but you don't have this color in the input to begin with. Tell me if I'm wrong.

Pinkynator - Wednesday, September 29, 2010

Example:

Pure red (255,0,0) on a wide gamut monitor is more intense than pure red on a normal gamut monitor (which content is created for, thus ends up looking incorrect on WG).

That means (255,0,0) should actually be internally transformed by the monitor to something like (220,0,0) if you want the displayed colour to match that of the normal monitor and show the picture accurately. It also means that when the graphics card gives the monitor (240,0,0), the monitor would need to transform it to (210,0,0) for proper display - as you can see, it has condensed 15 shades of red (240-255) into only 10 (210-220).

To put it differently, if you display a gradient on a wide gamut monitor performing sRGB emulation, you get banding, or the monitor cheats and does dithering, which introduces visible artifacts.

Higher-bit processing is basically used only because the gamut does not stretch linearly. A medium grey (128,128,128) would technically be measured as something like (131, 130, 129) on the WG monitor, so there's all kinds of fancy transformations going on in order to not make such things apparently visible.

Like I said, if we ever get more bits in the entire display path, this whole point becomes moot, but for now it isn't.
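The shade-counting argument in the comment above can be sketched in Python. This is a minimal illustration, assuming a purely linear per-channel remap in which an input of 255 lands on 220 (the figure used in the example); real monitors apply nonlinear transforms, as the comment itself notes. The function name `remap` and its parameters are hypothetical.

```python
def remap(value: int, target_max: int = 220, out_bits: int = 8) -> int:
    """Linearly remap an 8-bit channel value so that 255 lands on target_max.

    target_max is given on the 8-bit scale; the result is quantized to
    out_bits bits. Integer arithmetic with round-half-up avoids float error.
    This linear model is an assumption, not how any specific monitor works.
    """
    out_full = (1 << out_bits) - 1          # 255 for 8-bit, 1023 for 10-bit
    numerator = value * target_max * out_full
    denominator = 255 * 255
    return (2 * numerator + denominator) // (2 * denominator)


def distinct_levels(inputs: range, out_bits: int) -> int:
    """Count how many distinct output codes a set of input codes produces."""
    return len({remap(v, out_bits=out_bits) for v in inputs})


if __name__ == "__main__":
    full_range = range(256)
    print("8-bit output, distinct levels:", distinct_levels(full_range, 8))
    print("10-bit output, distinct levels:", distinct_levels(full_range, 10))
    print("Inputs 240..255 at 8-bit output:",
          distinct_levels(range(240, 256), 8))
```

Under this linear assumption, the 256 input levels collapse to 221 distinct codes when the output stays at 8 bits, which is the source of the banding described above. With a 10-bit output all 256 inputs remain distinct, which is the case the earlier question about 10-bit panel processing raises.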

andy o - Tuesday, September 28, 2010

If you have your monitor properly calibrated, it's not a problem. You don't have to "spread" sRGB's "73%" (of what? I assume you mean Adobe RGB). You create your own content in its own color gamut. A wider gamut monitor can ensure that the colors in it overlap other devices like printers, thus proofing becomes more accurate.

Wide gamut monitors are great for fairly specialized calibrated systems, but I agree they're not for movie watching or game use.

teng029 - Tuesday, September 28, 2010

still not compliant, i'm assuming..

theangryintern - Tuesday, September 28, 2010

Grrrrrr for it being a glossy panel. I *WAS* thinking about getting this monitor, but since I sit at my desk with my back to a large window, glossy doesn't cut it. That and the fact that I HATE glossy monitors, period.

lukeevanssi - Wednesday, September 29, 2010

I haven't used it myself, but a close friend did and said it works great - he has two monitors hooked up to his 24" iMac. I have, however, ordered stuff from OWC before (I get all my Apple RAM there since it's a lot cheaper than the Apple Store and it's all Apple-rated RAM) and they are awesome.

http://www.articlesbase.com/authors/andrew-razor/6...