Everything You Always Wanted to Know About SDRAM (Memory): But Were Afraid to Ask

by Rajinder Gill on August 15, 2010 10:59 PM ESTEvery read/write memory transaction can be segmented by type into one of three performance bins depending on the status of the bank/page to be accessed. These bins, in order of best to worst, are page-hit, page-empty, and page-miss. For the most part, anything we can do to increase the number of page-hit transactions or reduce the number of page-miss transactions is a good thing.

A page-hit access is defined as any read or write operation to an open page. That is, the bank containing the open page is already active and is immediately ready to service requests. Because the target page is already open, the nominal access latency for any memory transaction falling into this category is approximately tCAS (the CAS Latency of the device).

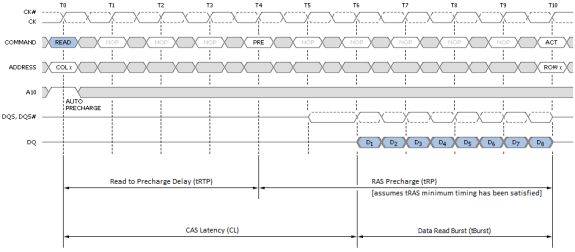

Figure 6 shows the minimum read latency associated with a best-case page-hit scenario. For a part with a CAS Latency of 6T, the memory controller waits only six short clocks before the start of data return. During a Read with Auto-Precharge, the Read command will execute as normal except the active bank will begin precharging CAS-latency (CL) clock cycles before the end of the burst. This feature allows the precharge operation to be partially or completely hidden during periods of burst read cycles, dependent on CL. When tuning our systems we always seek to set tRTP such that tRTP + tRP equals CL + tBurst for exactly this reason. Put another way, if CL and tRP are the same set 4T for DDR3 (2T for DD2).

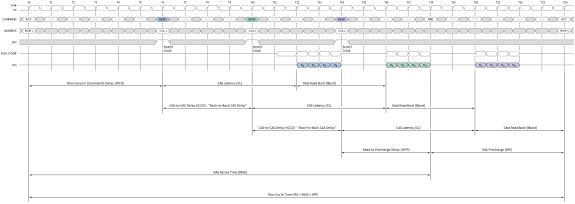

Sequential reads to the same page make these types of transactions even more profitable as each successive access can be scheduled at a minimum of tBurst (4T) clocks from the last. The timing is captured as the CAS-to-CAS Delay (tCCD) and is commonly referred to as 'Back-to-Back CAS Delay' (B2B), as shown per Figure 7. This feature makes possible extremely high data transfer rates for total burst lengths of one page or less - in our case, 8KB.

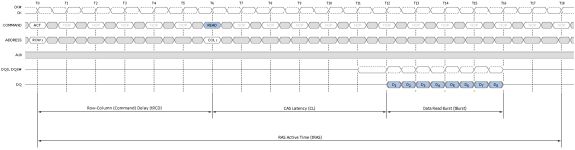

Although not ideal, a page-empty access is still preferred to a miss. In this case the bank to be accessed is Idle with no page open. Common sense tells us any attempt to read or write data to a page in this bank first requires we Activate the bank. In other words, nominal access latency now includes the time to open the page - Row-Column (or Command) Delay (tRCD). This is a doubling of the minimum access latency when compared to that of the page-hit case! Twelve cycles (tRCD + CL) now elapse before the first word is returned. Figure 8 shows this the detail.

Finally, as if the relative penalty of page-empty access wasn't bad enough, here comes page-miss. A miss occurs anytime a memory transaction must first close an open page in order to open an alternate page in the same bank. Only then can the specified data access take place. First closing an open page requires a Precharge, adding the RAS Precharge (tRP) delay to any already lengthy operation. As you can see by Figure 9, the nominal latency of an access of this type is three times that of one page-hit operation!

The relative gain/loss ratio for each access type can be quickly assessed simply through a cursory review of the most basic device timings. Imagine a memory kit rated for operation at DDR3-1600, 6-6-6-18 (CL-tRCD-tRP-tRAS): With nothing more we can estimate six cycles for a page-hit access, 12 cycles for a page-empty access, and 18 cycles for a page-miss access.

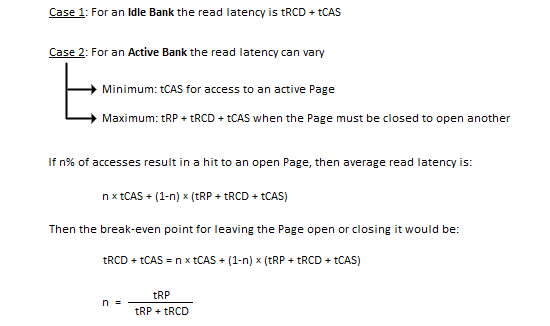

Normalized to the page-hit access latency, page-empty access is twice as long, and page-miss access is a whole three times as long. If we combine this with what we know about the inner functions of the SDRAM state machine we see page-hit and page-miss are really just subsets of the same bank state (active). Of course, page-empty access necessarily implies an idle bank. The following proof rewards us with some powerful insight.

The variable n also represents the percentage of accesses to banks with open pages that must result in a page-hit access if we are to simply pace the nominal access latency that would be achieved if every read access was to an idle bank. And the only thing this depends on is the RAS Precharge delay and the Row-Column (or Command) Delay of the device in question.

You would think by working to maximize n, performance would be maximized as well. And you'd be right. Let's take what we've learned thus far and step it up a notch. We promise, after this you'll never see memory timings in the same light ever again.

Before proceeding, we've prepared a video for those of you that would like to view a few simple animations meant to help visualize each transaction type:

46 Comments

View All Comments

ekoostik - Tuesday, August 17, 2010 - link

Great article. Going to take me a few more reads. One question - why no mention of Command Rate (and I double checked the Memory Scaling on Core i7 article, absent there too)? CR is often included in RAM specs, e.g. 9-9-9-24-2T, but never fully discussed if mentioned at all. Is it just not important anymore?Muhammed - Tuesday, August 17, 2010 - link

Ok I managed to royally confuse my self !What I know is that DDR3 operates at 1/8 the rated frequency , that means in case of DDR3 @800MHz , the internal memory operations are actually running at 100MHz , but the memory is able to fetch 8-words every clock cycle .

So 100MHz X (8 words ) = 800 Word per second as data rate , then the manufacturer misleadingly label the RAM module as a 800MHz part .

so the real benefit of DDR3 over DDR or DDR2 comes not from increased operating frequency , but from higher bandwidth .

To stress that fact , I mention DDR2 @800MHz , it operates at 200Mhz (internal clock) , however it only fetches 4-words every clock cycle , (200X4 = 800 Words).

When DDR3 operates at 200MHz (internal operations) like DDR2 , it fetches double the data , effectively managing 1600 words per second .

NOW , in your article .. you mention the base clock (I/o Bus) and you mention the double data rate , I know the I/O Bus clock is always 2 or 4 times the internal clock , so DDR3 @ 100MHz , has a 400MHz I/O bus .. but I couldn't understand the I/O bus function and it's relation in data transmission and data rate .

I am missing something here , could you enlighten me ?

Edison5do - Tuesday, August 17, 2010 - link

I Really was Affraid..!!Edison5do - Tuesday, August 17, 2010 - link

Technical Reading !!! LOve Thishasherr - Wednesday, August 18, 2010 - link

Great article. But what i dont get is how the hell motherboard knows all those timings? In SPDs there are like N timings described, isnt there really more? At least in bios settings i see more.Another thing. I buy Kingston 1800 MT/s module, with SPDs up to 1333 MTs. I overclock and make it run @ rated 1800mt/s speed. All timings are on auto. How the hell mobo/bios guess all of them :)?

ClagMaster - Wednesday, August 18, 2010 - link

Afraid to Ask ?After perusing through this I find myself afraid to read.

Comprehensive article for a novice EE

just4U - Wednesday, August 18, 2010 - link

Great article and ..." ....should be to focus development on reducing absolute minimum latency requirements for timings such as CAS and tRCD, rather than chasing.."

I hope the memory makers and shakers out there read that!!

lyeoh - Saturday, August 21, 2010 - link

The mistake counter bit counts seem OK to me. In what way are they wrong?There are 9 MSB (most significant bits) in the table.

Yes there are 13 bits in the counter, but the 9 bits in the table only refer to the 9 "top bits" of those 13 bits.

For example, if I have an 8 bit counter but 4 bits in some table only refer to the 4 most significant bits, then that means that you'd only see all zeroes in those 4 bits when the counter has values from 0 to 15 (0x0 to 0xF). When the counter has values from 240 to 255 (0xF0 to 0xFF), you'd see all ones in those 4 bits.

As for the description, I don't know the details of how the stuff works, so I don't know whether it's wrong or not.

dia - Saturday, August 21, 2010 - link

Read the explanation here:http://download.intel.com/design/processor/datasht...

Page 79.

To quote:

"MISTAKECOUNTER.

This field is the upper 8 MSBs of a 12-bit counter. This counter adapts the

interval between assertions of the page close flag. For a less aggressive page

close, the length of the count interval is increased and vice versa for a more

aggressive page close policy."

Now look at the left hand column, it shows 8:0. That's 9 bits! It's a 13 bit counter.

If it were a 12 bit counter the maximum permissible selection value would be 4095 and not 8191.

datasegment - Saturday, August 21, 2010 - link

Quick fyi - 8k is not 8196, it is 8192 :)