A New 30" Contender: HP ZR30w Review

by Brian Klug on June 1, 2010 6:30 PM EST

ZR30w Color Quality

We’ll start out with the color quality of the ZR30w. As per usual, we report two metrics: color gamut and color accuracy (Delta E). Color gamut refers to the range of colors the display is able to represent with respect to some color space. In this case, our reference is the AdobeRGB 1998 color space, which is larger than the sRGB color space. Our percentages are reported relative to AdobeRGB 1998, and larger is better.

Color accuracy (Delta E) refers to the display’s ability to display the correct color requested by the GPU. The difference between the color shown by the display and the color requested by the GPU is our Delta E, and lower is better here. In practice, a Delta E under 1.0 is perfect - the chromatic sensitivity of the human eye is not great enough to distinguish a difference. Moving up, a Delta E of 2.0 or less is generally considered fit for use in a professional imaging environment - it isn’t perfect, but it’s hard to gauge the difference. Finally, a Delta E of 4.0 and above is considered visible to the human eye. Of course, the big consideration here is frame of reference; unless you have another monitor or some print samples (color checker card) to compare your display with, you probably won’t notice. That is, until you print or view media on another monitor. Then the difference will be very apparent.
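To make the metric concrete, here is a minimal sketch of how a Delta E figure can be computed. It assumes the simplest definition, CIE76, which is just the straight-line distance between two colors in CIELAB space; the calibration software may well use a different Delta E formula. The function names and the sample patch values are made up for illustration.

```python
import math


def delta_e_cie76(lab_requested, lab_measured):
    """Euclidean distance between two CIELAB colors (the CIE76 Delta E)."""
    return math.sqrt(
        sum((r - m) ** 2 for r, m in zip(lab_requested, lab_measured))
    )


def describe(delta_e):
    """Map a Delta E value onto the rough bands described in the text."""
    if delta_e < 1.0:
        return "indistinguishable to the eye"
    if delta_e <= 2.0:
        return "fit for professional imaging"
    if delta_e < 4.0:
        # The text does not characterize this range; the label is an assumption.
        return "between the professional and clearly visible bands"
    return "visible to the eye"


# Hypothetical patch: requested vs. measured (L*, a*, b*) values, made-up numbers.
requested = (50.0, 0.0, 0.0)
measured = (50.0, 3.0, 4.0)
error = delta_e_cie76(requested, measured)
print(error, describe(error))  # 5.0 visible to the eye
```

An average Delta E like the ones in our charts would then simply be the mean of this value over all measured patches.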

As I mentioned in our earlier reviews, we’ve updated our display test bench. We’ve deprecated the Monaco Optix XR Pro colorimeter, which no longer has up-to-date drivers for modern platforms, in favor of an Xrite i1D2. We’ve also done testing and verification with a Spyder 3 colorimeter. We’re using the latest version of ColorEyes Display Pro - 1.52.0r32 - for both color tracking and brightness testing.

We’re providing data from other display reviews taken with the Monaco Optix XR alongside new data taken with an Xrite i1D2. They’re comparable, but both the operator and the instrumentation have changed, so the comparison isn’t perfect. It’s close, though.

For these tests, we calibrate the display and try to obtain the best Delta E we can get at both 200 nits and 100 nits (print brightness). We target 6500K and a gamma of 2.2, but sometimes performance is better using the monitor’s native measured whitepoint and gamma. We also take uncalibrated measurements that show out-of-box performance using the manufacturer-supplied color profile. For all of these, dynamic contrast is disabled. The ZR30w has no controls other than brightness, which we manually adjust to hit our 200 nit and 100 nit targets.

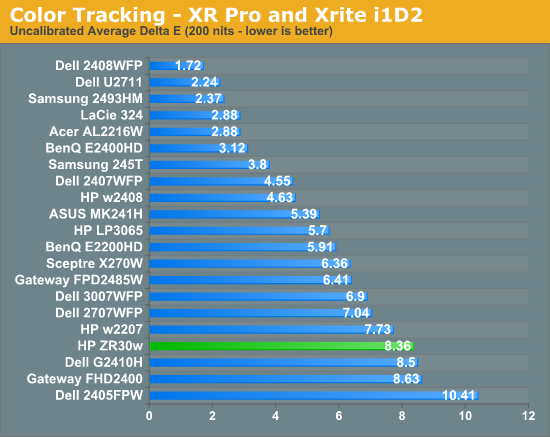

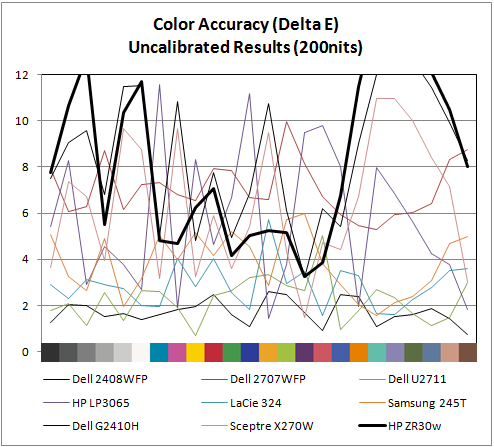

So, how does the ZR30w do? Let’s dive into the charts:

Out of box, the ZR30w looks a tad cool in temperature and is very vibrant. Perhaps even too vibrant, but then again maybe that's what 1 billion colors looks like. I’m a bit surprised that uncalibrated performance isn’t better than what I measured. I ran and re-ran this test expecting something to be wrong with my setup - it just doesn’t perform very well in this objective uncalibrated test. That isn’t to say it doesn’t look awesome - it does - but the ZR30w strongly benefits from calibration.

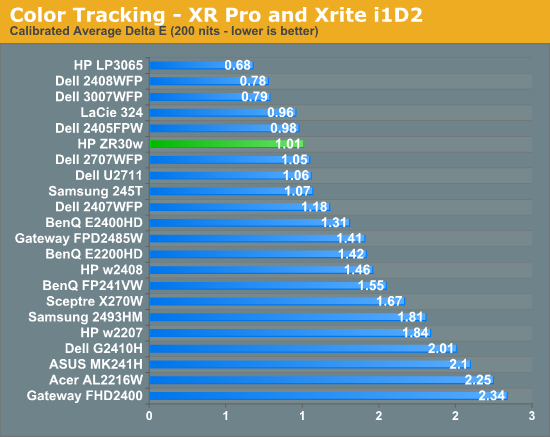

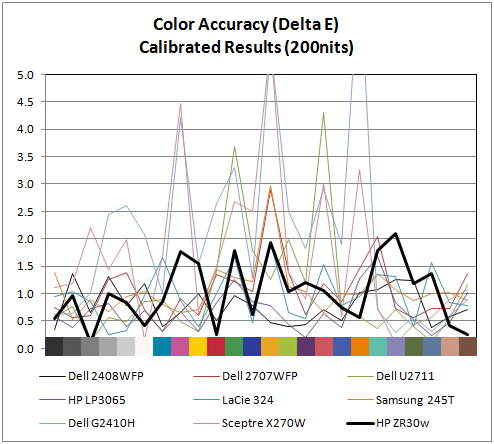

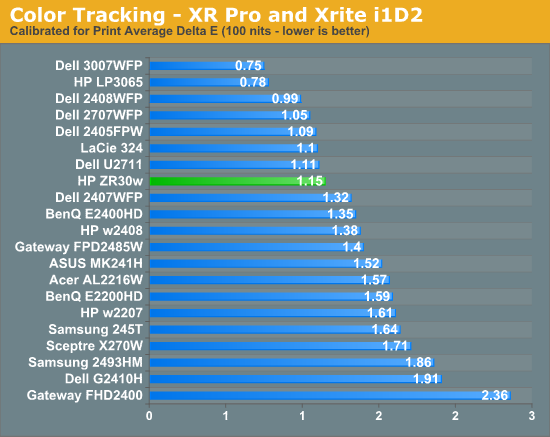

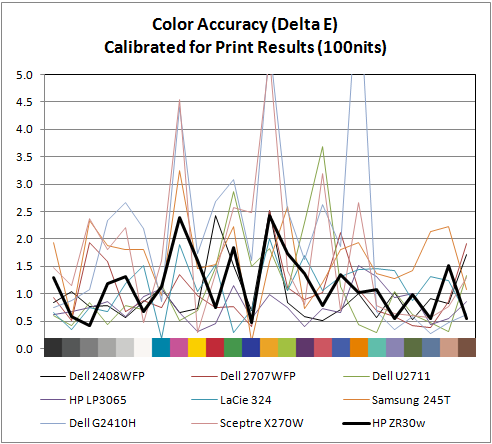

Moving to calibrated performance at 200 nits, the ZR30w really starts to deliver, with an impressive Delta E of 1.01. Pay attention to the charts: there's not a single peak above 2.0, which is awesome. I couldn’t get the ZR30w all the way down to 100 nits - the lowest the display will go is right around 150 nits. Surprisingly, Delta E actually gets a bit worse, and moves up to 1.15 at the dimmest setting. Interestingly, the highest peak jumps up to 2.5 at this brightness. I’ll talk more about brightness in a second, but it’s pretty obvious that the ZR30w wants to be bright. You can just tell from the brightness range you can get to in the menus, from 150 nits up to the maximum around 400, and it’s somewhere in between there that Delta E really shines.

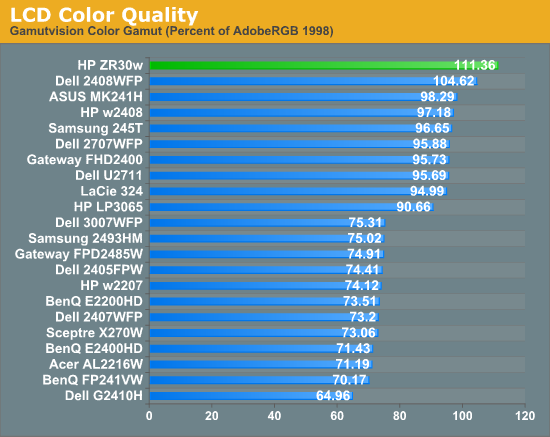

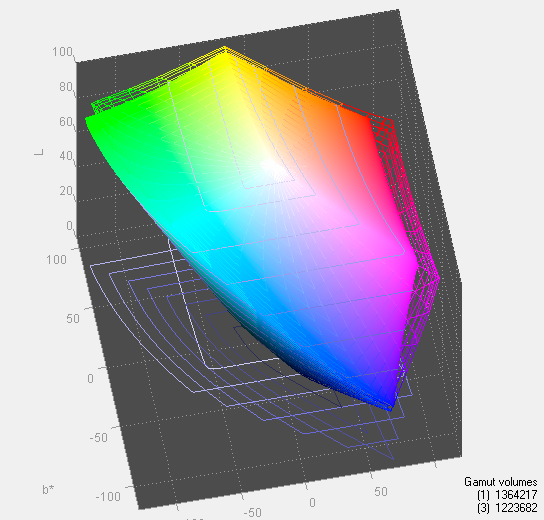

Of course, the ZR30w delivers in color gamut. Note that in the volumetric 3D plot, the wireframe plot is the ZR30w, and the solid plot is AdobeRGB 1998 - that’s right, we’ve exceeded the AdobeRGB color space. The raw data is impressive: the display manages 111.36% coverage, the highest we’ve tested. In this case, we’ve exceeded the manufacturer's claim of 99% AdobeRGB by a notable margin. I have no trouble believing that HP's claims about 1+ billion colors are totally accurate - you have to see it in person to believe it. There are just some colors I'm used to not seeing represented very well, reds and blues especially, and the photos that I have looked at are spectacular.

IPS panels are still very, very win. It’d be awesome to see a Delta E under 1.0, but I just couldn’t get that from the ZR30w I tested. The additional difference would of course be absolutely indistinguishable to the human eye, but it’d be an awesome bragging right. But you've already got more than a billion colors.

95 Comments

Mumrik - Wednesday, June 2, 2010 - link

They basically never have. It's really a shame though - to me the ability to put the monitor into portrait mode with little to no hassle is one of the major advantages to LCD monitors.

softdrinkviking - Tuesday, June 1, 2010 - link

brian, i think it's important to remember that

1. it is unlikely that people can perceive the difference between 24 bit and 30 bit color.

2. to display 30 bit color, or 10 bit color depth, you also need an application that is 10 bit aware, like maya or AutoCad, in which case the user would most likely opt for a workstation card anyway.

i am unsure, but i don't think windows 7 or any other normally used program is written to take advantage of 10 bit color depth.

from what i understand, 10 bit color and "banding" only really has an impact when you edit and reedit image files over and over, in which case, you are probably using medical equipment or blowing up photography to poster sizes in a professional manner.

here is a neat little AMD pdf on their 30 bit implementation

http://ati.amd.com/products/pdf/10-Bit.pdf

zsero - Wednesday, June 2, 2010 - link

I think the only point where you need to watch 10-bit _source_ is when watching results from medical imaging devices. Doctors say that they can see difference between 256 and 1024 gray values.

MacGyver85 - Thursday, June 3, 2010 - link

Actually Windows 7 does support 10 bit encoding, it even supports more than that; 16 bit encoding!

http://en.wikipedia.org/wiki/ScRGB_color_space

softdrinkviking - Saturday, June 5, 2010 - link

all that means is that certain components of windows 7 "support" 16 bit color.

it does not mean that 16 bit color is displayed at all times.

scRGB is a color profile management specification that allows for a wider amount of color information than sRGB, but it does not automatically enable 16 bit color, or even 10 bit deep color.

you still need to be running a program that is 10 bit aware, or using a program that is running in a 10 bit aware windows component. (like D3D).

things like aero (which uses directx) could potentially take advantage of an scRGB color profile with 10 bit deep encoding, but why would it?

it would suck performance for no perceivable benefit.

the only programs that really use 10 bit color are professional imaging programs for medical and design uses.

it is unlikely that will change because it is more expensive to optimize software for 10 bit color, and the benefit is only perceivable in a handful of situations.

CharonPDX - Wednesday, June 2, 2010 - link

I have an idea for how to improve your latency measurement.

Get a Matrox DualHead2Go Digital Edition. This outputs DVI-I over both outputs, so each can do either analog or digital. Test it with two identical displays over both DVI and VGA to make sure that the DualHead2Go doesn't directly introduce any lag. Compare with two identical displays, one over DVI and one over VGA, to see if either the display or the DualHead2Go introduces lag over one interface over the other. (I'd recommend trying multiple pairs of identical displays to verify.)

This would rip out any video card mirroring lag (most GPUs do treat the outputs separately, and those outputs may produce lag,) and leave you solely at the mercy of any lag inherent to the DH2Go's DAC.

Next, get a high quality CRT, preferably one with BNC inputs. Set the output to 85 Hz for max physical framerate. (If you go with direct-drive instead of DualHead2Go, set the resolution to something really low, like 1024x768, and set the refresh rate as high as the display will go. The higher, the better. I have a nice-quality old 22" CRT that can go up to 200 Hz at 640x480 and 150 Hz at 1024x768.)

Then, you want to get a good test. Your 3dMark is pretty good, especially with its frame counter. But there is an excellent web-based one at http://www.lagom.nl/lcd-test/response_time.php (part of a wonderful series of LCD tests.) This one goes to the thousandths of a second. (Obviously, you need a refresh rate pretty high to actually display all those, but if you can reach it, it's great.)

Finally, take your pictures with a high-sensitivity camera at 1/1000 sec exposure. This will "freeze" even the fastest frame rate.

zsero - Wednesday, June 2, 2010 - link

In the operating systems 32-bit is the funniest of all, it is the same as 24-bit, except I think they count the alpha channel too, so RGB would be 24-bit and RGBA would be 32-bit. But as far as I know on an operating system level it doesn't mean anything useful, it just looks good in the control panel. True Color would be a better name for it. In any operating system if you take a screenshot, the result will be 24 bit color RGB data.

From wikipedia:

32-bit color

"32-bit color" is generally a misnomer in regard to display color depth. While actual 32-bit color at ten to eleven bits per channel produces over 4.2 billion distinct colors, the term “32-bit color” is most often a misuse referring to 24-bit color images with an additional eight bits of non-color data (I.E.: alpha, Z or bump data), or sometimes even to plain 24-bit data.
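The color counts being thrown around in this thread are just powers of two, which a few lines of Python can confirm. This is only an illustrative sketch; the function name is made up for the example.

```python
def distinct_colors(total_bits):
    """Number of distinct values that fit in the given number of bits."""
    return 2 ** total_bits


# Total bits per pixel for the color depths discussed in the thread.
depths = [
    ("24-bit (8 bits per channel)", 24),
    ("30-bit (10 bits per channel)", 30),
    ("true 32-bit", 32),
]

for label, bits in depths:
    print(f"{label}: {distinct_colors(bits):,} colors")

# Gray levels per channel at 8 and 10 bits.
print(distinct_colors(8), distinct_colors(10))  # 256 1024
```

The 30-bit row works out to 1,073,741,824, which is where the "1+ billion colors" figure comes from, and the 32-bit row to 4,294,967,296, the "over 4.2 billion" in the quote.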

velis - Wednesday, June 2, 2010 - link

However:

1. Reduce size to somewhere between 22 and 24"

2. Add RGB LED instead of CCFL (not edge lit either)

3. Add 120Hz for 3D (using multiple ports when necessary)

4. Ditch the 30 bits - only good for a few apps

THEN I'm all over this monitor.

As it is, it's just another 30 incher, great and high quality, but still just another 30 incher...

I SO want to replace my old Samsung 215TW, but there's nothing out there to replace it with :(

zsero - Wednesday, June 2, 2010 - link

Gamut will not change between 24-bit and 30-bit color, as it is a physical property of the panel in question (+ lighting).

So the picture will be visually the same, nothing will change, except if you are looking at a very fine gradient, it will not have any point where you would notice a sharp line.

Think about it in Photoshop. You make a 8-bit grayscale image (256 possible grey value for each pixel), and apply a black to white gradient on its whole size horizontally. Now look at the histogram, you see continuous distribution of values from 0 to 255.

Now make some huge color correction, like do a big change in gamma. Now the histogram is not a continuous curve, but something full of spikes, as because of the rounding errors a correction from 256 possible values to 256 possible values skips certain values.

Now apply a levels correction, and make the darkest black into for example 50 and the brightest white into for example 200. What happens now, is that you are compressing the whole dynamic range into a much smaller interval, but as your scale is fixed, you are now using only 150 values for the original range. That's exactly what is happening for example when you use a calibrator software to calibrate a wide-gamut (close to 100% AdobeRGB) monitor for sRGB use, because you need to use it in not color aware programs (very, very common situation).
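The Photoshop experiment above can be roughly simulated without Photoshop. The sketch below is only an approximation of what an image editor does: it assumes a plain power-law gamma of 2.2 as the "huge color correction" and simple rounding to integer levels, and all function names are made up for the example.

```python
def distinct_levels(values):
    """Count how many different output levels are actually used."""
    return len(set(values))


def gamma_correct(value, gamma, in_max, out_max):
    """Apply a power-law curve and round to the nearest output level."""
    return round(out_max * (value / in_max) ** gamma)


def compress_levels(value, low=50, high=200, in_max=255):
    """Levels correction: squeeze the full input range into low..high."""
    return round(low + value * (high - low) / in_max)


gradient = range(256)  # 8-bit black-to-white gradient, one pixel per level

# 8-bit in, 8-bit out: rounding merges some levels and skips others.
same_depth = [gamma_correct(v, 2.2, 255, 255) for v in gradient]
# 8-bit in, 10-bit out: more output levels, so fewer inputs collide.
deeper = [gamma_correct(v, 2.2, 255, 1023) for v in gradient]
# Levels correction from 0..255 down to 50..200.
squeezed = [compress_levels(v) for v in gradient]

print(distinct_levels(same_depth))  # fewer than 256: the spiky histogram
print(distinct_levels(deeper))      # more survive with 10-bit output
print(distinct_levels(squeezed))    # 151
```

The last number is the "only 150 values" effect (151 when both endpoints are counted): 256 input shades have to share the levels from 50 to 200, so neighbouring shades collapse together and fine gradients can start to band.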

For actual real-world test, I would simply suggest you to use the calibrator software to calibrate your monitor to sRGB, and have a look at fine gradients. For example check it with the fullscreen tools from http://lcdresource.com/tools.php

Rick83 - Wednesday, June 2, 2010 - link

I feel that Eizo has been ahead in this area for a while, and it appears that it will stay there.

The screen manager software reduces the need of on-screen buttons, but still gives you direct access to gamma, color temperature, color values and even power on/off timer functions as well as integrated profiles - automatically raising brightness when opening a photo or video app, for example.

Taking all controls away is a bit naive :-/