OCZ's Vertex 2, Special Sauce SF-1200 Reviewed

by Anand Lal Shimpi on April 28, 2010 3:17 PM EST

Still Resilient After Truly Random Writes

In our Agility 2 review I did what you all asked: used a newer build of Iometer to not only write data in a random pattern, but write data composed of truly random bits in an effort to defeat SandForce’s data deduplication/compression algorithms. What we saw was a dramatic reduction in performance:

Iometer Performance Comparison - 4K Aligned, 4KB Random Write Speed

| Drive | Normal Data | Random Data | % of Max Perf |
|---|---|---|---|
| Corsair Force 100GB (SF-1200 MLC) | 164.6 MB/s | 122.5 MB/s | 74.4% |
| OCZ Agility 2 100GB (SF-1200 MLC) | 44.2 MB/s | 46.3 MB/s | 105% |

Iometer Performance Comparison

| Corsair Force 100GB (SF-1200 MLC) | Normal Data | Random Data | % of Max Perf |
|---|---|---|---|
| 4KB Random Read | 52.1 MB/s | 42.8 MB/s | 82.1% |
| 2MB Sequential Read | 265.2 MB/s | 212.4 MB/s | 80.1% |
| 2MB Sequential Write | 251.7 MB/s | 144.4 MB/s | 57.4% |
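For readers who want to check the "% of Max Perf" column, it is simply the random-data throughput divided by the normal-data throughput. The short Python sketch below recomputes it from the figures in the tables above; the function name `pct_of_max` is made up for this illustration.

```python
# Throughput pairs (normal data, random data) in MB/s, copied from the tables above.
RESULTS = {
    "Corsair Force 4KB random write": (164.6, 122.5),
    "OCZ Agility 2 4KB random write": (44.2, 46.3),
    "Corsair Force 4KB random read": (52.1, 42.8),
    "Corsair Force 2MB sequential read": (265.2, 212.4),
    "Corsair Force 2MB sequential write": (251.7, 144.4),
}


def pct_of_max(normal_mbps: float, random_mbps: float) -> float:
    """Random-data throughput as a percentage of normal-data throughput."""
    return round(100.0 * random_mbps / normal_mbps, 1)


if __name__ == "__main__":
    for name, (normal, random_data) in RESULTS.items():
        # The Agility 2 row comes out at 104.8, which the table rounds to 105%.
        print(f"{name}: {pct_of_max(normal, random_data)}%")
```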

While I don’t believe that’s representative of what most desktop users would see, it does give us a range of performance we can expect from these drives. It also gave me another idea.

To test the effectiveness and operation of TRIM I usually write a large amount of data to random LBAs on the drive for a long period of time. I then perform a sequential write across the entire drive and measure performance. I then TRIM the entire drive, and measure performance again. In the case of SandForce drives, if the applications I’m using to write randomly and sequentially are using data that’s easily compressible then the test isn’t that valuable. Luckily with our new build of Iometer I had a way to really test how much of a performance reduction we can expect over time with a SandForce drive.
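To make the compressibility point concrete, here is a small Python sketch comparing how well different 4KB payloads compress. It uses zlib purely as a stand-in: SandForce has not published how its controller reduces data, so this only illustrates the general principle that all-zero or repetitive data shrinks dramatically while truly random data does not. The payloads and the helper name `compressed_ratio` are assumptions for the example.

```python
import os
import zlib

BLOCK = 4096  # 4KB, matching the transfer size used in the random write tests


def compressed_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower means more compressible)."""
    return len(zlib.compress(data)) / len(data)


# Three hypothetical payloads a benchmark might write.
zeros = bytes(BLOCK)                         # all-zero block
repeating = (b"AnandTech " * 512)[:BLOCK]    # short repeating pattern
random_bits = os.urandom(BLOCK)              # random bytes from the OS

if __name__ == "__main__":
    for label, payload in (("zeros", zeros),
                           ("repeating", repeating),
                           ("random", random_bits)):
        print(f"{label:>9}: {compressed_ratio(payload):.3f} of original size")
```

A benchmark that writes the first two kinds of payload gives a compressing controller very little real work to do, which is why the random-data option in the newer Iometer build matters for this test.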

I used Iometer to randomly write randomly generated 4KB data over the entire LBA range of the Vertex 2 drive for 20 minutes. I then used Iometer to sequentially write randomly generated data over the entire LBA range of the drive. At this point all LBAs should be touched, both as far as the user is concerned and as far as the NAND is concerned. We actually wrote at least as much data as we set out to write on the drive at this point.
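The two write phases described above can be sketched in Python as follows. This is not Iometer and it is not what was run for this review: it writes to a small scratch file through the filesystem rather than to the raw drive, and it bounds the random phase by a write count instead of 20 minutes. The function names `torture` and `run_on_scratch_file` are invented for the illustration.

```python
import os
import random
import tempfile

BLOCK = 4096  # 4KB blocks, as in the random write phase


def torture(path: str, size_bytes: int, random_writes: int, seed: int = 0):
    """Phase 1: random-offset writes of random data. Phase 2: one sequential pass."""
    rng = random.Random(seed)
    blocks = size_bytes // BLOCK
    touched = set()
    with open(path, "r+b") as f:
        # Phase 1: random data written to randomly chosen block offsets.
        for _ in range(random_writes):
            block = rng.randrange(blocks)
            f.seek(block * BLOCK)
            f.write(os.urandom(BLOCK))
            touched.add(block)
        # Phase 2: random data written sequentially over the whole range,
        # so every block has been written at least once.
        f.seek(0)
        for block in range(blocks):
            f.write(os.urandom(BLOCK))
            touched.add(block)
        f.flush()
        os.fsync(f.fileno())
    return len(touched), blocks


def run_on_scratch_file(size_bytes: int = 1 << 20, random_writes: int = 64):
    """Create a temporary file of the given size, run both phases, then clean up."""
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.truncate(size_bytes)
        path = tmp.name
    try:
        touched, blocks = torture(path, size_bytes, random_writes)
        final_size = os.path.getsize(path)
    finally:
        os.remove(path)
    return touched, blocks, final_size


if __name__ == "__main__":
    touched, blocks, final_size = run_on_scratch_file()
    print(f"touched {touched} of {blocks} blocks; file size {final_size} bytes")
```

Because the operating system caches and reorders file writes, a script like this only illustrates the access pattern; it should not be expected to reproduce the throughput numbers in this article.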

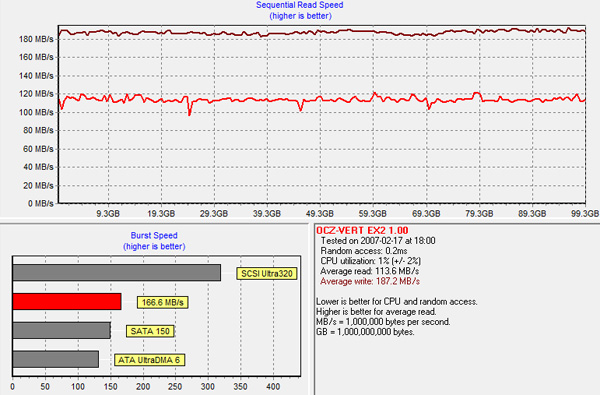

Using HDTach, I measured performance across the entire drive:

The sequential read test is reading back our highly random data we wrote all over the drive, which you’ll note takes a definite performance hit.

Performance is still respectably high and if you look at write speed, there are no painful blips that would result in a pause or stutter during normal usage. In fact, despite the unrealistic workload, the drive proves to be quite resilient.

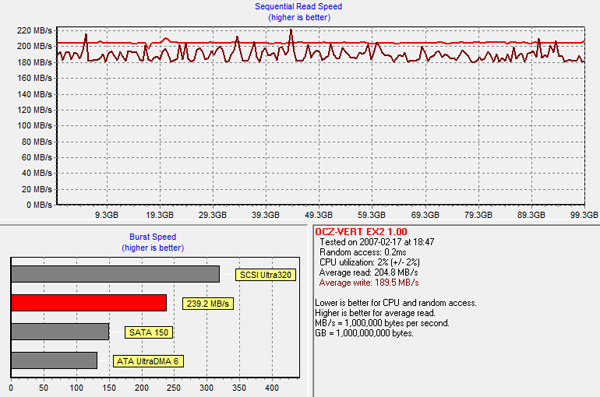

TRIMing all LBAs restores performance to new:

The takeaway? While SandForce’s controllers aren’t immune to performance degradation over time, we’re still talking about speeds over 100MB/s even in the worst case scenario and with TRIM the drive bounces back immediately.

I’m quickly gaining confidence in these drives. It’s just a matter of whether or not they hold up over time at this point.

The Test

With the differences out of the way, the rest of the story is pretty well known by now. The Vertex 2 gives you a definite edge in small file random write performance, and maintains the already high standards of SandForce drives everywhere else.

The real world impact of the high small file random write performance is negligible for a desktop user. I’d go so far as to argue that we’ve reached the point of diminishing returns to boosting small file random write speed for the majority of desktop users. It won’t be long before we’ll have to start thinking of new workloads to really start stressing these drives.

I've trimmed down some of our charts, but as always if you want a full rundown of how these SSDs compare against one another be sure to use our performance comparison tool: Bench.

| Component | Configuration |
|---|---|
| CPU | Intel Core i7 965 running at 3.2GHz (Turbo & EIST Disabled) |
| Motherboard | Intel DX58SO (Intel X58) |
| Chipset | Intel X58 + Marvell SATA 6Gbps PCIe |
| Chipset Drivers | Intel 9.1.1.1015 + Intel IMSM 8.9 |
| Memory | Qimonda DDR3-1333 4 x 1GB (7-7-7-20) |
| Video Card | eVGA GeForce GTX 285 |
| Video Drivers | NVIDIA ForceWare 190.38 64-bit |
| Desktop Resolution | 1920 x 1200 |
| OS | Windows 7 x64 |

44 Comments


xiphmont - Thursday, April 29, 2010 - link

Yay, good to know. Do you have any pointers to descriptions of the error handling in other drives and devices?

jimhsu - Thursday, April 29, 2010 - link

Some hard numbers:

Flash memory: 10^-8 to 10^-6 depending on # of cycles. Figure 2, when writing to MLC.

http://ieeexplore.ieee.org/iel5/4550747/4558854/04...

Hard drives: > 10^-4 (!!)

http://download.microsoft.com/download/a/f/d/afdfd...

And people say flash memory is unreliable. ALL consumer storage devices (hard drives, SSDs, CDs, etc) have ridiculously massive amounts of error correction considering the rate of raw errors. It just so happens that the storage media that everyone in the world uses (DNA) is the same.

MrBabbage - Wednesday, April 28, 2010 - link

Impressive benchmark numbers, but is this drive really any faster than the original Vertex (assuming you're running Windows 7, and FW 1.5)?

From what I hear, you might shave a second or two off of application loading versus the original Vertex - and that's only with heavy duty applications like Photoshop and AutoCAD. Can you confirm that?

If that's the case, then this would seem to have been a step in the wrong direction: minimal performance gains for lost capacity and higher cost per gig. I still agree that SSDs are the single best performance upgrade you can buy at the moment, but with the amazing prices on Vertexes and X25-M G2s at the moment, why go for the Vertex 2? Nothing in this article suggests it's actually a good buy.

In short, benchmarking the drive's sequential read/write performance and random read/write performance is generally pretty useless. With a controller that is architected this way, it would strike me as worse than useless, given that such benchmarks will produce staggering figures that have little effect on the main uses for SSDs (faster boot times, faster application loading times, better performance with encrypted/compressed files and workloads).

strikeback03 - Thursday, April 29, 2010 - link

That is a question I wondered about in articles like this or the RAIDed 40GB Intel SSD one. Sure they turn out higher IOPS numbers, but is this something actually noticeable to humans? In a blind test would I be able to tell the difference between otherwise identical systems if one was running an 80GB X25M and one was running the RAIDed 40GB X25Vs?

QChronoD - Wednesday, April 28, 2010 - link

If you wrote mostly highly compressible data to the drive, would you be able to write more "data" to this drive than a regular SSD?

strikeback03 - Thursday, April 29, 2010 - link

No, as far as the OS is concerned you do not gain space by compressing the data.

hybrid2d4x4 - Wednesday, April 28, 2010 - link

Anand, I don't know if you follow other sites' articles, but a fairly recent SSD roundup at Tom's also measured power consumption (see link at the bottom of the post), and curiously, they measured 0.1W idle for the Intel drive compared to similar numbers to yours for every other SSD. Are there some test system settings that might be tweaked to get such low idle power figures with the Intel drive (and others) or does it seem like there is something sketchy about their data?

I know that with these low power levels, this might seem like splitting hairs, but on a CULV laptop's power budget, 0.5W is still significant, IMO. Also, is there any chance you can throw in a modern 2.5" mechanical drive as a reference point in your SSD power charts? Thanks and keep up the good work on your SSD coverage!

http://www.tomshardware.com/reviews/6gb-s-ssd-hdd,...

strikeback03 - Thursday, April 29, 2010 - link

I know the 200GB 7200 RPM drive I used in my carputer was rated for 1A at 5V. That was the only power number listed, no 12V draw.

gfody - Wednesday, April 28, 2010 - link

You made a point to show iometer results using random data but you still present screenshots of HDTach's results using all-zero reads/writes. The all-zero tests are no more realistic than the iometer all-random tests - I think the all-random test is probably closer to reality than HDTach now. I may not always write perfectly random data but I certainly won't be writing all-zero data!

xiphmont - Wednesday, April 28, 2010 - link

I believe that OCZ has also released and is recommending the 3.0.5 MP firmware update for the Vertex LE (It is labelled 1.0.5 on the OCZ site). Given that users were bricking their Vertex LEs left and right due to suspend bugs, this is most welcome... Anand, do you happen to know if OCZ 1.0.5 and Sandforce 3.0.5 are the same, and if the Special Sauce is preserved in the Vertex LE with this upgrade? It's been asked on the OCZ forums, but not answered directly.

I have a pair of LEs that I'm going to update regardless, but it would be nice to know.