OCZ's Agility 2 Reviewed: The First SF-1200 with MP Firmware

by Anand Lal Shimpi on April 21, 2010 7:22 PM EST

Random Data Performance

Many of you correctly brought up concerns with our use of Iometer with SandForce drives. SandForce's controller looks not only at how data is being accessed, but also at the actual data itself (more info here). The more compressible the data, the lower the write amplification a SandForce drive will realize. While Iometer's random access controls how data is distributed across the drive, the data itself is highly compressible. For example, I took a 522MB output from Iometer and compressed it using Windows 7's built-in Zip compression tool. The resulting file was 524KB in size.

This isn't a huge problem because most controllers don't care what you write, just how you write it. Not to mention that the sort of writes you do on a typical desktop system are highly compressible to begin with. But it does pose a problem when testing SandForce's controllers. What we end up showing is best case performance, but what if you're writing a lot of highly random data? What's the worst performance SandForce's controller can offer?

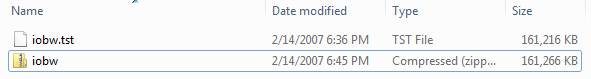

An upcoming version of Iometer will allow you to use 100% random data to test just that. Our own Ryan Smith compiled an early release of this version of Iometer so we could see how much of an impact purely random data has on Iometer performance. When the Use Random Data box is checked, the actual data Iometer generates is completely random. To confirm, I tried compressing a 161MB output from Iometer with Use Random Data enabled:

The resulting file was actually slightly bigger. In other words, it could not be compressed. Perfect.
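The compression check described above can be approximated in a few lines of Python. This is only a rough sketch under stated assumptions: it uses zlib (DEFLATE, the same family of compression used by Zip) rather than Windows 7's Zip tool, an all-zero buffer stands in for Iometer's normal output (whose actual pattern is not described here), and `os.urandom` stands in for the Use Random Data mode. The helper name `compression_ratio` is hypothetical.

```python
import os
import zlib


def compression_ratio(data: bytes) -> float:
    """Return compressed size divided by original size (lower = more compressible)."""
    return len(zlib.compress(data, 6)) / len(data)


SIZE = 1 << 20  # 1 MiB sample; an arbitrary size chosen for illustration

# Assumption: a highly repetitive buffer stands in for Iometer's default data.
repetitive = bytes(SIZE)

# Assumption: OS-provided random bytes stand in for "Use Random Data".
random_data = os.urandom(SIZE)

print(f"repetitive data ratio: {compression_ratio(repetitive):.4f}")
print(f"random data ratio:     {compression_ratio(random_data):.4f}")
```

The repetitive buffer should shrink to a tiny fraction of its size, while the random buffer should come out at roughly its original size or slightly larger once container overhead is added, which mirrors the behavior reported for the two Iometer outputs.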

Iometer Performance Comparison - 4K Aligned, 4KB Random Write Speed

| Drive | Normal Data | Random Data | % of Max Perf |
| --- | --- | --- | --- |
| Corsair Force 100GB (SF-1200 MLC) | 164.6 MB/s | 122.5 MB/s | 74.4% |
| OCZ Agility 2 100GB (SF-1200 MLC) | 44.2 MB/s | 46.3 MB/s | 105% |

With data that can't be compressed, the SF-1500 (or SF-1200 with 3.0.1 firmware) will drop from 164.6MB/s to 122.5MB/s. That's still faster than any other SSD except for Crucial's RealSSD C300. The Agility 2 and other SF-1200 drives running 3.0.5 show no performance impact, as they're already bound by the performance of their firmware. Since the rest of the Agility 2's performance is identical to the Force drive I'll only include one set of results in the table below:

Iometer Performance Comparison

| Corsair Force 100GB (SF-1200 MLC) | Normal Data | Random Data | % of Max Perf |
| --- | --- | --- | --- |
| 4KB Random Read | 52.1 MB/s | 42.8 MB/s | 82.1% |
| 2MB Sequential Read | 265.2 MB/s | 212.4 MB/s | 80.1% |
| 2MB Sequential Write | 251.7 MB/s | 144.4 MB/s | 57.4% |

Read performance is also impacted, just not that much. Performance drops to around 80% of peak, which does put the SandForce drives behind Intel and Crucial in sequential read speed. Random read speed drops to Indilinx levels. Read speed is impacted because if we write fully random data to the drive there's simply more to read back when we need it, making the process slower.

Sequential write speed actually takes the biggest hit of them all. At only 144.4MB/s, if you're writing highly random data sequentially you'll find that the SF-1200/SF-1500 performs worse than just about any other SSD controller on the market. Only the X25-M is slower. While the impact to read performance and random write performance isn't terrible, sequential write performance does take a significant hit on these SandForce drives.
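For reference, the "% of Max Perf" figures above are simply the random-data throughput divided by the normal-data throughput. A minimal Python sketch, using only the numbers reported in the tables (the function name `percent_of_peak` and the dictionary labels are made up for illustration):

```python
def percent_of_peak(normal_mb_s: float, random_mb_s: float) -> float:
    """Random-data throughput expressed as a percentage of normal-data throughput."""
    return 100.0 * random_mb_s / normal_mb_s


# (normal data MB/s, random data MB/s), copied from the tables in this article.
results = {
    "4KB Random Write (Corsair Force)": (164.6, 122.5),
    "4KB Random Write (OCZ Agility 2)": (44.2, 46.3),
    "4KB Random Read (Corsair Force)": (52.1, 42.8),
    "2MB Sequential Read (Corsair Force)": (265.2, 212.4),
    "2MB Sequential Write (Corsair Force)": (251.7, 144.4),
}

for test, (normal, random_) in results.items():
    print(f"{test}: {percent_of_peak(normal, random_):.1f}% of peak")
```

Sorting the Corsair Force rows by this percentage puts 2MB sequential write at the bottom, which is the point made above about sequential writes taking the largest hit.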

60 Comments


speden - Wednesday, April 21, 2010 - link

I still don't understand if the SandForce compression increases the available storage space. Is that discussed in the article somewhere? Is the user storage capacity 93.1 GB if you write incompressible data, but much larger if you are writing normal data? If so that would effectively lower the cost per gigabyte quite a bit.

Ryan Smith - Wednesday, April 21, 2010 - link

It does not increase the storage capacity of the drive. The OS still sees xxGB worth of data as being on the drive even if it's been compressed by the controller, which means something takes up the same amount of reported space regardless of compressibility.

The intention of SandForce's compression abilities was not to get more data on to the drive, it was to improve performance by reading/writing less data, and to reduce wear & tear on the NAND as a result of the former.

If you want to squeeze more storage space out of your SSD, you would need to use transparent file system compression. This means the OS compresses things ahead of time and does smaller writes, but the cost is that the SF controller won't be able to compress much if anything, negating the benefits of having the controller do compression if this results in you putting more data on the drive.

arehaas - Thursday, April 22, 2010 - link

The Sandforce drives report the same space available to the user, even if there is less data written to the drive. Does it mean that Sandforce drives should last longer because there are fewer actual writes to the NAND? One would reach the 10 million (or whatever) writes with Sandforce later than with other drives.

Ryan Smith - Thursday, April 22, 2010 - link

Exactly.

MadMan007 - Wednesday, April 21, 2010 - link

On a note somewhat related to another post here, I have a request. Could you guys please post final 'available to OS' capacity in *gibibytes*? (or if you must post gigabytes to go along with the marketers at the drive companies make it clear you are using GIGA and not GIBI) After I realized how much 'real available to OS' capacity can vary among drives which supposedly have the same capacity this would be very useful information...people need to know how much actual data they can fit on the drives and 'gibibytes available to the OS' is the best standard way to do that.

vol7ron - Wednesday, April 21, 2010 - link

Attach all corrections to this post.

1st Paragraph: incredible -> incredibly

pattycake0147 - Thursday, April 22, 2010 - link

On the second page, there is no link where the difference between the 1500 and the 1200 is referenced.

Roland00 - Thursday, April 22, 2010 - link

The problem with logarithmic scales is your brain interprets the data linearly instead of exponentially unless you force yourself not to.

Per Hansson - Thursday, April 22, 2010 - link

I agree

Just as an idea you could have the option to click the graph and get a bigger version, I guess it would be something like 600x3000 in size but would give another angle at the data

Because for 90% of your users I think a logarithmic scale is very hard to comprehend :)

Impulses - Thursday, April 22, 2010 - link

Not sure if this is the best place to post this but as I just remembered to do so, here goes... I have no issues whatsoever with your site re-design on my desktop, it's clean, it's pretty, looks fine to me.

HOWEVER, it's pretty irritating on my netbook and on my phone... On the netbook the top edge of the page simply takes up too much space and leads to more scrolling than necessary on every single page. I'm talking about the banner ad, followed by the HUGE Anandtech logo (it's bigger than before isn't it), flanked by site navigation links, and followed by several more large bars for all the product categories. Even the font's big on those things... I don't get it, seems to take more space than necessary.

Those tabs or w/e on the previous design weren't as clean looking, but they were certainly more compact. At 1024x600 I can barely see the title of the article I'm on when I'm scrolled all the way up (or not at all if I've enlarged text size a notch or two). It's not really that big a deal, but it just seems like there's a ton of wasted space around the site navigation links and the logo. /shrug

Now on to the second issue, on my phone while using Opera Mini I'm experiencing some EXTREME slowdowns when navigating your page... This is a much bigger deal, it's basically useless... Can't even scroll properly. I've no idea what's wrong, since Opera Mini doesn't even load ads or anything like that, but it wasn't happening a week or two ago either so it's not because of the site re-design itself...

It's something that has NEVER happened to me on any other site tho, they may load slow initially, but after it's open I've never had a site scroll slowly or behave sluggishly within Opera Mini like Anandtech is doing right now... Could it be a rogue ad or something?

I load the full-version of all pages on Opera Mini all the time w/o issue, but is there a mobile version of Anandtech that might be better suited for my phone/browser combination in the meantime?