Intel Cancels Larrabee Retail Products, Larrabee Project Lives On

by Ryan Smith on December 4, 2009 12:00 AM EST - Posted in Ryan's Ramblings

We just got off the phone with Nick Knupffer of Intel, who confirmed something that has long been speculated upon: the fate of Larrabee. As of today, the first Larrabee chip’s retail release has been canceled. This means that Intel will not be releasing a Larrabee video card or a Larrabee HPC/GPGPU compute part.

The Larrabee project itself has not been canceled, however, and Intel is still hard at work developing their first entirely in-house discrete GPU. The first Larrabee chip (which for lack of an official name, we’re going to be calling Larrabee Prime) will be used for the R&D of future Larrabee chips in the form of development kits for internal and external use.

The big question of course is “why?” Officially, the reason why Larrabee Prime was scrubbed was that both the hardware and the software were behind schedule. Intel has left the finer details up to speculation in true Intel fashion, but it has been widely rumored in the last few months that Larrabee Prime has not been performing as well as Intel had been expecting it to, which is consistent with the chip being behind schedule.

Bear in mind that Larrabee Prime’s launch was originally scheduled to be in the 2009-2010 timeframe, so Intel has already missed the first year of their launch window. Even with TSMC’s 40nm problems, Intel would have been launching after NVIDIA’s Fermi and AMD’s Cypress, if not after Cypress’ 2010 successor too. If the chip was underperforming, then the time element would only make things worse for Intel, as they would be setting up Larrabee Prime against successively more powerful products from NVIDIA and AMD.

The software side leaves us a bit more curious, as Intel normally has a strong track record here. Their x86 compiler technology is second to none, and as Larrabee Prime is x86 based, this would have left them in a good starting position for software development. What we’re left wondering is whether the software setback was for overall HPC/GPGPU use, or if it was for graphics. Certainly the harder part of Larrabee Prime’s software development would be the need to write graphics drivers from scratch that were capable of harnessing the chip as a video card, taking into consideration the need to support older APIs such as DX9 that make implicit assumptions about the layout of the hardware. Could it be that Intel couldn’t get Larrabee Prime working as a video card? That’s going to be a big question that’s going to hang over Intel’s head right up to the day that they finally launch a Larrabee video card.

Ultimately when we took our first look at Larrabee Prime’s architecture, there were 3 things that we believed could go wrong: manufacturing/yield problems, performance problems, and driver problems. Based on what Intel has said, we can’t write off any of those scenarios. Larrabee Prime is certainly suffering from something that can be classified as driver problems, and it may very well be suffering from both manufacturing and performance problems too.

To Intel’s credit, even if Larrabee Prime will never see the light of day as a retail product, it has been turning in some impressive numbers at trade shows. At SC09 last month, Intel demonstrated Larrabee Prime running the SGEMM HPC benchmark at 1 TeraFLOP, a notable accomplishment as the actual performance of any GPU is usually a fraction of its theoretical performance. 1TF is close to the theoretical performance of NVIDIA’s GT200 and AMD’s RV770 chips, so Larrabee was no slouch. But then again its competition would not be GT200 and RV770; it would be Fermi and Cypress.

Next, this brings us to the future of Larrabee. Larrabee Prime may be canceled, but the Larrabee project is not. As Intel puts it, Larrabee is a “complex multi-year project” and development will be continuing. Intel still wants a piece of the HPC/GPGPU pie (lest NVIDIA and AMD get it all to themselves) and they still want into the video card space given the collision between those markets. For Intel, their plans have just been delayed.

The Larrabee architecture lives on

For the immediate future, as we mentioned earlier Larrabee Prime is still going to be used by Intel for R&D purposes, as a software development platform. This is a very good use of the hardware (however troubled it may be) as it allows Intel to bootstrap the software side of Larrabee so that developers can get started programming for real hardware while Intel works on the next iteration of Larrabee. Much like how NVIDIA and AMD sample their video cards months ahead of time to game developers, we expect that Larrabee Prime SDKs would be limited to Intel’s closest software partners, so don’t expect to see much if anything leak about Larrabee Prime once chips start leaving Intel’s hands, or to see extensive software development initially. Widespread Larrabee software development will still not start until Intel ships the next iteration of Larrabee, if this is the case.

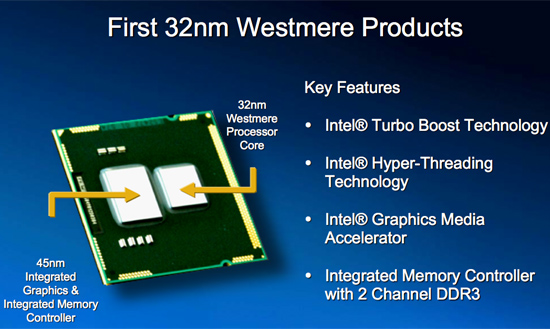

We should know more about the Larrabee situation next year, as Intel is already planning on an announcement at some point in 2010. Our best guess is that Intel will announce the next Larrabee chip at that time, with a product release in 2011 or 2012. Much of this will depend on what the hardware problem was and what process node Intel wants to use. If Intel just needs the ability to pack more cores on to a Larrabee chip then 2011 is a reasonable target, otherwise if there’s a more fundamental issue then 2012 is more likely. This lines up with the process nodes for those years: if they go for 2011 they hit the 2nd year of their 32nm process, otherwise if they launched in 2012 they would be able to launch it as one of the first products on the 22nm process.

For that matter, since the Larrabee project was not killed, it’s a safe assumption that any future Larrabee chips are going to be based on the same architectural design. The vibe from Intel is that the problem is Larrabee Prime and not the Larrabee architecture itself. The idea of an x86 many-core GPU is still alive and well.

On-Chip GMA-based GPUs: Still On Schedule For 2010

Finally, there’s the matter of Intel’s competition. For AMD and NVIDIA, this is just about the best possible announcement they could hope for. On the video card front it means they won’t be facing any new competitors through 2010 and most of 2011. That doesn’t mean that Intel isn’t going to be a challenge for them – Intel is still launching Clarkdale and Arrandale with on-chip GPUs next year – but they won’t be facing competition at the high-end too. For NVIDIA in particular, this means that Fermi has a clear shot at the HPC/GPGPU space without competition from Intel, which is exactly the kind of break NVIDIA needed since Fermi is running late.

71 Comments

mutarasector - Saturday, December 12, 2009 - link

Personally, I'd rather see Nvidia and AMD join forces somehow and merge their high end HPC/GPGPU technologies into a cohesive seamless technology, and head Intel off at the pass. This would likely never happen for several reasons, the first being that it probably wouldn't pass the SEC and FTC regulatory smell tests. But I'm just *salivating* at the possibilities of the two doing a joint venture. One thing I do know: Nvidia will have to do something -- and real soon. They aren't just getting squeezed out by Intel and ATI not needing their chipsets, but then there is Lucid's Hydra chip which may eventually render both Crossfire and SLI *moot*. Of course, this would affect ATI, but could really hurt Nvidia far more. Merging with AMD would solve their lack of a "ilicense" agreement.

Another possibility might be Nvidia buying out VIA, but to what end, I'm not really certain, and would be too little too late.

jconan - Sunday, December 13, 2009 - link

AMD did try to buy out Nvidia but Nvidia requested that they be CEO of the new company and hence AMD moved on to ATI... Nvidia could however combine ARM cores with their GPUs like they did similarly with TEGRA using a different type of GPU. This way Nvidia can still forge ahead with a serial and a parallel processor in one and not have to rely on x86 for HPC.

lifeblood - Sunday, December 6, 2009 - link

Intel may or may not be too proud to do it, but Huang is way too pigheaded to accept it.

Olen Ahkcre - Friday, January 8, 2010 - link

The reason for Intel not buying Nvidia is it would probably run into problems with anti-trust laws. I seriously don't think the government would allow the number 1 CPU maker and number 1 GPU maker to merge for the reason being they would become too dominant. With the combined technology and engineering assets under one roof, AMD/ATI would not be able to compete.

You might argue Nvidia isn't currently #1 in the GPU arena, but it's just a formality as Fermi will allow Nvidia to retake the #1 spot. Nvidia gave up their lead temporarily to improve on general purpose GPU support.

Gunbuster - Saturday, December 5, 2009 - link

This is no surprise. With all their R&D money they can't even make a passable driver and control panel for the current integrated "extreme" video chipsets. That's not even touching on the chip's abysmal performance. What revision is this now? 4? Each spin touted as "even more extreem" than the last yet all you can run is Unreal 1 at 800X600...

eddieroolz - Sunday, December 6, 2009 - link

Unreal at 800X600, you're lucky! I can't even run Halo at 640X480 on this GM45HD.

chizow - Saturday, December 5, 2009 - link

This news basically validates all of the various criticisms leveled at Larrabee over the last few years: that Larrabee wouldn't be a competent raster GPU, too much die space was wasted on x86 instructions, and Intel was spending far too much effort and R&D on peripheral features, like ray-tracing, instead of focusing efforts on making the part competitive. It also helps confirm Intel was never really developing Larrabee with discrete graphics in mind, it was always a reactionary response to Nvidia's GPGPU and HPC efforts. I imagine that's why Intel is keeping the project alive. As it is now, same as it was a few months and years ago, Larrabee serves no purpose as it was highly unlikely to serve as a competent discrete 3D raster-based GPU and is actually a conflicting interest for Intel in the only area it might've really been competitive, as a highly parallel x86-based CPU for HPC.

cyberserf - Saturday, December 5, 2009 - link

they were also making something in those days that would outclass the competition. look how that turned out.

IdaGno - Saturday, December 5, 2009 - link

IOW, nVidia is closer to producing their version of an x86 clone than Intel is to producing a viable gaming GPU, integrated or otherwise.

Kiijibari - Saturday, December 5, 2009 - link

The picture of your so-called "Larrabee Prime" is clearly a Core2 Quad Processor code name "Yorkfield", here is your source:

http://www.intel.com/pressroom/kits/45nm/photos.ht...

http://download.intel.com/pressroom/kits/45nm/45nm...

With an error like this, I doubt now the overall quality of the article. Probably, the only thing that is going to be canceled are the old S775 QuadCores ;-)