LINPACK: Intel's Nehalem versus AMD Shanghai

by Johan De Gelas on November 28, 2008 12:00 AM EST- Posted in

- IT Computing general

A "beta BIOS update" broke compatibility with ESX, so we had to postpone our virtualization testing on our quad CPU AMD 8384 System.

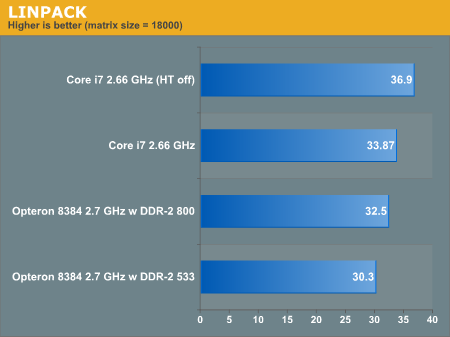

So we started an in depth comparison of the 45 nm Opterons, Xeons and Core i7 CPUs. One of our benchmarks, the famous LINPACK (you can read all about it here) painted a pretty interesting performance picture.

We had to test with a matrix size of 18000 (2.5 GB of RAM necessary), as we only had 3 GB of DDR-3 on the Core i7 platform. That should not be a huge problem as we tested with only one CPU. We normally need about 4 GB for each quadcore CPU to reach the best performance.

We also used the 9.1 version of Intel's LINPACK, as we wanted the same binary on both platforms. As we have show before, this version of LINPACK performs best on both AMD and Intel platforms when the matrix size is low. The current 10.1 version does not work on AMD CPUs unfortunately.

We don't pretend that the comparison is completely fair: the Nehalem platform uses unbuffered RAM which has slightly lower latency and higher bandwidth than the Xeon "Nehalem" will get. But we had to satisfy our curiousity: how does the new "Shanghai" core compare to "Nehalem"?

Quite interesting, don't you think? Hyperthreading (SMT) gives the Nehalem core a significant advantage in most multi-threaded applications, but not in Linpack: it slows the CPU down by 10%. May we have found the first multi-threaded application that is slowed down by Hyperthreading on Nehalem? That should not spoil the fun for Intel though, as many other HPC benchmarks show a larger gap. AMD has the advantage of being first to the market, Nehalem based Xeons are still a few months away.

Also, the impact of the memory subsystem is limited, as a 50% increase in memory speed results in a meager 6% performance increase. The Math Kernel Libraries are so well optimized that the effect of memory speed is minimized. This in great contrast to other HPC applications where the tripple channel DDR-3 memory system of Nehalem really pays off. More later...

60 Comments

View All Comments

befair - Monday, December 8, 2008 - link

Well threaded!!?? Ever heard of MPI? MPI processes are *not* threads!befair - Friday, November 28, 2008 - link

ok .. getting tired of this! Intel loving Anandtech employs very unfair & unreasonable tactics to show AMD processors in bad light every single time. And most readers have no clue about the jargon Anandtech uses every time.1 - HPL needs to be compiled with appropriate flags to optimize code for the processor. Anandtech always uses the code that is optimized for Intel processors to measure performance on AMD processors. As much as AMD and Intel are binary compatible, when measuring performance even a college grad who studies HPC knows the code has to be recompiled with the appropriate flags

2 - Clever words: sometimes even 4 GFLOPS is described as significant performance difference

3- "The Math Kernel Libraries are so well optimized that the effect of memory speed is minimized." - So ... MKL use is justified because Intel processors need optimized libraries for good performance. However, they dont want to use ACML for AMD processors. Instead they want to use MKL optimized for Intel on AMD processors. Whats more ... Intel codes optimize only for Intel processors and disable everything for every other processors. They have corrected it now but who knows!! read here http://techreport.com/discussions.x/8547">http://techreport.com/discussions.x/8547

I am not saying anything bad about either processor but an independent site that claims to be fair and objective in bringing facts to the readers is anything but fair and just!!! what a load!

JohanAnandtech - Saturday, November 29, 2008 - link

It is not that black and white.Please read the article that I linked ( http://it.anandtech.com/IT/showdoc.aspx?i=3162&...">http://it.anandtech.com/IT/showdoc.aspx?i=3162&... ) and you will see that the AMD performs better with the Intel MKLs if you use relatively low matrix sizes. It is only at high matrix sizes that the ACML libraries give the K10 architecture a real advantage.

BlueBlazer - Saturday, November 29, 2008 - link

Are you using Linux 64-bit for this test? What about differences with Linux 32-bit?JohanAnandtech - Monday, December 1, 2008 - link

64 bit... I don't see why we would use 32 bit? Linux 64 bit is the best platform for any of these kinds of tests.BlueBlazer - Saturday, November 29, 2008 - link

No matter where you turn or whichever review website visited, you will see Intel outperforming your precious on many tests. If you are tired at looking at them or watching disappointments after disappointments with your precious, why not shutdown your PC and go out and enjoy life.On the other note, ACML may perform worse than MKL

http://ixbtlabs.com/articles3/cpu/phenom-x4-matlab...">http://ixbtlabs.com/articles3/cpu/phenom-x4-matlab...

And it happens Intel has still the best compilers around, try using GCC to compare, you'll find even the Intel compiled version works better on non-Intel processors.

LawJikal - Friday, November 28, 2008 - link

There are many documented scenarios in which the HyperThreading serves as a detriment (single-threaded scenarios):http://hothardware.com/articleimages/Item1232/3dv....">http://hothardware.com/articleimages/Item1232/3dv....

http://techgage.com/reviews/intel/core_i7_launch/c...">http://techgage.com/reviews/intel/core_i7_launch/c...

http://hothardware.com/articleimages/Item1232/lame...">http://hothardware.com/articleimages/Item1232/lame...

http://hothardware.com/articleimages/Item1232/et.p...">http://hothardware.com/articleimages/Item1232/et.p...

There are also instances in which a 3.0 GHz QX9650 offers greater performance (the few instances in which operating code benefits more from 12MB of split L2 cache than 8MB of shared L3)

hyc - Friday, November 28, 2008 - link

Can you repeat these tests using ACML?http://developer.amd.com/cpu/Libraries/acml/Pages/...">http://developer.amd.com/cpu/Libraries/acml/Pages/...

LINPACK really isn't a great code base for testing these types of systems anyway...

http://www.netlib.org/lapack/">http://www.netlib.org/lapack/

JohanAnandtech - Monday, December 1, 2008 - link

Your wish is my command :-)http://it.anandtech.com/weblog/showpost.aspx?i=529">http://it.anandtech.com/weblog/showpost.aspx?i=529

BlueBlazer - Saturday, November 29, 2008 - link

ACML may perform worse than MKLhttp://ixbtlabs.com/articles3/cpu/phenom-x4-matlab...">http://ixbtlabs.com/articles3/cpu/phenom-x4-matlab...

Of course, its interesting to see ACML in this test.