NVIDIA’s GeForce GTX 480 and GTX 470: 6 Months Late, Was It Worth the Wait?

by Ryan Smith on March 26, 2010 7:00 PM EST. Posted in: GPUs

Image Quality & AA

When it comes to image quality, the big news from NVIDIA for Fermi is what NVIDIA has done in terms of anti-aliasing of fake geometry such as billboards. For dealing with such fake geometry, Fermi has several new tricks.

The first is the ability to use coverage samples from CSAA to do additional sampling of billboards, which lets Alpha To Coverage sampling fake anti-aliasing on the fake geometry. With the additional samples afforded by CSAA in this mode, Fermi can generate additional transparency levels that allow the billboards to blend in better, as properly anti-aliased geometry would.

The second change is a new CSAA mode: 32x. 32x is designed to go hand-in-hand with the CSAA Alpha To Coverage changes by generating an additional 8 coverage samples over 16xQ mode for a total of 32 samples and giving a total of 63 possible levels of transparency on fake geometry using Alpha To Coverage.
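To illustrate why more coverage samples translate into more transparency levels, here is a minimal Python sketch of Alpha To Coverage. It assumes a naive mapping in which alpha is rounded to the nearest sample count and that many samples are marked as covered. The function names are hypothetical, and real hardware chooses its own sample patterns and may dither, so treat this as a model of the idea rather than NVIDIA's implementation. It also does not attempt to reproduce the 63-level figure quoted above for 32x mode, which this article does not derive.

```python
def alpha_to_coverage_mask(alpha: float, num_samples: int) -> int:
    """Map an alpha value in [0, 1] to a bitmask of covered samples.

    Naive model (an assumption, not actual hardware behavior): round alpha
    to the nearest multiple of 1/num_samples and set that many low bits.
    """
    alpha = min(max(alpha, 0.0), 1.0)          # clamp to the valid range
    covered = int(alpha * num_samples + 0.5)   # round to nearest sample count
    return (1 << covered) - 1                  # 'covered' low bits set


def transparency_levels(num_samples: int, steps: int = 1000) -> int:
    """Count the distinct coverage amounts reachable by sweeping alpha."""
    counts = {
        bin(alpha_to_coverage_mask(i / steps, num_samples)).count("1")
        for i in range(steps + 1)
    }
    return len(counts)


if __name__ == "__main__":
    for n in (4, 8, 16):
        print(n, "samples ->", transparency_levels(n), "levels")
```

Under this simple model, N samples give N + 1 distinct coverage amounts (from fully transparent to fully opaque), which is why adding coverage samples gives billboard edges smoother gradations.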

In practice these first two changes haven’t had the effect we were hoping for. Coming from CES we thought this would greatly improve NVIDIA’s ability to anti-alias fake geometry using cheap multisampling techniques, but apparently Age of Conan is really the only game that greatly benefits from this. The ultimate solution is for more developers of DX10+ applications to enable Alpha To Coverage so that anyone’s MSAA hardware can anti-alias their fake geometry, but we’re not there yet.

So it’s the third and final change that’s the most interesting. NVIDIA has added a new Transparency Supersampling (TrSS) mode for Fermi (ed: and GT240) that picks up where the old one left off. Their previous TrSS mode only worked on DX9 titles, which meant that users had few choices for anti-aliasing fake geometry under DX10 games. This new TrSS mode works under DX10; it’s as simple as that.

So why is this a big deal? Because a lot of DX10 games have bad aliasing of fake geometry, including some very popular ones. Under Crysis in DX10 mode for example you can’t currently anti-alias the foliage, and even brand-new games such as Battlefield: Bad Company 2 suffer from aliasing. NVIDIA’s new TrSS mode fixes all of this.

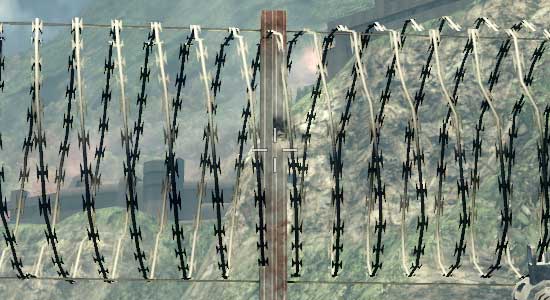

Bad Company 2 DX11 Without Transparency Supersampling

Bad Company 2 DX11 With Transparency Supersampling

The bad news is that it’s not quite complete. Oh, as you’ll see in our screenshots, it works, but the performance hit is severe. It’s currently super-sampling too much, resulting in massive performance drops. NVIDIA is telling us that this should be fixed next month, at which time the performance hit should be similar to that of the old TrSS mode under DX9. We’ve gone ahead and taken screenshots and benchmarks of the current implementation, but keep in mind that performance should be greatly improving next month.

So with that said, let’s look at the screenshots.

| NVIDIA GeForce GTX 480 | NVIDIA GeForce GTX 285 | ATI Radeon HD 5870 | ATI Radeon HD 4890 |
| --- | --- | --- | --- |
| 0x | 0x | 0x | 0x |
| 2x | 2x | 2x | 2x |
| 4x | 4x | 4x | 4x |
| 8xQ | 8xQ | 8x | 8x |
| 16xQ | 16xQ | DX9: 4x | DX9: 4x |
| 32x | DX9: 4x | DX9: 4x + AAA | DX9: 4x + AAA |
| 4x + TrSS 4x | DX9: 4x + TrSS | DX9: 4x + SSAA | |
| DX9: 4x | | | |
| DX9: 4x + TrSS | | | |

With the exception of NVIDIA’s new TrSS mode, very little has changed. Under DX10 all of the cards produce a very similar image. Furthermore, once you reach 4x MSAA, each card is producing a near-perfect image. NVIDIA’s new TrSS mode is the only standout for DX10.

We’ve also included a few DX9 shots, although we are in the process of moving away from DX9. This allows us to showcase NVIDIA’s old TrSS mode, along with AMD’s Adaptive AA and Super-Sample AA modes. Note how both TrSS and AAA do a solid job of anti-aliasing the foliage, which makes it all the more a shame that they haven’t been available under DX10.

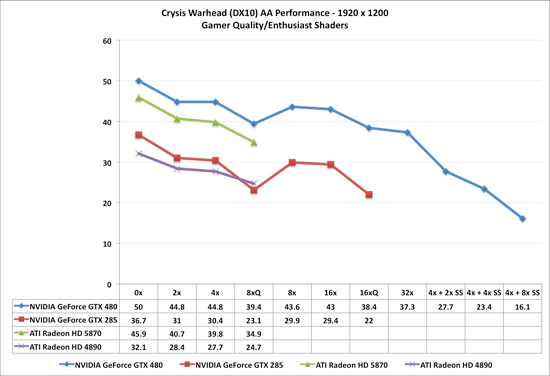

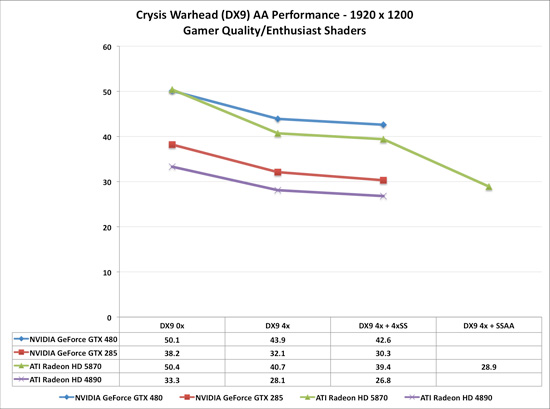

When it comes to performance, keep in mind that both AMD and NVIDIA have been trying to improve their 8x MSAA performance. When we reviewed the Radeon 5870 back in September we found that AMD’s 8x MSAA performance was virtually unchanged, and 6 months later that still holds true. The performance hit moving from 4x MSAA to 8x MSAA on both Radeon cards is roughly 13%. NVIDIA on the other hand took a stiffer penalty under DX10 for the GTX 285, where performance fell by 25%. But now with NVIDIA’s 8x MSAA performance improvements for Fermi, that gap has been closed. The performance penalty for moving to 8x MSAA over 4x MSAA is only 12%, putting it right up there with the Radeon cards in this respect. With the GTX 480, NVIDIA can now do 8x MSAA as cheaply as AMD has been able to.
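For readers who want to run the same comparison on their own numbers, the penalty figures here are simple relative drops in frame rate. The Python sketch below shows the arithmetic; the function name and the frame rates are hypothetical placeholders chosen to land near the quoted percentages, not measurements from this review.

```python
def msaa_penalty_percent(fps_low_aa: float, fps_high_aa: float) -> float:
    """Percentage of performance lost when moving to the heavier AA mode."""
    if fps_low_aa <= 0:
        raise ValueError("baseline frame rate must be positive")
    return (fps_low_aa - fps_high_aa) / fps_low_aa * 100.0


if __name__ == "__main__":
    # Hypothetical (4x MSAA fps, 8x MSAA fps) pairs for illustration only.
    examples = {
        "card A": (60.0, 52.2),
        "card B": (60.0, 45.0),
    }
    for name, (fps_4x, fps_8x) in examples.items():
        penalty = msaa_penalty_percent(fps_4x, fps_8x)
        print(f"{name}: {penalty:.0f}% slower at 8x MSAA than at 4x MSAA")
```

Expressing the hit relative to each card's own 4x MSAA result is what makes the comparison fair across cards with very different absolute frame rates.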

Meanwhile we can see the significant performance hit on the GTX 480 for enabling the new TrSS mode under DX10. If NVIDIA really can improve the performance of this mode to near-DX9 levels, then they are going to have a very interesting AA option on their hands.

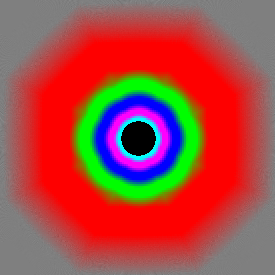

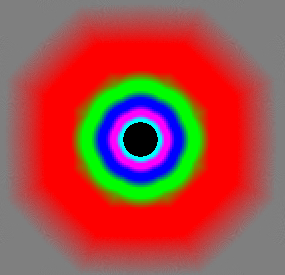

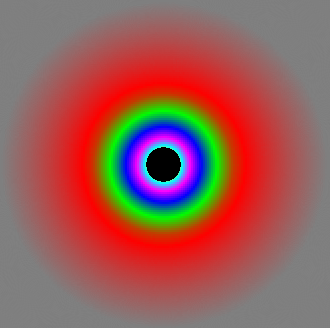

Last but not least, there’s anisotropic filtering quality. With the Radeon 5870 we saw AMD implement true angle-independent AF and we’ve been wondering whether we would see this from NVIDIA. The answer is no: NVIDIA’s AF quality remains unchanged from the GTX200 series. In this case that’s not necessarily a bad thing; NVIDIA already had great AF even if it was angle-dependent. More to the point, we have yet to find a game where the difference between AMD and NVIDIA’s AF modes has been noticeable; so technically AMD’s AF modes are better, but it’s not enough that it makes a practical difference.

GeForce GTX 480

GeForce GTX 285

Radeon 5870

196 Comments


ReaM - Saturday, March 27, 2010

I don't agree with final words.

480 is crap. Already being expensive it adds huge power consumption factor only to have a slightly better performance.

However (!), I see a potential for future chips and I can't wait for a firmy Quadro to hit the market :)

Patrick Wolf - Saturday, March 27, 2010

6 months and we get a couple of harvested, power-sucking heaters? Performance king, barely, but for what cost. Cards not even available yet. This is a fail.

This puts ATI in a very good place to release a refresh or revisions and snatch away the performance crown.

dingetje - Saturday, March 27, 2010

exactly my thoughts

and imo the reviewers are going way to easy on nvidia over this fail product (except maybe hardocp)

cjb110 - Saturday, March 27, 2010

You mention that both of these are cut-down GF100's, but you've not done any extrapolation of what the performance of a full GF100 card would be?

We do expect a full GF100 gaming orientated card, and probly before the end of the year, don't we?

Is that going to be 1-9% quicker or 10%+?

Ryan Smith - Saturday, March 27, 2010

It's hard to say since we can't control every variable independent of each other. A full GF100 will have more shading, texturing, and geo power than the GTX 480, but it won't have any more ROP/L2/Memory.

This is going to heavily depend on what the biggest bottleneck is, possibly on a per-game basis.

SlyNine - Saturday, March 27, 2010

Yea and I had to return 2 8800GT's from being burnt up. I will not buy a really hot running card again.

poohbear - Saturday, March 27, 2010

Oh how the mighty have fallen. :( i remember the days of the 8800gt when nvidia did a hard launch, released a cheap & excellent performing card for the masses. W/ the fermi release u would never know its the same company. Such a disappointment.

descendency - Saturday, March 27, 2010

I think the MSRP is lower than $300 for the 5850 (259) and lower than $400 for the 5870 (379). Just thought that was worth sharing.

I have to believe that the demand will shift back evenly now and price drops for the AMD cards can ensue (if nothing else, the cards should go to the MSRP values because competition is finally out). I would imagine the price gap between the GTX480 and the AMD 5870 could be as much as $150 dollars when all is said and done. Maybe $200 dollars initially as this kind of release almost always is followed by a paper launch (major delays and problems before launch = supply issues).

AnnonymousCoward - Saturday, March 27, 2010

...for two reasons: power and die size.

So the 5870 and 470 appear to be priced similarly, while the 5870 beats it in virtually every game and uses 47W less at load! That is a TON of additional on-die power (like 30-40A?).

We saw this coming last year when Fermi was announced. Now AMD is better positioned than ever.

IVIauricius - Saturday, March 27, 2010

I see why XFX started making ATI cards a few years ago with the 4000 series. Once again nVidia has made a giant chip that requires a high price tag to offset the price of manufacturing and material. The same thing happened a few years ago with the nVidia GTX200 cards and the ATI 4000 cards. XFX realized that they weren't making as much money as they'd like with GTX200 cards and started producing more profitable ATI 4000 cards.

I bought a 5870 a couple months ago for $379 at newegg with a promotion code. I plan on selling it not to upgrade, but to downgrade. A $400 card doesn't appeal to me anymore when, like many posters have mentioned, most games don't take advantage of the amazing performance these cards offer us. I only play games like MW2, Borderlands, Dirt 2, and Bioshock 2 at 1920x1080 so a 4870 should suffice my needs for another year. Maybe then I'll buy a 5850 for ~$180.

First post, hope I didn't sound too much like a newbie.

-Mauro