10Gbit Ethernet: Killing Another Bottleneck?

by Johan De Gelas on March 8, 2010 12:00 PM EST - Posted in IT Computing

Network Performance in ESX 4.0 Update 1

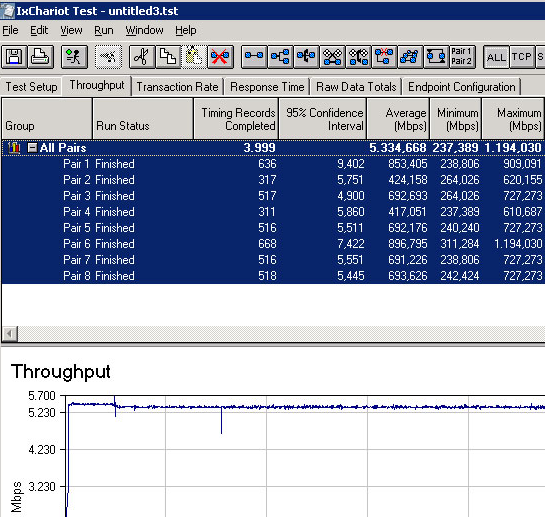

We used up to eight VMs, and each was assigned an “endpoint” in the Ixia IxChariot network test. This way we could measure the total network throughput achievable with one, four or eight VMs. With ESX NetQueue enabled, the cards should be able to leverage their separate queues and the hardware Layer 2 “switch”.

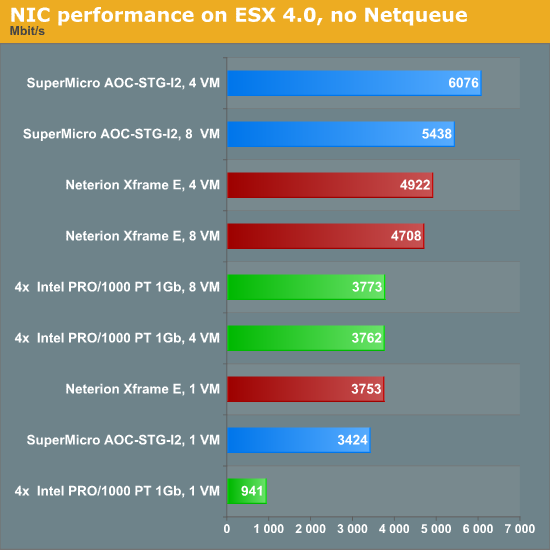

First, we test with NetQueue disabled. The 10G cards will then behave like a card with only one Rx/Tx queue. To make the comparison more interesting, we added two dual-port gigabit NICs to the benchmark mix. Teamed NICs are currently by far the most cost-effective way to increase network bandwidth.
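To make the single-queue versus multi-queue distinction concrete, here is a minimal conceptual sketch in Python. It assumes the NIC sorts incoming frames by destination MAC address, one queue per VM; the function name, the MAC addresses and the queue numbers are all hypothetical, and this models the idea only, not the actual ESX or driver implementation.

```python
from collections import defaultdict

def classify(frames, mac_to_queue, default_queue=0):
    """Sort frames into receive queues by destination MAC (conceptual model only)."""
    queues = defaultdict(list)
    for dst_mac, payload in frames:
        # Frames for an unknown MAC fall back to the default queue.
        queues[mac_to_queue.get(dst_mac, default_queue)].append(payload)
    return dict(queues)

# Hypothetical MAC addresses for two VMs.
VM1 = "00:50:56:00:00:01"
VM2 = "00:50:56:00:00:02"
frames = [(VM1, "a"), (VM2, "b"), (VM1, "c")]

# Queue sorting disabled: every frame lands in the single default queue.
single = classify(frames, mac_to_queue={})

# Queue sorting enabled: each VM's MAC maps to its own queue.
multi = classify(frames, mac_to_queue={VM1: 1, VM2: 2})

print(single)
print(multi)
```

With one queue, traffic for all VMs is serialized through the same path; with per-VM queues, each stream can be handled independently.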

The 10G cards show their potential. With four VMs, they are able to achieve 5 to 6Gbit/s. There is clearly a queue bottleneck: both 10G cards perform worse with eight VMs. Notice also that 4x1Gbit does very well. This combination has more queues and can cope well with the different network streams. Out of a maximum line speed of 4Gbit/s, we achieve almost 3.8Gbit/s with four and eight VMs. Now let's look at CPU load.
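As a quick check on those figures, the short Python sketch below expresses each measured throughput as a fraction of line rate. The function name is ours; the numbers are the ones quoted above.

```python
def utilization(measured_gbit, line_rate_gbit):
    """Fraction of the nominal line rate actually achieved."""
    return measured_gbit / line_rate_gbit

teamed = utilization(3.8, 4.0)        # 4x1Gbit, four and eight VMs
ten_g_low = utilization(5.0, 10.0)    # 10G cards, four VMs, lower bound
ten_g_high = utilization(6.0, 10.0)   # 10G cards, four VMs, upper bound

print(f"4x1Gbit: {teamed:.0%} of line rate")
print(f"10G: {ten_g_low:.0%} to {ten_g_high:.0%} of line rate")
```

Without NetQueue, the teamed gigabit ports run at about 95% of their line rate, while the 10G cards reach only 50 to 60% of theirs.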

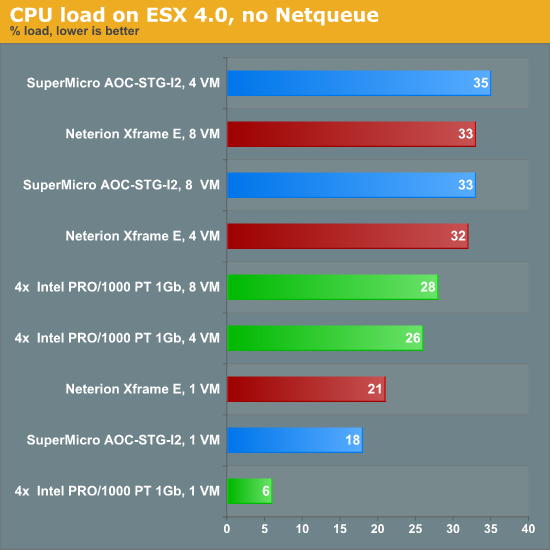

Once you need more than 1Gbit/s, you should pay attention to the CPU load. Four gigabit ports or one 10G port require 25 to 35% utilization of eight 2.9GHz Opteron cores. That means that you would need two or three cores dedicated just to keeping the network pipes filled. Let us see if NetQueue can do some magic.
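The "two or three cores" figure follows directly from the utilization range. A minimal sketch of the arithmetic, assuming utilization is reported across all eight cores (the function name is ours):

```python
CORES = 8  # eight 2.9GHz Opteron cores, as in the test system

def cores_consumed(utilization, cores=CORES):
    """Convert whole-system CPU utilization into an equivalent number of busy cores."""
    return utilization * cores

low = cores_consumed(0.25)
high = cores_consumed(0.35)

print(f"{low:.1f} to {high:.1f} cores")  # prints "2.0 to 2.8 cores"
```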

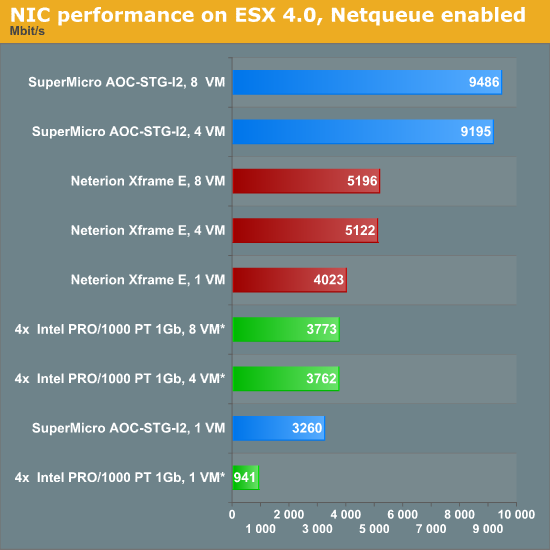

The performance of the Neterion card improves a bit, but the gain is not impressive (+8% in the best case). The Intel 82598EB chip on the Supermicro 10G NIC now achieves 9.5Gbit/s with eight VMs, very close to the theoretical maximum. The 4x1Gbit/s NIC numbers are repeated in this graph for reference (no NetQueue was available).

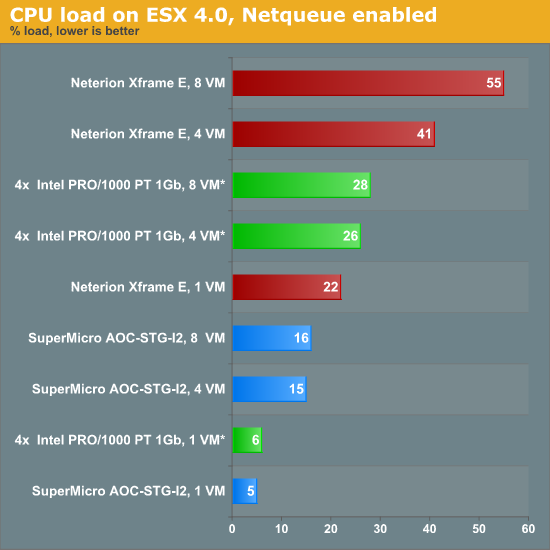

So how much CPU power did these huge network streams absorb?

The Neterion driver does not seem to be optimized for ESX 4. Using NetQueue should lower CPU load, not increase it. The Supermicro/Intel 10G combination shows the way. It delivers twice as much bandwidth at half the CPU load compared to the two dual-port gigabit NICs.
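Put differently, "twice the bandwidth at half the CPU load" amounts to roughly four times the bandwidth per unit of CPU. The sketch below uses only those two relative factors from the text, not absolute measurements; the function name is ours.

```python
def relative_efficiency(bandwidth_ratio, cpu_load_ratio):
    """Bandwidth delivered per unit of CPU load, relative to a baseline."""
    return bandwidth_ratio / cpu_load_ratio

# Supermicro/Intel 10G vs. the two dual-port gigabit NICs:
# 2x the bandwidth at 0.5x the CPU load.
gain = relative_efficiency(2.0, 0.5)

print(f"{gain:.0f}x bandwidth per unit of CPU load")  # prints "4x ..."
```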

49 Comments


radimf - Wednesday, March 10, 2010 - link

Hi, thanks for the article!

Btw I am reading your site because of your virtualization articles.

Almost 3 years ago I planned an IT project, with only 1/5 of the complete budget for a small virtualization scenario.

If you want redundancy, it can't get much simpler than that:

- 2 ESX servers

- one SAN + one NFS/iSCSI/potentially FC storage for D2D backup

- 2 TCP switches, 2 FC switches

The world moved, IT changed, the EU grant took too long to process - we finished last summer what was planned years ago...

My 2 cents from small company finishing small IT virtualization project?

FC saved my ass.

iSCSI was on my list (DELL gear), but I went FC instead (HP) for a lower total price (thanks, crisis :-)

HP hardware looked sweet on spec sheets, and the actual HW is superb, BUT.... FW sucked BIG TIME.

It took HP half a year to fix it.

HP 2910al switches do have an option for up to four 10gbit ports - that was the reason I bought them last summer.

Coupled with DA cables, that is a very cheap way to get 10gbit to your small VMware cluster. (100% viable now)

But unfortunately the FW (at that time) sucked so much that 3 out of 4 supplied DA cables did not work at all (out of the box).

Thanks to HP - they swapped our DA cables for 10gbit SFP+ SR optics! :-)

After installation we had several issues with "dead ESX cluster".

Not even ping worked!

FC worked flawlessly through these nightmares.

Switches again...

Spanning tree protocol bug ate our cluster.

Now we are happy finally. Everything works as advertised.

10gbit primary links are backed up by 1gbit stand-by.

Insane backup speeds of whole VMs compared to our legacy SMB solution to a Nexenta storage appliance.

JohanAnandtech - Monday, March 8, 2010 - link

Thank you. Very nice suggestion, especially since we already started to test this out :-). Will have to wait until April though, as we got a lot of server CPU launches this month.

Lord 666 - Monday, March 8, 2010 - link

Aren't the new 32nm Intel server platforms coming with standard 10GbE NICs? After my SAN project, I'm going to phase in the new 32nm CPU servers, mainly for AES-NI. The 10GbE NICs would be an added bonus.

hescominsoon - Monday, March 8, 2010 - link

It's called xsigo (pronounced zee-go) and solves the I/O issue you are trying to solve here for VM I/O bandwidth.

JohanAnandtech - Monday, March 8, 2010 - link

Basically, it seems like using InfiniBand to connect each server to an InfiniBand switch. And that InfiniBand connection is then used by software which offers both a virtual HBA and a virtual NIC. Right? Innovative, but starting at $100k, looks expensive to me.

vmdude - Monday, March 8, 2010 - link

"Typically, we’ll probably see something like 20 to 50 VMs on such machines."

That would be a low VM per core count in my environment. I typically have 40 VMs or more running on a 16 core host that is populated with 96 GB of RAM.

ktwebb - Sunday, March 21, 2010 - link

Agreed. With Nehalems it's about a 2 VMs per core ratio in our environment. And that's conservative. At least with vSphere and overcommit capabilities.

duploxxx - Monday, March 8, 2010 - link

All depends on design and application type. We typically have 5-6 VMs on a 12 core 32GB machine and about 350 of those, running in a constant 60-70% CPU utilization range.

switcher - Thursday, July 29, 2010 - link

Great article and comments. Sorry I'm so late to this thread, but I was curious to know what the vSwitch is doing during the benchmark? How is it configured? @emuslin notes that SR-IOV is more than just VMDq, and AFAIK the Intel 82598EB doesn't support SR-IOV, so what we're seeing is the boost from NetQueue. What support for SR-IOV is there in ESX these days?

It'd be nice to see SR-IOV data too.