The RV870 Story: AMD Showing up to the Fight

by Anand Lal Shimpi on February 14, 2010 12:00 AM EST - Posted in GPUs

The Other Train - Building a Huge RV870

While the Radeon HD 5800 series just launched last September, discussions of what the GPUs would be started back in 2006.

Going into the fall of 2007, ATI had a rough outline of what the Evergreen family was going to look like. ATI was well aware of DirectX 11 and Microsoft’s schedule for Windows 7. They didn’t know the exact day it would come out, but ATI knew roughly when it had to be ready. This was going to be another one of those market bulges that they had to align themselves with. Evergreen had to be ready by Q3 2009, but what would it look like?

Carrell wanted another RV770. He believed in the design he had proposed earlier; he wanted something svelte and affordable. The problem, as I mentioned earlier, was that RV770 had no credibility internally. This was 2007. RV770 didn’t hit until a year later, and even up to the day the first reviews went live there were skeptics within ATI.

Marketing didn’t like the idea of building another RV770. No one in the press liked R600 and ATI was coming under serious fire. It didn’t help that AMD had just acquired ATI and the CPU business was struggling as well. Someone had to start making money. Ultimately, marketing didn’t want to be on the hook two generations in a row for not being at the absolute top.

It’s difficult to put PR spin on why you’re not the fastest, especially in a market that traditionally rewards the kingpin. Marketing didn’t want another RV770; they wanted an NVIDIA killer. At the time, no one knew that the 770 would be an NVIDIA killer. They thought they just needed to build something huge.

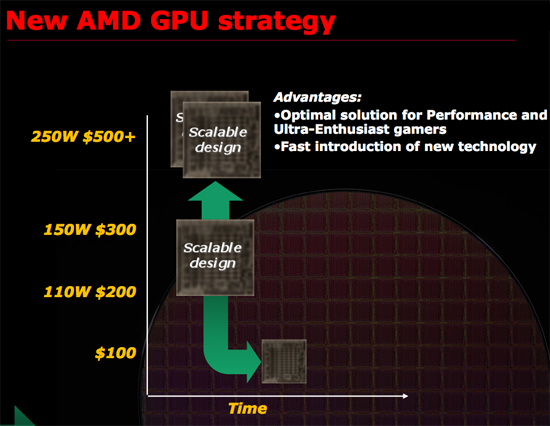

AMD's new GPU strategy...but only for the RV770

From August through November 2007, Carrell Killebrew came very close to quitting. The argument to build a huge RV870 because NVIDIA was going to build a huge competitor infuriated him. It was the exact thinking he fought so hard against just a year earlier with the RV770. One sign of a great leader is someone who genuinely believes in himself. Carrell believed his RV770 strategy was right. And everyone else was trying to get him to admit he was wrong, before the RV770 ever saw the light of day.

Even Rick Bergman, a supporter of Carrell’s in the 770 design discussions, agreed that it might make sense to build something a bit more aggressive with 870. It might not be such a bad idea for ATI to pop their heads up every now and then. Surprise NVIDIA with RV670, 770 and then build a huge chip with 870.

While today we know that the smaller die strategy worked, ATI was actually doing the sensible thing by not making another RV770. If you’re already taking a huge risk, is there any sense in taking another one? Or do you hedge your bets? Doing the former is considered juvenile, the latter - levelheaded.

Carrell didn’t buy into it. But his options were limited. He could either quit, or shut up and let the chips fall where they may.

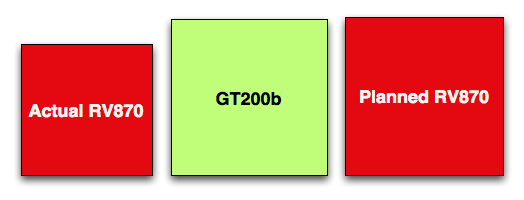

A comparison of die sizes - to scale.

What resulted was sort of a lame compromise. The final PRS was left without a die size spec. Carrell agreed to make the RV870 at least 2x the performance of what they were expecting to get out of the RV770. I call it a lame compromise because engineering took that as a green light to build a big chip. They were ready to build something at least 20mm on a side, probably 22mm after feature creep.

132 Comments


ImmortalZ - Monday, February 15, 2010

Long time reader and lurker here.

This article is one of the best I've read here - hell, it's one of the best I've ever read on any tech site. Reading about and getting perspective on what makes companies like ATI tick is great. Thank you and please, more!

tygrus - Sunday, February 14, 2010

Sequences of numbers in a logical way are easier to remember than names. The RV500, RV600 .. makes order obvious. Using multiple names within a generation of chips are confusing and not memorable. They do not convey sequence or relative complexity.

Can you ask if AMD are analysing current games/GPGPU and future games/GPGPU to identify possible areas for improvement or skip less useful proposed design changes. Like the Intel >2% gain for <1% cost.

Yakk - Sunday, February 14, 2010

Excellent article! As I've read in a few other comments, this article (and one similar I'd read prior) made me register for the first time, even if I've been reading this site for many years.

I could see why "Behind the scenes" articles can make certain companies nervous and others not, mostly based on their own "corporate culture" I'd think.

It was a very good read, and I'm sure every engineer who worked on any given generation on GPU's could have many stories to tell about tech challenges and baffling (at the time) corporate decisions. And also a manager's side of the work in navigating corporate red tape, working with people, while delivering something worthwhile as an end product is also a huge. Having a good manager (people) with a good subject knowledge (tech) is rare, then for Corp. Execs. to know they have one is MUCH rarer still...

If anyone at AMD/ATI read these comments, PLEASE look at the hardware division and try to implement changes to the software division to match their successes...

(btw been using nv cards almost exclusively since the TNT days, and just got a 5870 for the first time this month. ATI Hardware I'd give an "A+", Software... hmm, I'd give it a "C". Funny thing is nv is almost the exact opposite right now)

Perisphetic - Sunday, February 14, 2010

Someone nominate this man for the Pulitzer Prize!

As many have stated before, this is a fantastic article. It goes beyond extraordinary, exceptional and excellent. This has become my new benchmark for high quality computer industry related writing.

Thank you sir.

ritsu - Monday, February 15, 2010

It's not exactly The Soul of a New Machine. But, fine article. It's nice to have a site willing to do this sort of work.

shaggart5446 - Sunday, February 14, 2010

very appreciative for this article im from ja but reading this make me file like ill go back to school thanks anand ur the best big up yeah man

529th - Sunday, February 14, 2010

The little knowledge I have about the business of making a graphics card, that it was Eyefinity that stunted the stability-growth of the 5xxx drivers by the allocation of resources of the software engineers to make Eyefinity work.

chizow - Sunday, February 14, 2010

I usually don't care much for these fluff/PR pieces but this one was pretty entertaining, probably because there was less coverage of what the PR/Marketing guys had to say and more emphasis on the designers and engineers. Carrell sounds like a very interesting guy and a real asset to AMD, they need more innovators like him leading their company and less media exposure from PR talking heads like Chris Hook. Almost tuned out when I saw that intro pic, thankfully the article shifted focus quickly.

As for the article itself, among the many interesting points made in there, a few that caught my eye:

1) It sounds like some of the sacrifices made with RV870's die size help explain why it fell short of doubling RV770/790 in terms of performance scaling. Seems as if memory controllers might've also been cut as edge real estate was lost, and happen to be the most glaring case where RV870 specs weren't doubled with regard to RV770.

2) The whole cloak and dagger bit with EyeFinity was very amusing and certainly helps give these soulless tech giants some humanity and color.

3) Also with EyeFinity, I'd probably say Nvidia's solution will ultimately be better, as long as AMD continues to struggle with CrossFire EyeFinity support. It actually seems as if Nvidia is applying the same framebuffer splitting technology via PCIe/SLI link with their recently announced Optimus technology to Nvidia Surround, both of course lending technology from their Quadro line of cards.

4) The discussion about fabs/yields was also very interesting and helps shed some light on some of the differences between the strategies used by both companies in the past to present. AMD has always leveraged new process technologies in the past as soon as possible, Nvidia in the past has more closely followed Intel's Tick/Tock cadence of building high-end on mature processes and teething smaller chips on new processes. That clearly changed this time around on 40nm so it'll be interesting to see what AMD does going forward. I was surprised there wasn't any discussion about why AMD hasn't looked into GlobalFoundries as their GPU foundry.

SuperGee - Sunday, February 14, 2010

nV eyeFinity counter solution is a fast software reaction which is barely the same thing. You need SLI because one GPU can do only 2 active ports. That's the main difference. So you depend on a more high-end platform. An SLI mobo, a PSU capable of feeding two Gcards. While ATI gives you 3 or 6 out of one GPU.

nV can deliver something native in their next design. Equal and the possibility to be better at it. But we are still waiting for their DX11 parts. I wonder if they could slap a solution in the refresh or can do it only when they introduce the new architecture "GF200".

chizow - Monday, February 15, 2010

Actually EyeFinity's current CF problems are most likely a software problem which is why Nvidia's solution is already superior from a flexibility and scalability standpoint. They've clearly worked out the kinks of running multiple GPUs to a single frame buffer and then redistributing portions of that framebuffer to different GPU outputs.

AMD's solution seems to have problems because output on each individual GPU is only downstream atm, so while one GPU can send frame data to a primary GPU for CF, it seems secondary GPUs have problems receiving frame data to output portions of the frame.

Why I say Nvidia's solution is better overall is simply because the necessity of SLI will automatically decrease the chance of a poor gaming experience when gaming at triple resolutions, which is clearly a problem with some newer games and single-GPU EyeFinity. Also, if AMD was able to use multiple card display outputs, it would solve the problem of requiring a $100 active DP dongle for the 3rd output if a user doesn't have a DP capable monitor.