Dynamic Power Management: A Quantitative Approach

by Johan De Gelas on January 18, 2010 2:00 AM EST. Posted in IT Computing

Our Benchmark Choice

For this article we chose Fritzmark 4.2, the chess benchmark designed by Mathias Feist. Its disadvantage is that it does not represent a real-world workload for most IT professionals, but it lets us control the number of threads easily and precisely. It is also an entirely integer-dominated benchmark that runs completely in the CPU caches. This allows us to isolate the CPU power savings, while the performance and power measurements still bear some resemblance to typical server loads. This is in contrast to an FP-intensive benchmark like LINPACK.

Software Power Management: Windows 2008 Power Plans

On our 64-bit Windows Server 2008 R2 Enterprise two power plans are available:

The interesting thing is how the power plan affects the processor power management (PPM). With Balanced, Turbo Boost never came into action. The L3426 was stuck at 1.86GHz and the X3470 never clocked higher than 2.93GHz. When running idle, both CPUs stayed at 1.2GHz (9x multiplier). The Opterons scaled back to 0.8GHz.
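The clock speeds quoted above are products of a base clock and a multiplier. The short sketch below reproduces them, assuming the 133.33 MHz base clock (BCLK) of Nehalem-class Xeons. Only the 9x idle multiplier is given in the text; the 14x and 22x values are inferred from the quoted frequencies and should be treated as assumptions.

```python
# Assumption: 133.33 MHz base clock (BCLK); the article does not state it.
BCLK_MHZ = 400 / 3


def core_clock_ghz(multiplier: int) -> float:
    """Return the core clock in GHz for a given CPU multiplier."""
    return multiplier * BCLK_MHZ / 1000


# The 9x idle multiplier comes from the article; 14x and 22x are inferred.
for label, multiplier in [
    ("Idle, both Xeons", 9),
    ("L3426 default clock", 14),
    ("X3470 default clock", 22),
]:
    print(f"{label}: {multiplier}x -> {core_clock_ghz(multiplier):.2f} GHz")
```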

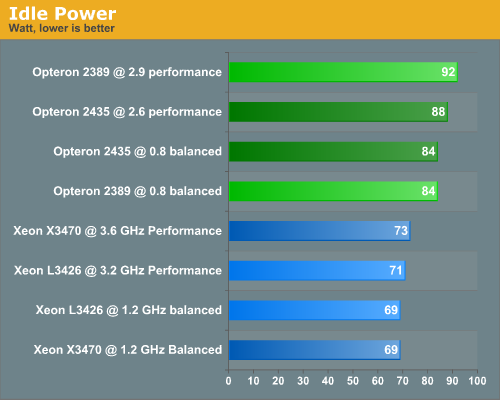

Once set to the Performance power plan, the CPUs never scaled below the default clock speed. According to most clock speed utilities, the Xeons always tried to achieve the highest possible Turbo Boost clock speed. The L3426 switched between 3.066 and 3.2GHz. Note that this did not increase the power consumption significantly: the L3426 only used 2W extra. The Opterons ran at their top speed.

To measure the effect of the power plans, we measured the power consumption of the different servers running idle in Windows 2008 R2. This is the power consumption of the complete system, measured at the electrical outlet, excluding the fans.

We focus on a comparison between the green and blue bars. Comparing the CPUs on each platform offers some interesting insights. Let's first check out the AMD platform. The Opteron 2389 "Shanghai" clearly needs a higher voltage to achieve 2.9GHz (1.15 - 1.325 V). Despite having two more cores to power, the six-core Opteron needs 4W less than the 2.9GHz quad-core Opteron. The reason is that the 2.6GHz six-core never needs more than 1.3V (min: 1.075V) and is also making very good use of clock gating with cache dump (a.k.a. "Smart Fetch").
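To get a feel for how much the lower voltage alone contributes, here is a rough sketch using the textbook approximation that dynamic power scales with cores × V² × f. It assumes that both Opterons idle at 0.8GHz at their minimum voltages (1.15V and 1.075V) and that every core switches the same capacitance. It ignores leakage, the uncore and clock gating, so it is an illustration only, not a model of the measured numbers.

```python
def relative_dynamic_power(cores: int, voltage: float, freq_ghz: float) -> float:
    """Dynamic power up to a constant factor: cores * V^2 * f."""
    return cores * voltage ** 2 * freq_ghz


# Assumption: both chips idle at 0.8 GHz at their minimum quoted voltage.
quad_core = relative_dynamic_power(cores=4, voltage=1.15, freq_ghz=0.8)
six_core = relative_dynamic_power(cores=6, voltage=1.075, freq_ghz=0.8)

per_core_ratio = (six_core / 6) / (quad_core / 4)
total_ratio = six_core / quad_core

print(f"Per-core dynamic power, six-core vs quad-core: {per_core_ratio:.2f}")
print(f"Total dynamic power, six-core vs quad-core:    {total_ratio:.2f}")
```

Under these assumptions each six-core core draws roughly 13% less dynamic power, which is not enough on its own to offset two extra cores. That is consistent with clock gating also playing a large part in the lower idle power.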

The idle power measurement of the Xeons shows us how little power is saved by scaling back the frequency: only 2W. The power savings are a result of fine-grained clock gating and core power gating.

35 Comments

JohanAnandtech - Tuesday, January 19, 2010 - link

Well, Oracle has a few downsides when it comes to this kind of testing. It is not very popular in smaller and medium businesses AFAIK (our main target), and we still haven't worked out why it performs much worse on Linux than on Windows. So choosing Oracle is a sure way to make the project time explode... IMHO.

ChristopherRice - Thursday, January 21, 2010 - link

Works worse on Linux than Windows? You likely have a setup issue with the kernel parameters or within Oracle itself. I actually don't know of any enterprise location that uses Oracle on Windows anymore. "Generally all Rhel4/Rhel5/Sun".

TeXWiller - Monday, January 18, 2010 - link

The 34xx series supports four quad-rank modules, giving today a maximum supported amount of 32GB per CPU (and board). The 24GB limit is that of the three-channel controller with unbuffered memory modules.

pablo906 - Monday, January 18, 2010 - link

I love Johan's articles. I think this has some implications for which virtualization solutions may be the most cost effective. When you're running at 75% capacity on every server, I think the AMD solution could possibly become more attractive. I think I'm going to have to do some independent testing in my datacenter with this.

I'd like to mention that focusing on VMware is a disservice to VT technology as a whole. It would be like not having benchmarked the K6-3+ just because P2s and Celerons were the mainstream and SS7 boards weren't quite up to par. There are situations, primarily virtualizing Linux, where Citrix XenServer is a better solution. Also, many people who are buying Server '08 licenses are getting Hyper-V licenses bundled in for "free."

I've known several IT Directors in very large health care organizations who are deploying a mixed Hyper-V/XenServer environment because of the "integration" between the two. Many of the people I've talked to at events around the country are using this model for at least part of their virtualization deployments. I believe it would be important to publish to the industry what kind of performance you can expect from such deployments.

You can do some really interesting HomeBrew SAN deployments with OpenFiler or OpeniSCSI that can compete with the performance of EMC, Clarion, NetApp, LeftHand, etc. NFS deployments I've found can bring you better performance and manageability. I would love to see some articles about the strengths and weaknesses of the storage subsystem used and how it affects each type of deployment. I would absolutely be willing to devote some datacenter time and experience with helping put something like this together.

I think this article really lends itself to tying in with the virtualization talks, and I would love to see more comments on what you think this means to someone with a small, medium, or large datacenter.

maveric7911 - Tuesday, January 19, 2010 - link

I'd personally prefer to see KVM over XenServer. Even Red Hat is ditching Xen for KVM. In the environments I work in, Xen is actually being decommissioned for VMware.

JohanAnandtech - Tuesday, January 19, 2010 - link

I can see the theoretical reasons why some people are excited about KVM, but I still don't see the practical ones. Who is using this in production? Getting Xen, VMware or Hyper-V to do their job is pretty easy; KVM does not seem to be even close to being beta. It is hard to get working, and it is nowhere near Xen when it comes to reliability. Admittedly, those are our first impressions, but we are no virtualization rookies.

Why do you prefer KVM?

VJ - Wednesday, January 20, 2010 - link

"It is hard to get working, and it is nowhere near Xen when it comes to reliability."

I found Xen (separate kernel boot at the time) more difficult to work with than KVM (kernel module), so I'm thinking that the particular (host) platform you're using (Windows?) may be geared towards one platform.

If you had to set it up yourself then that may explain reliability issues you've had?

On Fedora linux, it shouldn't be more difficult than Xen.

Toadster - Monday, January 18, 2010 - link

One of the new technologies released with Xeon 5500 (Nehalem) is Intel Intelligent Power Node Manager, which controls P/T states within the server CPU. This is a good article on existing P/C states, but will you guys be doing a review of newer control technologies as well?

http://communities.intel.com/community/openportit/...

JohanAnandtech - Tuesday, January 19, 2010 - link

I don't think it is "newer". Going to C6 for idle cores is less than a year old, remember :-).

It seems to be a sort of manager which monitors the electrical input (PDU based?) and then lowers the P-states to keep the power at a certain level. Did I miss something? (quickly glanced)

I think personally that HP is more onto something by capping the power inside their server management software. But I still have to evaluate both. We will look into that.

n0nsense - Monday, January 18, 2010 - link

Maybe I missed something in the article, but from what I see at home, C2Q (and C2D) can manage frequencies per core.

I'm not sure it is possible under Windows, but in Linux it just works this way. You can actually see each core at its own frequency.

Moreover, you can select for each core which frequency it should run.
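As a minimal sketch of how the per-core frequencies mentioned above can be inspected on Linux, the snippet below reads the cpufreq entries in sysfs. It assumes a kernel with a cpufreq driver loaded, which exposes `scaling_cur_freq` (in kHz) for each core. The helper name `read_core_frequencies` is made up for this illustration.

```python
from pathlib import Path


def read_core_frequencies(sysfs_root: str = "/sys/devices/system/cpu") -> dict:
    """Return {core name: current frequency in MHz} from the cpufreq sysfs files.

    Cores without a readable scaling_cur_freq file are skipped.
    """
    frequencies = {}
    pattern = "cpu[0-9]*/cpufreq/scaling_cur_freq"
    for path in sorted(Path(sysfs_root).glob(pattern)):
        core = path.parent.parent.name  # e.g. "cpu0"
        try:
            frequencies[core] = int(path.read_text().strip()) / 1000  # kHz -> MHz
        except (OSError, ValueError):
            continue
    return frequencies


# Prints nothing on systems without cpufreq support.
for core, mhz in read_core_frequencies().items():
    print(f"{core}: {mhz:.0f} MHz")
```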