At this year’s Consumer Electronics Show, NVIDIA had several things going on. In a public press conference they announced 3D Vision Surround and Tegra 2, while on the show floor they had products o’plenty, including a GF100 setup showcasing 3D Vision Surround.

But if you’re here, then what you’re most interested in is what wasn’t talked about in public, and that was GF100. With the Fermi-based GF100 GPU finally in full production, NVIDIA was ready to talk to the press about the rest of GF100, and at the tail-end of CES we got our first look at GF100’s gaming abilities, along with a hands-on look at some unknown GF100 products in action. The message NVIDIA was trying to send: GF100 is going to be here soon, and it’s going to be fast.

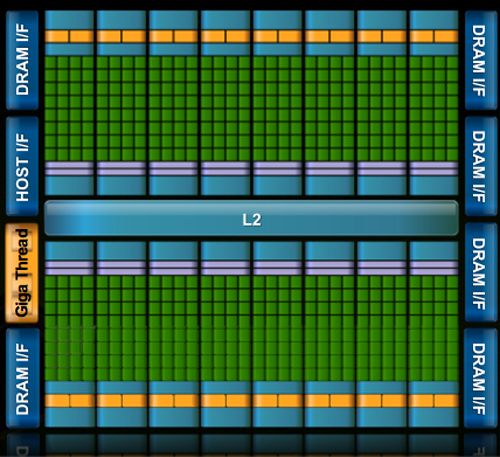

Fermi/GF100 as announced in September of 2009

Before we get too far ahead of ourselves though, let’s talk about what we know and what we don’t know.

During CES, NVIDIA held deep dive sessions for the hardware press. At these deep dives, NVIDIA focused on three things: discussing GF100’s architecture as is relevant for a gaming/consumer GPU, discussing their developer relations program (including the infamous Batman: Arkham Asylum anti-aliasing situation), and finally demonstrating GF100 in action on some games and some productivity applications.

Many of you have likely already seen the demos, as videos of what we saw have been on YouTube for a few days now. What you haven’t seen, and what we’ll be focusing on today, is what we’ve learned about GF100 as a gaming GPU. We now know everything about what makes GF100 tick, and we’re going to share it all with you.

With that said, while NVIDIA is showing off GF100, they aren’t showing off the final products. As such we can talk about the GPU, but we don’t know anything about the final cards. All of that will be announced at a later time – and no, we don’t know when that will be either. In short, here’s what we still don’t know and will not be able to cover today:

- Die size

- What cards will be made from the GF100

- Clock speeds

- Power usage (we only know that it’s more than GT200)

- Pricing

- Performance

At this point the final products and pricing are going to depend heavily on what the final GF100 chips are like. The clock speeds NVIDIA can get away with will determine power usage and performance, and by extension, pricing. Make no mistake though, NVIDIA is clearly aiming to be faster than AMD’s Radeon HD 5870, so form your expectations accordingly.

For performance in particular, we have seen one benchmark: Far Cry 2, running the Ranch Small demo, which NVIDIA ran on both their unnamed GF100 card and a GTX 285. The GF100 card was faster (84fps vs. 50fps), but because Ranch Small is a semi-randomized benchmark (certain objects appear in some runs and not others) and we’ve seen Far Cry 2 be CPU-limited in other situations, we don’t put much faith in this specific result. When it comes to performance, we’re content to wait until we can test GF100 cards ourselves.
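For a sense of scale, the gap between the two figures NVIDIA showed can be worked out as below. This is only a back-of-the-envelope sketch: the function name `relative_speedup` is our own, and the inputs are the single-run numbers quoted above, not results we measured ourselves.

```python
def relative_speedup(new_fps: float, old_fps: float) -> float:
    """Return how many times faster new_fps is compared with old_fps."""
    if old_fps <= 0:
        raise ValueError("old_fps must be positive")
    return new_fps / old_fps


# Frame rates as quoted by NVIDIA for Far Cry 2 (Ranch Small); unverified.
gf100_fps = 84.0   # unnamed GF100 card
gtx285_fps = 50.0  # GTX 285

ratio = relative_speedup(gf100_fps, gtx285_fps)
print(f"GF100 vs. GTX 285: {ratio:.2f}x ({(ratio - 1) * 100:.0f}% faster)")
```

Taken at face value that is a 1.68x lead, but given the run-to-run variation in Ranch Small it should be read as a rough indication rather than a firm number.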

With that out of the way, let’s get started on GF100.

115 Comments

SothemX - Tuesday, March 9, 2010 - link

WELL, let's just make it simple. I am an avid gamer... I WANT and NEED power and performance. I care only about how well my games play, how good they look, and the impression they leave with me when I am done.

I own a PS3 and am thrilled they went with Nvidia (smart move).

I own a PC that utilizes the 9800GT OC card... getting ready to upgrade to the new GF100 when it releases. The last thing on my mind is market share, and cost is not an issue.

Hard-Core gaming requires Nvidia. Entry-level baby boomers use ATI.

Nvidia is just playing with their food... it's a vulgar display of power: better architecture, better programming, better gaming.

StevoLincolnite - Monday, January 18, 2010 - link

[quote]So why does NVIDIA want so much geometry performance? Because with tessellation, it allows them to take the same assets from the same games as AMD and generate something that will look better. With more geometry power, NVIDIA can use tessellation and displacement mapping to generate more complex characters, objects, and scenery than AMD can at the same level of performance.[/quote]

Might I add to that, nVidia's design is essentially "modular": they can increase and decrease their geometry performance essentially by taking units out. This, however, will force programmers to program for the lowest common denominator, whilst AMD's iteration of the technology is the same across the board, so essentially you can have identical geometry regardless of the chip.

Yojimbo - Monday, January 18, 2010 - link

just say the minimum, not the lowest common denominator. it may look fancy but it doesn't seem to fit.

chizow - Monday, January 18, 2010 - link

The real distinction here is that Nvidia's revamp of fixed-function geometry units to a programmable, scalable, and parallel Polymorph engine means their implementation won't be limited to acceleration of tessellation in games. Their improvements will benefit every game ever made that benefits from increased geometry performance. I know people around here hate to claim "winners" and "losers" when AMD isn't winning, but I think it's pretty obvious Nvidia's design and implementation is the better one.

Fully programmable vs. fixed-function: as long as the fully programmable option is at least as fast, it is always going to be the better solution. Just look at the evolution of the GPU from mostly fixed-function hardware to what it is today with GF100... a fully programmable, highly parallel, compute powerhouse.

mcnabney - Monday, January 18, 2010 - link

If Fermi was a winner Nvidia would have had samples out to be benchmarked by Anand and others a long time ago.

Fermi is designed for GPGPU with gaming secondary. Goody for them. They can probably do a lot of great things and make good money in that sector. But I don't know about gaming. Based upon the info that has gotten out and the fact that reality hasn't appeared yet I am guessing that Fermi will only be slightly faster than 5870 and Nvidia doesn't want to show their hand and let AMD respond. Remember, AMD is finishing up the next generation right now - so Fermi will likely compete against Northern Isles on AMD's 32nm process in the Fall.

dragonsqrrl - Monday, February 15, 2010 - link

Firstly, did you not read this article? The gf100 delay was due in large part to the new architecture they developed, an architectural shift ATI will eventually have to make if they wish to remain competitive. In other words, similarly to the g80 enabling GPU computing features/unified shaders for the first time on the PC, Nvidia invested huge resources in r&d and as a result had a next generation, revolutionary GPU before ATI.

Secondly, Nvidia never meant to place gaming second to GPU computing, as much as you ATI fanboys would like to troll about this subject. What they're trying to do is bring GPU computing up to the level GPU gaming is already at (in terms of accessibility, reliability, and performance). The research they're doing in this field could revolutionize research into many fields outside of gaming, including medicine, astronomy, and 'yes' film production (something I happen to deal with a LOT), while revolutionizing gaming performance and feature sets as well.

Thirdly, I would be AMAZED if AMD can come out with their new architecture (their first since the hd2900) by the 3rd quarter of this year, and on the 32nm process. I just can't see them pushing GPU technology forward in the same way Nvidia has given their new business model (smaller GPUs, less focus on GPU computing), while meeting that tight deadline.

chewietobbacca - Monday, January 18, 2010 - link

"Winning" the generation? What really matters?

The bottom line, that's what. I'm sure Nvidia liked winning the generation - I'm sure they would have loved it even more if they didn't lose market share and potential profits from the fight...

realneil - Monday, January 25, 2010 - link

winning the generation is a non-prize if the mainstream buyer can only wish they had one. Make this kind of performance affordable and then you'll impress me.

chizow - Monday, January 18, 2010 - link

Yes and the bottom line showed Nvidia turning a profit despite not having the fastest part on the market.

Again, my point about G80'ing the market was more a reference to them revolutionizing GPU design again rather than simply doubling transistors and functional units or increasing clockspeeds based on past designs.

The other poster brought up performance at any given point in time. I was simply pointing out the fact that being first or second to market doesn't really matter as long as you win the generation, which Nvidia has done for the last few generations since G80 and will again once GF100 launches.

sc3252 - Monday, January 18, 2010 - link

Yikes, if it is more than the original GTX 280 I would expect some loud cards. When I saw those benchmarks of Far Cry 2 I was disappointed that I didn't wait, but now that it is using more than a GTX 280 I think I may have made the right choice. While right now I want as much performance as possible, eventually my 5850 will go into a secondary PC (why I picked the 5850) with a lesser power supply. I don't want to have to buy a bigger power supply just because a friend might come over and play once a week.