Where AFR Is Mediocre, and How Hydra Can Be Better

It’s best to start with how NVIDIA and AMD handle modern multi-GPU rendering. After some earlier experimentation, both have settled on a method called Alternate Frame Rendering (AFR), which, as the name implies, has each card render a different frame.

The advantage of AFR is that it’s relatively easy to implement – each card doesn’t need to know what the other card is doing beyond simple frame synchronization. The driver, in turn, has to keep each GPU fed and timed correctly (not to mention coaxing another frame out of the CPU for rendering).

However, even as simple as AFR is, it isn’t foolproof and it isn’t flawless. Making it work at its peak level of performance requires some understanding of the game being run, which is why even such a “dumb” method still needs game profiles. Furthermore, it comes with a few inherent drawbacks:

- Each GPU needs to get a frame done in the same amount of time as the other GPUs.

- Because of the timing requirement, the GPUs can’t differ in processing capabilities. AFR works best when they are perfectly alike.

- Dealing with games where the next frame is dependent on the previous one is hard.

- Even with matching GPUs, if your driver gets the timing wrong, it can render frames at an uneven pace. Frames need to be spaced apart equally – when this fails to happen you get microstuttering.

- AFR has multiple GPUs working on different frames, not the same frame. This means that frame throughput increases, but the latency of any individual frame does not improve. So if a single card gets 30fps and takes 33.3ms to render a frame, a pair of cards in AFR gets 60fps but each frame still takes 33.3ms to render.
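
The throughput, latency, and pacing points in the list above come down to simple arithmetic. The following is a minimal sketch of an idealized two-card AFR setup, not anything taken from NVIDIA’s or AMD’s drivers. The function names (`afr_stats`, `present_times`, `frame_gaps`) and the `offset_ms` parameter are made up for illustration, and it assumes every frame costs exactly the same amount of GPU time.

```python
def afr_stats(single_gpu_fps, num_gpus):
    """Idealized AFR: each GPU renders whole frames at the same speed."""
    frame_time_ms = 1000.0 / single_gpu_fps      # time one GPU spends on one frame
    throughput_fps = single_gpu_fps * num_gpus   # frames completed per second overall
    return throughput_fps, frame_time_ms


def present_times(frame_time_ms, offset_ms, num_frames):
    """Completion time of each frame with two GPUs alternating frames.

    GPU 0 finishes a frame every frame_time_ms; GPU 1 does the same but
    starts offset_ms later (hypothetical parameter standing in for the
    driver's timing). Perfect pacing needs offset_ms == frame_time_ms / 2.
    """
    times = []
    for i in range(num_frames):
        gpu = i % 2            # even frames on GPU 0, odd frames on GPU 1
        round_index = i // 2   # how many frames this GPU has already finished
        times.append((round_index + 1) * frame_time_ms + gpu * offset_ms)
    return times


def frame_gaps(times):
    """Time between consecutive frames reaching the display."""
    return [later - earlier for earlier, later in zip(times, times[1:])]


fps, latency_ms = afr_stats(30, 2)
print(f"{fps} fps, {latency_ms:.1f} ms per frame")

# Well-paced: the second GPU starts half a frame time after the first.
print(frame_gaps(present_times(40.0, 20.0, 6)))
# Badly paced: same average frame rate, but the gaps alternate short/long.
print(frame_gaps(present_times(40.0, 5.0, 6)))
```

In both pacing cases the average frame rate is identical. Only the spacing between frames changes, which is why microstuttering does not show up in an average fps figure.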

Despite those drawbacks, for the most part AFR works. Particularly if you’re not highly sensitive to lag or microstuttering, it can get very close to doubling the framerate in a 2-card configuration (though scaling is less efficient with more cards).

Lucid believes they can do better, particularly when it comes to matching cards. AFR needs matching cards for timing reasons, because it can’t actually split up a single frame. With Hydra, Lucid splits up individual frames, which gives it two big advantages over AFR: rendering can be done by dissimilar GPUs, and rendering latency is reduced.

Right now, the ability to use dissimilar GPUs is the primary marketing focus behind the Hydra technology. Lucid and MSI will both be focusing almost exclusively on that ability when it comes to pushing the Hydra and the Fuzion. What you won’t see them focusing on is the performance versus AFR, the difference in latency, or game compatibility for that matter. The ability to use dissimilar GPUs is the big selling point for the Hydra & Fuzion right now.

So how does the Hydra work? We covered this last year when Lucid first announced the Hydra, so we’re not going to cover this completely in depth again. However here’s a quick refresher for you.

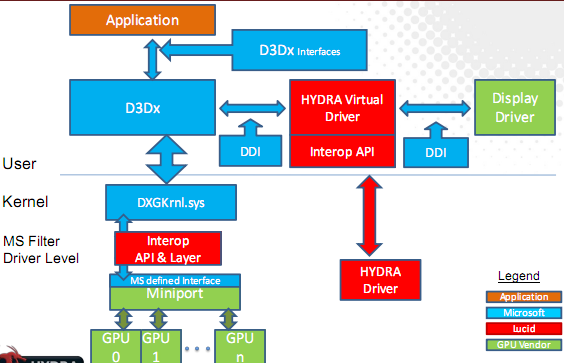

As the Hydra technology is based upon splitting up the job of rendering the objects in a frame, the first task is to intercept all Direct3D or OpenGL calls, and to make some determinations about what is going to be rendered. This is the job of Lucid’s driver, and this is where most of the “magic” is in the Hydra technology. The driver needs to estimate roughly how much work each object will require, look at inter-frame dependencies, and finally weigh the relative power of each GPU.

Once the driver has determined how best to split up the frame, it interfaces with each video card’s driver and hands it a partial frame composed of only the bits that card needs to render. The Hydra then reads back the partial frames and composites them into one whole frame. Finally the complete frame is sent out to the primary GPU (the GPU the monitor is plugged into) to be displayed.

All of this analysis and compositing is quite difficult to do, which is in part why AMD and NVIDIA moved away from frame-splitting schemes, and it is what makes Hydra’s method the “hard” method. Compared to AFR, it takes a great deal more work to split up a frame by objects and to render them on different GPUs.
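
To give a feel for the kind of decision the driver has to make, here is a minimal sketch of splitting one frame’s objects across two mismatched GPUs. Lucid has not disclosed how its driver actually does this, so the code uses a plain greedy heuristic as a stand-in: place the most expensive objects first, each on whichever GPU would finish it soonest. The function `split_frame`, the object names, the cost estimates, and the relative speeds are all hypothetical.

```python
def split_frame(object_costs, gpu_speeds):
    """Greedy split of a frame's objects across GPUs of different speeds.

    object_costs: {object name: estimated work units}      (hypothetical)
    gpu_speeds:   {gpu name: work units completed per ms}   (hypothetical)
    Returns the per-GPU object lists and per-GPU finish times in ms.
    """
    assignments = {gpu: [] for gpu in gpu_speeds}
    finish_ms = {gpu: 0.0 for gpu in gpu_speeds}

    # Place the most expensive objects first so the small ones can fill gaps.
    by_cost = sorted(object_costs.items(), key=lambda item: item[1], reverse=True)
    for name, cost in by_cost:
        # Pick the GPU that would finish this object earliest.
        best = min(gpu_speeds, key=lambda g: finish_ms[g] + cost / gpu_speeds[g])
        assignments[best].append(name)
        finish_ms[best] += cost / gpu_speeds[best]
    return assignments, finish_ms


# Illustrative numbers only: one GPU is assumed to be twice as fast as the other.
objects = {"terrain": 12, "characters": 8, "buildings": 6, "particles": 4, "sky": 2}
gpus = {"fast": 2.0, "slow": 1.0}

assignments, finish_ms = split_frame(objects, gpus)
print(assignments)
print(finish_ms)
# The frame cannot be composited until the slowest GPU is done.
print("frame time (ms):", max(finish_ms.values()))
```

The frame time is set by whichever GPU finishes last, so a bad cost estimate that overloads the weaker card stretches that frame, and doing so inconsistently from frame to frame would show up as uneven pacing.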

As with AFR, this method has some drawbacks:

- You can still microstutter if you get the object allocation wrong. Some frames may put too much work on the weaker GPU.

- Since you can use mismatched cards, you can’t always use “special” features like Coverage Sampling Anti-Aliasing unless both cards have the feature.

- Synchronization still matters.

- Individual GPUs need to be addressable. This technology doesn’t work with multi-GPU cards like the Radeon 5970 or the GeForce GTX 295.

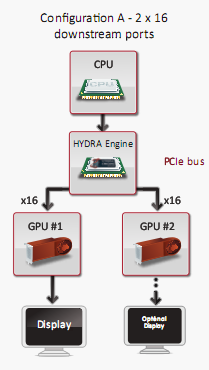

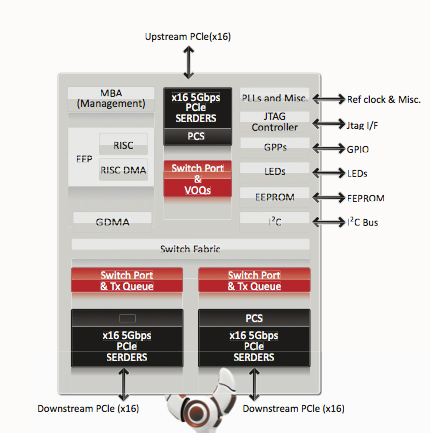

This is also a good time to quickly mention the hardware component of the Hydra. The Hydra 200 is a combination PCIe bridge chip, RISC processor, and compositing engine. Lucid won’t tell us too much about it, but we know the RISC processor contained in it runs at 300MHz, and is based on Tensilica’s Diamond architecture. The version of the Hydra being used in the Fuzion is their highest-end part, the LT24102, which features 48 PCIe 2.0 lanes (16 up, 32 down). This chip is 23mm2 and consumes 5.5W. We do not have any pictures of the die, nor do we know the transistor count, but you can count on it using relatively few transistors (perhaps 100M?).

Ultimately in a perfect world, the Hydra method is superior – it can be just as good as AFR with matching cards, and you can use dissimilar cards. In a practical world, the devil’s in the details.

47 Comments

cesthree - Friday, January 8, 2010 - link

Multi GPU gaming already suffers from drivers that suck. You want the < 3% who actually run multi GPU's to throw HYDRA driver issues into the mix? That doesn't sound appealing, at all, even if I had thousands to throw at the hardware.

Fastest Single GPU. Nuff Said.

Although if Lucid can do this, then maybe ATI and Nvidia will get off their dead-bums and fix their drivers already.

Makaveli - Thursday, January 7, 2010 - link

The major fail is most of the post on this article, its very early silicon with beta drives. And most of you expect it to be beating Xfire and Sli by 30%. When the big guys have had years to tune their drives and they own the hardware. I would like to see where this by next christmas before I pass judgement. Just because you don't see it in front of your face doesn't mean the potential isn't there.

Sometimes alittle faith will go along way.

prophet001 - Friday, January 8, 2010 - link

i agree

Hardin - Thursday, January 7, 2010 - link

It's a shame the results don't look as promising as we had hoped. Maybe it's just early drivers issues. But it looks like it's too expensive and it's not any better than crossfire as it is. It doesn't even have dx 11 support yet and who knows when they will add it.

Jovec - Thursday, January 7, 2010 - link

With these numbers, I wonder why they allowed them to be posted. They had to know they were getting much worse results with their chips than XF, and the negative publicity isn't going to do them any good. I suppose they didn't want to have another backroom showing, but that doesn't mean they should show at this stage.

jnmfox - Thursday, January 7, 2010 - link

As has been stated the technology is unimpressive, hopefully they can get things fixed. I am just happy to see one of the best RTS ever made in the benchmarks again. CoH should always be part of anandtech's reviews, then I wouldn't need to go to other sites for video card reviews :P.

IKeelU - Thursday, January 7, 2010 - link

I was actually hoping AMD would buy this tech and integrate it into their cards/chipsets. Or maybe Intel. As it stands, we have a small company, developing a supposedly GPU-agnostic "graphics helper" that is attempting to supplant what the big players are already doing with proprietary tech. They need support from mobo manufacturers and cooperation from GPU vendors (who have little incentive to help at the moment due to the desire to lock-in users to proprietary stuff). I really, really, want the Hydra to be a success, but the situation is a recipe for failure.

nafhan - Friday, January 8, 2010 - link

That's the same thing I was thinking through the whole article. The market they are going after is small, very demanding, and completely dependent on other companies. The tech is good, but I have a hard time believing they will ever have the resources to implement it properly. Best case scenario (IMO): AMD buys them once they go bankrupt in a year or so, keeps all the engineers, and integrates the tech into their enthusiast NB/SB.

krneki457 - Thursday, January 7, 2010 - link

Anand couldn't you use a gtx295 to get approximate gtx280 SLI figures? I read that Hydra doesn't work with dual GPU cards, but couldn't you disable Hydra? You mentioned in the article, that this is possible.

As for technology itself, like a lot of comments already mentioned, I really don't see much use in it. Even if it worked properly it would have been more at home in low to mid range motherboards.

Ryan Smith - Thursday, January 7, 2010 - link

I'm going to be seriously looking at using hacked drivers to get SLI results. There are a few ways to add SLI to boards that don't officially support it.

It's not the most scientific thing, but it may work to bend the rules this once.