CES Preview - Micron's RealSSD C300 - The First 6Gbps SATA SSD

by Anand Lal Shimpi on January 7, 2010 12:00 AM EST. Posted in Trade Shows

I bumped into Nathan Kirsch of Legit Reviews and Jansen Ng of DailyTech while I was picking up my CES badge yesterday. They both had Storage Visions badges. I didn't have one. I felt left out.

It got worse when they told me that they just saw Micron's new SSD - the RealSSD C300. Both of them said it looked damn good. I felt extra left out.

Then they told me that Kristin Bordner, Micron PR, was looking for me and wanted to give me a drive. Things started looking up.

I made the trek over to the Riviera today to meet with Micron and take a look at their RealSSD C300. SSDs are difficult enough to evaluate after months of testing. They're basically impossible to gauge from a one-hour meeting in a casino ballroom.

Faster than Intel? Perhaps.

The C300 is based on a new Marvell controller. It's the first consumer SSD to natively support 6Gbps SATA. The firmware and all of the write placement algorithms are developed internally by Micron and its team of 40 engineers. Micron tried using Marvell firmware in the past and quickly learned that it resulted in too much of a "HDD-like" experience (ahem, JMicron).

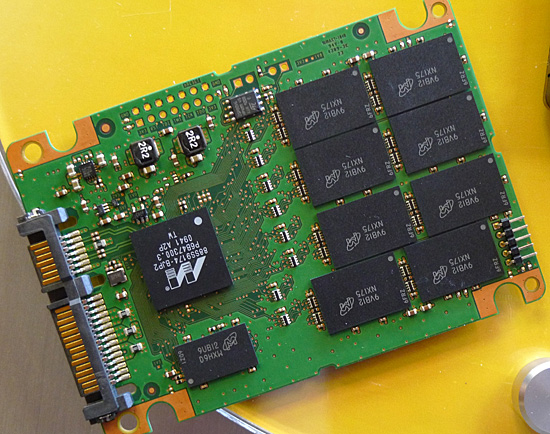

The Marvell controller features two ARM9 processor cores that operate in parallel. While they can load balance, one generally handles host requests while the other handles NAND requests.

Two ARM9 processors, Micron firmware, 256MB of external DRAM and 8 channels of MLC NAND flash

Adjacent to the controller is a massive 256MB DRAM. According to Micron, "very little" user data is stored in the DRAM. It's not used as a long term cache, but mostly for write tracking and mapping tables.

The controller internally doesn't have much cache on-die. Not nearly as much as Intel, according to Micron. While the original X25-M only had a 512KB SRAM, Micron claims that the latest G2 controller has a 2MB cache on-die. Micron x-rayed the chip to find out.

The write placement algorithms are similar in nature to what Intel does. There's no funny SandForce-like technology at work here. Every time a write is performed, the controller does a little bit of cleanup to ensure that the drive doesn't get into an unreasonably slow performance state. Unlike Intel, however, Micron does do garbage collection while the drive is idle. The idle garbage collection works independently of the OS or file system.
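To make the idea concrete, here's a toy Python model of per-write bookkeeping plus idle garbage collection. It's a sketch under loud assumptions: the `ToyFTL` class, the four-page blocks and the greedy pick-the-stalest-block policy are hypothetical textbook stand-ins, not Micron's (or Intel's) firmware, whose details weren't shared in the meeting.

```python
from dataclasses import dataclass, field

PAGES_PER_BLOCK = 4  # hypothetical; real NAND blocks hold far more pages


@dataclass
class Block:
    valid: set = field(default_factory=set)  # logical pages whose live copy is here
    used: int = 0                            # pages programmed since the last erase

    @property
    def stale(self) -> int:
        return self.used - len(self.valid)   # programmed pages holding dead data


class ToyFTL:
    """Toy flash translation layer. NOT any vendor's real algorithm."""

    def __init__(self, num_blocks: int):
        self.blocks = [Block() for _ in range(num_blocks)]
        self.where = {}   # logical page number -> index of the block holding it
        self.erases = 0

    def _open_block(self, exclude=None) -> int:
        for i, block in enumerate(self.blocks):
            if i != exclude and block.used < PAGES_PER_BLOCK:
                return i
        raise RuntimeError("no free pages left")

    def write(self, lpn: int, exclude=None) -> None:
        target = self._open_block(exclude)
        old = self.where.get(lpn)
        if old is not None:
            self.blocks[old].valid.discard(lpn)  # flash can't overwrite in place
        self.blocks[target].valid.add(lpn)
        self.blocks[target].used += 1
        self.where[lpn] = target

    def collect_once(self) -> bool:
        """Greedy GC step: reclaim the block with the most stale pages."""
        victim = max(range(len(self.blocks)), key=lambda i: self.blocks[i].stale)
        if self.blocks[victim].stale == 0:
            return False                       # nothing worth reclaiming
        for lpn in list(self.blocks[victim].valid):
            self.write(lpn, exclude=victim)    # relocate data that is still live
        self.blocks[victim] = Block()          # erase the whole block
        self.erases += 1
        return True

    def idle_collect(self) -> None:
        """What a drive might do while the host is quiet: clean until done."""
        while self.collect_once():
            pass


ftl = ToyFTL(num_blocks=3)
for lpn in range(4):
    ftl.write(lpn)       # fills block 0
for lpn in range(3):
    ftl.write(lpn)       # rewrites leave three stale pages behind in block 0
ftl.idle_collect()       # relocates page 3, then erases block 0
print(ftl.blocks[0].used, ftl.where[3], ftl.erases)
```

A drive that cleans on every write would call something like `collect_once()` from inside `write()`; one that also cleans at idle would run the `idle_collect()` loop whenever no host requests are pending. Nothing in the loop depends on the host, which is why this kind of cleanup can work regardless of OS or file system.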

TRIM is supported but only under Windows 7. There are no software tools to manually TRIM the drive. Micron hopes that its write placement algorithms and idle garbage collection will be enough to keep drive performance high regardless of OS.

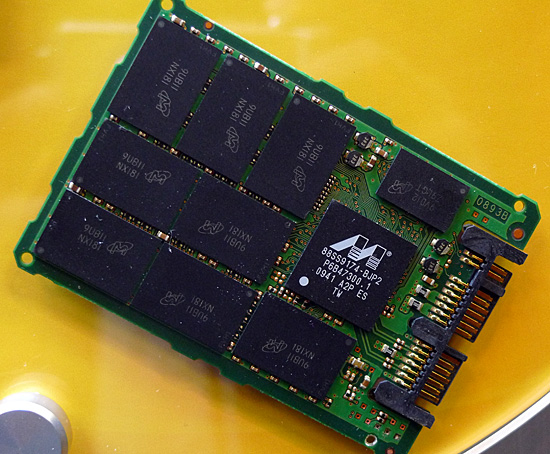

The RealSSD C300 will also be available in a 1.8" form factor for OEMs

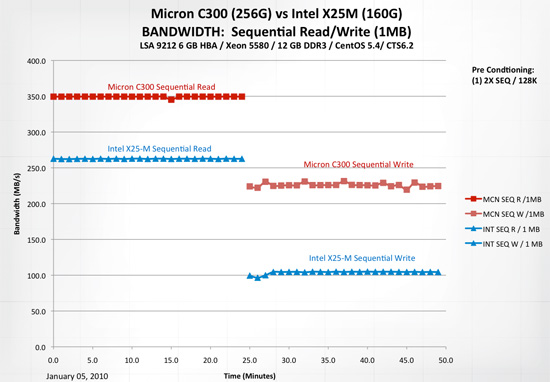

Supporting 6Gbps SATA isn't enough to drive performance up. Micron is also using 34nm ONFI 2.1 NAND flash. The combination of faster NAND and a faster interface results in sequential read speeds of as much as 350MB/s. That's a full 100MB/s greater than the SandForce based OCZ Vertex 2 Pro we just previewed.

Write speeds don't set any records though. Micron claims the drive will do 215MB/s on sequential writes.

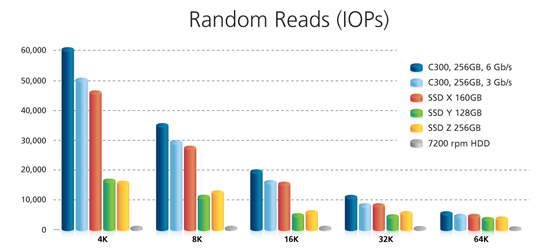

Random 4KB read/write speed appears to be X25-M class if not better. Micron only showed off peak numbers for a very short Iometer run, so we'll have to wait until I get a drive to get a good idea of random performance.

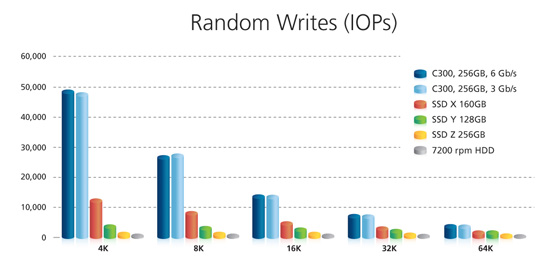

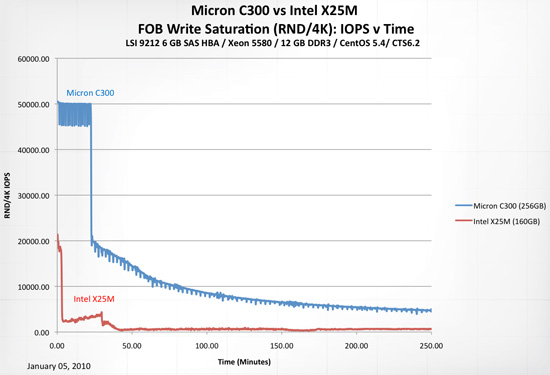

Micron does claim that the drive is more resilient than Intel's X25-M. Running a pure 4KB random write test across the entire drive's LBA space for 250 minutes (!) resulted in the following data:

This workload is far more severe than anything you'd find on a desktop PC, but it does show that the RealSSD C300 appears to be fairly resistant to steep performance drops. The drive is completely unused prior to this test however.

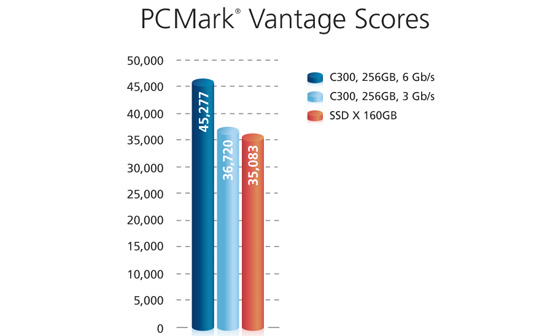

PCMark Vantage performance is pretty impressive. On a SATA 3Gbps controller it appears to equal Intel's X25-M G2, but with a 6Gbps controller it is 23% faster if Micron's numbers can be believed.

The issue of compatibility testing is a, err, non-issue. Micron already has a large compatibility lab for its memory. It just tests SSDs in the same lab now. Because Micron is going to be selling to OEMs, the drives have to pass a 1000-hour qualification test before they can ship. The consumer drive will need 500 hours of test time before it can ship.

The Micron RealSSD C300 will be OEM-only, going after the same markets as Intel, Samsung and Toshiba. Crucial, Micron's retail arm, will sell direct to retail.

The Crucial RealSSD C300 will be available in two capacities: 128GB and 256GB with 7% of the NAND capacity used as spare area. The drives will be priced at $399 and $799 respectively. OEMs will get drives starting at the end of this month, but the Crucial drives will be available for purchase in February.
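As a back-of-the-envelope check on that 7% figure and the pricing, here's a short Python sketch. It assumes (this isn't something Micron stated) that the raw NAND is sized in binary gigabytes of 2^30 bytes while the advertised capacity uses decimal gigabytes of 10^9 bytes; the function names are made up for illustration.

```python
GIB = 2**30   # bytes in a binary gigabyte, assumed unit for the raw NAND
GB = 10**9    # bytes in a decimal gigabyte, assumed unit for advertised capacity


def spare_fraction(capacity: int) -> float:
    """Share of raw NAND left over if `capacity` GiB of flash is sold as `capacity` GB."""
    raw_bytes = capacity * GIB
    user_bytes = capacity * GB
    return (raw_bytes - user_bytes) / raw_bytes


def dollars_per_gb(price: float, capacity: int) -> float:
    return price / capacity


for capacity, price in [(128, 399), (256, 799)]:
    print(f"{capacity}GB: {spare_fraction(capacity):.1%} spare, "
          f"${dollars_per_gb(price, capacity):.2f}/GB")
```

Under that assumption both capacities land just under 7% spare area, and both prices work out to a little over $3 per gigabyte.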

I'm already on the list for a review sample, so for now we wait.

33 Comments

supremelaw - Thursday, January 7, 2010 - link

> From my understanding, although SSDs were approaching the theoretical limit, they were still ~50MB/s away, which is a considerable amount (~17%).

50/300 = 16.7%

Let me try to explain this in reverse:

If an SSD were capable of perfectly saturating a 300 MB/second interface, that would imply that there is absolutely no computational / controller overhead in that device. Although this "mode" may be easy to conceptualize, it's impossible in realistic, practical terms.

But, your comments raise a very important point: when Micron's C300 is cabled to a SATA/6G controller at the other end of the data cable, the maximum data rates do NOT scale up by a factor of 2-to-1.

My conclusion -- from this lack of scaling -- is that the real limiting factors are the raw bandwidth of NAND flash chips themselves and/or the efficiency of controller(s) embedded in the SSD device.

Both of the latter "latencies" are additive, because that embedded controller must wait while NAND flash chips cycle, then the embedded controller(s) must do its own computations.

Also, if an SSD has a DRAM cache, that memory also has its own latencies and access times which add further overhead to the device's normal operation.

Finally, embedded controller(s) must turn around and handle I/O across the data cable, i.e. by communicating with another controller at the other end of that data cable (add-on controller or on-board the motherboard).

> When I move to SSDs it will be on a 6Gbps channel at a minimal. I'd like to see 2 or 3 SSDs in RAID, including Intel's, OCZs, and this Micron (possibly even a Colossus for good measure).

I agree with this latter statement 1000% -- very well said!

MRFS

yyrkoon - Saturday, January 9, 2010 - link

Except that 3Gbit actually equates to 384MB/s theoretical. Take the 8b/10b encoding into consideration and we're talking 300MB/s for data (or 307.2MB/s if my math and understanding of 8b/10b encoding are correct). Then, outside of this, you have the typical protocol/hardware vs. "outside world" issue to contend with. And, not unlike GbE, many other factors come into play, such as data block sizes/conversions, controller bandwidth capabilities (which is probably why Micron chose dual ARM9 microprocessors), and the "controlling" host CPU/operating system's ability to keep up with the flow of data (the spice . . . err data must flow). In other words, operating systems and CPUs, being general purpose, are not going to be 100% optimized for a specific set of hardware/tasks.

So, then the obvious occurs . . . you get what you get.
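For anyone who wants to check the arithmetic in this thread, a minimal Python sketch follows. It assumes SATA's 8b/10b line coding (10 bits on the wire for every data byte) and shows that the 300 vs. 307.2 figures differ only in whether "3Gbit" is read with decimal or 1024-based prefixes. The function name is hypothetical. SATA link rates are specified in decimal units, so 300MB/s and 600MB/s are the figures usually quoted.

```python
def payload_mb_per_s(line_rate_mbit: float) -> float:
    """Payload rate after 8b/10b coding: every data byte costs 10 bits on the wire."""
    return line_rate_mbit / 10


decimal_3g = 3 * 1000   # "3Gbit" read as 3000 Mbit/s
binary_3g = 3 * 1024    # "3Gbit" read as 3072 Mbit/s, the 1024-based reading

print(decimal_3g / 8, payload_mb_per_s(decimal_3g))  # raw MB/s, then 8b/10b payload
print(binary_3g / 8, payload_mb_per_s(binary_3g))
print(payload_mb_per_s(6 * 1000))                    # same sum for a 6Gbps link
```

Dividing by 8 gives the 375 and 384 numbers; dividing by 10 gives the payload ceilings of 300 and 307.2, before any protocol or controller overhead is counted.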

yyrkoon - Saturday, January 9, 2010 - link

And oh, the 6Gbit/SATAIII spec is not all about the drive. You *can* have more than one drive hooked into a single SATA port. "Port multipliers" are an example of possibly running up to 16 (15 plus the controller, not unlike SCSI) devices into one SATA port. Although, the most drives I have personally seen connected to a Port Multiplier is 5. Because the makers of said PM's limit it to that number. Possibly for Max bandwidth considerations, or ease/cost of manufacturing.

supremelaw - Thursday, January 7, 2010 - link

http://www.newegg.com/Product/Product.aspx?Item=N8...

MRFS

supremelaw - Thursday, January 7, 2010 - link

And, as long as the SATA protocol is being adopted here, there is a 10/8 overhead that is the nature of the beast: serial protocols have historically used 1 start bit, 8 data bits and 1 stop bit for every logical byte transmitted.

Thus, even before hardware overheads are considered, SATA 3G really tops out at 240 MB/second of user data (300 x (8/10) = 240). Therefore, SATA 6G will likewise top out at 480 MB/second of user data (600 x .8).

SSDs should take cues from Western Digital's decision to drop all the check bits on each sector, and migrate ASAP to a full "4K sector" with much less data overhead:

"Western Digital’s Advanced Format: The 4K Sector Transition Begins," by Ryan Smith

http://www.anandtech.com/storage/showdoc.aspx?i=36...

See the drawing of the "Advanced Format 4K".

MRFS

supremelaw - Thursday, January 7, 2010 - link

I need to correct myself (again): please forgive me for this:

3G / 10 = 300 MB/sec. of user data (excluding start & stop bits)

6G / 10 = 600 MB/sec. of user data (excluding start & stop bits)

What I started to say (but got sidetracked) is that 3G goes to 375 and 6G goes to 750 MB/sec. of user data withOUT the start and stop bits, and effective bandwidth will increase in proportion to a reduction in extra protocol bits.

(SORRY FOR WRITING BEFORE THINKING :)

The SATA protocol uses 10 bits per byte, whereas ASCII is a 7-bit code with one extra bit that is not defined by ASCII.

MRFS

vol7ron - Thursday, January 7, 2010 - link

Thank you for your response. I also thought that the 4K size was going to change. I may be wrong in that statement, but I thought there was a movement to make it smaller/bigger?

Thanks, volt

supremelaw - Thursday, January 7, 2010 - link

There's a video of their RealSSD C300 at Micron's blog which reported a lot of measurements of "4K IOPs" (4,096-byte input-output operations per second):

http://www.micronblogs.com/2009/12/you-asked-for-i...

That seems to be the new "chunk" standard, if you will. And, it makes total sense, now that WD is moving towards their "Advanced Format" (4K bytes + ~100 ECC bytes per "sector").

That "100" is still a best guess, from what I read: whatever the ECC uses, there is a lot of storage to be gained from this new Advanced Format.

MRFS
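A quick way to relate "4K IOPs" figures to the MB/second numbers quoted elsewhere in this thread is sketched below in Python. The 50,000 IOPS input is a made-up example, not a Micron measurement, and the function name is hypothetical.

```python
IO_SIZE_BYTES = 4096  # one "4K" operation: 4,096 bytes


def iops_to_mb_per_s(iops: float, io_size: int = IO_SIZE_BYTES) -> float:
    """Convert operations per second to decimal megabytes per second."""
    return iops * io_size / 1_000_000


example_iops = 50_000  # illustrative value only
print(f"{example_iops} IOPS at 4K = {iops_to_mb_per_s(example_iops):.1f} MB/s")
```

The same conversion run backwards (MB/s times 1,000,000, divided by 4,096) shows how many 4K operations per second a given interface ceiling could carry at most.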

lensman0419 - Thursday, January 7, 2010 - link

The reason you are seeing the improvement is that a considerable amount of the SATA bandwidth is consumed by the protocol itself. The theoretical useful data bandwidth is around 270 MB/sec. SATA 6Gbps offers up more useful bandwidth for the system.

No comment on the pricing, doesn't make sense to me either :)

supremelaw - Thursday, January 7, 2010 - link

We're watching and still waiting for SATA/6G to be integrated into motherboards.

A few add-on controllers are available, but some suffer from a ceiling of 500 MB/second (read "not true 6G"):

http://www.supremelaw.org/systems/asus/PCIe.x1.Gen...

(see "PCIe x1 Gen2")

Then, there are the Intel and LSI "enterprise-class" RAID controllers that now support 6G ports:

http://www.intel.com/Products/Server/RAID-controll...

http://www.newegg.com/Product/Product.aspx?Item=N8...

Those are pretty steep premiums to get 6G support at the other end of the SATA cable!

We need motherboards with 4 x SATA/6G ports like this GIGABYTE GA-X58A-UD7:

http://www.newegg.com/Product/Product.aspx?Item=N8...

MRFS