Intel Arrandale: 32nm for Notebooks, Core i5 540M Reviewed

by Anand Lal Shimpi on January 4, 2010 12:00 AM EST - Posted in Laptops

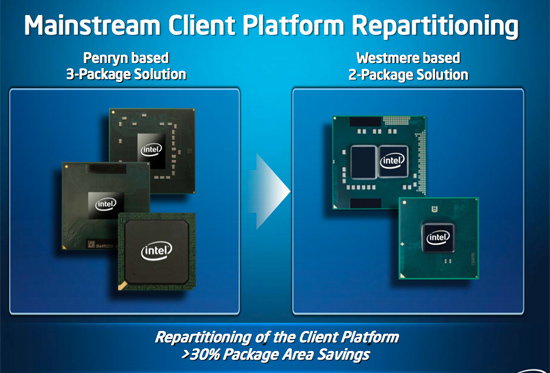

Clarkdale is the desktop processor, but Arrandale is strictly for notebooks. The architecture is the same as Clarkdale's: you've got a 32nm Westmere CPU die and a 45nm chipset die (graphics core and memory controller) on the same package.

The two-chip platform (the CPU package plus the PCH) matters more for notebooks, as it means that motherboards can shrink. Previously this was only available to OEMs who went with NVIDIA's ION platform (or GeForce 9400M, as it was once known). This is the first incarnation of Intel's 32nm process, so it's not quite as power-optimized as we'd like. The first mainstream Arrandale CPUs carry a 35W TDP, compared to the 25W TDP of most thin-and-light notebooks based on mobile Core 2. Granted, the 35W figure includes the graphics, but that won't always translate into lower total power consumption (more on this later).
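The TDP comparison only works at the platform level, since the 25W Core 2 figure excludes the separate north bridge that carried the integrated graphics. A minimal sketch of the break-even arithmetic, using only the 35W and 25W figures above; the chipset TDPs passed in are hypothetical inputs, the south bridge/PCH is ignored, and TDP is a cooling ceiling rather than measured consumption:

```python
# TDP figures from the text; the chipset values used below are hypothetical inputs.
ARRANDALE_PACKAGE_TDP_W = 35.0  # CPU cores + on-package graphics/memory-controller die
CORE2_CPU_TDP_W = 25.0          # mobile Core 2 in a typical thin-and-light notebook


def break_even_chipset_tdp(package_w: float, cpu_only_w: float) -> float:
    """Separate chipset/IGP TDP at which both platforms have the same combined ceiling."""
    return package_w - cpu_only_w


def arrandale_has_lower_ceiling(chipset_w: float) -> bool:
    """True if 35W is below the Core 2 CPU TDP plus a (hypothetical) chipset TDP."""
    return ARRANDALE_PACKAGE_TDP_W < CORE2_CPU_TDP_W + chipset_w


if __name__ == "__main__":
    print(break_even_chipset_tdp(ARRANDALE_PACKAGE_TDP_W, CORE2_CPU_TDP_W))
    for chipset_w in (8.0, 12.0):  # hypothetical chipset TDPs, not published figures
        print(chipset_w, arrandale_has_lower_ceiling(chipset_w))
```

Even when Arrandale's ceiling comes out lower, TDP says nothing about average draw at light load, which is what dominates battery life.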

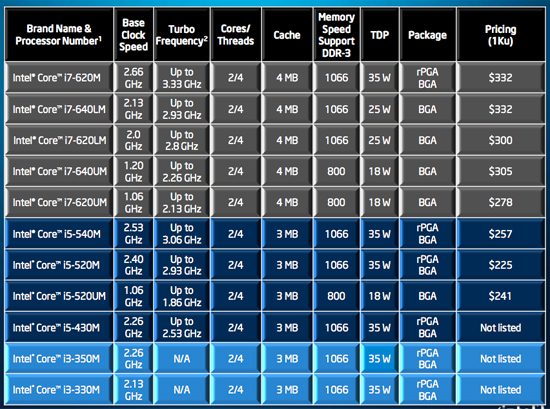

The Arrandale lineup launching today is huge. Intel launched 7 Clarkdale CPUs, but we've got 11 mobile Arrandale CPUs coming out today.

The architecture is similar to Clarkdale's. You get private 256KB L2s (one per core) and a unified L3 cache shared by both cores. The L3 is only 3MB (like the Pentium G6950) on the Core i5 and Core i3 processors, but it's 4MB (like the desktop Core i5/i3) on the mobile Core i7. Confused yet? I have to admit, Intel somehow took a potentially simple naming scheme and made it unnecessarily complex. We also get some low-voltage parts with 18W TDPs. They run at low default clock speeds but can turbo up pretty high.
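The brand-to-L3 mapping is the confusing part, so a small lookup table built only from the sizes given above (mobile parts only; every Arrandale is dual-core) makes it explicit. This is an illustrative sketch, not an Intel data source:

```python
# Per-core L2 and shared L3 sizes for mobile Arrandale, as described in the text.
CORES = 2
L2_PER_CORE_KB = 256
L3_BY_BRAND_KB = {"Core i7": 4 * 1024, "Core i5": 3 * 1024, "Core i3": 3 * 1024}


def cache_summary(brand: str) -> dict:
    """Return total L2, L3 and raw on-die cache (KB) for a mobile Arrandale brand."""
    l2_total = CORES * L2_PER_CORE_KB
    l3 = L3_BY_BRAND_KB[brand]
    # The L3 is inclusive of the L2s, so this raw sum overstates unique capacity.
    return {"l2_total_kb": l2_total, "l3_kb": l3, "raw_total_kb": l2_total + l3}


if __name__ == "__main__":
    for brand in L3_BY_BRAND_KB:
        print(brand, cache_summary(brand))
```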

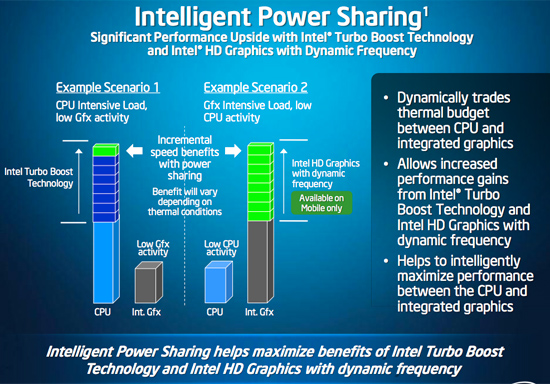

Turbo is hugely important here. While Turbo isn't especially useful on Clarkdale, mobile TDPs are low enough that you can really ramp up clock speed if you aren't limited by cooling. Presumably this will allow ultra-high-performance plugged-in modes where your CPU (and fans) can ramp up as high as possible to get great performance out of your notebook. Add an SSD and the gap between a desktop and a notebook gets even smaller.
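To see why headroom under the TDP turns into clock speed, here is a toy model that raises the CPU multiplier one base-clock step at a time while a modelled power stays under the TDP. Arrandale's base clock (BCLK) is 133MHz, but the cubic power model, the multipliers and the wattages in the usage lines are assumptions for illustration; Intel's real Turbo Boost logic relies on on-die power, current and temperature monitoring, not this formula.

```python
BCLK_MHZ = 133.33  # base clock; turbo steps the multiplier in units of this


def modelled_power_w(mult: int, base_mult: int, base_power_w: float) -> float:
    """Crude model: dynamic power ~ f * V^2 with V assumed proportional to f, i.e. ~ f^3."""
    return base_power_w * (mult / base_mult) ** 3


def max_turbo_multiplier(base_mult: int, max_mult: int,
                         base_power_w: float, tdp_w: float) -> int:
    """Highest multiplier up to max_mult whose modelled power fits within tdp_w."""
    best = base_mult
    for mult in range(base_mult + 1, max_mult + 1):
        if modelled_power_w(mult, base_mult, base_power_w) > tdp_w:
            break
        best = mult
    return best


def freq_mhz(mult: int) -> float:
    """Core clock for a given multiplier."""
    return mult * BCLK_MHZ


if __name__ == "__main__":
    # Hypothetical numbers: base multiplier 19, turbo cap 23, 35W TDP.
    for load_w in (15.0, 20.0, 35.0):
        m = max_turbo_multiplier(19, 23, load_w, 35.0)
        print(f"{load_w:>4} W at base -> x{m} = {freq_mhz(m):.0f} MHz")
```

The lighter the load at the base clock, the more turbo bins fit under the same budget; a part already at its TDP gets none.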

Arrandale does have one trick that Clarkdale does not: graphics turbo.

GPU-bound applications (e.g. games) can force the CPU part of Arrandale into a low-power state, and the GPU can use the added thermal headroom to increase its clock speed. This is a mobile-only feature, but it's the start of what will ultimately be the answer to achieving a balanced system. Just gotta get those Larrabee cores on-die...
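A sketch of the shared-budget idea behind graphics turbo: when the CPU cores drop into a low-power state, the power they free up is spent on GPU clock. The linear GPU power model, the clock range and the wattages are hypothetical, not Arrandale specifications.

```python
def gpu_turbo_clock_mhz(package_tdp_w: float, cpu_power_w: float,
                        gpu_base_mhz: float, gpu_max_mhz: float,
                        gpu_power_at_max_w: float) -> float:
    """Toy shared package budget: the GPU gets whatever power the CPU leaves.

    Assumes GPU power scales linearly with clock (gpu_power_at_max_w at gpu_max_mhz)
    and that the GPU is never clocked below its base frequency.
    """
    headroom_w = max(package_tdp_w - cpu_power_w, 0.0)
    affordable_mhz = gpu_max_mhz * headroom_w / gpu_power_at_max_w
    return max(gpu_base_mhz, min(gpu_max_mhz, affordable_mhz))


if __name__ == "__main__":
    # Hypothetical figures: 35W package, GPU 500-800MHz drawing 16W at 800MHz.
    for cpu_w in (25.0, 22.0, 10.0):
        print(cpu_w, gpu_turbo_clock_mhz(35.0, cpu_w, 500.0, 800.0, 16.0))
```

With the CPU busy the GPU stays at its base clock; as the CPU backs off, the GPU climbs until it hits its own cap.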

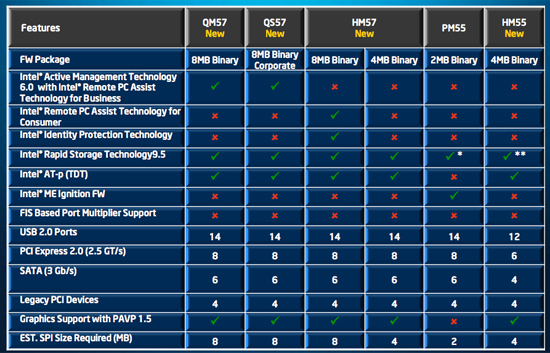

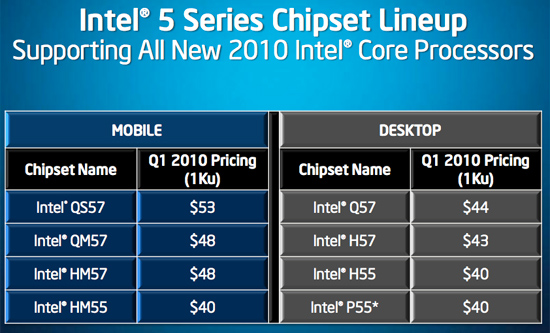

Chipsets are even more complicated on the mobile side.

38 Comments


bsoft16384 - Monday, January 4, 2010

The biggest problem with Intel graphics isn't performance, it's image quality. Intel's GPUs don't support AA, and their AF implementation is basically useless. Add in the fact that Intel recently added a 'texture blurring' feature to their drivers to improve performance (which is, I believe, on by default), and you end up with quite a different experience compared with a Radeon 4200 or GeForce 9400M based solution, even if the performance is nominally similar.

Also, I've noticed that Intel graphics do considerably better in benchmarks than they do in the real world. The Intel GMA X4500MHD in my CULV-based Acer 1410 scores around 650 in 3DMark06, which is about 50% "faster" than my friend's 3-year-old GeForce 6150-based AMD Turion notebook. But get in-game, with some particle effects going, and the Intel pisses all over the floor (~3-4fps) while the GeForce 6150 still manages to chug along at 15fps or so.
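The numbers in this comment make the benchmark-versus-game gap concrete. A small sketch of the arithmetic, using only the figures quoted above (3.5fps is an assumed midpoint of the 3-4fps range):

```python
def implied_baseline_score(score: float, percent_faster: float) -> float:
    """Score of the slower part, given that `score` is `percent_faster` % higher."""
    return score / (1.0 + percent_faster / 100.0)


def frame_time_ms(fps: float) -> float:
    """Milliseconds spent on each frame at a given frame rate."""
    return 1000.0 / fps


if __name__ == "__main__":
    # Implied 3DMark06 score of the GeForce 6150 notebook.
    print(round(implied_baseline_score(650, 50)))
    gma_fps, geforce_fps = 3.5, 15.0  # assumed midpoint of "3-4fps", and "15fps or so"
    # In-game advantage of the part that loses the synthetic benchmark.
    print(round(geforce_fps / gma_fps, 1))
    print(round(frame_time_ms(gma_fps)), round(frame_time_ms(geforce_fps)))
```

So a part that trails by a third in the synthetic test ends up roughly four times faster in the game, with frame times of under 70ms instead of nearly 300ms.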

bobsmith1492 - Monday, January 4, 2010

That is, Intel's integrated graphics are so slow that even if they offered AA/AF, they would be too slow to actually use them. The same goes for low-end Nvidia integrated graphics.

bsoft16384 - Tuesday, January 5, 2010

Not true for NV/AMD. WoW, for example, runs fine with AA/AF on a GeForce 9400, and it runs decently with AF on the Radeon 3200 too. Remember that 20fps is actually pretty playable in WoW with the hardware cursor enabled (the cursor stays smooth even when the game itself is running at 20fps).
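Whether AA is usable on an integrated GPU comes down largely to memory bandwidth, which an IGP shares with the CPU. A back-of-the-envelope sketch of framebuffer traffic, assuming uncompressed 4-byte color plus 4-byte depth per sample, a hypothetical overdraw factor, and one read plus one write per sample touched; texture fetches, caching and the compression schemes newer GPUs use are all ignored, so only the scaling with sample count is meaningful:

```python
def framebuffer_traffic_gb_s(width: int, height: int, fps: float, samples: int = 1,
                             bytes_per_sample: int = 8, overdraw: float = 2.0,
                             rw_factor: float = 2.0) -> float:
    """Rough color+depth traffic in GB/s for multisampled rendering (no compression)."""
    bytes_per_frame = width * height * samples * bytes_per_sample * overdraw * rw_factor
    return bytes_per_frame * fps / 1e9


if __name__ == "__main__":
    for samples in (1, 2, 4):
        gbs = framebuffer_traffic_gb_s(1280, 800, 30, samples=samples)
        print(f"{samples}x: {gbs:.2f} GB/s")
```

Without compression, every extra sample adds the same traffic again, which is why 2xAA can roughly double the load on a memory bus the CPU is also using.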

bobsmith1492 - Monday, January 4, 2010

Do you really think you can actually use AA/AF on an integrated Intel video processor? I don't believe your point is relevant.

MonkeyPaw - Monday, January 4, 2010

Yes, since AA and AF can really help the appearance of older titles. Some of us don't expect an IGP to run Crysis.

JarredWalton - Monday, January 4, 2010

The problem is that AA is really memory-intensive, even on older titles. Basically, it can double the bandwidth requirements, and since you're already sharing bandwidth with the CPU, it's a severe bottleneck. I've never seen an IGP run 2xAA at a reasonable frame rate.

bsoft16384 - Tuesday, January 5, 2010

Newer AMD/NV GPUs have a lot of bandwidth-saving features, so AA is pretty reasonable in many less demanding titles (e.g. CS:S or WoW) on the HD 4200 or GeForce 9400.

bsoft16384 - Tuesday, January 5, 2010

And, FYI, yes, I've tried both. I had a MacBook Pro (13") briefly, and while I ultimately decided that the graphics performance wasn't quite good enough (compared with, say, my old T61 with a Quadro NVS 140M), it was still night and day compared with the GMA X4500.

The bottom line in my experience is that the GMA has worse output quality (particularly texture filtering) and that it absolutely dies with particle effects or lots of geometry.

WoW is not at all a shader-heavy game, but it can be surprisingly geometry and texture heavy for low-end cards in dense scenes. The Radeon 4200 is "only" about 2x as fast as the GMA X4500 in most benchmarks, but if you go load up demanding environments in WoW you'll notice that the GMA is 4 or 5 times slower. Worse, the GMA X4500 doesn't really get any faster when you lower the resolution or quality settings.

Maybe the new generation GMA solves these issues, but my general suspicion is that it's still not up-to-par with the GeForce 9400 or Radeon 4200 in worst-case performance or image quality, which is what I really care about.
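A GPU that barely speeds up at lower resolution is showing the classic sign of a bottleneck that doesn't scale with pixel count (geometry/vertex work, or the driver and CPU). A simplified model of that behavior, with hypothetical per-pixel and per-vertex costs and the assumption that the slowest stage sets the frame time:

```python
def bottleneck_frame_time_ms(pixels: int, ns_per_pixel: float,
                             vertices: int, ns_per_vertex: float) -> float:
    """Frame time when pixel and geometry work overlap and the slower stage dominates."""
    pixel_ms = pixels * ns_per_pixel / 1e6
    geometry_ms = vertices * ns_per_vertex / 1e6
    return max(pixel_ms, geometry_ms)


if __name__ == "__main__":
    dense_scene_vertices = 2_000_000  # hypothetical dense scene
    for w, h in ((1280, 800), (800, 600)):
        # Same hypothetical pixel cost; slow vs fast hypothetical geometry cost.
        slow_geometry = bottleneck_frame_time_ms(w * h, 20.0, dense_scene_vertices, 100.0)
        fast_geometry = bottleneck_frame_time_ms(w * h, 20.0, dense_scene_vertices, 2.0)
        print(f"{w}x{h}: slow geometry {slow_geometry:.1f} ms, "
              f"fast geometry {fast_geometry:.1f} ms")
```

In the geometry-bound case the frame time stays pinned at the geometry cost whatever the resolution, while the GPU with cheap geometry gets roughly twice as fast when the pixel count halves.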

JarredWalton - Tuesday, January 5, 2010

Well, that's the rub, isn't it: the GMA 4500MHD is not the same as the HD Graphics in the new Arrandale CPUs. We don't know everything that has changed, but performance alone shows a huge difference. We went from 10 shader units to 12, and performance at times more than doubled. Is it driver optimizations, or is it better hardware? I'm inclined to think it's probably some of both, and when I get some Arrandale laptops to test I'll be sure to run more games on them. :-)

dagamer34 - Monday, January 4, 2010

Sounds like while performance increased, battery life was just "meh". However, does the increased performance factor in the Turbo Boost that Arrandale can perform, or was the clock speed locked at the same rate as the Core 2 Duo? And what about how battery life is affected by boosting performance with Turbo Boost? I guess we'll have to wait for production models for more definitive answers (I'm basically waiting for the next-gen 13.3" MacBook Pro to replace my late-2006 MacBook Pro).
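Whether Turbo Boost helps or hurts battery life depends on whether finishing sooner (and idling longer) saves more energy than the higher power draw costs. A minimal energy-over-a-fixed-window sketch; every wattage and duration below is hypothetical:

```python
def energy_j(active_w: float, active_s: float, idle_w: float, window_s: float) -> float:
    """Energy over a fixed window: run the task at active_w, then idle for the rest."""
    if active_s > window_s:
        raise ValueError("task must finish within the window")
    return active_w * active_s + idle_w * (window_s - active_s)


if __name__ == "__main__":
    window, idle = 10.0, 5.0  # hypothetical 10 s window, 5 W idle platform
    base = energy_j(20.0, 10.0, idle, window)          # task takes the whole window
    turbo_costly = energy_j(28.0, 8.0, idle, window)   # done in 8 s, much more power
    turbo_cheap = energy_j(22.0, 8.0, idle, window)    # done in 8 s, little extra power
    print(base, turbo_costly, turbo_cheap)
```

Which case applies depends on the real power curve of the chip, which only measurements on shipping notebooks can settle.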