OCZ's Vertex 2 Pro Preview: The Fastest MLC SSD We've Ever Tested

by Anand Lal Shimpi on December 31, 2009 12:00 AM EST - Posted in Storage

Enter the SandForce

OCZ actually announced its SandForce partnership in November. The companies first met over the summer, and after giggling at the controller maker’s name the two decided to work together.

Use the SandForce

Now this isn’t strictly an OCZ thing, far from it. SandForce has inked deals with some pretty big players in the enterprise SSD market. The public ones are clear: A-DATA, OCZ and Unigen have all announced that they’ll be building SandForce drives. I suspected that Seagate may be using SandForce as the basis for its Pulsar drives back when I was first briefed on the SSDs. I won’t be able to confirm for sure until early next year, but based on some of the preliminary performance and reliability data I’m guessing that SandForce is a much bigger player in the market than its small list of public partners would suggest.

SandForce isn’t an SSD manufacturer, rather it’s a controller maker. SandForce produces two controllers: the SF-1200 and SF-1500. The SF-1200 is the client controller, while the SF-1500 is designed for the enterprise market. Both support MLC flash, while the SF-1500 also supports SLC. SandForce’s claim to fame is that, thanks to its controllers’ extremely low write amplification, MLC enabled drives can be used in enterprise environments (more on this later).

Both the SF-1200 and SF-1500 use a Tensilica DC_570T CPU core. As SandForce is quick to point out, the CPU honestly doesn’t matter - it’s everything around it that determines the performance of the SSD. The same is true for Intel’s SSD: Intel licenses the CPU core for the X25-M from a third party, and it’s everything else that makes the drive so impressive.

SandForce also exclusively develops the firmware for the controllers. There’s a reference design that SandForce can supply, but it’s up to its partners to buy flash, lay out the PCBs and ultimately build and test the SSDs.

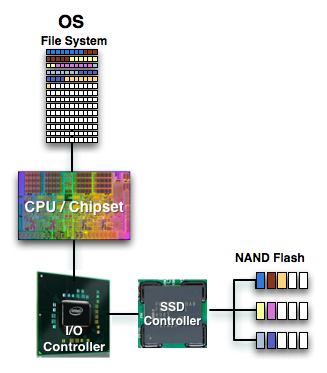

Page Mapping with a Twist

We talked about LBA mapping techniques in The SSD Relapse. LBAs (logical block addresses) are used by the OS to tell your HDD/SSD where data is located in a linear, easy to look up fashion. The SSD is in charge of mapping the specific LBAs to locations in Flash. Block level mapping is the easiest to do, requires very little memory to track, and delivers great sequential performance but sucks hard at random access. Page level mapping is a lot more difficult, requires more memory but delivers great sequential and random access performance.
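To put rough numbers on that trade-off, here is a minimal sketch comparing the two schemes. The geometry (4KB pages, 128 pages per block), the 4-byte table entry and the 100GiB capacity are illustrative assumptions, not figures from SandForce, Intel or Indilinx, and the small-write model is deliberately simplified: it assumes the mapped unit is the smallest piece of flash the controller can relocate.

```python
# Assumed, illustrative flash geometry (not taken from any specific drive).
PAGE_SIZE = 4 * 1024                      # 4KB page
PAGES_PER_BLOCK = 128                     # pages in one erase block
BLOCK_SIZE = PAGE_SIZE * PAGES_PER_BLOCK  # 512KB block


def table_bytes(capacity_bytes: int, granularity_bytes: int, entry_bytes: int = 4) -> int:
    """Approximate mapping-table size: one fixed-size entry per mapped unit."""
    return (capacity_bytes // granularity_bytes) * entry_bytes


def flash_bytes_for_write(write_bytes: int, granularity_bytes: int) -> int:
    """Bytes rewritten if every touched mapping unit must be rewritten whole."""
    units = -(-write_bytes // granularity_bytes)  # ceiling division
    return units * granularity_bytes


capacity = 100 * 1024**3  # hypothetical 100GiB drive

block_table = table_bytes(capacity, BLOCK_SIZE)
page_table = table_bytes(capacity, PAGE_SIZE)
print(f"block-level table: {block_table / 1024:.0f} KiB")
print(f"page-level table:  {page_table / 1024**2:.0f} MiB")

# A single 4KB random write under each scheme.
print("block-level rewrite:", flash_bytes_for_write(4096, BLOCK_SIZE), "bytes")
print("page-level rewrite: ", flash_bytes_for_write(4096, PAGE_SIZE), "bytes")
```

Under these assumptions the page-level table is 128 times larger than the block-level one, which is why page mapping wants more memory, while a 4KB random write touches 128 times less flash, which is why it handles random access so much better.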

Intel and Indilinx use page level mapping. Intel uses an external DRAM to cache page mapping tables and block history, while Indilinx uses it to do all of that plus cache user data.

SandForce’s controller implements a page level mapping scheme, but forgoes the use of an external DRAM. SandForce believes that it’s not necessary because their controllers simply write less to the flash.
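"Writing less" is usually expressed as write amplification: the amount of data physically written to flash divided by the amount the host asked to write. The sketch below applies that ratio to the figure quoted in the comments on this article (25GB of installs resulting in 11GB of writes). The function name is a hypothetical helper, and the calculation says nothing about how the controller actually achieves the reduction.

```python
def write_amplification(flash_gb_written: float, host_gb_written: float) -> float:
    """Ratio of data written to flash to data the host requested be written."""
    if host_gb_written <= 0:
        raise ValueError("host_gb_written must be positive")
    return flash_gb_written / host_gb_written


host_gb = 25.0   # what the OS believes it wrote (Windows 7 + Office install)
flash_gb = 11.0  # what reportedly reached the flash

wa = write_amplification(flash_gb, host_gb)
print(f"write amplification: {wa:.2f}")
```

A result below 1.0 means less data reached the flash than the host sent.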

100 Comments

Wwhat - Wednesday, January 6, 2010

You make a good point, and Anand seems to deliberately deflect thinking about it, now you must wonder why. Anyway don't be disheartened, your point is good regardless of this support of 'magic' that Anand seems to prefer over an intellectual approach.

Shining Arcanine - Thursday, December 31, 2009

As far as I can tell from Anand's description of the technology, it seems that this is being done transparently to the operating system, so while the operating system thinks that 25GB have been written, the SSD knows that it only wrote 11GB. Think of it as having two balance sheets, one that other people see that has nice figures and the other that you see which has the real figures, sort of like what Enron did, except instead of showing the better figures to everyone else when the actual figures are worse, you show the worse figures to everyone else when the actual figures are better.

Anand Lal Shimpi - Thursday, December 31, 2009

Data compression, deduplication, etc... are all apparently picked and used on the fly. SandForce says it's not any one algorithm but a combination of optimizations.

Take care,

Anand

AbRASiON - Friday, January 1, 2010

What about data reliability? Compressed data can normally be a bit of an issue to recover - any thoughts?

Jenoin - Thursday, December 31, 2009

Could you please post the actual disk capacity used for the Windows 7 and Office install? The "size" vs "size on disk" of all the folders/files on the drive (listed by Windows in the properties context tab) would be interesting, to see what level of compression there is.

Thanks

Anand Lal Shimpi - Thursday, December 31, 2009

Reported capacity does not change. You don't physically get more space with DuraWrite, you just avoid wasting flash erase cycles.

The only way to see that 25GB of installs results in 11GB of writes is to query the controller or flash memory directly. To the end user, it looks like you just wrote 25GB of data to the drive.

Take care,

Anand

notty22 - Thursday, December 31, 2009

It would be nice for the customer if OCZ did not produce multiple models with varying degrees of quality, whether it's the controller or memory, or a combination thereof.

Go to Newegg, glance at the OCZ 60 gig SSDs, and you're greeted with this:

OCZ Agility Series OCZSSD2-1AGT60G

OCZ Core Series V2 OCZSSD2-2C60G

OCZ Vertex Series OCZSSD2-1VTX60G

OCZ Vertex OCZSSD2-1VTXA60G

OCZ Vertex Turbo OCZSSD2-1VTXT60G

OCZ Vertex EX OCZSSD2-1VTXEX60G

OCZ Solid Series OCZSSD2-1SLD60G

OCZ Summit OCZSSD2-1SUM60G

OCZ Agility EX Series OCZSSD2-1AGTEX60G

219.00 - 409.00

Low to high the way I listed them.

I can understand when some say they will wait until the manufacturers work out all the various bugs/negatives that must be inherent in all these model/name changes.

Which model gets future technical upgrades/support?

jpiszcz - Thursday, December 31, 2009

I agree with you on that one. What we need is an SSD that beats the X25-E; so far, there is none.

BTW-- is anyone here running X25-E on enterprise servers with > 100GB/day? If so, what kind of failure rates are seen?

Lonyo - Thursday, December 31, 2009

I like the idea. Given the current state of the market, their product is pretty suitable when it comes to end user patterns.

SSDs are just too expensive for mass storage, so traditional large capacity mechanical drives make more sense for your film or TV or music collection (all of which are likely to be compressed), while all the non-compressed stuff goes on your SSD for fast access.

It's got sound thinking behind it for a performance drive, although in the long run I'm not so sure the approach would always be particularly useful in a consumer oriented drive.

dagamer34 - Thursday, December 31, 2009

At least for now, consumer-oriented drives aren't where the money is. Until you get 160GB drives down to $100, most consumers will call SSDs too expensive for laptop use.

The nice thing about desktops though is multiple slots. 80GB is all most people need to install an OS, a few programs, and games. Media should be stored on a separate platter-based drive anyway (or even a centralized server).