OCZ's Vertex 2 Pro Preview: The Fastest MLC SSD We've Ever Tested

by Anand Lal Shimpi on December 31, 2009 12:00 AM EST - Posted in Storage

Controlling Costs with no DRAM and Cheaper Flash

SandForce is a chip company. They don’t make flash, they don’t make PCBs and they definitely don’t make SSDs. As such, they want the bulk of the BOM (Bill Of Materials) cost in an SSD to go to their controllers. By writing less to the flash, there’s less data to track and smaller tables to manage on the fly. The end result is that SF promises its partners they don’t need any external DRAM alongside the SF-1200 or SF-1500. That helps justify why SandForce’s controllers cost more than those from a company like Indilinx.

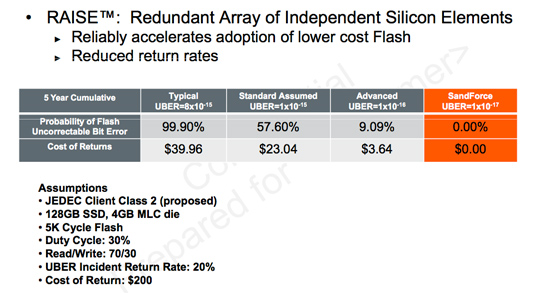

By writing less to flash SandForce also believes its controllers allow SSD makers to use lower grade flash. Most MLC NAND flash on the market today is built for USB sticks or CF/SD cards. These applications have very minimal write cycle requirements. Toss some of this flash into an SSD and you’ll eventually start losing data.

Intel and other top tier SSD makers tackle this issue by using only the highest grade NAND available on the market. They take it seriously because most users don’t back up, and losing your primary drive, especially when it’s supposed to be the more reliable kind of storage, can be catastrophic.

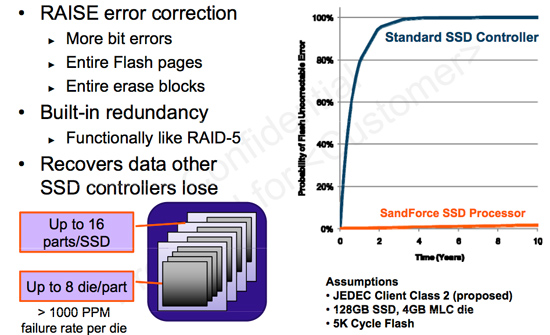

SandForce attempts to internalize the problem in hardware, again driving up the cost/value of its controller. Because the controller simply writes less to the flash, a whole new category of cheaper MLC NAND flash can be used. In order to preserve data integrity the controller writes some redundant data to the flash. SandForce calls it similar to RAID-5, although the controller doesn’t generate parity data for every bit written. Instead there’s some element of redundancy, the extent of which SF isn’t interested in delving into at this point. The redundant data is striped across all of the flash in the SSD. SandForce believes it can correct errors as large as an entire block.
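SandForce hasn’t disclosed how its redundancy actually works, so the following is only a sketch of the textbook RAID-5 idea it is being compared to: XOR parity computed across a stripe of blocks, which lets any single lost block be rebuilt from the survivors. The function names and the tiny 4-byte "blocks" are made up for illustration and are not SandForce’s design.

```python
from functools import reduce

def xor_blocks(blocks):
    """XOR equal-length byte blocks together, one byte position at a time."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))

def make_parity(data_blocks):
    """Parity block for a stripe: the XOR of all data blocks (RAID-5 style)."""
    return xor_blocks(data_blocks)

def recover(surviving_blocks, parity):
    """Rebuild the single missing block from the survivors plus parity."""
    return xor_blocks(list(surviving_blocks) + [parity])

# Hypothetical stripe spread across three flash devices.
stripe = [b"AAAA", b"BBBB", b"CCCC"]
parity = make_parity(stripe)

# Pretend the block on the second device went bad.
rebuilt = recover([stripe[0], stripe[2]], parity)
print(rebuilt == stripe[1])
```

Full parity like this costs one extra block per stripe. SandForce says it doesn’t generate parity for every bit written, so whatever it does is presumably cheaper than this.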

There’s ECC and CRC support in the controller as well. The controller has the ability to return correct data even if it comes back with errors from the flash. Presumably it can also mark those flash locations as bad and remember not to use them in the future.
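As a rough illustration of the detection half of that, here is a minimal sketch using the CRC-32 from Python’s standard `zlib` module. The page contents and helper names are assumptions for the example, and nothing here reflects which CRC or ECC code the controller really uses. A CRC only tells you that data came back wrong; correcting it is the job of ECC or the redundant data described above.

```python
import zlib

def store(payload: bytes):
    """Return the payload together with its CRC-32, as if written to flash."""
    return payload, zlib.crc32(payload)

def is_intact(payload: bytes, checksum: int) -> bool:
    """Recompute the CRC on read and compare it with the stored value."""
    return zlib.crc32(payload) == checksum

page, crc = store(b"user data page")

# Flip a single bit to simulate a read error from the flash.
corrupted = bytes([page[0] ^ 0x01]) + page[1:]

print(is_intact(page, crc))       # clean read passes the check
print(is_intact(corrupted, crc))  # single-bit error is detected
```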

I can’t help but believe the ability to recover corrupt data, DuraWrite technology and AES-128 encryption are somehow related. If SandForce is storing some sort of hash of the majority of data on the SSD, it’s probably not too difficult to duplicate that data, and it’s probably not all that difficult to encrypt it either. By doing the DuraWrite work up front, SandForce probably gets the rest for free (or close to it).
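To make that speculation concrete, here is a toy sketch of a store that hashes each chunk, keeps only one compressed copy of identical chunks, and counts how many bytes would actually reach the flash. This is a guess layered on a guess: the class, the use of SHA-256 and zlib, and the 4KB chunk size are all assumptions for illustration, not anything SandForce has confirmed about DuraWrite.

```python
import hashlib
import zlib

class DedupStore:
    """Toy content-addressed store: identical chunks are written only once."""

    def __init__(self):
        self.chunks = {}              # digest -> compressed chunk ("flash")
        self.lba_map = {}             # logical block address -> digest
        self.flash_bytes_written = 0  # bytes that actually hit the flash

    def write(self, lba: int, data: bytes) -> None:
        digest = hashlib.sha256(data).digest()
        if digest not in self.chunks:
            compressed = zlib.compress(data)
            self.chunks[digest] = compressed
            self.flash_bytes_written += len(compressed)
        # A duplicate chunk only costs a mapping-table update.
        self.lba_map[lba] = digest

    def read(self, lba: int) -> bytes:
        return zlib.decompress(self.chunks[self.lba_map[lba]])

store = DedupStore()
chunk = b"\x00" * 4096       # highly compressible 4KB chunk
store.write(0, chunk)
store.write(1, chunk)        # same contents, different address

print(store.flash_bytes_written < len(chunk) * 2)
print(store.read(1) == chunk)
```

The same per-chunk digest that enables deduplication can double as an integrity check on read, which is the kind of overlap the paragraph above is guessing at.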

100 Comments

blowfish - Friday, January 1, 2010

80GB? You really need that much? I'm not sure how much space current games take up, but you'd hope that if they shared the same engine, you could have several games installed in significantly less space than the sum of their separate installs. On my XP machines, my OS plus programs partitions are all less than 10GB, so I reckon 40GB is the sweet spot for me and it would be nice to see fast drives of that capacity at a reasonable price. At least some laptop makers recognise the need for two drive slots. Using a single large SSD for everything, including data, seems like extravagant overkill.

Gasaraki88 - Monday, January 4, 2010

Just as an FYI, Conan takes 30GB. That's one game. Most new games are around 6GB. WoW takes like 13GB. 80GB runs out real fast.

DOOMHAMMADOOM - Friday, January 1, 2010

I wouldn't go below 160 GB for an SSD. The games in just my Steam folder alone go to 170 GB total. Games are big these days. The thought of putting Windows and a few programs and games onto an 80GB hard drive is not something I would want to do.

Swivelguy2 - Thursday, December 31, 2009

This is very interesting. Putting more processing power closer to the data is what has improved the performance of these SSDs over current offerings. That makes me wonder: what if we used the bigger, faster CPU on the other side of the SATA cable to similarly compress data before storing it on an X25-M? Could that possibly increase the effective capacity of the drive while addressing the X25-M's major shortcoming in sequential write speed? Also, compressing/decompressing on the CPU instead of in the drive sends less through SATA, relieving the effects of the 3 Gb/s ceiling.

Also, could doing processing on the data (on either end of SATA) add more latency to retrieving a single file? From the random r/w performance, apparently not, but would a simple HDTune show an increase in access time, or might it be apparent in the "seat of the pants" experience?

Happy new year, everyone!

jacobdrj - Friday, January 1, 2010

The race to the true 'Isolinear Chip' from Star Trek is afoot...

Fox5 - Thursday, December 31, 2009

This really does look like something that should have been solved with smarter file systems, and not smarter controllers, imo (though some would disagree).

Reiser4 does support gzip compression of the file system though, and it's a big win for performance. I don't know if NTFS's compression is too. I know in the past it had a negative impact, but I don't see why it wouldn't perform better if there was more CPU performance.

blagishnessosity - Thursday, December 31, 2009

I've wondered this myself. It would be an interesting experiment. There are http://en.wikipedia.org/wiki/Comparison...systems#... (NTFS, Btrfs, ZFS and Reiser4). In Windows, I suppose this could be tested by just right clicking all your files and checking "compress" and then running your benchmarks as usual. In Linux, this would be interesting to test with btrfs's SSD mode paired with a low-overhead I/O scheduler like noop or deadline.

What interests me the most though is SSD performance on a http://en.wikipedia.org/wiki/Log-structured_file_s... as they theoretically should never have random reads or writes. In the Linux realm, there are several log-based filesystems (JFFS2, UBIFS, LogFS, NILFS2) though none seem to perform ideally in real world usage. Hopefully that'll change in the future :-)

blagishnessosity - Thursday, December 31, 2009

Correction: There are several filesystems that support transparent compression (NTFS, Btrfs, ZFS and Reiser4): http://en.wikipedia.org/wiki/Comparison...systems#...

What interests me the most though is SSD performance on a log-based filesystem (http://en.wikipedia.org/wiki/Log-structured_file_s...) as they theoretically should never have random reads or writes.

(note to web admin: the comment WYSIWYG does not appear to work for me)

themelon - Thursday, December 31, 2009

Note that ZFS now also has native DeDupe support as of build 128: http://blogs.sun.com/bonwick/en_US/entry/zfs_dedup

grover3606 - Saturday, November 13, 2010

Is the used-state performance measured with TRIM enabled?