Intel Atom D510: Pine Trail Boosts Performance, Cuts Power

by Anand Lal Shimpi on December 21, 2009 12:01 AM EST - Posted in CPUs

Intel announced the Atom processor in 2008. That same year we were introduced to the first two members of the family: Diamondville and Silverthorne. The chips were both called Atom, but they differed in their application. Diamondville was used in desktops, nettops and netbooks, while Silverthorne was almost exclusively for MIDs (Mobile Internet Devices).

Atom continues its split personality. Silverthorne begets Moorestown, the next-generation Atom for MIDs and smartphones. Diamondville, on the other hand, leads us to Pine Trail - the next-generation Atom for desktops, nettops and netbooks.

Pine Trail is the platform codename. Pineview is the codename for the new Atom CPU.

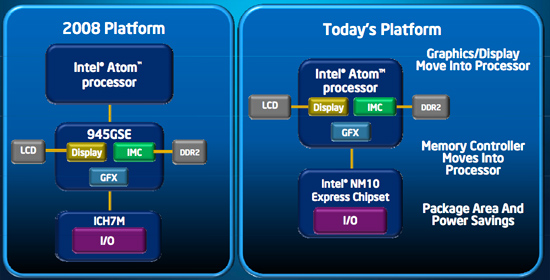

Pineview takes the same 45nm Atom architecture introduced in 2008 and integrates a memory controller, DMI link and GMA 3150 graphics core.

Integrating the memory controller is extremely important for Atom because it remains an in-order architecture. Atom has little ability to reorder instructions on the fly. When it encounters a load that has to go out to memory, the pipeline stalls until the request completes. Yet despite Atom's sensitivity to memory latency, most synthetic tests showed only a minimal improvement in memory latency with Pineview. The real-world performance benefit is also smaller than expected, though still tangible, and for whatever reason it doesn't show up in any synthetic memory latency test. More on this shortly.
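To make the in-order penalty concrete, here is a toy model rather than Intel data. It assumes that every load missing the caches stalls the whole pipeline for the full memory latency, so average cycles per instruction grow with miss rate times miss latency. The function names and every number below (base CPI, miss rate, latencies) are hypothetical and were chosen only for illustration.

```python
"""Toy model of memory-latency sensitivity in an in-order core.

All inputs are hypothetical, illustrative values, not Atom measurements.
"""


def effective_cpi(base_cpi: float, misses_per_instr: float,
                  miss_latency_cycles: float) -> float:
    """Average cycles per instruction when every cache miss stalls the pipeline.

    base_cpi: cycles per instruction if all memory accesses hit in cache
    misses_per_instr: average number of loads per instruction that go to DRAM
    miss_latency_cycles: cycles the pipeline waits for each such load
    """
    # An in-order core cannot run independent instructions past the stalled
    # load, so the full miss latency is added to execution time.
    return base_cpi + misses_per_instr * miss_latency_cycles


def speedup_from_lower_latency(base_cpi: float, misses_per_instr: float,
                               old_latency: float, new_latency: float) -> float:
    """Speedup at a fixed clock speed when only memory latency changes."""
    old_cpi = effective_cpi(base_cpi, misses_per_instr, old_latency)
    new_cpi = effective_cpi(base_cpi, misses_per_instr, new_latency)
    return old_cpi / new_cpi


if __name__ == "__main__":
    # Hypothetical example: base CPI of 1.0, one DRAM miss per 100
    # instructions, and the miss penalty cut from 200 to 150 cycles.
    speedup = speedup_from_lower_latency(1.0, 0.01, 200, 150)
    print(f"speedup: {speedup:.2f}x")  # prints "speedup: 1.20x"
```

The stall term scales with the miss rate, so the model also suggests why any gain varies by workload. Code that rarely misses the caches sees almost no benefit from lower latency.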

Two Versions of the New Atom

The chips being announced today are the Intel Atom N450, Atom D410 and D510. The N450 is the lower-power netbook version of the new Atom and is a single-core processor. Intel says that only single-core Atom processors will be offered in netbooks. That limitation may be lifted at some point in the future, but not any time soon. Intel seems intent on keeping netbooks from performing too well, or even from being any less miserable than they already are with a single-core Atom.

The D410 and D510 are for desktops and nettops. They are single and dual core versions of the new processor, respectively.

All three chips run at 1.66GHz. They differ only in core count, L2 cache size, TDP, supported memory speed and maximum memory capacity. The table below lists the details:

| Processor | Clock Speed | Cores / Threads | L2 Cache | Memory | TDP |
|---|---|---|---|---|---|
| Intel Atom D510 | 1.66GHz | 2 / 4 | 1MB | DDR2-800 (4GB max) | 13W |
| Intel Atom D410 | 1.66GHz | 1 / 2 | 512KB | DDR2-800 (4GB max) | 10W |
| Intel Atom N450 | 1.66GHz | 1 / 2 | 512KB | DDR2-667 (2GB max) | 5.5W |

The netbook version of Pineview supports at most DDR2-667 and, according to Intel's datasheet, a maximum of 2GB of memory. Its 5.5W TDP is roughly half that of the desktop/nettop versions (10W for the D410, 13W for the D510).
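The table's figures make it easy to check the TDP and memory comparisons with a little arithmetic. The sketch below computes theoretical peak bandwidth assuming a single 64-bit DDR2 channel, which moves 8 bytes per transfer. That channel configuration is an assumption, not something the text states, and the helper names are hypothetical.

```python
"""Arithmetic on the Pineview spec table (values copied from the table)."""

CHIPS = {
    "D510": {"tdp_w": 13.0, "ddr2_mts": 800},
    "D410": {"tdp_w": 10.0, "ddr2_mts": 800},
    "N450": {"tdp_w": 5.5, "ddr2_mts": 667},
}

# Assumption: one 64-bit DDR2 channel, i.e. 8 bytes moved per transfer.
BYTES_PER_TRANSFER = 8


def tdp_ratio(chip: str, reference: str) -> float:
    """TDP of `chip` as a fraction of the TDP of `reference`."""
    return CHIPS[chip]["tdp_w"] / CHIPS[reference]["tdp_w"]


def peak_bandwidth_gbs(mega_transfers_per_s: int) -> float:
    """Theoretical peak bandwidth in GB/s (decimal) for the assumed channel."""
    # MT/s * bytes per transfer = MB/s; divide by 1000 for GB/s.
    return mega_transfers_per_s * BYTES_PER_TRANSFER / 1000


if __name__ == "__main__":
    for ref in ("D410", "D510"):
        print(f"N450 TDP vs {ref}: {tdp_ratio('N450', ref):.0%}")
    for name, spec in CHIPS.items():
        print(f"{name}: ~{peak_bandwidth_gbs(spec['ddr2_mts']):.1f} GB/s peak")
```

By this arithmetic, the N450's 5.5W is 55% of the single-core D410's 10W and about 42% of the dual-core D510's 13W. Dropping from DDR2-800 to DDR2-667 lowers theoretical peak bandwidth from 6.4GB/s to roughly 5.3GB/s under the single-channel assumption.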

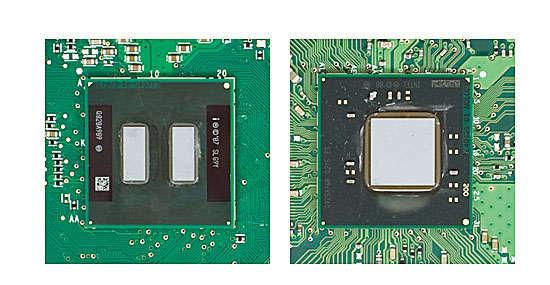

The first dual-core Atom processors were just two single-core Atom dies on a single package. The dual-core Pineview is a single, monolithic die because both cores have to share the same memory controller.

Dual-core Atom "Diamondville" (left) vs. Dual-core Atom "Pineview" (right)

41 Comments


Shadowmaster625 - Monday, December 21, 2009

Why doesn't AMD just take one of their upcoming mobile 880 series northbridges and add a memory controller and a single Athlon core? It would be faster than Atom, more efficient than Ion, and could be binned for low power. Instead they just stand there with their thumbs up their butts while Intel shovels this garbage onto millions of unsuspecting consumers at even higher profit margins.

JarredWalton - Monday, December 21, 2009

The problem is that even single-core Athlons are not particularly power friendly. I'm sure they could get 5-6 hours of battery life if they tried hard, but Atom can get twice that.

Hector1 - Monday, December 21, 2009

Do you want some whine with that? Where were you when chipsets were created by taking a bunch of smaller ICs on the motherboard and putting them all together into one IC? PCs became cheaper and faster. We thought it was great. Do you know anything about L2 cache? It used to be separate on the motherboard as well until it was integrated into the CPU. PCs became cheaper and faster and we thought it was great. Remember when CPUs were solo? They became Duo & Quad, making PCs faster and dropping price/performance. AMD & Intel integrated the memory controller and, whoa!, guess what? Faster & lower price/performance and, yes, we thought it was great. It's called Moore's Law, and it's all been part of the semiconductor revolution that has been going on since the '60s. GPUs are no different. They're still logic gates made out of transistors, and with new 32nm technology, then 22nm and 16nm, the graphics logic will be integrated as well. Seriously, what did you think would happen?

TETRONG - Monday, December 21, 2009

Do you understand that Moore's Law is not a force of nature? Intel has artificially handicapped the low-voltage sector in order to force consumers to purchase Pentiums. Right where they wanted you all along.

Since when is it ok for Intel to dictate what type of systems are created with processors?

First it was the 1GB of RAM limitation, now you can't have a dual-core. When does it end?

"We have a mediocre CPU, combined with a below-average GPU; according to our amortization schedule you could very well have it in the year 2013 (after the holidays of course), by which time we should have our paws all over the video encoding and browsing standards, which we'll be sure to make as taxing as possible. Official release of USB 3.0 will be right up in a jiff!"

Voldenuit - Monday, December 21, 2009

The historical examples you cite are not analogous, because intel bundling their anemic GPUs onto the package makes performance *worse*, and bundling the two dies onto a single package (they're not on the same chip, either, so there is no hard physical limitation) makes competing IGPs more expensive, since you now have to pay for a useless intel IGP as well as a 3rd party one if you were going to buy an IGP system.

And just because a past course of action was embraced by the market does not mean it was not anti-competitive.

bnolsen - Saturday, December 26, 2009

Performance is worse?? As far as I can see, the bridge requires no heat sink and the CPU can be cooled passively. Power use went way down. For this platform, that is improved performance.

My current Atom netbooks do fine playing Flash on their screens and just fine playing 720p H.264 MKV files.

If you want a bunch of power use and heat, just skip the ion platform and go with a core2 based system.

Hector1 - Monday, December 21, 2009

You need to re-read the tech articles. Pineview does integrate both the graphics and memory controller into the CPU. It's the ICH that remains separate. Even if it didn't, what do you think will happen when this goes to 32nm, 22nm and 16nm? As for performance, Anand says in the title "Pine Trail Boosts Performance, Cuts Power" so that's good enough for me.

Intel obviously created the Atom for a low cost, low power platform and they're delivering. It'll continue to be fine-tuned with more integration to lower costs. The market obviously wants it. SOC is coming too (System On a Chip) for even lower costs. Not the place for high performance graphics, I think.

This is really about Moore's Law marching on. It's driven down prices, increased performances and lowered power more than anything else on the planet. Without it, we'd still be paying the $5000 I paid for my 1st PC in 1980 -- an Apple II Plus. What you're saying, whether you know it or not, is that we should stop advancing processes and stop Moore's Law. Personally, I'd like to see us not stop at 45nm and keep going.

kaoken - Monday, December 21, 2009

I agree that progress should be made, but bundling an intel cpu and IGP into a chip is anti-competitive. I wouldn't mind though if there were an intel cpu and ati/nvidia on a chip.

JonnyDough - Tuesday, December 22, 2009

Hector is right in one respect, and that is that if Intel is going to be dumb, we don't have to purchase their products. I especially like the sarcastic cynicism in the article when mentioning all the things that Intel's chip CAN'T do. They just don't know how to make a GPU without patent infringement. If they can't compete, they'll try using their big market share to hurt competition. Classic Intel move. They never did care about innovation, only about market share and money. But I guess that's what happens when you're a mega corp with lots of stockholder expectations and pressure. I'll give my three cheers to the underdogs!

overvolting - Monday, December 21, 2009

Hear, hear!