The Radeon HD 5970: Completing AMD's Takeover of the High End GPU Market

by Ryan Smith on November 18, 2009 12:00 AM EST

Posted in: GPUs

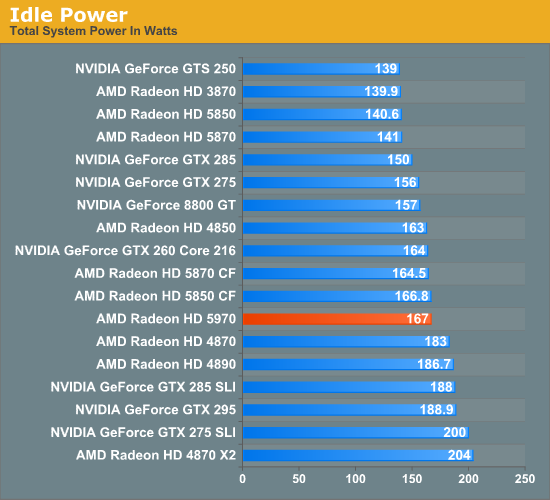

Thanks to AMD’s aggressive power optimizations, the idle power of the 5970 is rated for 42W. In practice this puts it within spitting distance of the 5800 series in Crossfire, and below a number of other cards including the GTX 295, 4870X2, and even the 4870 itself.

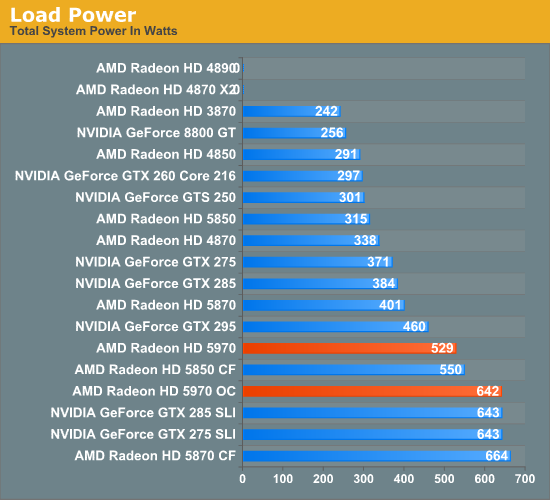

Once we start looking at load power, we find our interesting story. Remember that the 5970 is specifically built and binned in order to meet the 300W cap. As a result it offers 5850CF performance, but at 21W lower power usage, and the gap only increases as you move up the chart with more powerful cards in SLI/CF mode. The converse of this is that it flirts with the cap more than our GTX 295, and as a result comes in 69W higher. But since we’re using OCCT, any driver throttling needs to be taken into consideration.

Looking at the 5970 when it’s overclocked, it becomes readily apparent why a good power supply is necessary. For that 15% increase in core speed and 20% increase in memory speed, we pay a penalty of 113W! This puts it in league with the GTX series in SLI, and the 5870CF, except that it’s drawing all of this power over half as many plugs. We’re only going to say this one more time: if you’re going to overclock the 5970, you must have a very good power supply.
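To put the 300W cap and the 113W overclocking penalty in perspective, here is a minimal Python sketch of the arithmetic. The connector limits (75W from the slot, 75W per 6-pin plug, 150W per 8-pin plug) are the PCI Express specification figures. The layout of one 6-pin plus one 8-pin plug is an assumption about the 5970, since it is not stated above, and the function names and the `efficiency` parameter are purely illustrative. If the 113W was measured at the wall, the 12V figure is an upper bound, because the power supply's losses are included in it.

```python
# PCI Express power-delivery limits, in watts.
PCIE_SLOT_W = 75
SIX_PIN_W = 75
EIGHT_PIN_W = 150


def board_power_budget(six_pin: int, eight_pin: int) -> int:
    """In-spec power budget for a card with the given number of plugs."""
    return PCIE_SLOT_W + six_pin * SIX_PIN_W + eight_pin * EIGHT_PIN_W


def extra_12v_amps(extra_watts: float, efficiency: float = 1.0,
                   rail_volts: float = 12.0) -> float:
    """Extra 12V current for a given increase in power draw.

    `efficiency` converts a wall-side figure to a DC-side one;
    1.0 treats the whole increase as DC load (an upper bound).
    """
    return extra_watts * efficiency / rail_volts


# Assumption: the 5970 uses one 6-pin and one 8-pin plug.
budget_w = board_power_budget(six_pin=1, eight_pin=1)

# The overclocking penalty quoted in the text above.
overclock_penalty_w = 113
extra_amps = extra_12v_amps(overclock_penalty_w)

print(f"In-spec budget: {budget_w}W")
print(f"Extra 12V current when overclocked: up to {extra_amps:.1f}A")
```

Under these assumptions the plugs add up to exactly the 300W cap, so the extra draw from overclocking goes beyond what they are rated to deliver, and the power supply has to provide up to roughly 9.4A more on the 12V side.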

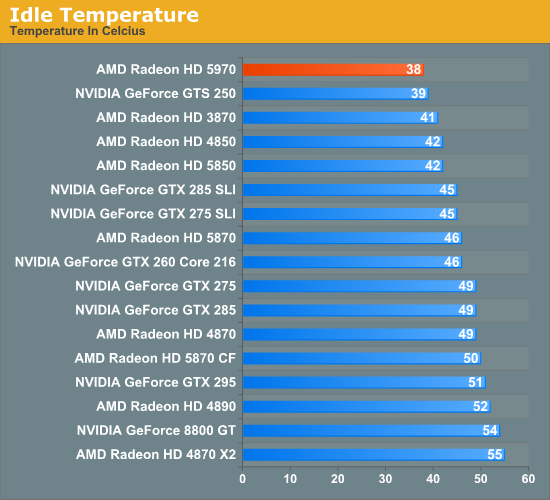

Moving on, the vapor chamber cooler makes itself felt in our temperature testing. The 5970 is the coolest high-end card we’ve tested (yes, you’ve read that right), coming in at 38C, below even the GTS 250. This is in stark opposition to previous dual-GPU cards, which have inhabited the top of the chart. Even the 5850 isn’t quite as cool as a 5970 at idle.

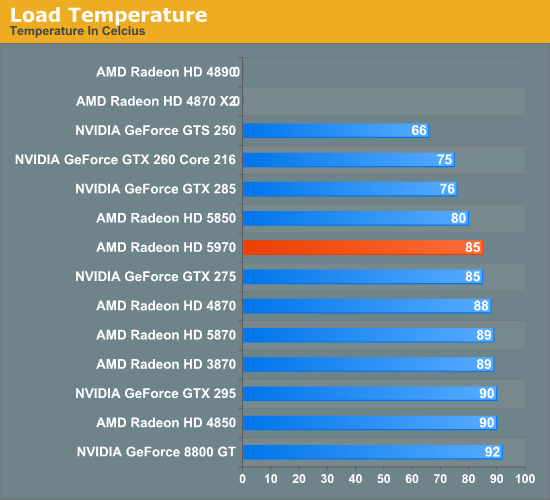

At load, we see a similar but slightly different story. It’s no longer the coolest card, losing out to the likes of the 5850 and GTX 285, but at 85C it hangs with the GTX 275, and below other single and dual-GPU cards such as the 5870 and GTX 295. This is a combination of the vapor cooler, and the fact that AMD slapped an oversized cooler on this card for overclocking purposes. Although Anand’s card failed at OCCT when overclocked, my own card hit 93C here, so assume that this cool advantage erodes under overclocking.

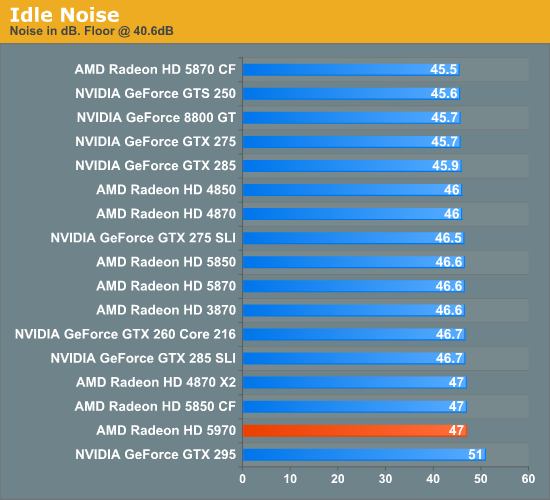

Finally we have our look at noise. Realistically, every card runs up against the noise floor, and the 5970 is no different. At 38C idle, it can keep its fan at very low speeds.

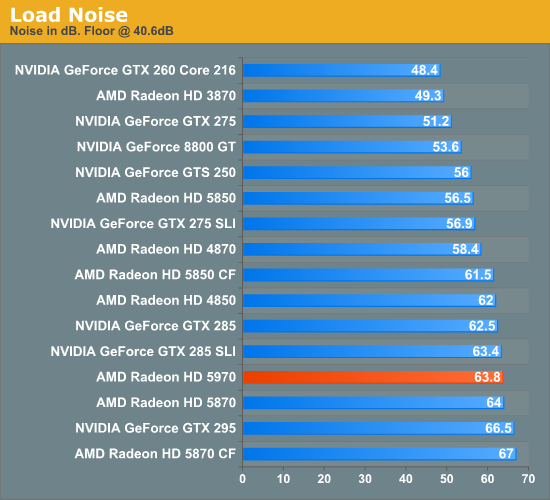

It’s at load that we find another interesting story. At 63.8dB it’s plenty loud, but it’s still quieter than either the GTX 295 or 5870CF, the former of which it is significantly faster than. Given the power numbers we saw earlier, we had been expecting something that registered as louder, so this was a pleasant surprise.

We will add that on a subjective basis, AMD seems to have done something to keep the whine down. The GTX 295 (and 4870X2) aren’t just loud, but they have a slight whine to them; the 5970 does not. This means that it’s not just a bit quieter to sound meters, but it really comes across that way to human ears too. But by the same token, I would consider the 5850CF to be quieter still, more so than 2dB would imply.
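For a sense of what a 2dB gap would normally imply, the decibel scale can be converted to ratios. The sketch below is illustrative only: the function names are made up for this example, and the perceived-loudness conversion uses the common rule of thumb that a 10dB increase sounds about twice as loud, which is an approximation and not a measurement from our testing.

```python
def db_to_power_ratio(delta_db: float) -> float:
    """Ratio of sound power for a level difference in decibels."""
    return 10 ** (delta_db / 10)


def db_to_loudness_ratio(delta_db: float) -> float:
    """Approximate perceived-loudness ratio.

    Rule of thumb (an assumption): +10 dB sounds about twice as loud.
    """
    return 2 ** (delta_db / 10)


gap_db = 2.0  # the 2dB difference mentioned in the text above
print(f"Sound power ratio: {db_to_power_ratio(gap_db):.2f}x")
print(f"Approximate loudness ratio: {db_to_loudness_ratio(gap_db):.2f}x")
```

By this rule of thumb a 2dB gap is a fairly small difference in perceived loudness, which is why a card that sounds clearly quieter than its meter reading suggests is worth calling out.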

114 Comments

SJD - Wednesday, November 18, 2009

Thanks Anand,

That kind of explains it, but I'm still confused about the whole thing. If your third monitor supported mini-DP then you wouldn't need an active adapter, right? Why is this, when mini-DP and regular DP are the 'same' apart from the actual plug size? I thought the whole timing issue was only relevant when wanting a third 'DVI' (/HDMI) output from the card.

Simon

CrystalBay - Wednesday, November 18, 2009

WTH is really up at TWSC?

Jacerie - Wednesday, November 18, 2009

All the single game tests are great and all, but for once I would love to see AT run a series of video card tests where multiple instances of games like EVE Online are running. While single instance tests are great for the FPS crowd, all us crazy high-end MMO players need some love too.

Makaveli - Wednesday, November 18, 2009

Jacerie, the problem with benching MMOs, and why you don't see more of them, is all the other factors that come into play. You now have to deal with server latency, and you also have no control over how many players are in the server at any given time when running benchmarks. There are just too many variables, which would make the benchmarks neither repeatable nor valid for comparison purposes!

mesiah - Thursday, November 19, 2009

I think more what he is interested in is how well the card can render multiple instances of the game running at once. This could easily be done with a private server or even a demo written with the game engine. It would not be real world data, but it would give an idea of performance scaling when multiple instances of a game are running. Being an occasional "dual boxer" myself, I wouldn't mind seeing the data.

Jacerie - Thursday, November 19, 2009

That's exactly what I was trying to get at. It's not uncommon for me to be running at least two instances of EVE with an entire assortment of other apps in the background. My current 3870X2 does the job just fine, but with 7 out and DX11 around the corner I'd like to know how much money I'm going to need to stash away to keep the same level of usability I have now with the newer cards.

Zool - Wednesday, November 18, 2009

It is only so fast because 95% of the games are DX9 Xbox ports. Crysis has still been the most demanding game out there for quite some time (it needs to be added that it has a very lazy engine). In Age of Conan, going from DX9 to DX10 drops the fps by more than half (with plenty of those effects on screen, even 1/3). Those advanced shader effects that they are showing in demos are actually much more demanding on the GPU than the DX9 shaders. It's just that they don't mention it. It will be the same with DX11. A full DX11 game with all those fancy shaders will be on the level of Crysis.

crazzyeddie - Wednesday, November 18, 2009

... after their first 40nm test chips came back as being less impressive than **there** 55nm and 65nm test chips were.

silverblue - Wednesday, November 18, 2009

Hehe, I saw that one too.

frozentundra123456 - Wednesday, November 18, 2009

Unfortunately, since playing MW2, my question is: are there enough games that are sufficiently superior on the PC to justify the initial expense and power usage of this card? Maybe that's where Eyefinity for AMD and PhysX for nVidia come in: they at least differentiate the PC experience from the console.

I hate to say it, but to me there just do not seem to be enough games optimized for the PC to justify the price and power usage of this card, unless one has money to burn.