NVIDIA's Bumpy Ride: A Q4 2009 Update

by Anand Lal Shimpi on October 14, 2009 12:00 AM EST - Posted in GPUs


There’s a lot to talk about with regards to NVIDIA and no time for a long intro, so let’s get right to it.

At the end of our Radeon HD 5850 Review we included this update:

“Update: We went window shopping again this afternoon to see if there were any GTX 285 price changes. There weren't. In fact GTX 285 supply seems pretty low; MWave, ZipZoomFly, and Newegg only have a few models in stock. We asked NVIDIA about this, but all they had to say was "demand remains strong". Given the timing, we're still suspicious that something may be afoot.”

Less than a week later, there were stories everywhere about NVIDIA’s GT200b shortages. Fudo said that NVIDIA was unwilling to drop prices low enough to make the cards competitive. Charlie said that NVIDIA was going to abandon the high-end and upper mid-range graphics card markets completely.

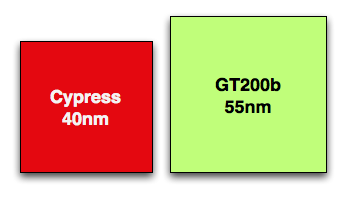

Let’s look at what we do know. GT200b has around 1.4 billion transistors and is made at TSMC on a 55nm process. Wikipedia lists the die at 470mm^2, roughly 80% the size of the original 65nm GT200 die. In either case, it’s a lot bigger and more expensive to make than Cypress’ 334mm^2 40nm die.

Cypress vs. GT200b die sizes to scale

NVIDIA could get into a price war with AMD, but given that both companies make their chips at the same foundry (TSMC) and NVIDIA’s costs are higher, it’s not a war that makes sense to fight.
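To put rough numbers on the cost argument above, here is a sketch of how many candidate dies of each size fit on a single wafer. It uses the common gross-dies-per-wafer approximation; the 300mm wafer size and the helper name `dies_per_wafer` are our assumptions, not anything NVIDIA or TSMC has disclosed, and the model ignores defects entirely.

```python
import math


def dies_per_wafer(die_area_mm2: float, wafer_diameter_mm: float = 300.0) -> int:
    """Hypothetical helper: gross dies per wafer (no yield, scribe or edge-exclusion losses)."""
    radius = wafer_diameter_mm / 2
    wafer_area = math.pi * radius ** 2
    # Subtract the partial dies lost around the circular edge of the wafer.
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return math.floor(wafer_area / die_area_mm2 - edge_loss)


if __name__ == "__main__":
    # Die areas quoted in the article, in mm^2.
    gt200b = 470.0
    cypress = 334.0
    print(f"GT200b is {gt200b / cypress:.2f}x the area of Cypress")
    print(f"GT200b:  ~{dies_per_wafer(gt200b)} dies per 300mm wafer")
    print(f"Cypress: ~{dies_per_wafer(cypress)} dies per 300mm wafer")
```

Under these assumptions GT200b gets roughly 119 dies per wafer to Cypress' 175. This is only a first-order comparison: 55nm and 40nm wafers are not priced the same, and defect yield (which hits larger dies harder, and which TSMC's 40nm issues affected at the time) is left out.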

NVIDIA told me two things. The first was that they have told some OEMs that they will no longer be making GT200b-based products, which covers everything from the GTX 260 up to the GTX 285. The EOL (end of life) notices went out recently, and NVIDIA has asked OEMs to submit their allocation requests as soon as possible or risk not getting any cards.

The second was that despite the EOL notices, end users should be able to purchase GeForce GTX 260, 275 and 285 cards all the way up through February of next year.

If you look carefully, neither of these statements directly supports or refutes the two articles above. NVIDIA is very clever.

NVIDIA’s explanation to me was that current GPU supplies were decided on months ago, and in light of the economy, the number of chips NVIDIA ordered from TSMC was low. Demand ended up being stronger than expected and thus you can expect supplies to be tight in the remaining months of the year and into 2010.

Board vendors have been telling us that they can’t get allocations from NVIDIA. Some are even wondering whether it makes sense to build more GTX cards for the end of this year.

If you want my opinion, it goes something like this. While RV770 caught NVIDIA off guard, Cypress did not. AMD used the extra area (and then some) allowed by the move to 40nm to double RV770, hardly an unpredictable move. NVIDIA knew they were going to be late with Fermi, knew how competitive Cypress would be, and made a conscious decision to cut back supply months ago rather than enter a price war with AMD.

While NVIDIA won’t publicly admit defeat, AMD clearly won this round. Obviously it makes sense to ramp down the old product in anticipation of Fermi, but I don’t see Fermi with any real availability this year. We may see a launch with performance data in 2009, but I’d expect availability in 2010.

While NVIDIA just launched its first 40nm DX10.1 parts, AMD just launched $120 DX11 cards

Regardless of how you want to phrase it, there will be lower than normal supplies of GT200 cards in the market this quarter. With higher costs than AMD per card and better performance from AMD’s DX11 parts, would you expect things to be any different?

Things Get Better Next Year

NVIDIA launched GT200 on too old a process (65nm) and was consequently late moving to 55nm. Bumpgate happened. Then came the 40nm issues at TSMC and Fermi’s delays. In short, it hasn’t been the best 12 months for NVIDIA. Next year, though, there’s reason to be optimistic.

When Fermi does launch, everything from that point should theoretically be smooth sailing. There aren’t any process transitions in 2010; it’s all about execution at that point and how quickly NVIDIA can get Fermi derivatives out the door. AMD will have virtually its entire product stack out by the time NVIDIA ships Fermi in quantity, but NVIDIA should have competitive products out in 2010. AMD wins the first half of the DX11 race; the second half will be a bit more challenging.

If anything, NVIDIA has proved to be a resilient company. Other than Intel, I don’t know of any company that could’ve recovered from NV30. The real question is how strong Fermi 2 will be. Stumble twice and you’re shaken; do it a third time and you’re likely to fall.

106 Comments


sbuckler - Wednesday, October 14, 2009

Not all doom and gloom: http://www.brightsideofnews.com/news/2009/10/13/nv... Which also puts them in the running for the next Wii, I would have thought?

Zapp Brannigan - Thursday, October 15, 2009

Unlikely. Tegra is basically just an ARM11 processor, allowing full backwards compatibility with the current ARM9 and ARM7 processors in the DS. If Nintendo wants full backwards compatibility in the Wii 2, then they'll have to stick with the current IBM/ATI combo.

papapapapapapapababy - Wednesday, October 14, 2009

1) ati launches crappy cards, 2) anand realizes "crappy cards, we might need nvidia after all" 3) anand does some nvidia damage control. 4) damage control sounds like wishful thinking to me 5) lol "While RV770 caught NVIDIA off guard, Cypress did not". XD

NVIDIA knew they were going to -FAIL- and made a conscious decision to KEEP FAILING? Guys, guys, they were caught off guard AGAIN. It does not matter if they knew it! IT IS STILL A BIG FAIL! THEY KNEW? What kind of nonsense is that? BTW, they could not shrink anything at launch except that OEM garbage... what makes you think that Fermi is going to be any different?

whowantstobepopular - Wednesday, October 14, 2009

"1) ati launches crappy cards, 2) anand realizes "crappy cards, we might need nvidia after all" 3) anand does some nvidia damage control"

ROFL

Maybe Anand wrote this article to lay to rest the last vestiges of SiliconDoc's recent rantings.

Seriously, Anand and team...

You guys do a fine job of thoroughly covering the latest official developments in the enthusiast PC space. You're doing the right thing by sticking to info that's confirmed. Charlie, Fudo and others are covering the rumours just fine, so what we really need is what we get here at Anandtech: Thorough, prompt reviews of tech products that have just released, and interesting commentary on PC market developments and directions (such as the above article).

I like the fact that you add a little bit of your own interpretation into these sorts of commentaries, and at the same time make sure we know what is fact and what is interpretation.

I guess I see it this way: You've been commentating on this IT game for quite a few years now, and the articles show it. There are plenty of references to parallels between current situations and historic ones, and these are both interesting and informative. This is one of many aspects of the articles here at Anandtech that make me (and others, it seems) keep coming back. Your knowledge of the important points in IT history is confidence inspiring when it comes to weighing up the value of your commentaries.

Finally, I have to commend the way that everyone on the Anandtech team appears to read through the comments under their articles. It's rather encouraging when suggestions and corrections for articles are noted and acted upon promptly, even when it involves extra work (re-running benchmarks, creating new graphs etc.). And the touch of humour that comes across in some of the replies (and articles) from the team makes a good comedic interlude during an otherwise somewhat bland day at work.

Keep up the good work Anandtech!

Transisto - Wednesday, October 14, 2009

I like this place. . .

shotage - Wednesday, October 14, 2009

Thumbs up to this post. These are my thoughts and sentiments also. Thank you to all @ Anandtech for excellent reading! Comments included ;)

Shayd - Wednesday, October 14, 2009

Ditto, thanks!

Pastuch - Wednesday, October 14, 2009

Fantastic post. I couldn't have said it better myself.

Scali - Wednesday, October 14, 2009

We'll have to see. nVidia competed just fine against AMD with the G80 and G92. The biggest problem with GT200 was that they went 65 nm rather than 55 nm, but even so, they still held up against AMD's parts because of the performance advantage. G92 especially was hugely successful: incredible performance at a good price. Yes, the chip was larger than a 3870, but who cared?

Don't forget that GT200 is based on a design that is now 3 years old, which is ancient. Just going to GDDR5 will make the chip significantly smaller and less complex, because you only need half the bus width for the same performance.
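The bus-width point in the comment above comes down to simple arithmetic: peak memory bandwidth is the bus width in bytes times the per-pin data rate. The sketch below uses round, illustrative data rates (about 2.5 Gbps per pin for GDDR3 and 4.8 Gbps per pin for GDDR5); these are assumptions chosen for the example, not the specs of any particular card.

```python
def peak_bandwidth_gbs(bus_width_bits: int, gbps_per_pin: float) -> float:
    """Theoretical peak bandwidth of a memory interface, in GB/s.

    bus_width_bits / 8 converts the bus to bytes per transfer per pin-cycle,
    and gbps_per_pin is the effective data rate of each pin.
    """
    return bus_width_bits / 8 * gbps_per_pin


if __name__ == "__main__":
    # Assumed, illustrative per-pin data rates.
    gddr3_wide = peak_bandwidth_gbs(512, 2.5)
    gddr5_narrow = peak_bandwidth_gbs(256, 4.8)
    print(f"512-bit GDDR3 @ 2.5 Gbps/pin: {gddr3_wide:.1f} GB/s")
    print(f"256-bit GDDR5 @ 4.8 Gbps/pin: {gddr5_narrow:.1f} GB/s")
```

With roughly double the per-pin rate, a 256-bit GDDR5 bus lands close to a 512-bit GDDR3 bus, which is why halving the interface (and the pads and memory controller logic that go with it) need not cost much bandwidth.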

Then there's probably tons of other optimizations that nVidia can do to the execution core to make it more compact and/or more efficient.

I saw someone who estimated the number of transistors per shader processor based on the current specs of Fermi, compared to G80/G92/GT200. The result was that they were all around 5.5M transistors per SP, I believe. So that means that effectively nVidia gets the extra flexibility 'for free'.
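A quick sanity check of the per-SP estimate quoted above, assuming the approximate, publicly reported whole-chip transistor counts and shader counts for each GPU. Dividing whole-chip totals by SP count is a crude proxy, since it also counts memory controllers, caches and other fixed-function logic.

```python
# Approximate, publicly reported figures: (transistors in millions, shader processors).
CHIPS = {
    "G80": (681, 128),
    "G92": (754, 128),
    "GT200": (1400, 240),
    "Fermi": (3000, 512),
}


def transistors_per_sp(transistors_m: float, sps: int) -> float:
    """Whole-chip transistor count (millions) divided by the number of SPs."""
    return transistors_m / sps


if __name__ == "__main__":
    for name, (transistors_m, sps) in CHIPS.items():
        print(f"{name:6s} {transistors_per_sp(transistors_m, sps):.2f}M transistors/SP")
```

Under these figures every chip lands between roughly 5.3M and 5.9M transistors per SP, in the same ballpark as the ~5.5M estimate mentioned in the comment.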

Combine that with the fact that 40 nm allows them to reach higher clock speeds and to pack more than twice as many SPs onto a single chip, and that the chip as a whole will probably be more efficient anyway, and it seems very likely that this chip will be a great performer.

And if you have the performance, you dictate the prices. It will then be the salvage parts and the scaled down versions of this architecture that will do the actual competing against AMD's parts, and those nVidia chips will obviously be in a better position to compete on price than the 'full' Fermi.

If Fermi can make the 5870 look like a 3870, nVidia is golden.