NVIDIA’s GeForce GT 220: 40nm and DX10.1 for the Low-End

by Ryan Smith on October 12, 2009 6:00 AM EST - Posted in GPUs

DirectX 10.1 on an NVIDIA GPU?

Easily the most interesting thing about the GT 220 and G 210 is that they mark the introduction of DirectX 10.1 functionality on an NVIDIA GPU. It’s no secret that NVIDIA does not take a particular interest in DX10.1, and in fact even with this they still don’t. But for these new low-end parts, NVIDIA had some special problems: OEMs.

OEMs like spec sheets. They want parts that conform to certain features so that they can in turn use those features to sell the product to consumers. OEMs don’t want to sell a product with “only” DX10.0 support if their rivals are using DX10.1 parts. Which in turn means that at some point NVIDIA would need to add DX10.1 functionality, or risk losing out on lucrative OEM contracts.

This is compounded by the fact that while Fermi has bypassed DX10.1 entirely for the high-end, Fermi’s low-end offspring are still some time away. Meanwhile AMD will be shipping their low-end DX11 parts in the first half of next year.

So why do GT 220 and G 210 have DX10.1 functionality? To satisfy the OEMs, and that’s about it. NVIDIA’s focus is still on DX10 and DX11. DX10.1 functionality was easy to add to the GT200-derived architecture (bear in mind that GT200 already had some DX10.1 functionality), and so it was done for the OEMs. We would add that NVIDIA has also mentioned the desire to not be dinged by reviewers and forum-goers for lacking this feature, but we’re having a hard time buying the idea that NVIDIA cares about either of those nearly as much as they care about what the OEMs think when it comes to this class of parts.

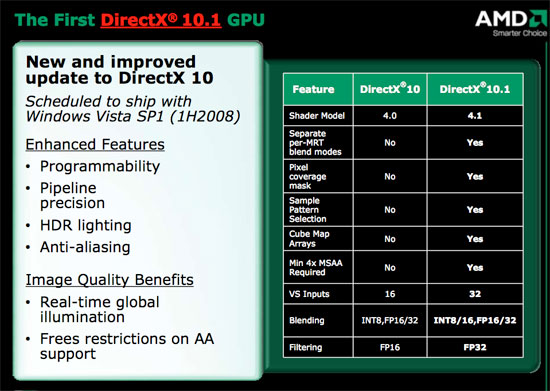

DX10.1 in a nutshell, as seen in our Radeon 3870 Review

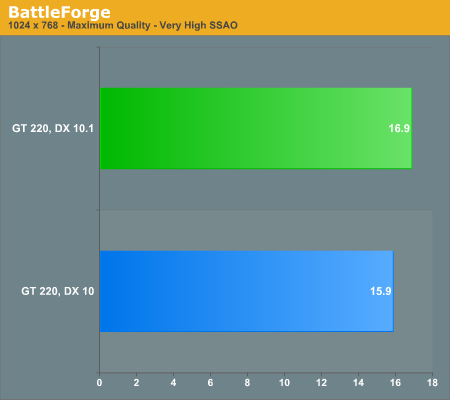

At any rate, while we don’t normally benchmark with DX10.1 functionality enabled, we did so today to make sure DX10.1 was working as it should be. Below are our Battleforge results, using DX10 and DX10.1 with Very High SSAO enabled.

The ultimate proof that DX10.1 is a checkbox feature here is performance. Certainly DX10.1 is a faster way to implement certain effects, but running them in the first place still comes at a significant performance penalty. Hardware of this class is simply too slow to make meaningful use of the DX10.1 content that’s out there at this point.

80 Comments


Ryan Smith - Monday, October 12, 2009 - link

I don't have that information at this moment. However, this is very much the wrong card if you're doing scientific work for performance reasons.

apple3feet - Wednesday, October 14, 2009 - link

Well, as a developer, I just need it to work. Other machines here have TESLAs and GTX280s, but a low end cool running card would be very useful for development machines.

I believe that the answer to my question is that it's 1.2 (i.e. everything except double precision), so no good for me.

jma - Monday, October 12, 2009 - link

Ryan, if you run 'deviceQuery' from the Cuda SDK, it will tell you all there is to know.

Another goodie would be 'bandwidthTest' for those of us who can't figure out the differences between various DDR and GDDR's and what the quoted clocks are supposed to imply ...

vlado08 - Monday, October 12, 2009 - link

My HD 4670 idles at a 165MHz core clock and a 249.8MHz memory clock, with GPU temps of 36-45 degrees (passively cooled), as reported by GPU-Z 0.3.5. Is there a possibility that your card didn't lower its clock during idle?

vlado08 - Monday, October 12, 2009 - link

My question is to Ryan of course.

Ryan Smith - Monday, October 12, 2009 - link

Yes, it was idling correctly.

KaarlisK - Monday, October 12, 2009 - link

The HD4670 cards differ.

I've bought three:

http://www.asus.com/product.aspx?P_ID=Z9qnCFnOUNDM...

http://www.gigabyte.com.tw/Products/VGA/Products_O...

http://www.asus.com/product.aspx?P_ID=g6LDXHUo0EzV...

The first one would idle at 0.9v.

The second would idle at 1.1v. When I edited the BIOS for lower idle voltages, I could not get it to be stable.

The third one turned out to have a much cheaper design - not only did it have slower memory, it had no voltage adjustments and idles at 1.25v (but the correct idle frequency).

vlado08 - Monday, October 12, 2009 - link

Is the GT 220 capable of DXVA decoding of H.264 at High Profile Level 5.1, and of videos with more than 5 reference frames? I ask because the ATI HD4670 can only do High Profile (HiP) Level 4.1 (Blu-ray compatible).

Also what is the deinterlacing on GT 220 if you have monitor and TV in extended mode? Is it vector adaptive deinterlacing?

These questions are important for this video card because it is obviously not for gamers but for HTPCs.

On HD 4670 when you have one monitor then you have vector adaptive deinterlacing but if you have two monitors or monitor and TV and they are in extended mode then you only have "bob" deinterlacing.

I'm not sure if this is a driver bug or a hardware limitation.

Ryan Smith - Monday, October 12, 2009 - link

I have another GT 220 card due this week or early next. Drop me an email; if you have something I can use to test it, I will gladly try it out. I have yet to encounter anything above 4.1 though; it seems largely academic.

MadMan007 - Monday, October 12, 2009 - link

So my inner nerd that just has to know is confused. Are these truly GT200-based or G9x-based? Different sources say different things. In a way the GT200 series was an improvement on G9x anyway but with enough significant low level changes to make it different. The article calls these GT200 *series* but that could be in name only. It's not clear if that means smaller process cut down die GT200-based or added feature G9x-based.

Inquiring nerds want to know!