NVIDIA’s GeForce GT 220: 40nm and DX10.1 for the Low-End

by Ryan Smith on October 12, 2009 6:00 AM EST - Posted in GPUs

There are some things you just don’t talk about in polite company: politics, money, and apparently OEM-only GPUs. Back in July NVIDIA launched their first 40nm GPUs, which were also their first GPUs featuring DX10.1 support: the GeForce GT 220 and G 210. And if you blinked, you probably missed it. As OEM-only parts, these went into the OEM channel without any fanfare or pageantry.

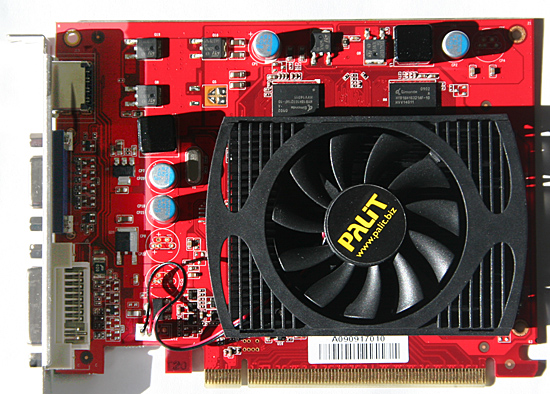

Today that changes. NVIDIA is moving the GT 220 and G 210 from OEM-only sales to retail, which means NVIDIA’s retail vendors can finally get in on the act and begin selling cards. Today we are looking at one of the first of those cards, the Palit GT 220 Sonic Edition.

| Form Factor | 9600GT | 9600GSO | GT 220 (GDDR3) | 9500GT | G 210 (DDR2) |
|---|---|---|---|---|---|
| Stream Processors | 64 | 48 | 48 | 32 | 16 |
| Texture Address / Filtering | 32 / 32 | 24 / 24 | 16 / 16 | 16 / 16 | 16 / 16 |
| ROPs | 16 | 16 | 8 | 8 | 8 |
| Core Clock | 650MHz | 600MHz | 625MHz | 550MHz | 675MHz |
| Shader Clock | 1625MHz | 1500MHz | 1360MHz | 1400MHz | 1450MHz |
| Memory Clock | 900MHz | 900MHz | 900MHz | 400MHz | 400MHz |
| Memory Bus Width | 256-bit | 128-bit | 128-bit | 128-bit | 64-bit |
| Frame Buffer | 512MB | 512MB | 512MB | 512MB | 512MB |
| Transistor Count | 505M | 505M | 486M | 314M | 260M |
| Manufacturing Process | TSMC 55nm | TSMC 55nm | TSMC 40nm | TSMC 55nm | TSMC 40nm |
| Price Point | $69-$85 | $40-$60 | $69-$79 | $45-$60 | $40-$50 |

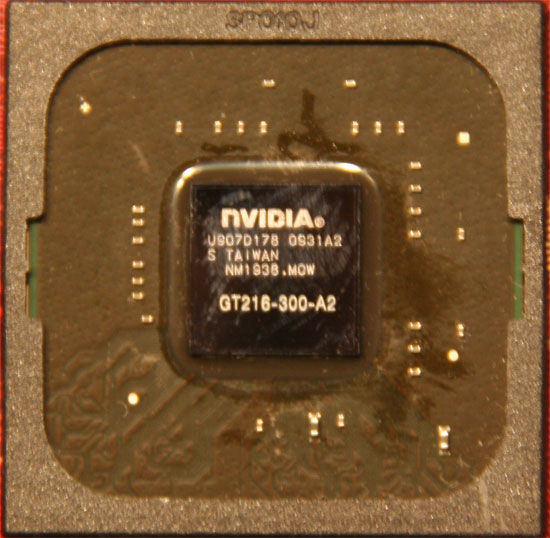

GT 220 and G 210 are based on the GT216 and GT218 cores respectively (anyone confused yet?), which are the first and so far only 40nm members of NVIDIA’s GT200 family. These are specifically designed as low-end cards, with 48 SPs on the GT 220 and 16 SPs on the G 210. The GT 220 is designed to sit between the 9500GT and 9600GT in performance, making its closest competitor the 48SP 9600GSO. Meanwhile the G 210 is the replacement for the 9400GT.

We will see multiple configurations for each card, as NVIDIA handles low-end parts somewhat more loosely than they do the high-end parts. GT 220 will come with DDR2, DDR3, or GDDR3 memory on a 128-bit bus, in 512MB or 1GB capacities. So the memory bandwidth of these cards is going to vary wildly; the DDR2 cards will run at 400MHz and the GDDR3 cards may go as high as 1012MHz according to NVIDIA’s specs. G 210 meanwhile will be DDR2 and DDR3 only, again going up to 1GB. With a 64-bit memory bus, it will have half the memory bandwidth of a GT 220 at the same memory clock.
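To put numbers on how widely the bandwidth varies, here is a minimal sketch of the usual peak-bandwidth arithmetic. It assumes the quoted memory clocks are real clocks with two transfers per clock (double data rate), and the function name `memory_bandwidth_gbs` is ours for illustration, not anything from NVIDIA.

```python
def memory_bandwidth_gbs(memory_clock_mhz, bus_width_bits, transfers_per_clock=2):
    """Peak theoretical memory bandwidth in GB/s (1 GB = 10^9 bytes).

    Assumes the clock is the real memory clock and that the memory
    moves data twice per clock (DDR2, DDR3, and GDDR3 all do).
    """
    bytes_per_transfer = bus_width_bits / 8
    transfers_per_second = memory_clock_mhz * 1e6 * transfers_per_clock
    return transfers_per_second * bytes_per_transfer / 1e9


# Configurations mentioned in the article: (memory clock in MHz, bus width in bits)
configs = {
    "GT 220, DDR2 @ 400MHz, 128-bit": (400, 128),
    "GT 220, GDDR3 @ 900MHz, 128-bit": (900, 128),
    "GT 220, GDDR3 @ 1012MHz, 128-bit": (1012, 128),
    "G 210, DDR2 @ 400MHz, 64-bit": (400, 64),
}

for name, (clock, width) in configs.items():
    print(f"{name}: {memory_bandwidth_gbs(clock, width):.1f} GB/s")
```

Under those assumptions a DDR2 GT 220 works out to about 12.8 GB/s against 28.8 GB/s for the 900MHz GDDR3 version, and a DDR2 G 210 lands at 6.4 GB/s, half of the DDR2 GT 220.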

The GPU configurations will vary too, but not as wildly. NVIDIA’s official specs call for a 625MHz core clock and a 1360MHz shader clock. We’ve seen a number of cards with a higher core clock, and a card with a lower shader clock. Based on the card we have, we’re going to consider 635MHz/1360MHz stock for the GPU, and 900MHz stock for GDDR3, and test accordingly.

The transistor count for the GT218 die comes out to 260M, and for GT216 it’s 486M. NVIDIA would not disclose the die size to us, but having disassembled our GT 220, we estimate it to be around 100mm². One thing we do know for sure is that along with the small die, NVIDIA has managed to knock down power consumption for the GT 220. At load the card will draw 58W; at idle it’s a tiny 7W. We don’t have the power data for the G 210, but it’s undoubtedly lower.

The prices on GT 220 cards are expected to range between $69 and $79, with the cards at the top end being those with the best RAM. This puts GT 220 in competition with AMD’s Radeon HD 4600 series, and NVIDIA’s own 9600GT. The G 210 will have an approximate price of $45, putting it in range of the Radeon HD 4300/4500 series, and NVIDIA’s 9500GT. The GT 220 in particular is in an odd spot: it’s supposed to underperform the equally priced (if not slightly cheaper) 9600GT. Meanwhile the G 210 is supposed to underperform the 9500GT, which is also available for $45. So NVIDIA is already starting off on the wrong foot here.

Finally, availability should not be an issue. These cards have been shipping for months to OEMs, so the only thing that has really changed is that now some of them are going into the retail pool. It’s a hard launch and then some. Not that you’ll see NVIDIA celebrating; while the OEM-only launch was no-key, this launch is only low-key at best. NVIDIA didn’t send out any samples, and it wasn’t until a few days ago that we had the full technical data on these new cards.

We would like to thank Palit for providing us with a GT 220 card for today’s launch, supplying us with their GT 220 Sonic Edition.

80 Comments


Joe90 - Monday, October 12, 2009 - link

Last week I bought a Medion P6620 laptop. It's fitted with an Nvidia 220M graphics card with 512MB of GDDR3 RAM. The blurb on the box said it was a DirectX 10.1 card. Have NV released any details about the laptop 220M as well as the desktop 220? All I could find out from Wikipedia & Notebookcheck.net was that the 220M was a 40nm version of the 9600M GT, but the Anandtech review suggests that things are more complicated than this.

My only contribution to the debate is that I picked up this laptop for a paltry £399. Given that my machine has a T6500 and a 320GB HD, all I can think is that NV has been giving away the 220M to OEMs for pennies!

Finally, has anyone seen any signs of Windows 7 drivers for the 220M? I got a freebie upgrade but I'm a bit scared of using it just in case there were no drivers for a graphics card that NV don't recognise on their own website.

JarredWalton - Monday, October 12, 2009 - link

I'm assuming [these](http://www.nvidia.com/object/notebook_winvista_win...) won't work? I know they don't list 220M, but they're the latest official drivers from NVIDIA; otherwise you're stuck with whatever the notebook manufacturer has delivered. I'd guess NVIDIA will have updated mobile notebook reference drivers relatively soon, though, to coincide with the official Win7 launch.

Joe90 - Tuesday, October 13, 2009 - link

Many thanks for the link. I think you're right. Although this NV driver page refers to the '200 series', it doesn't explicitly mention the 220M card. Until it does, I'll hang back from doing the Windows 7 upgrade.

As it happens, I've only just made the leap from XP to Vista. Despite all the bad stuff I've read, Vista seems ok so I might just stick with it for a while. I'm finding I can play games ok with the Vista/220M combination. I'm currently playing FEAR 2 at 1366 x 768 with all the detail settings on maximum and the game plays fine. Given the low price of the laptop, I'm more than happy with the graphics performance.

JarredWalton - Tuesday, October 13, 2009 - link

I've never had a true hate for Vista, but there are areas I dislike (several clicks just to get to resolution adjustment, for example). Windows 7 is better in pretty much every way as far as I can tell, although it's not like Vista is horrible and Win7 is awesome. It's more like Vista reached a state of being "good enough" and 7 addresses a few remaining flaws.

Now, if someone at MS would fix the glitch where my laptop power settings keep resetting.... (Every few tests, my battery saving options will reset to "default" and turn screen saver, system sleep, etc. on after I explicitly disabled it for testing. Annoying!)

plonk420 - Monday, October 12, 2009 - link

it's AMAZING for this gen's "lowest end" (at retail)... beats the pants off my 9400GT currently in my HTPC. probably even equals or betters my once only 2 generation old nearly high end HD3850.

this is going in my HTPC in a heartbeat when it hits $30-35 (unless power consumption is stupid high, which i don't think it is. i THINK i read about this somewhere else, and someone was moaning about it using 4 watts more than some ATI part it was being compared to).

ltcommanderdata - Monday, October 12, 2009 - link

Given the more competitive desktop landscape, these new 40nm DX10.1 chips are not impressive at all. However, their mobile derivatives were announced months ago, and in the mobile space where mainstream GPUs seem to be made up of many, many combinations of nVidia's 32SP GPUs, a 40nm 48SP DX10.1 GPU would actually bring something to the table. It'd be great if we can get a review of a notebook with the GT 240M for instance.

JarredWalton - Monday, October 12, 2009 - link

It looks like there are laptops with GT 240M starting at around $1100, and the jump from there to a 9800M card (96SPs, 256-bit RAM) is very small. Unless you can get GT 240M laptops for around $800, I don't see them being a big deal. Other factors could change my mind, though - battery life perhaps, or size/form factor considerations.

apple3feet - Monday, October 12, 2009 - link

For some people, the most important detail of any new NVIDIA card is the CUDA Compute Capability. Will it run my scientific simulation code? Only if the CUDA Compute Capability is 1.3 - so that it supports double precision floating point arithmetic. Could we have just one line somewhere to tell us this vital info?

jasperjones - Monday, October 12, 2009 - link

LOL, for dgemm (double-precision matrix multiplication) the GTX 285 is only about twice as fast as a Core i7 920 (using CUBLAS and Intel MKL, respectively).

This suggests you shouldn't run your double precision code on a GT 220. Hell, even running CUDA code on your CPU using emulation might be faster than running on the GT 220.

apple3feet - Wednesday, October 14, 2009 - link

You're wrong. Given the right problem, tackled using the right algorithm, and well-written code, TESLAs can do 20x the throughput of a Nehalem - even in double precision.