NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

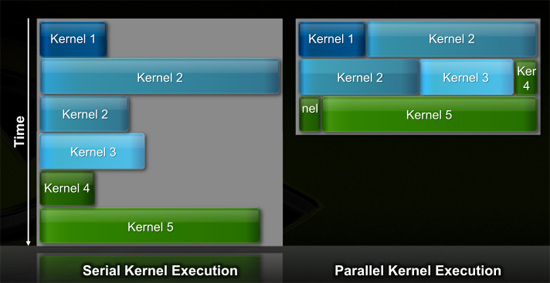

Efficiency Gets Another Boon: Parallel Kernel Support

In GPU programming, a kernel is the function or small program running across the GPU hardware. Kernels are parallel in nature and perform the same task(s) on a very large dataset.
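To make the idea concrete, here is a minimal Python sketch of how a kernel is launched. One function body runs once per thread index across a grid of blocks, and each thread picks its own element of the dataset. The names `saxpy_kernel` and `launch` are hypothetical. The serial loop models only the indexing scheme, not real GPU parallelism.

```python
def saxpy_kernel(block_idx, block_dim, thread_idx, a, x, y, out):
    """Hypothetical kernel body: each thread computes one output element."""
    i = block_idx * block_dim + thread_idx  # global thread index
    if i < len(x):  # guard: the last block may be only partially used
        out[i] = a * x[i] + y[i]


def launch(kernel, grid_dim, block_dim, *args):
    """Serially emulate a launch: run the same body for every (block, thread) pair."""
    for b in range(grid_dim):
        for t in range(block_dim):
            kernel(b, block_dim, t, *args)


n = 10
x = [float(i) for i in range(n)]
y = [1.0] * n
out = [0.0] * n
block_dim = 4
grid_dim = (n + block_dim - 1) // block_dim  # ceil(10 / 4) = 3 blocks
launch(saxpy_kernel, grid_dim, block_dim, 2.0, x, y, out)
print(out)  # [1.0, 3.0, 5.0, 7.0, 9.0, 11.0, 13.0, 15.0, 17.0, 19.0]
```

Every thread executes identical code. Only the index it derives differs, which is what makes a kernel "the same task on a very large dataset."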

Typically, companies like NVIDIA don't disclose their hardware limitations until a developer bumps into one of them. On G80 and GT200, the entire chip could only work on one kernel at a time.

When dealing with graphics, this isn't usually a problem. There are millions of pixels to render, so the problem is wider than the machine. But as you move into more general-purpose computing, not every kernel is going to be wide enough to fill the entire machine. If a single kernel couldn't fill every SM with threads/instructions, the leftover SMs simply sat idle. That's bad.

GT200 (left) vs. Fermi (right)

Fermi, once again, fixes this. Fermi's global dispatch logic can now issue multiple kernels in parallel to the entire system. With Fermi at more than twice the size of GT200, the likelihood of idle SMs would otherwise have gone up tremendously. NVIDIA needs to be able to dispatch multiple kernels in parallel to keep Fermi fed.
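A toy model shows why single-kernel dispatch wastes a wide chip. This sketch makes simplifying assumptions that are not NVIDIA's actual scheduler: every thread block takes one time unit, each SM runs one block at a time, and the kernels are independent. The 16-SM machine and the kernel sizes are illustrative. Under serial dispatch, each narrow kernel's last "wave" leaves SMs idle. Under concurrent dispatch, blocks from different kernels fill those gaps.

```python
import math


def serial_dispatch(kernel_blocks, num_sms):
    """One kernel at a time (the GT200/G80 behavior described above).

    Returns (waves, fraction of SM time slots that did useful work).
    """
    waves = sum(math.ceil(b / num_sms) for b in kernel_blocks)
    return waves, sum(kernel_blocks) / (waves * num_sms)


def concurrent_dispatch(kernel_blocks, num_sms):
    """Blocks from independent kernels share the SMs (Fermi-style dispatch)."""
    waves = math.ceil(sum(kernel_blocks) / num_sms)
    return waves, sum(kernel_blocks) / (waves * num_sms)


# Four narrow kernels of 4 blocks each on a hypothetical 16-SM machine.
kernels = [4, 4, 4, 4]
print(serial_dispatch(kernels, 16))      # (4, 0.25)
print(concurrent_dispatch(kernels, 16))  # (1, 1.0)
```

A wide, graphics-like kernel (say, thousands of blocks) gets nearly the same utilization under both policies. The gap appears only when kernels are narrower than the machine, which is exactly the general-purpose case the article describes.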

Application switch time (moving between graphics and CUDA mode) is also much faster on Fermi. NVIDIA says the transition is now 10x faster than on GT200, and fast enough to be performed multiple times within a single frame. This is very important for implementing more elaborate GPU-accelerated physics (or PhysX, great ;) ).

The connections to the outside world have also been improved. Fermi now supports parallel transfers to/from the CPU. Previously CPU->GPU and GPU->CPU transfers had to happen serially.
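The benefit of parallel transfers is simple arithmetic: serial copies cost the sum of both directions, while overlapped copies cost roughly the longer of the two. This sketch assumes a full-duplex link with the same hypothetical bandwidth in each direction and no contention between the two copies. The sizes and the 4096 MB/s figure are made-up illustrations, not Fermi specifications. In CUDA, this kind of overlap is typically expressed with asynchronous copies (`cudaMemcpyAsync`) issued on different streams.

```python
def transfer_time_ms(mb, mb_per_s):
    """Time to move `mb` megabytes at `mb_per_s` megabytes per second."""
    return mb / mb_per_s * 1000.0


def round_trip_ms(upload_mb, download_mb, mb_per_s, overlapped):
    up = transfer_time_ms(upload_mb, mb_per_s)
    down = transfer_time_ms(download_mb, mb_per_s)
    # Serial: the second copy waits for the first.
    # Overlapped: both directions proceed at once, so the longer one dominates.
    return max(up, down) if overlapped else up + down


print(round_trip_ms(256, 128, 4096, overlapped=False))  # 93.75
print(round_trip_ms(256, 128, 4096, overlapped=True))   # 62.5
```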

415 Comments


hazarama - Saturday, October 3, 2009

"Do you see any sign of commercial software support? Anybody Nvidia can point to and say 'they are porting $important_app to openCL'? I haven't heard a mention. That pretty much puts Nvidia's GPU computing schemes solely in the realm of academia"

Maybe you should check out Snow Leopard...

samspqr - Friday, October 2, 2009

Well, I do HPC for a living, and I think it's too early to push GPU computing so hard. I've tried to use it and gave up because it required too much effort (and I didn't know exactly how much I would gain in my particular applications).

I've also tried to promote GPU computing among some peers who are even more hardcore HPC users, and they didn't pick it up either.

If even your typical physicist is scared by the complexity of the tool, it's too early.

(as I'm told, there was a time when similar efforts were needed in order to use the mathematical coprocessor...)

Yojimbo - Sunday, October 4, 2009

"If even your typical physicist is scared by the complexity of the tool, it's too early."

This sounds good, but it's not accurate. Physicists are interested in physics, and most are not too keen on learning a new programming technique unless it is obvious that it will make a big difference for them. Even then, adoption is likely to be slow due to inertia. Nvidia is trying to break that inertia by pushing GPU computing. First they need to put the hardware in place, and then they need to convince people to use it and put the software in place. They don't expect it to work like a switch. If they think the tools are in place to make it viable, then now is the time to push, because it will ALWAYS require a lot of effort to make the switch.

jessicafae - Saturday, October 3, 2009

Fantastic article.

I do bioinformatics/HPC, and in our field too we have had several good GPU ports for a handful of algorithms, but nothing so great as to drive us to add massive numbers of GPU racks to our clusters. With OpenCL becoming available this year, the programming model is dramatically improved, and we will see a lot more research and prototype code being ported to OpenCL.

I feel we are still in the research phase of GPU computing for HPC (workstations, a few GPU racks, lots of software development work). I am guessing it will be 2+ years until GPU/stream/OpenCL algorithms warrant widespread adoption of GPUs in clusters. I think a telling example is the RIKEN 12-petaflop supercomputer, which is switching to a purely scalar processor approach (100,000 Sparc64 VIIIfx chips with 800,000 cores).

http://www.fujitsu.com/global/news/pr/archives/mon...

Thatguy97 - Thursday, May 28, 2015

oh fermi how i miss ya hot underperforming ass