NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

ECC Support

AMD's Radeon HD 5870 can detect errors on the memory bus, but it can't correct them. In Fermi, by contrast, the register file, L1 cache, L2 cache and DRAM all have full ECC support. This is one of those Tesla-specific features.

Many Tesla customers won't even talk to NVIDIA about moving their algorithms to GPUs unless NVIDIA can deliver ECC support. The scale of their installations is so large that ECC is absolutely necessary (or at least perceived to be).
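To make the difference between detecting and correcting an error concrete, here is a minimal sketch of a textbook extended Hamming (8,4) code, a single-error-correct, double-error-detect (SECDED) scheme. It protects only 4 data bits with 4 check bits. Real memory ECC works on much wider words, and nothing here is meant to describe NVIDIA's or AMD's actual circuitry. The names `encode` and `decode` are hypothetical.

```python
from typing import Optional, Tuple

# Hypothetical sketch: an extended Hamming (8,4) SECDED code.
# Bit i of the 8-bit codeword holds Hamming position i; bit 0 is the
# overall parity bit that upgrades plain Hamming to double-error detection.
DATA_POSITIONS = (3, 5, 6, 7)
PARITY_POSITIONS = (1, 2, 4)


def bit(word: int, i: int) -> int:
    return (word >> i) & 1


def encode(data: int) -> int:
    """Encode a 4-bit value into an 8-bit SECDED codeword."""
    word = 0
    for k, pos in enumerate(DATA_POSITIONS):
        word |= ((data >> k) & 1) << pos
    # Check bit p covers every position whose index has bit p set.
    for p in PARITY_POSITIONS:
        parity = 0
        for i in range(1, 8):
            if i & p:
                parity ^= bit(word, i)
        word |= parity << p
    # Overall parity over positions 1..7 makes the whole word even.
    overall = 0
    for i in range(1, 8):
        overall ^= bit(word, i)
    return word | overall


def decode(word: int) -> Tuple[Optional[int], str]:
    """Return (data, status), status being 'ok', 'corrected' or 'uncorrectable'."""
    syndrome = 0  # XOR of the positions of all set bits; 0 for a clean word
    for i in range(1, 8):
        if bit(word, i):
            syndrome ^= i
    overall = 0
    for i in range(8):
        overall ^= bit(word, i)
    if syndrome and not overall:
        # Even parity but a nonzero syndrome: two bits flipped. Detect only.
        return None, "uncorrectable"
    status = "ok"
    if overall:
        # Odd parity: exactly one bit flipped (assuming at most two errors).
        if syndrome:
            word ^= 1 << syndrome  # the syndrome names the bad position
        status = "corrected"  # syndrome 0 means bit 0 itself flipped
    data = 0
    for k, pos in enumerate(DATA_POSITIONS):
        data |= bit(word, pos) << k
    return data, status
```

A detection-only check such as a single parity bit can flag that a word is bad but cannot say which bit to fix. Here the syndrome names the flipped position, and that is what makes in-place correction possible.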

Unified 64-bit Memory Addressing

In previous architectures there was a different load instruction depending on the type of memory: local (per thread), shared (per group of threads) or global (per kernel). This created issues with pointers and generally made a mess that programmers had to clean up.

Fermi unifies the address space so that there's only one load instruction, and the address itself determines where the data is stored. The lowest addresses are for local memory, the next range is for shared memory, and the remainder of the address space is global.
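The idea can be sketched as a toy model in Python. The window boundaries `LOCAL_LIMIT` and `SHARED_LIMIT` are made-up values for illustration only, since Fermi's actual layout isn't given here, and real hardware resolves the space in its load/store path rather than in software. The point is that the hypothetical `sum_bytes` takes a plain address and works whether it points into local, shared or global memory.

```python
# Hypothetical sketch of a unified address space: one load routine, and the
# address range alone decides which memory backs it. The limits below are
# assumed illustration values, not Fermi's real layout.
LOCAL_LIMIT = 0x0001_0000   # assumed: [0, 64 KB) -> per-thread local memory
SHARED_LIMIT = 0x0002_0000  # assumed: [64 KB, 128 KB) -> per-block shared memory


def space_of(addr: int) -> str:
    if addr < LOCAL_LIMIT:
        return "local"
    if addr < SHARED_LIMIT:
        return "shared"
    return "global"


class UnifiedMemory:
    """Toy model: three physical stores behind one flat address space."""

    def __init__(self) -> None:
        self.local = bytearray(LOCAL_LIMIT)
        self.shared = bytearray(SHARED_LIMIT - LOCAL_LIMIT)
        self.global_mem = {}  # sparse dict, since global memory is huge

    def store(self, addr: int, value: int) -> None:
        space = space_of(addr)
        if space == "local":
            self.local[addr] = value
        elif space == "shared":
            self.shared[addr - LOCAL_LIMIT] = value
        else:
            self.global_mem[addr] = value

    def load(self, addr: int) -> int:
        # A single load entry point: no per-space instruction needed.
        space = space_of(addr)
        if space == "local":
            return self.local[addr]
        if space == "shared":
            return self.shared[addr - LOCAL_LIMIT]
        return self.global_mem.get(addr, 0)


def sum_bytes(mem: UnifiedMemory, ptr: int, n: int) -> int:
    """Works on any pointer; the caller never says which space it points into."""
    return sum(mem.load(ptr + k) for k in range(n))
```

With separate load instructions per space, `sum_bytes` would need one version per memory type, or the pointer would have to carry its space alongside the address.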

The unified address space is apparently necessary to enable C++ support for NVIDIA GPUs, which Fermi is designed to do.

The other big change to memory addressability is the size of the address space. G80 and GT200 had a 32-bit address space, but next year NVIDIA expects to see Tesla boards with more than 4GB of GDDR5 on board. Fermi now supports 64-bit addresses, but the chip can physically address only 40 bits of memory, or 1TB. That should be enough for now.
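The capacity figures follow directly from powers of two, using binary units (1 GB = 2^30 bytes, 1 TB = 2^40 bytes, 1 EB = 2^60 bytes):

```latex
\begin{aligned}
2^{32}\ \text{bytes} &= 4{,}294{,}967{,}296\ \text{bytes} = 4\ \text{GB} \\
2^{40}\ \text{bytes} &= 1{,}099{,}511{,}627{,}776\ \text{bytes} = 1\ \text{TB} \\
2^{64}\ \text{bytes} &= 16\ \text{EB} \\
\frac{2^{40}}{2^{32}} &= 2^{8} = 256
\end{aligned}
```

So a 40-bit physical reach is 256 times the 4GB ceiling of a 32-bit address space, while the 64-bit address format leaves far more headroom than any board can physically populate.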

Both the unified address space and 64-bit addressing are almost exclusively for the compute space at this point. Consumer graphics cards won't need more than 4GB of memory for at least another couple of years. These changes were painful for NVIDIA to implement and ultimately contributed to Fermi's delay, but they were necessary in NVIDIA's eyes.

New ISA Changes Enable DX11, OpenCL and C++, Visual Studio Support

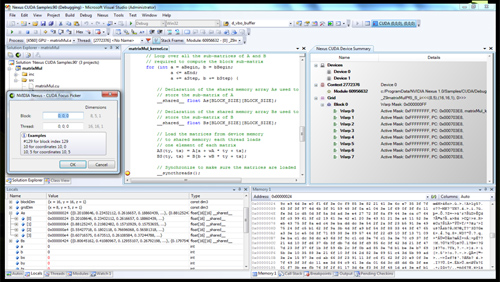

Now this is cool. NVIDIA is announcing Nexus (no, not the thing from Star Trek Generations), a Visual Studio plugin that enables hardware debugging of CUDA code in Visual Studio. With Nexus you can treat the GPU like a CPU, step into functions and inspect the state of the GPU, all within Visual Studio. This is a huge step forward for CUDA developers.

Nexus running in Visual Studio on a CUDA GPU

Simply enabling DX11 support is a big enough change for a GPU; AMD had to go through that with RV870. Fermi implements a wide set of changes to its ISA, primarily aimed at enabling C++ support. Virtual functions, new/delete and try/catch are all part of C++, and all are enabled on Fermi.
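Part of why C++ needs ISA support is that a virtual function call is an indirect call: the target address is loaded from a table at runtime rather than fixed at compile time. The sketch below mimics the common vtable lowering in plain Python. It illustrates the general technique compilers typically use, not Fermi's instruction set, and all names in it are hypothetical.

```python
# Hypothetical sketch of the usual vtable lowering of a C++ virtual call.
# Each "class" has a table of function references, each object carries a
# reference to its class's table, and a call site fetches the target from a
# fixed slot and calls it indirectly. Names are illustrative only.

def dog_speak(obj):
    return obj["name"] + " says woof"


def cat_speak(obj):
    return obj["name"] + " says meow"


SPEAK_SLOT = 0               # slot index the compiler assigns to speak()
DOG_VTABLE = (dog_speak,)    # one table per class, shared by all instances
CAT_VTABLE = (cat_speak,)


def new_object(vtable, name):
    """Stand-in for a constructor: stores the hidden vtable pointer."""
    return {"vptr": vtable, "name": name}


def call_speak(obj):
    """What `animal->speak()` becomes: load the target, then call it."""
    target = obj["vptr"][SPEAK_SLOT]  # data-dependent function address
    return target(obj)                # indirect call; `obj` plays `this`
```

Because `call_speak` cannot know its target until runtime, the hardware needs some form of indirect call (a jump to an address held in a register), rather than only branches whose targets are fixed when the code is compiled.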

415 Comments


Voo - Saturday, October 3, 2009

You may have overlooked it, but there was an edit by an administrator to one of his posts which did exactly what you want ;)

james jwb - Sunday, October 4, 2009

that's good to hear :)

Hxx - Friday, October 2, 2009

By the looks of it, Nvidia doesn't have much going on this year. If they miss the DX11 boat against ATI, then I will pity their stockholders. About the only thing that makes those green cards attractive is their physics spiel. Now if ATI would hurry up and do something with that Havok, then dark days will await Nvidia. One way or the other, it's a win-win for the consumer. I just wish their AMD division would fare just as well against Intel.

Zool - Friday, October 2, 2009

I don't want to be too pessimistic, but availability in Q1 2010 is really late. Windows 7 will come out soon, so people will surely want to upgrade to DX11 by Christmas. Then there's the OEM market, which is actually the most profitable. Dell, HP and others will have Windows 7 systems, and they will of course need DX11 cards by Christmas (AMD will hopefully have all its models out by then). Then of course, upcoming DX11 games can be optimized for the Radeon 5K series now, while for GT300 we don't even know the graphics specs, and the only working silicon doesn't even resemble a card.

Very bad timing for Nvidia this time; it will give AMD a huge advantage.

Zool - Friday, October 2, 2009

Actually, this could happen if you merge a super GPGPU Tesla card and a GPU and want to sell it as one (because designing GPUs this big is "fucking hard"). Average people (maybe 95% of them) don't even know what a megabyte or a bit is, let alone GPGPU. They will want to buy a graphics card, not a CUDA card. If AMD and Microsoft push heavy DX11 PR, then even the rest of Nvidia's GPUs won't sell.

PorscheRacer - Friday, October 2, 2009

As with anything hardware, you need the killer software to make consumers want it. DX11 is out now, so we have Windows 7 (which most people are taking a liking to, even gamers), and there are a few upcoming games that people seem interested in. For GPGPU and all that, well... What do we have as a seriously awesome application that consumers want and feel they need to go out and buy a GPU for? Some do that for F@H and the like, and a few for transcoding video, but what else is there? Until we see that, it's going to be hard to convince consumers to buy that GPU. As it is, most feel IGP is good enough for them...

PorscheRacer - Friday, October 2, 2009

Actually, thinking about this... Maybe if they were able to put a small portion of this into IGP, and include some good software with it, the average consumer could see the benefits more easily and quickly, and be inclined to take that step up to a dedicated GPU?

RXR - Friday, October 2, 2009

DocSilicon, you are one funny-as-hell mental patient! I really hope you don't get banned. You just made reading the comments a whole lot more fun. Plus, it's win-win. You get to satisfy your need to go completely postal at everyone, and we get a funny sideshow.

- Friday, October 2, 2009

Great words but nothing behind them! Fermi is Nvidia's Prescott, or should I say much like the last Voodoo chip that never really appeared on the market? Too many transistors are not good...

ioannis - Friday, October 2, 2009

Although the Star Trek TNG reference is OK, 'Nexus' should have been accompanied by a Blade Runner reference instead: Nexus-6 :)