NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

ECC Support

AMD's Radeon HD 5870 can detect errors on the memory bus, but it can't correct them. The register file, L1 cache, L2 cache and DRAM all have full ECC support in Fermi. This is one of those Tesla-specific features.

Many Tesla customers won't even talk to NVIDIA about moving their algorithms to GPUs unless NVIDIA can deliver ECC support. The scale of their installations is so large that ECC is absolutely necessary (or at least perceived to be).

Unified 64-bit Memory Addressing

In previous architectures there was a different load instruction depending on the type of memory: local (per thread), shared (per group of threads) or global (per kernel). This created issues with pointers, since a pointer alone didn't tell the hardware which load instruction to use, and generally made a mess that programmers had to clean up.

Fermi unifies the address space so that there's only one load instruction, and the address itself determines which memory is accessed. The lowest range of addresses maps to local memory, the next range to shared memory, and the remainder of the address space is global.

The unified address space is apparently necessary to enable C++ support for NVIDIA GPUs, which Fermi is designed to do.

The other big change to memory addressability is in the size of the address space. G80 and GT200 had a 32-bit address space, but next year NVIDIA expects to see Tesla boards with over 4GB of GDDR5 on board. Fermi now supports 64-bit addresses, but the chip can physically address only 40 bits of memory, or 1TB. That should be enough for now.
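The arithmetic behind these limits, taking GB and TB in the binary sense ($2^{30}$ and $2^{40}$ bytes) that the 4GB and 1TB figures imply:

```latex
\begin{aligned}
2^{32}\ \text{bytes} &= 4{,}294{,}967{,}296\ \text{bytes} = 4\ \text{GB} \\
2^{40}\ \text{bytes} &= 1{,}099{,}511{,}627{,}776\ \text{bytes} = 1\ \text{TB} = 2^{8} \times 4\ \text{GB} \\
2^{64}\ \text{bytes} &= 2^{24}\ \text{TB} \approx 1.8 \times 10^{19}\ \text{bytes}
\end{aligned}
```

So the 40-bit physical limit is 256 times the old 32-bit ceiling, while the 64-bit address format leaves ample headroom beyond what the chip can physically reach.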

Both the unified address space and 64-bit addressing are almost exclusively for the compute space at this point. Consumer graphics cards won't need more than 4GB of memory for at least another couple of years. These changes were painful for NVIDIA to implement and ultimately contributed to Fermi's delay, but they were necessary in NVIDIA's eyes.

New ISA Changes Enable DX11, OpenCL and C++, Visual Studio Support

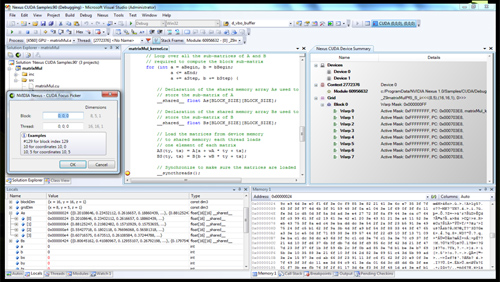

Now this is cool. NVIDIA is announcing Nexus (no, not the thing from Star Trek Generations), a Visual Studio plugin that enables hardware debugging of CUDA code. With Nexus you can treat the GPU like a CPU: step into functions and inspect the state of the GPU, all within Visual Studio. This is a huge step forward for CUDA developers.

Nexus running in Visual Studio on a CUDA GPU

Simply enabling DX11 support is a big enough change for a GPU; AMD had to go through that with RV870. Fermi implements a wide set of changes to its ISA, primarily aimed at enabling C++ support. Virtual functions, new/delete and try/catch are all parts of C++, and all of them are enabled on Fermi.

415 Comments


palladium - Monday, October 5, 2009

Not quite: http://www.dailytech.com/article.aspx?newsid=16410

Scroll down halfway thru the comments. He re-registered as SilicconDoc and barks about his hatred for red roosters (in an Apple-related article!)

johnsonx - Monday, October 5, 2009

that looks more like someone mocking him

- Sunday, October 4, 2009

According to this very link http://www.anandtech.com/video/showdoc.aspx?i=3573... AMD already presented WORKING SILICON at Computex roughly 4 months ago, on June 3rd. So it took roughly 4 and a half months to prepare drivers, infrastructure and mass production to have enough for the launch of Windows 7 and DX11. Nvidia, however, wasn't even talking about W7 and DX11, so late Q1 2010 or even later becomes more realistic than December. But there are many more questions ahead: what price point, clock rates and TDP? My impression is that Nvidia has no clue about these questions, and the more I watch this development, the more Fermi resembles the Voodoo5 chip and the V6000 card, which never made it into the market because of its much too high TDP.

silverblue - Sunday, October 4, 2009

Nah, I expect nVidia to do everything they can to get this into retail channels because it's the culmination of a lot of hard work. I also expect it to be a monster, but I'm still curious as to how they're going to sort out mainstream options due to their top-down philosophy. That's not to say ATI's idea of a mid-range card that scales up and down doesn't have its flaws, but with both the 4800 and 5800 series, there's been a card out at the start with a bona fide GPU with nothing disabled (4850, and now 5870), along with a cheaper counterpart with slower RAM and a slightly handicapped core (4830/5850). Higher-spec single-GPU versions will most likely just benefit from more and/or faster RAM and/or a higher core clock, but the architecture of the core itself will probably be unchanged. Can nVidia afford to release a competing version of Fermi without disabling parts of the core? If it's as powerful as we're led to believe, it will certainly warrant a higher price tag than the 5870.

Ahmed0 - Saturday, October 3, 2009

Nvidia wants it to be the jack of all trades. However, they risk being an overpriced master of none. That's probably the reason they give their cards more and more gimmicks to play with each year. They are hoping that the card's value will be greater than the sum of its parts. And that might even be a successful strategy to some extent. In a consumerist world, reputation is everything. They might start overdoing it at some point, though.

It's like mobile phones nowadays. You really don't need a radio, an mp3 player, a camera or other such extras in one (in fact, my phone isn't able to do anything but make calls and send messages). But unless you have these features, you aren't considered competition. It gives you the opportunity to call your product "vastly superior" even though, from a usability standpoint, it isn't.

SymphonyX7 - Saturday, October 3, 2009

Ahh... I see where you're coming from. I've had many classmates ask me what laptop to buy, and they're always so giddy when they see laptops with the "Geforce" sticker and say they want one because they want some casual gaming. Yes, even if the GPU is a Geforce 9100M. I recommended laptops using AMD's Puma platform, and many of them ask if that's a good choice (unfortunately here, only the Macbook has a 9400M GPU, and it's still outside many of my classmates' budgets). It seems brand awareness of Nvidia amongst many consumers is still much better than AMD/ATI's. So it's an issue of clever branding, then?

Lifted - Saturday, October 3, 2009

A little late for any meaningful discussion over here, as AT let the trolls go for 40 or so pages. I doubt many people can be arsed to sort through it now, so you'd be better off going to a forum for a real discussion of Fermi.

neomocos - Saturday, October 3, 2009

If you missed it, then here you go... happy day for all of us. Quote from a comment posted on page 37 by Pastuch:

"Below is an email I got from Anand. Thanks so much for this wonderful site.

-------------------------------------------------------------------

Thank you for your email. SiliconDoc has been banned and we're accelerating the rollout of our new comments rating/reporting system as a result of him and a few other bad apples lately.

A- "

james jwb - Saturday, October 3, 2009

Some may enjoy it, but the unusual freedom that blatant trolls using aggressive, rude language have been getting lately is making a mockery of this site. I don't mind it going on for a while, even 20 pages tbh, it is funny, but at some point I'd like to see a message from Gary saying, "K, SiliconDoc, we've laughed enough at your drivel, tchau, banned! :)"

That's what I want to see after reading through 380 bloody comments, not that he's pretty much gotten away with it. And if he has finally been banned, I'd actually love to know about it in the comments section.

/Rant over.

Gary Key - Monday, October 5, 2009

He is gone as are a couple of others. We have a new comments system in final development now that should take care of this problem in the future.