Lucid Hydra 200: Vendor Agnostic Multi-GPU, Available in 30 Days

by Anand Lal Shimpi on September 22, 2009 5:00 PM EST - Posted in GPUs

A year ago Lucid announced the Hydra 100: a physical chip that could enable hardware multi-GPU without any pesky SLI/Crossfire software, game profiles or anything like that.

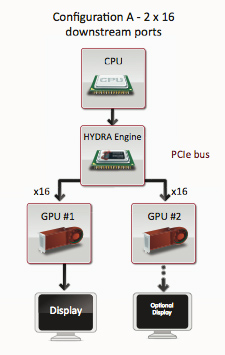

At a high level, what Lucid's technology does is intercept OpenGL/DirectX commands from the CPU to the GPU and load balance them across any number of GPUs. The final buffers are read back by the Lucid chip and sent to the primary GPU for display.

The technology sounds flawless. You don't need to worry about game profiles or driver support, you just add more GPUs and they should be perfectly load balanced. Even more impressive is Lucid's claim that you can mix and match GPUs of different performance levels. For example you could put a GeForce GTX 285 and a GeForce 9800 GTX in parallel and the two would be perfectly load balanced by Lucid's hardware; you'd get a real speedup. Eventually, Lucid will also enable multi-GPU configurations from different vendors (e.g. one NVIDIA GPU + one AMD GPU).

At least on paper, Lucid's technology has the potential to completely eliminate all of the multi-GPU silliness we've been dealing with for the past several years. Today, Lucid is announcing the final set of hardware that will be shipping within the next ~30 days.

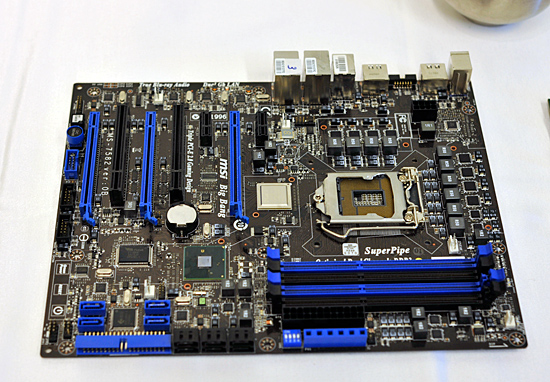

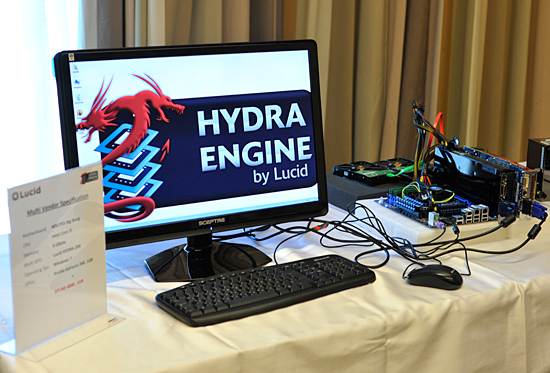

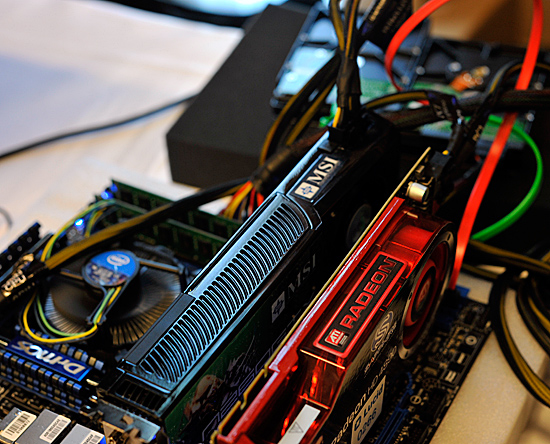

The MSI Big Bang, a P55 motherboard with Lucid's Hydra 200

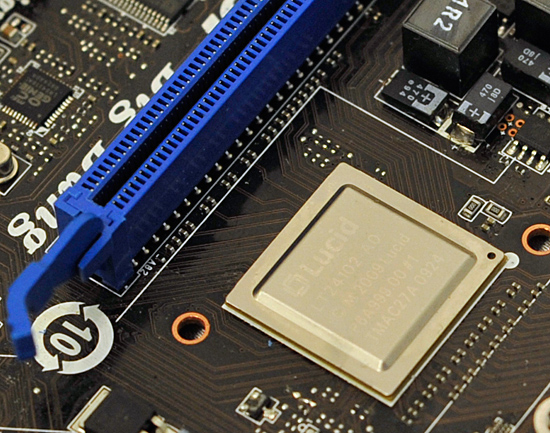

It's called the Hydra 200 and it will first be featured on MSI's Big Bang P55 motherboard. Unlike the Hydra 100 we talked about last year, the 200 is built on a 65nm process node instead of 130nm. The architecture is also much improved, thanks to Lucid's additional experience with the chip.

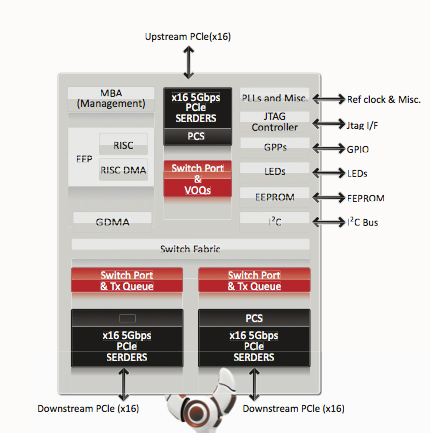

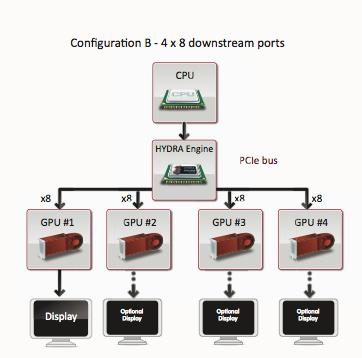

There are three versions of the Hydra 200: the LT22114, the LT22102 and the LT24102. The only difference between the chips is the number of PCIe lanes. The lowest end chip has a x8 connection to the CPU/PCIe controller and two x8 connections to GPUs. The midrange LT22102 has a x16 connection to the CPU and two x16 connections for GPUs. And the highest end solution, the one being used on the MSI board, has a x16 to the CPU and then a configurable pair of x16s to GPUs. You can operate this controller in 4 x8 mode, 1 x16 + 2 x8 or 2 x16. It's all auto-sensing and auto-configurable. The high end product will be launching in October, with the other two versions shipping into mainstream and potentially mobile systems some time later.

Lucid wouldn't tell us the added cost on a motherboard, but it did give us guidance of around $1.50 per PCIe lane. The high end chip has 48 total PCIe lanes, which puts the premium at $72. The low end chip has 24 lanes, translating into a $36 cost for the Hydra 200 chip. Note that since the Hydra 200 has an integrated PCIe switch, there's no need for extra chips on the motherboard (and of course no SLI licensing fees). The first implementation of the Hydra 200 will be on MSI's high end P55 motherboard, so we can expect prices to be at the upper end of the spectrum. With enough support, we could see that fall into the upper mainstream segment.
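To make the arithmetic explicit, here's a quick sketch in Python. It assumes the roughly $1.50 per lane figure is a flat rate, which is only Lucid's loose guidance and not a price list. The function and variable names are made up for illustration.

```python
# Illustrative only: the per-lane price is Lucid's rough guidance as quoted
# above, and every name in this snippet is hypothetical.
PRICE_PER_LANE_USD = 1.50


def total_lanes(upstream: int, downstream_links: int, downstream_width: int) -> int:
    """Upstream lanes to the CPU plus all downstream lanes to the GPUs."""
    return upstream + downstream_links * downstream_width


def estimated_chip_cost(lanes: int, price_per_lane: float = PRICE_PER_LANE_USD) -> float:
    """Estimated chip cost in USD, assuming a flat per-lane price."""
    return lanes * price_per_lane


# Low end: x8 to the CPU, two x8 links to GPUs.
low_end_lanes = total_lanes(upstream=8, downstream_links=2, downstream_width=8)
# High end: x16 to the CPU, a pair of x16 links to GPUs.
high_end_lanes = total_lanes(upstream=16, downstream_links=2, downstream_width=16)

print(f"low end:  {low_end_lanes} lanes, ~${estimated_chip_cost(low_end_lanes):.2f}")
print(f"high end: {high_end_lanes} lanes, ~${estimated_chip_cost(high_end_lanes):.2f}")
```

The lane totals fall straight out of the link widths: 8 + 2 x 8 = 24 for the low end part and 16 + 2 x 16 = 48 for the high end part, which is where the $36 and $72 figures come from.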

Lucid specs the Hydra 200 at a 6W TDP.

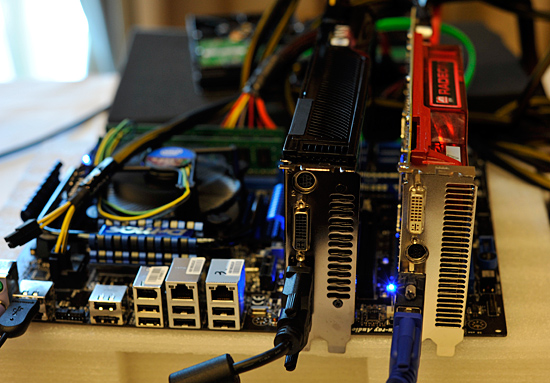

Also unlike last year, we actually got real seat time with the Hydra 200 and MSI's Big Bang. Even better: we got to play on a GeForce GTX 260 + ATI Radeon HD 4890 running in multi-GPU mode.

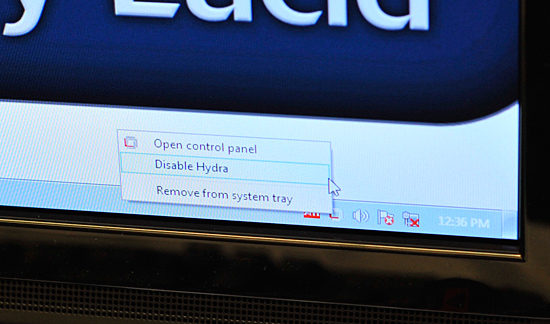

Of course, with two different GPU vendors, we need Windows 7 to allow both drivers to work at the same time. Lucid's software runs in the background and lets you enable/disable multi-GPU mode.

If for any reason Lucid can't run a game in multi-GPU mode, it will always fall back to working on a single GPU without any interaction from the end user. Lucid claims to be able to accelerate all DX9 and DX10 games, although things like AA become easier in DX10 since all hardware should resolve the same way.

NVIDIA and ATI running in multi-GPU mode on a single system

There are a lot of questions about performance and compatibility, but honestly we can't say much on that until we get the hardware ourselves. We were given some time to play with the system and can say that it at least works.

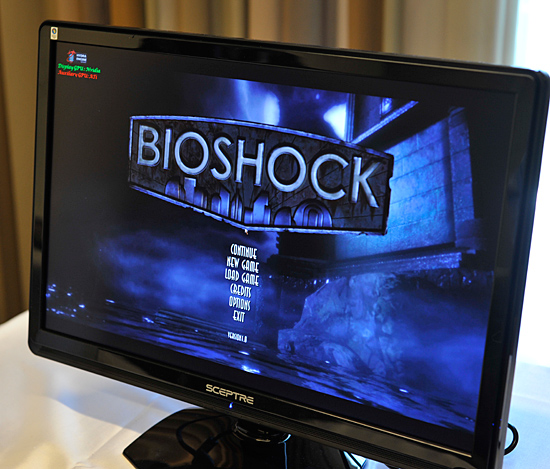

Lucid only had two games installed on the cross-vendor GPU setup: Bioshock and FEAR 2. There are apparently more demos on the show floor; we'll try to bring more impressions from IDF later this week.

94 Comments

Chlorus - Tuesday, September 22, 2009 - link

I had written this off as vaporware, seeing as how Intel seemed to completely forget about it after last year.

prophet001 - Tuesday, September 22, 2009 - link

wow... just wow and :)

JAG87 - Tuesday, September 22, 2009 - link

If it works this will be the holy grail of pc gaming.

gigahertz20 - Wednesday, September 23, 2009 - link

It's like momma always said, if it's too good to be true, it probably is. But if it works as advertised, hydra will be going on all kinds of motherboards. Who would ever want to do SLI or crossfire, when you can buy a motherboard with this chip and get linear performance scaling with each additional video card you add?

I hope the hype lives up to the expectations, but I'm prepared for disappointment.

GeorgeH - Tuesday, September 22, 2009 - link

This. That noise you hear is the sound of countless wallets slamming shut for the next 30 days. You'd have to be a fool to buy or build a new gaming PC until we find out how well this actually works (and exactly how much it will cost.)

ianken - Wednesday, September 23, 2009 - link

Not mine. We know that not all GPUs render the same way. AMD AA looks different than nVidia AA. They have different modes and make different trade offs for perf.

I suspect best results will still be matched GPUs. The win is (possibly) the ability to get better load balancing than you get with SLI or Crossfire. Some games scale poorly beyond two GPUs, will this fix that? If so then THAT is the win.

What I do foresee is a giant pile of app compat issues. Tons of forum posts that are "Hey, how do you get X working with GPUs FOO and BAR? Anyone? BUMP"

MadMan007 - Wednesday, September 23, 2009 - link

Countless is way overstating it but I'm sure some people will wait for it. Ultimately it's still a multi-GPU configuration which is a small niche.

Ratinator - Wednesday, September 23, 2009 - link

This technology would convert me to go multi GPU. In the past I would just buy one card and by the time I needed another I would just upgrade to the latest which was usually significantly faster. I didn't want the hassle of having to get the exact same brand and model. Now I could just buy the latest and greatest and put that one in as well.

YGDRASSIL - Wednesday, September 23, 2009 - link

So you get a new card which is three times as fast as your old. You keep the old juice-sucking bastard in to get maybe a 20% speedup.

Ratinator - Friday, September 25, 2009 - link

PS: The old Juice sucking bastard??? Have you seen the new ATI card and how much juice it sucks. Just because it is old doesn't mean it sucks more juice.