AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST. Posted in GPUs

The Return of Supersample AA

Over the years, the methods used to implement anti-aliasing on video cards have bounced back and forth. The earliest generation of cards, such as the 3Dfx Voodoo 4/5 and ATI and NVIDIA’s DirectX 7 parts, implemented supersampling, which involved rendering a scene at a higher resolution and scaling it down for display. Supersampling did a great job of removing aliasing, and it also slightly improved the overall quality of the image because the scene was sampled at a higher resolution.
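To make the idea concrete, here is a minimal sketch of supersampling as described above: render at a multiple of the target resolution, then average each block of samples down to one pixel. This is an illustration only, not how any particular card implements it. The function names, the box filter, and the `edge` test scene are all assumptions made for the example.

```python
def render(width, height, shade):
    """Evaluate shade(x, y) at the centre of every pixel; x and y lie in [0, 1)."""
    return [[shade((i + 0.5) / width, (j + 0.5) / height) for i in range(width)]
            for j in range(height)]


def downscale(image, factor):
    """Box filter: average each factor x factor block into one output pixel."""
    height, width = len(image) // factor, len(image[0]) // factor
    out = []
    for j in range(height):
        row = []
        for i in range(width):
            block = [image[j * factor + dj][i * factor + di]
                     for dj in range(factor) for di in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def supersample(width, height, shade, factor=2):
    """Render at factor times the target resolution, then scale down."""
    return downscale(render(width * factor, height * factor, shade), factor)


def edge(x, y):
    """Hypothetical scene: white (1.0) below the diagonal y = x, black above."""
    return 1.0 if y > x else 0.0


aliased = render(4, 4, edge)                   # one sample per pixel
smoothed = supersample(4, 4, edge, factor=2)   # four samples per pixel
```

Under these assumptions every pixel in `aliased` is either 0.0 or 1.0, while the pixels along the diagonal of `smoothed` come out at 0.25, an intermediate shade that softens the stair-step. The cost is also visible: `supersample` calls `shade` four times as often as `render` does for the same output size.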

But supersampling was expensive, particularly on those early cards. So the next generation implemented multisampling, which instead of rendering a scene at a higher resolution, rendered it at the desired resolution and then sampled polygon edges to find and remove aliasing. The overall quality wasn’t quite as good as supersampling, but it was much faster, with that gap increasing as MSAA implementations became more refined.
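The cost difference can be sketched per pixel. In this simplified model, supersampling runs the shader at every sample, while multisampling tests geometry coverage at every sample but runs the shader only once. The sample offsets, the function names, and the black background are assumptions for illustration; real hardware patterns and resolve steps differ.

```python
# Illustrative sample offsets inside one pixel (not a real hardware pattern).
OFFSETS = [(0.25, 0.25), (0.75, 0.25), (0.25, 0.75), (0.75, 0.75)]


def ssaa_pixel(px, py, inside, shade):
    """Supersampling: run the shader at every covered sample, then average."""
    total, calls = 0.0, 0
    for dx, dy in OFFSETS:
        x, y = px + dx, py + dy
        if inside(x, y):
            total += shade(x, y)
            calls += 1
    return total / len(OFFSETS), calls


def msaa_pixel(px, py, inside, shade):
    """Multisampling: test coverage at every sample, but shade only once."""
    covered = sum(1 for dx, dy in OFFSETS if inside(px + dx, py + dy))
    if covered == 0:
        return 0.0, 0
    colour = shade(px + 0.5, py + 0.5)  # single shader call at the pixel centre
    return colour * covered / len(OFFSETS), 1


def half(x, y):
    """Hypothetical polygon covering the left half of pixel (0, 0)."""
    return x < 0.5


def full(x, y):
    """Hypothetical polygon covering the whole pixel."""
    return True


def white(x, y):
    """Flat white shader."""
    return 1.0


def stripes(x, y):
    """Shader detail finer than one pixel: white on the left half, black on the right."""
    return 1.0 if (x % 1.0) < 0.5 else 0.0


edge_ssaa = ssaa_pixel(0, 0, half, white)       # (0.5, 2)
edge_msaa = msaa_pixel(0, 0, half, white)       # (0.5, 1)
detail_ssaa = ssaa_pixel(0, 0, full, stripes)   # (0.5, 4)
detail_msaa = msaa_pixel(0, 0, full, stripes)   # (0.0, 1)
```

On the polygon edge both approaches produce the same blended value, but the multisampled pixel needs only one shader call. On the striped interior the multisampled pixel simply takes whatever the shader returns at the centre, which is the quality gap this simplified model leaves open.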

Lately we have seen a slow bounce back in the other direction, as MSAA’s imperfections became more noticeable and in need of correction. Here supersampling saw a limited reintroduction, with AMD and NVIDIA using it on certain parts of a frame as part of their Adaptive Anti-Aliasing (AAA) and Supersample Transparency Anti-Aliasing (SSTr) schemes respectively. Here SSAA would be used to smooth out semi-transparent textures, where the textures themselves were the source of the aliasing and MSAA could not work on them since they were not polygon edges. This still didn’t completely resolve MSAA’s shortcomings compared to SSAA, but it solved the transparent texture problem. With these technologies the differences between MSAA and SSAA were reduced to two: MSAA cannot anti-alias shader output, and MSAA does not have the advantage of sampling textures at a higher resolution.

With the 5800 series, things have finally come full circle for AMD. Based upon their SSAA implementation for Adaptive Anti-Aliasing, they have re-implemented SSAA as a full screen anti-aliasing mode. Now gamers can once again access the higher quality anti-aliasing offered by a pure SSAA mode, instead of being limited to the best of what MSAA + AAA could do.

Ultimately the inclusion of this feature on the 5870 comes down to two matters: the card has lots and lots of processing power to throw around, and shader aliasing was the last obstacle that MSAA + AAA could not solve. With the reintroduction of SSAA, AMD is not dropping or downplaying their existing MSAA modes; rather it’s offered as another option, particularly one geared towards use on older games.

“Older games” is an important keyword here, as there is a catch to AMD’s SSAA implementation: it only works under OpenGL and DirectX 9. As we found out in our testing and after much head-scratching, it does not work on DX10 or DX11 games. Attempting to use it there will result in the game switching to MSAA.

When we asked AMD about this, they cited the fact that DX10 and later give developers much greater control over anti-aliasing patterns, and that using SSAA with these controls may create incompatibility problems. Furthermore the games that can best run with SSAA enabled from a performance standpoint are older titles, making the use of SSAA a more reasonable choice with older games as opposed to newer games. We’re told that AMD will “continue to investigate” implementing a proper version of SSAA for DX10+, but it’s not something we’re expecting any time soon.

Unfortunately, in our testing of AMD’s SSAA mode, there are clearly a few kinks to work out. Our first AA image quality test was going to be the railroad bridge at the beginning of Half-Life 2: Episode 2. That scene is full of aliased metal bars, cars, and trees. However, as we’re going to lay out in this screenshot, while AMD’s SSAA mode eliminated the aliasing, it also gave the entire image a smooth makeover, and a makeover that is too smooth. SSAA isn’t supposed to blur things; it’s only supposed to make things smoother by removing all aliasing in geometry, shaders, and textures alike.

As it turns out this is a freshly discovered bug in their SSAA implementation that affects newer Source-engine games. Presumably we’d see something similar in the rest of The Orange Box, and possibly other HL2 games. This is an unfortunate engine to have a bug in, since Source-engine games tend to be heavily CPU limited anyhow, making them perfect candidates for SSAA. AMD is hoping to have a fix out for this bug soon.

“But wait!” you say. “Doesn’t NVIDIA have SSAA modes too? How would those do?” And indeed you would be right. While NVIDIA dropped official support for SSAA a number of years ago, it has remained as an unofficial feature that can be enabled in Direct3D games, using tools such as nHancer to set the AA mode.

Unfortunately NVIDIA’s SSAA mode isn’t even in the running here, and we’ll show you why.

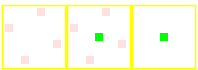

5870 SSAA

GTX 280 MSAA

GTX 280 SSAA

At the top we have the view from DX9 FSAA Viewer of ATI’s 4x SSAA mode. Notice that it’s a rotated grid with 4 geometry samples (red) and 4 texture samples. Below that we have NVIDIA’s 4x MSAA mode, a rotated grid with 4 geometry samples and a single texture sample. Finally we have NVIDIA’s 4x SSAA mode, an ordered grid with 4 geometry samples and 4 texture samples. For reasons that we won’t delve into, rotated grids are a better grid layout from a quality standpoint than ordered grids. This is why early implementations of AA using ordered grids were dropped for rotated grids, and is why no one uses ordered grids these days for MSAA.
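One commonly cited reason for the rotated grid's advantage can be sketched in a few lines; this is offered as an illustration, not as the reasoning behind either vendor's choice. The sample positions below are assumed textbook-style patterns, not values read from the FSAA Viewer output. The sketch sweeps a vertical edge across a single pixel and records how many distinct coverage values each pattern can report.

```python
# Assumed 4-sample patterns, coordinates in [0, 1) within one pixel.
ORDERED = [(0.25, 0.25), (0.75, 0.25), (0.25, 0.75), (0.75, 0.75)]
ROTATED = [(0.375, 0.125), (0.875, 0.375), (0.125, 0.625), (0.625, 0.875)]


def coverage_levels(pattern, steps=64):
    """Sweep a vertical edge across the pixel; collect the distinct coverage values."""
    levels = set()
    for k in range(steps + 1):
        edge_x = k / steps
        covered = sum(1 for x, _ in pattern if x < edge_x)
        levels.add(covered / len(pattern))
    return sorted(levels)


ordered_levels = coverage_levels(ORDERED)  # [0.0, 0.5, 1.0]
rotated_levels = coverage_levels(ROTATED)  # [0.0, 0.25, 0.5, 0.75, 1.0]
```

Because the ordered grid has only two distinct horizontal positions, a near-vertical edge can produce just one intermediate shade. The rotated grid spreads its four samples across four distinct columns (and four distinct rows), so the same edge gets three intermediate shades from the same number of samples.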

Furthermore, when actually using NVIDIA's SSAA mode, we ran into some definite quality issues with HL2: Ep2. We're not sure if these are related to the use of an ordered grid or not, but it's a possibility we can't ignore.

If you compare the two shots, with MSAA 4x the scene is almost perfectly anti-aliased, except for some trouble along the bottom/side edge of the railcar. If we switch to SSAA 4x that aliasing is solved, but we have a new problem: all of a sudden a number of fine tree branches have gone missing. While MSAA properly anti-aliased them, SSAA anti-aliased them right out of existence.

For this reason we will not be taking a look at NVIDIA’s SSAA modes. Besides the fact that they’re unofficial in the first place, the use of an ordered grid and the problems in HL2 cement the fact that they’re not suitable for general use.

327 Comments

Ryan Smith - Wednesday, September 23, 2009 - link

The load temp is the same as a single card.

ilnot1 - Wednesday, September 23, 2009 - link

Does anyone have a link to any review that compares 4850's, 4870's, and 4890's in Crossfire against the 5870 & 5870 CF setup?

T2k - Wednesday, September 23, 2009 - link

FWIW: http://www.techpowerup.com/reviews/AMD/HD_5870_PCI...

T2k - Wednesday, September 23, 2009 - link

Ehh, I meant: http://www.techpowerup.com/reviews/ATI/Radeon_HD_5...

ilnot1 - Wednesday, September 23, 2009 - link

Thanks T2k, but the only cards that are in Crossfire in that review are the 58XX's. There are no other comparisons to cards in CF or SLI. Since Ryan included some of the most recent NVIDIA cards in SLI I was hoping to find the 48XX's in CF.

T2k - Thursday, September 24, 2009 - link

Basically the rule of thumb seems to be that at 1920x1200 a single 5870 is still slightly slower than a 4870X2 and probably slightly faster than a 4850X2 2GB.

I own the latter so I will wait this time - either they lower the initial price of the 5870X2 or they release a 5850X2, otherwise I'll pass because a single 5870 is simply OVERPRICED as it is already.

T2k - Wednesday, September 23, 2009 - link

Seriously: we get a very nice technical background section - then you top it with this more than idiotic collection of games for testing, leaving out 4850X2 2GB, 5850, using TWO stupid CryEngine-based PoS from Crytek, the most un-optimized code producers, or WoW, of which even you admit it's CPU-bound, but no CoD:WaW, no Clear Sky, no UT3 or rather a single current Unreal Engine-based game?

Benchmarking part is ALMOST WORTHLESS, the only useful info is that unless you go above 1920x1200 the 4870X2 pretty much owns 5870's @ss as of now.

Ryan Smith - Wednesday, September 23, 2009 - link

For what it's worth, Batman: Arkham Asylum is UE3 engine based.

T2k - Wednesday, September 23, 2009 - link

OK, I missed that (probably because I found the game shots ugly and became uninterested.)

But how about ET:QW? Yes, it's not the best looking game but it is still popular, let alone World at War which is both great looking and crazy popular, let alone Clear Sky which is a very demanding DX10.1 game? Where is Fallout 3? Where is Modern Warfare?

FFS the most demanding are the quick action shooters and we, FPS players, are the first ones to upgrade to new cards...

Werelds - Thursday, September 24, 2009 - link

How would ET:QW be a good benchmark? Last I checked, it's still limited to the 30 FPS animations, which makes running it at more than 30 FPS pointless because everything will look jerky.

I agree something like the CoD games should be included for comparison's sake, but they're hardly a good benchmark or taxing on a system. QW does not fall into the same category though, it has a smaller active playerbase than even L4D which lost a lot of players due to the lack of updates.