AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST - Posted in GPUs

The Return of Supersample AA

Over the years, the methods used to implement anti-aliasing on video cards have bounced back and forth. The earliest generation of cards, such as the 3Dfx Voodoo 4/5 and ATI and NVIDIA's DirectX 7 parts, implemented supersampling, which involved rendering a scene at a higher resolution and scaling it down for display. Supersampling did a great job of removing aliasing, and it also slightly improved the overall quality of the image because the scene was sampled at a higher resolution.
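To make the idea concrete, here is a minimal sketch of ordered-grid supersampling: a toy scene (a hypothetical diagonal edge, not any real renderer) is sampled at `scale` times the target resolution on each axis and then box-filtered back down, so pixels straddling the edge end up with intermediate values instead of a hard stair-step. The names `diagonal_edge`, `render`, and `box_downsample` and the choice of a box filter are illustrative assumptions; real hardware uses its own sample positions and resolve filters.

```python
def diagonal_edge(x, y):
    """Hypothetical scene: white (1.0) where y > x, black (0.0) elsewhere."""
    return 1.0 if y > x else 0.0


def render(scene, size, scale):
    """Sample `scene` on a (size*scale) x (size*scale) grid of sample centers.

    Sample coordinates are expressed in final-image pixel units, so scale=1 is
    ordinary rendering and scale=2 places 2x2 samples inside every pixel.
    """
    n = size * scale
    return [[scene((sx + 0.5) / scale, (sy + 0.5) / scale) for sx in range(n)]
            for sy in range(n)]


def box_downsample(image, factor):
    """Average each factor x factor block of a high-resolution image into one pixel."""
    height, width = len(image), len(image[0])
    if height % factor or width % factor:
        raise ValueError("image dimensions must be multiples of factor")
    result = []
    for y in range(0, height, factor):
        row = []
        for x in range(0, width, factor):
            block = [image[y + dy][x + dx]
                     for dy in range(factor) for dx in range(factor)]
            row.append(sum(block) / len(block))
        result.append(row)
    return result
```

Rendering a 4x4 image at `scale=1` leaves every pixel either 0.0 or 1.0, while `box_downsample(render(diagonal_edge, 4, 2), 2)` gives each pixel on the diagonal a value of 0.25, which is the softened edge supersampling is meant to produce.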

But supersampling was expensive, particularly on those early cards. So the next generation implemented multisampling, which, instead of rendering a scene at a higher resolution, rendered it at the desired resolution and then sampled polygon edges to find and remove aliasing. The overall quality wasn't quite as good as supersampling, but it was much faster, and that gap grew as MSAA implementations became more refined.
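Multisampling can be sketched as follows: the pixel is shaded once, and only the per-sample coverage test differs, so an edge pixel receives a blend while a fully covered pixel simply gets the single shaded color. This is a simplified model under stated assumptions (one triangle per pixel, grayscale colors, a plain average for the resolve); `msaa_pixel` and `flat_shader` are hypothetical names, not a driver or Direct3D interface.

```python
def msaa_pixel(shade, pixel_center, coverage, background):
    """Resolve one pixel under a simplified MSAA model.

    shade: function returning a color for a position; called ONCE per pixel.
    pixel_center: (x, y) position where the single shading evaluation happens.
    coverage: one boolean per sample, True where the triangle covers that sample.
    background: color already stored in samples the triangle does not cover.
    """
    color = shade(pixel_center)  # a single shader invocation for the whole pixel
    samples = [color if covered else background for covered in coverage]
    return sum(samples) / len(samples)  # box-filter resolve of the samples


calls = []


def flat_shader(position):
    """Hypothetical shader that records each invocation and returns a constant color."""
    calls.append(position)
    return 1.0
```

With two of four samples covered, the pixel resolves to 0.5 after only one shader call. Because the interior of a polygon is still shaded once per pixel, texture and shader detail inside it gets no extra samples, which is the quality gap relative to supersampling.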

Lately we have seen a slow bounce back in the other direction, as MSAA's imperfections became more noticeable and in need of correction. Supersampling saw a limited reintroduction, with AMD and NVIDIA using it on certain parts of a frame as part of their Adaptive Anti-Aliasing (AAA) and Supersample Transparency Anti-Aliasing (SSTr) schemes respectively. There SSAA was used to smooth out semi-transparent textures, where the textures themselves produced the aliasing and MSAA could not work on them since they were not polygon edges. This still didn't completely resolve MSAA's shortcomings compared to SSAA, but it solved the transparent texture problem. With these technologies, the difference between MSAA and SSAA was reduced to two things: MSAA could not anti-alias shader output, and it lacked SSAA's advantage of sampling textures at a higher resolution.
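The transparent-texture problem comes from alpha testing: a texture's alpha value decides whether a fragment is kept, but under MSAA that decision is made once per pixel, so the edge of a fence or foliage texture stays hard no matter how many samples the pixel has. The sketch below contrasts that with running the alpha test at every sample, which is the general idea behind supersampled transparency modes. It is a one-dimensional simplification with assumed sample offsets, an assumed 0.5 threshold, and a hypothetical texture (`bar_alpha`); it is not AMD's or NVIDIA's actual implementation.

```python
# Assumed horizontal sample positions inside a pixel (1D simplification of a 4x pattern).
SAMPLE_OFFSETS = (0.125, 0.375, 0.625, 0.875)
ALPHA_THRESHOLD = 0.5  # assumed alpha-test cutoff


def bar_alpha(x):
    """Hypothetical alpha-tested texture: opaque (1.0) left of x = 2.6, transparent after."""
    return 1.0 if x < 2.6 else 0.0


def coverage_alpha_per_pixel(alpha_fn, px):
    """MSAA-style: one alpha test at the pixel center decides for every sample."""
    kept = alpha_fn(px + 0.5) >= ALPHA_THRESHOLD
    return 1.0 if kept else 0.0


def coverage_alpha_per_sample(alpha_fn, px):
    """Supersampled transparency: the alpha test runs at each sample position."""
    kept = sum(1 for offset in SAMPLE_OFFSETS
               if alpha_fn(px + offset) >= ALPHA_THRESHOLD)
    return kept / len(SAMPLE_OFFSETS)
```

For pixel 2, which the texture's edge cuts through, the per-pixel test keeps the whole pixel (coverage 1.0, a hard edge), while the per-sample test keeps two of four samples (coverage 0.5, a smoothed edge).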

With the 5800 series, things have finally come full circle for AMD. Building on the SSAA implementation it uses for Adaptive Anti-Aliasing, AMD has re-implemented SSAA as a full-screen anti-aliasing mode. Now gamers can once again access the higher-quality anti-aliasing offered by a pure SSAA mode, instead of being limited to the best of what MSAA + AAA could do.

Ultimately, the inclusion of this feature on the 5870 comes down to two matters: the card has lots and lots of processing power to throw around, and shader aliasing was the last obstacle that MSAA + AAA could not solve. With the reintroduction of SSAA, AMD is not dropping or downplaying its existing MSAA modes; rather, SSAA is offered as another option, one geared particularly toward older games.
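A rough way to see why SSAA needs so much processing power is to count pixel shader evaluations. The sketch below uses an idealized model (an assumption, not a measurement): MSAA shades about once per pixel, while SSAA shades once per sample. It ignores overdraw and the extra shading MSAA does where several triangles meet inside one pixel, so real costs will differ; `shader_evaluations` is a hypothetical helper.

```python
def shader_evaluations(width, height, samples_per_pixel, supersampled, fps=1):
    """Idealized pixel-shader evaluations per second (per frame when fps=1).

    MSAA is modeled as one evaluation per pixel regardless of sample count;
    SSAA is modeled as one evaluation per sample.
    """
    pixels = width * height
    per_frame = pixels * samples_per_pixel if supersampled else pixels
    return per_frame * fps
```

At 1920x1200 with 4x AA this model gives 2,304,000 evaluations per frame for MSAA and 9,216,000 for SSAA, four times the shading work, or 552,960,000 evaluations per second at 60 fps. That multiplier is far easier to absorb in an older, lighter game than in a current shader-heavy title.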

“Older games” is an important keyword here, as there is a catch to AMD’s SSAA implementation: It only works under OpenGL and DirectX9. As we found out in our testing and after much head-scratching, it does not work on DX10 or DX11 games. Attempting to utilize it there will result in the game switching to MSAA.

When we asked AMD about this, they cited the fact that DX10 and later give developers much greater control over anti-aliasing patterns, and that using SSAA with these controls may create incompatibility problems. Furthermore, the games that can best afford SSAA's performance cost are older titles, making SSAA a more reasonable choice for older games than for newer ones. We're told that AMD will "continue to investigate" implementing a proper version of SSAA for DX10+, but it's not something we're expecting any time soon.

Unfortunately, in our testing of AMD's SSAA mode, there are clearly a few kinks to work out. Our first AA image quality test was going to be the railroad bridge at the beginning of Half Life 2: Episode 2. That scene is full of aliased metal bars, cars, and trees. However, as we're going to lay out in this screenshot, while AMD's SSAA mode eliminated the aliasing, it also gave the entire image a smooth makeover: too smooth. SSAA isn't supposed to blur things; it's only supposed to make the image smoother by removing all aliasing in geometry, shaders, and textures alike.

As it turns out, this is a freshly discovered bug in AMD's SSAA implementation that affects newer Source-engine games. Presumably we'd see something similar in the rest of The Orange Box, and possibly other HL2 games. This is an unfortunate engine to have a bug in, since Source-engine games tend to be heavily CPU-limited anyhow, making them perfect candidates for SSAA. AMD is hoping to have a fix out for this bug soon.

“But wait!” you say. “Doesn’t NVIDIA have SSAA modes too? How would those do?” And indeed you would be right. While NVIDIA dropped official support for SSAA a number of years ago, it has remained as an unofficial feature that can be enabled in Direct3D games, using tools such as nHancer to set the AA mode.

Unfortunately NVIDIA’s SSAA mode isn’t even in the running here, and we’ll show you why.

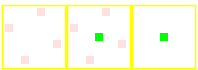

5870 SSAA

GTX 280 MSAA

GTX 280 SSAA

At the top we have DX9 FSAA Viewer's view of ATI's 4x SSAA mode. Notice that it's a rotated grid with 4 geometry samples (red) and 4 texture samples. Below that we have NVIDIA's 4x MSAA mode, a rotated grid with 4 geometry samples and a single texture sample. Finally we have NVIDIA's 4x SSAA mode, an ordered grid with 4 geometry samples and 4 texture samples. For reasons that we won't delve into, rotated grids are a better layout than ordered grids from a quality standpoint. This is why early implementations of AA using ordered grids were dropped in favor of rotated grids, and why no one uses ordered grids for MSAA these days.
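One commonly cited reason rotated grids win can be shown with a little arithmetic: a 4-sample ordered grid has only two distinct horizontal and two distinct vertical sample positions, so a nearly vertical or nearly horizontal edge, where stair-stepping is usually most visible, can only produce three coverage levels per pixel, while a rotated grid offers five. The sample positions below are assumptions (a textbook 2x2 ordered grid and one common rotated-grid layout), not necessarily the exact patterns AMD or NVIDIA use.

```python
# Assumed sample positions within a pixel, in [0, 1) x [0, 1).
ORDERED_GRID = [(0.25, 0.25), (0.75, 0.25), (0.25, 0.75), (0.75, 0.75)]
ROTATED_GRID = [(0.375, 0.125), (0.875, 0.375), (0.125, 0.625), (0.625, 0.875)]


def coverage_levels_vertical_edge(pattern, steps=64):
    """Distinct coverage values a pixel can take as a vertical edge sweeps across it.

    A sample counts as covered when it lies left of the edge (x < edge_x).
    """
    levels = set()
    for i in range(steps + 1):
        edge_x = i / steps
        covered = sum(1 for x, _ in pattern if x < edge_x)
        levels.add(covered / len(pattern))
    return sorted(levels)
```

`coverage_levels_vertical_edge(ORDERED_GRID)` yields `[0.0, 0.5, 1.0]`, while `coverage_levels_vertical_edge(ROTATED_GRID)` yields `[0.0, 0.25, 0.5, 0.75, 1.0]`: with the same four samples, the rotated grid gives a near-vertical edge twice as many intermediate shades to blend with.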

Furthermore, when actually using NVIDIA's SSAA mode, we ran into some definite quality issues with HL2: Ep2. We're not sure if these are related to the use of an ordered grid or not, but it's a possibility we can't ignore.

If you compare the two shots, with MSAA 4x the scene is almost perfectly anti-aliased, except for some trouble along the bottom/side edge of the railcar. If we switch to SSAA 4x that aliasing is solved, but we have a new problem: all of a sudden a number of fine tree branches have gone missing. While MSAA properly anti-aliased them, SSAA anti-aliased them right out of existence.

For this reason we will not be taking a look at NVIDIA's SSAA modes. Besides the fact that they're unofficial in the first place, the use of an ordered grid and the problems in HL2 make it clear that they're not suitable for general use.

327 Comments

SiliconDoc - Wednesday, September 30, 2009

No, it's the fact you tell LIES, and always in ATI's favor, and you got caught, over and over again. That is WHAT HAS HAPPENED.

Now you catch hold of your senses for a moment, and supposedly all the crap you spewed is "ok".

SiliconDoc - Friday, September 25, 2009

Once again, all that matters to YOU is YOUR games for PC, and ONLY top sellers, and only YOUR OPINION on PhysX. However, after you claimed only 2 games, you went on to bloviate about Havok.

Now you've avoided that issue entirely. Am I to assume, as you have apparently WISHED and thrown about, that HAVOK does not function on NVidia cards? NO, QUITE THE CONTRARY!

--

What is REAL, is that NVidia runs Havok AND PhysX just fine, and not only that but ATI DOES NOT.

Now, instead of supporting BOTH, you have singled out your object of HATRED, and spewed your infantile rants, your put downs, your empty comparisons (mere statements), then DEMAND that I show PhysX is worthwhile, with "golden sellers". LOL

It has been 1.5 years or so since the Ageia acquisition, and of course, game developers turning anything out in just 6 short months are considered miracle workers.

The real problem, of course, for you is ATI does not support PhysX, and when a rogue coder made it happen, NVidia supported him, while ATI came in and crushed the poor fella.

So much for "competition", once again.

Now, I'd demand you show where HAVOK is worthwhile, EXCEPT I'm not the type of person that slams and reams and screams against "a perceived enemy company" just because "my favorite" isn't capable, and in that sense, my favorite IS CAPABLE.

Now, PhysX is awesome, it's great, it's the best there is, and that may or may not change, but as for now, NO OTHER demonstrations (YouTube and otherwise) can match it.

That's just a sad fact for you, and with so many maintaining your biased and arrogant demand for anything else, we may have another case of VHS instead of BETA, which of course, you would heartily celebrate, no matter how long it takes to get there.

LOL

Yes, it is funny. It's just hilarious. A few months ago before Mirror's Edge and Anand falling in love with PhysX in it, admittedly, in the article he posted, we had the big screamers whining ZERO.

Well, now a few months later you are whining TWO.

Get ready to whine higher. Yes, have you read about the uptick in support? LOL

You people are really something.

Oh, I know, CUDA is a big fat zero according to you, too.

(Please pass along your thoughts to higher education universities here in the USA, and the federal government national lab research facilities. Thanks.)

SiliconDoc - Thursday, September 24, 2009

Yes, another excuse monger. So you basically admit the text is biased, and claim all readers should see the charts and go by those. LOL. So when the text is biased, as you admit, how is it that the rest, the very core of the review, is not? You won't explain that either.

Furthermore, the assumption that competition leads to something better in technology for videocards quicker, fails the basic test that in terms of technology, there is a limit to how fast it proceeds forward, since scientific breakthroughs must come, and often don't come, for instance, new energy technologies, still struggling after decades to make a breakthrough, with endless billions spent, and not much to show for it.

Same here with videocards, there is a LIMIT to the advancement speed, and competition won't be able to exceed that limit.

Furthermore, I NEVER said prices won't be driven down by competition, and you falsely asserted that notion to me.

I DID however say, ATI ALSO IS KNOWN FOR OVERPRICING. (or rather unknown by the red fans, huh, even said by omission to have NOT COMMITTED that "huge sin", that you all blame only Nvidia for doing.)

So you're just WRONG once again.

Begging the other guy to "not argue" then mischaracterizing a conclusion from just one of my statements, ignoring the points made that prove your buddy wrong period, and getting the body of your idea concerning COMPETITION incorrect due to technological and scientific constraints you fail to include, is no way to "argue" at all.

I sure wish there was someone who could take on my points, but so far none of you can. Every time you try, more errors in your thinking are easily exposed.

A MONOPOLY, let's take for instance the old OIL BARONS, was not stagnant, and included major advances in search and extraction, as Standard Oil history clearly testifies to.

Once again, the "pat" cliché is good for some things (for instance, competing drug stores), or other such things that don't involve inaccessible technology that has not been INVENTED yet.

The fact that your simpleton attitude failed to note such anomalies is clearly evidence that, once again, thinking "is not required" for people like you.

Once again, the rebuttal has failed.

kondor999 - Thursday, September 24, 2009

This is just sad, and I'm no fanboy. I really wanted a 5870, but only with 100% more speed than a GTX285, not a lousy 33%. Definitely not worth me upgrading, so I guess ATI saved me some money. I'm certain that my 3 GTX280's in Tri-SLI will destroy 2 5870's in CF, although with slightly less compatibility (an important advantage for ATI, but not nearly enough).

Moricon - Thursday, September 24, 2009

I have been a regular at Tomshardware for a while now, and keep coming back to AnandTech time and again to read reviews I have already read on other sites, and this one is by far the best I have read so far (guru3d, toms, firing squad, and many others).

The 5870 looks awesome, but from an upgrade point of view, I guess my system will not really benefit from moving on from E7200 @ 3.8GHz, 4GB 1066, HD4870 @ 850MHz/4400MHz at 1680x1050.

Such a shame that I don't have a larger monitor at the moment or I would have jumped immediately.

Looks like the path is Q9550 and 5870 and a 1920x1200 monitor or larger to make sense, then might as well go i7, i5, where do you stop?

Well done ATI, well done! But if history follows the Nvidia 3xx chip will be mindblowing compared!

djc208 - Thursday, September 24, 2009

I was most surprised at how far behind the now 2-generation-old 3870 is (at least on these high-end games). Guess my next upgrade (after an SSD) should be a 5850 once the frenzy dies away.

JonnyDough - Thursday, September 24, 2009

They could probably use a 1.5 GB card. :(

mapesdhs - Wednesday, September 23, 2009

Ryan, any chance you could run Viewperf or other pro-app benchmarks, please? Some professionals use consumer hardware as a cheap way of obtaining reasonable performance in apps like Maya, 3DS Max, ProE, etc., so it would be most interesting to know how the 5870 behaves when running such tests, and how it compares to Quadro and FireGL cards.

Pro-series boards normally have better performance for ops such as antialiased lines, via different drivers and/or different internal firmware optimisations. Someday, I figure, perhaps a consumer card will be able to match a pro card purely by accident.

Ian.

AmdInside - Wednesday, September 23, 2009

Sorry if this has already been asked, but does the 5870 support audio over DisplayPort? I am holding out for a card that does such a thing. I know it does it for HDMI but also want it to do it for DisplayPort.

VooDooAddict - Wednesday, September 23, 2009

Been waiting for a single gaming-class card that can power more than 2 displays for quite some time. (The more than 2 monitors not necessarily for gaming.) The fact that this performs a noticeable bit better than my existing 4870 512MB is a bonus.