The SSD Relapse: Understanding and Choosing the Best SSD

by Anand Lal Shimpi on August 30, 2009 12:00 AM EST - Posted in Storage

Live Long and Prosper: The Logical Page

Computers are all about abstraction. In the early days of computing you had to write assembly code to get your hardware to do anything. Programming languages like C and C++ created a layer of abstraction between the programmer and the hardware, simplifying the development process. The key word there is simplification. You can be more efficient writing directly for the hardware, but it’s far simpler (and much more manageable) to write high level code and let a compiler optimize it.

The same principles apply within SSDs.

The smallest writable location in NAND flash is a page; that doesn’t mean that it’s the largest size a controller can choose to write. Today I’d like to introduce the concept of a logical page, an abstraction of a physical page in NAND flash.

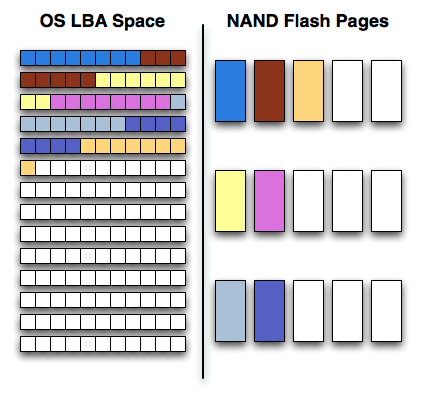

Confused? Let’s start with a (hopefully, I'm no artist) helpful diagram:

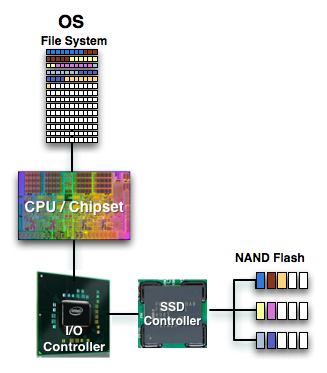

On one side of the fence we have how the software views storage: as a long list of logical block addresses. It’s a bit more complicated than that since a traditional hard drive is faster at certain LBAs than others but to keep things simple we’ll ignore that.

On the other side we have how NAND flash stores data, in groups of cells called pages. These days a 4KB page size is common.

In reality there’s no fence that separates the two, rather a lot of logic, several busses and eventually the SSD controller. The latter determines how the LBAs map to the NAND flash pages.

The most straightforward way for the controller to write to flash is by writing in pages. In that case the logical page size would equal the physical page size.
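To make that mapping a little more concrete, here is a minimal Python sketch of how an LBA lands in a logical page. It is only an illustration, not how any particular controller works: the 512-byte LBA size is an assumption (the article doesn't state one), and the name `lba_to_logical_page` is made up for this example.

```python
SECTOR_BYTES = 512  # assumed size of one LBA; not stated in the article
KIB = 1024


def lba_to_logical_page(lba, logical_page_bytes):
    """Return (logical page index, byte offset inside that page) for an LBA."""
    byte_address = lba * SECTOR_BYTES
    return divmod(byte_address, logical_page_bytes)


# With a 4KB logical page (equal to the physical page size), eight
# consecutive 512-byte LBAs share one logical page.
pages = [lba_to_logical_page(lba, 4 * KIB)[0] for lba in (0, 7, 8)]
print(pages)  # [0, 0, 1]
```

The logical page size is a parameter here so the same function can be reused with larger logical pages.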

Unfortunately, there’s a huge downside to this approach: tracking overhead. If your logical page size is 4KB then an 80GB drive will have no less than twenty million logical pages to keep track of (20,971,520 to be exact). You need a fast controller to sort through and deal with that many pages, a lot of storage to keep tables in and larger caches/buffers.

The benefit of this approach however is very high 4KB write performance. If the majority of your writes are 4KB in size, this approach will yield the best performance.

If you don’t have the expertise, time or support structure to make a big honkin controller that can handle page level mapping, you go to a larger logical page size. One such example would involve making your logical page equal to an erase block (128 x 4KB pages). This significantly reduces the number of pages you need to track and optimize around; instead of 20.9 million entries, you now have approximately 163 thousand. All of your controller’s internal structures shrink in size and you don’t need as powerful of a microprocessor inside the controller.
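The entry counts above are easy to reproduce. The short Python sketch below assumes, as the 20,971,520 figure implies, that 80GB means 80 x 2^30 bytes; the function name `logical_page_count` is just a label for this example.

```python
KIB = 1024
GIB = 1024 ** 3


def logical_page_count(capacity_bytes, logical_page_bytes):
    """Number of logical pages the controller has to track."""
    return capacity_bytes // logical_page_bytes


capacity = 80 * GIB            # 80GB drive, counted in binary units
page = 4 * KIB                 # one physical NAND page
erase_block = 128 * page       # 128 x 4KB pages = 512KB

print(logical_page_count(capacity, page))         # 20971520
print(logical_page_count(capacity, erase_block))  # 163840
```

Going from page-sized to block-sized logical pages shrinks the table by a factor of 128, which is where the savings in controller resources come from.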

The benefit of this approach is very high large file sequential write performance. If you’re streaming large chunks of data, having big logical pages will be optimal. You’ll find that most flash controllers that come from the digital camera space are optimized for this sort of access pattern where you’re writing 2MB - 12MB images all the time.

Unfortunately, the sequential write performance comes at the expense of poor small file write speed. Remember that writing to MLC NAND flash already takes 3x as long as reading, but writing small files when your controller needs large ones worsens the penalty. If you want to write an 8KB file, the controller will need to write 512KB (in this case) of data since that’s the smallest size it knows to write. Write amplification goes up considerably.
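A rough way to put a number on that penalty is sketched below in Python. It is a simplified model with assumptions of its own: the write is taken to start on a logical page boundary, and nothing else the controller does (such as cleaning up old data) is counted. The function names are illustrative only.

```python
KIB = 1024


def bytes_written(request_bytes, logical_page_bytes):
    """Bytes the controller writes for one request, rounded up to whole logical pages."""
    pages = -(-request_bytes // logical_page_bytes)  # ceiling division
    return pages * logical_page_bytes


def write_amplification(request_bytes, logical_page_bytes):
    """Ratio of bytes actually written to bytes the host asked to write."""
    return bytes_written(request_bytes, logical_page_bytes) / request_bytes


print(write_amplification(8 * KIB, 512 * KIB))  # 64.0
print(write_amplification(8 * KIB, 4 * KIB))    # 1.0
```

Under these assumptions the 8KB write costs 64 times its own size with a 512KB logical page, and nothing extra with a 4KB one.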

Remember the first OCZ Vertex drive based on the Indilinx Barefoot controller? Its logical page size was equal to a 512KB block. OCZ asked for a firmware that enabled page level mapping and Indilinx responded. The result was much improved 4KB write performance:

| Iometer 4KB Random Writes, IOqueue=1, 8GB sector space | Logical Block Size = 128 Pages | Logical Block Size = 1 Page |
| --- | --- | --- |
| Pre-Release OCZ Vertex | 0.08 MB/s | 8.2 MB/s |

295 Comments


jasperjones - Monday, September 7, 2009 - link

Bit of a late reply to your question but a single overwrite with random data is fully secure. At least for HDDs there have been tests from academics that tried to recover data from a HDD after wiping it via "dd if=/dev/urandom bs=1M". They weren't able to recover anything.

smjohns - Thursday, September 3, 2009 - link

Great 3rd installment and I have learnt more about SSDs from this site than any other!!

Whilst there is no doubt that Intel G2 definitely remains the SSD drive of choice (assuming you have the cash), why did Intel choose not to address the poor sequential write speeds? In the above tests it seems no better than a standard 5400 hard disc....which is a little poor. I accept it is blisteringly fast for everything else but not sure why this was ignored / shelved?

Is it that it is currently impossible to build a drive that can be fast at both large sequential and small random file writes? Or is it that the G2 was always intended to be an incremental improvement over the G1 (fixing some of its shortcomings) rather than a complete top to bottom redesign of the unit, which may have led to this being addressed? As such could a future firmware release improve these speeds....or is it definitely a hardware restriction?

I have to say I am personally torn between the OCZ Vertex and Intel G2 at the moment. Whilst I accept the G2 seems to be the quicker drive in the real world, I was disappointed that they did not improve the sequential write speeds and in addition to this, they do seem a little slow with support. The OCZ on the other hand seems a bit of an all rounder and not that much slower than the G2. In addition to this I REALLY like OCZ's approach to supporting these drives and they really seem to listen to their customers feedback.

One final question....when installing an SSD into a laptop with a fresh Windows 7 install, is there now any need for special formatting / OS settings to ensure best drive performance / life? There is a lot of stuff on the web but it all seems particularly relevant for XP and partially Vista but I was under the impression that Win7 was designed to work with SSDs out of the box?

derkurt - Thursday, September 3, 2009 - link

Then, there is another reason why SSDs are not covered extensively by the mainstream press: They are too complicated.

Let's say you want to buy a hard disk. You could just buy any hard disk, since the difference between good and bad ones is fairly small. If you buy an ExcelStor, for example, you will still get something which works and delivers sufficient performance compared to faster models. Unless you are looking at the server market, there is not that much difference at all. Some models have larger caches, faster seek times and higher transfer rates due to higher rotational speeds, but the main difference is capacity, so the market is transparent.

Now look at the SSD market: The difference between good and bad ones is huge, incredibly huge. The Intel G2 is lightning fast while some old JMicron-based drives are much worse than a 5-year-old hard disk. You can't just go and buy "an SSD". You need to be informed:

What controller is the SSD using? Do I have to align my partitions, or is my operating system detecting the SSD and doing that for me? Does my OS support TRIM? Does my AHCI driver support TRIM? Does my SSD support TRIM? Does my current firmware revision support TRIM, and if so, do I need to flash a beta firmware which still has some serious flaws in it? How is the performance degradation after heavy use? What about random write access times (very big differences here which strongly affect real world performance)?

If you don't care about the above, chances are you will get a crappy drive. And even if you do, you'll have a hard time finding out some essential facts (thanks Anand!), since the manufacturers aren't exactly putting them on their webpages. They will tell you the capacity and the maximum linear transfer rates. That's all, basically. You will have to do some exhaustive googling to investigate what controller the drive is using, whether the firmware supports TRIM, and so on. Even Intel is holding back with detailed information, though they wouldn't have to, since they have nothing to hide as their drives are the fastest in nearly all aspects.

I don't know for sure why the manufacturers are making a secret out of essential information, even if they can shine there. But there's one thing I do know: Only when people don't need to care about controllers, OS support, firmwares etc. anymore, SSDs are ready to hit the mainstream.

smjohns - Thursday, September 3, 2009 - link

I fully agree with you here and it is one of the reasons why I have not taken the plunge yet. I am definitely holding out for Win7 and then upgrade my laptop with both that and an SSD.

Even after reading these great articles, whilst I now know which drives support Trim and the fact that none of them have this functionality fully enabled and will require a future firmware update ("shudders"), the SSD market is indeed a confusing place to be. And that's before you consider having to align partitions (what the heck is this) and the various settings in the BIOS / OS you need to enable / disable to ensure your lovely new drive does not die within a few weeks / months / years.

If the industry really does want widespread adoption of these new drives, it needs to resolve these issues and come up with some easy and readily available standards we can all follow. I just hope Win7 is as SSD friendly as we are led to believe.

derkurt - Thursday, September 3, 2009 - link

AFAIK, "aligning" partitions means that the logical layout of blocks has to match the physical block assignment on the SSD in a certain way, otherwise writing one logical block on the filesystem level may result in an unnecessary I/O operation covering two blocks on the SSD (because the logical block spans the boundaries between two physical blocks). But don't ask me for details, I haven't dug into that yet.

According to MS, Windows 7 detects SSDs and applies a proper alignment scheme automatically during installation of the OS. If you'd like to install a Linux distribution or an older version of Windows, you'll probably have to take care of that by yourself, unfortunately.

I guess there aren't that many, you should just turn on AHCI support - the drive will work without it, but you need it for enabling NCQ, which can give you a 5-10% performance boost. However, you need to do this before the OS installation, otherwise your OS might cease to boot. Oh, and also you may have to temporarily turn off AHCI support when flashing a new firmware, because some flashing tools struggle if AHCI is turned on.

I hope that with the advent of Windows 7 going into public sale, SSD manufacturers will start to ship reliable, TRIM-enabled firmware revisions. If so, you shouldn't have to think about all these issues anymore as long as you are using Windows 7.

derkurt - Thursday, September 3, 2009 - link

I was one of the lucky guys to get an Intel G2 drive before they stopped shipping it for a while, and I can absolutely confirm everything Anand states about performance.

However, I still wonder why there is relatively little competition out there. At least in theory, it takes far less know-how to produce a good SSD than is required to manufacture reliable hard disk drives - think about the expensive and complicated fine mechanics involved. Actually, there are some Chinese manufacturers most of us have never heard of, such as RunCore, which manage to deliver SSDs of at least usable quality.

Where is the Samsung drive that blows the competition away? What about Seagate, Western Digital, Hitachi? Are they just watching from the sidelines while SSDs from some young and small companies are cannibalizing their markets?

At the time the shift from CRTs to LCDs was taking place, German premium TV manufacturer Loewe estimated that it would take many years until CRTs became obsolete. But the change happened so fast it nearly blew off their business before they finally started to ship high-quality LCDs in response to market demand. It seems to me that the very same thing is happening again now.

The G2 is gorgeous, no doubt about it. But the price point is still way above being ready to hit the mainstream. Computers are simply not important enough to Joe Sixpack to spend 200+ USD for storage solutions only, even if it _really_ accelerates the machine (something most people won't believe until they experienced it themselves), and especially considering the low capacities offered by SSDs so far.

If something as great as the G2 can be offered for 240 USD while being sold to a relatively small audience, what prices can we expect to see if the mainstream is hit? If USB sticks can be sold for less than 5 USD, what is the fundamental problem at reaching a price point of 60 USD for high-quality SSDs? Of course, SSDs contain much more intelligence than USB pen drives: Multi-channel controllers with sophisticated strategies, caches, and so on, but the main difference should be the effort required to engineer these devices, rather than the cost for building them.

I am a bit frustrated that while there are SSDs available now which deliver superior performance, they still cover a small niche of enthusiasts (and there are probably a lot more people who would want to buy one if they only knew that these things exist), and the traditional hard drive manufacturers fail to join the game. The most important reason why Intel priced the G2 at a more affordable level is probably not the competition by Indilinx drives, but rather the idea that they can gain more profit by selling many more drives, even if they are sold at a lower price, as long as production costs are fairly small.

Is Samsung sleeping, or are they just fearing that the shift to SSDs might destroy their mechanical hard drive business? I doubt that they don't have engineers capable of creating SSDs which deliver a performance comparable to Intel's drives. Maybe the mediocre performance of their SSDs is part of a strategy, which says that SSD development shouldn't be pushed too fast until the rest of the market is really forcing them to do so.

Companies such as Apple need to sell good SSDs with their computers, by default. Why can't premium PC manufacturers like Apple sell their hardware with a G2 drive, while they are offering similarly expensive CPUs? If you are spending the 240 USD for a CPU upgrade instead, I'd take every bet that you were unable to feel a comparable performance gain. It's a shame that PC sellers are neglecting hard drive performance while at the same time stressing the CPU power of their systems in their advertisements. Only if Seagate & Co. realize that they are losing a large and growing market share by not joining the SSD race, prices will drop. So far, they just don't care about some hardware geeks like us.

pepito - Monday, November 16, 2009 - link

There are a bunch of companies selling SSDs already, it's just that you don't know where to find them, and most reviewers only care about big players, such as Intel or Samsung.

If you check, for example, http://kakaku.com/pc/ssd/ you can see there are currently 24 manufacturers listed there (use Google Translate, as it's in Japanese).

Some you probably never heard of: MTRON, Greenhouse, Buffalo, CFD, Wintec, PhotoFast, etc.

iwodo - Thursday, September 3, 2009 - link

I have trouble understanding WHY Apple uses Samsung's CRAPPY SSDs like everyone else when they could easily make their own.

An SSD, like all Indilinx drives, is nothing more than flash chips soldered onto a PCB with an Indilinx core. Apple is already the largest flash buyer in the world; they probably buy the cheapest flash memory on the market. (Intel and Samsung of course don't count since they make the flash themselves.) Building an SSD themselves would be adding $20 on top of 8 chips of 64Gb flash.

Why they don't build one and use it across its Macs is beyond me, since even the firmware is the same as everyone else's.

pepito - Monday, November 16, 2009 - link

For the same reason that Dell doesn't make their own batteries: it's not their business.

Borski - Thursday, September 3, 2009 - link

How does G.Skill Falcon compare with the reviewed units? I've seen very good reviews (close to Vertex) elsewhere but they don't mention things like used vs new performance, or power consumption.

I'm considering buying the G.Skill Falcon 64G, which is cheaper than Agility in some places.