Revisiting Linux Part 1: A Look at Ubuntu 8.04

by Ryan Smith on August 26, 2009 12:00 AM EST - Posted in Linux

CPU Benchmarks

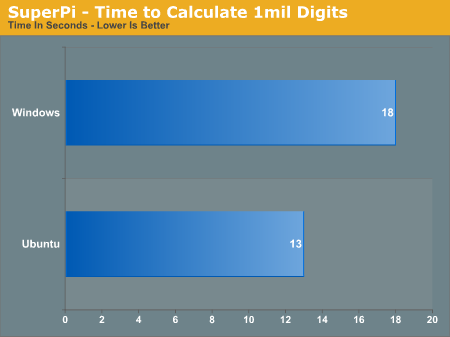

We’ll start our short look at Ubuntu’s performance with our CPU-intensive benchmarks. Up first is SuperPi, a single-threaded pi-calculating benchmark. Here we time how long it takes to calculate Pi to 1 million digits.

We ran this test several more times than usual just to make sure we weren’t seeing any kind of error. The Linux version of SuperPi really is about 30% faster than the Vista version. Keep this in mind; it will be an important point later.
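To make the repeated-run methodology concrete, here is a minimal sketch of timing a single-threaded pi calculation. It is not SuperPi's code: the Machin-formula routine, the function names, and the default run count are all assumptions chosen for illustration.

```python
import time


def arctan_inv(x, unity):
    """Return arctan(1/x) scaled by `unity`, using only integer arithmetic."""
    total = term = unity // x
    x_squared = x * x
    divisor = 1
    sign = -1
    while term:
        term //= x_squared
        divisor += 2
        total += sign * (term // divisor)
        sign = -sign
    return total


def pi_digits(digits):
    """Return pi as an integer scaled by 10**digits (Machin's formula)."""
    guard = 10  # extra working digits to absorb truncation error
    unity = 10 ** (digits + guard)
    pi_scaled = 4 * (4 * arctan_inv(5, unity) - arctan_inv(239, unity))
    return pi_scaled // 10 ** guard


def best_time(func, *args, runs=5):
    """Call func(*args) `runs` times and return the fastest wall-clock time."""
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        func(*args)
        timings.append(time.perf_counter() - start)
    return min(timings)


def relative_speedup(baseline_seconds, candidate_seconds):
    """Return how many times faster the candidate is than the baseline."""
    return baseline_seconds / candidate_seconds
```

Calling `best_time(pi_digits, 10000)` on each platform and passing the two results to `relative_speedup` gives a ratio that can be compared across runs. Taking the fastest of several runs is one common way to reduce noise from background activity.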

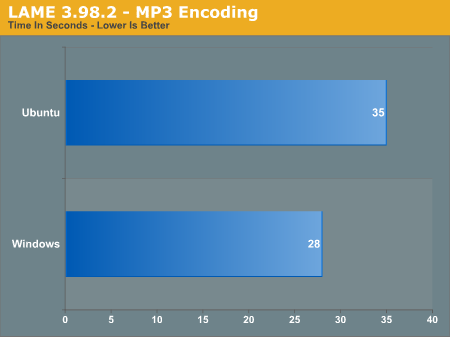

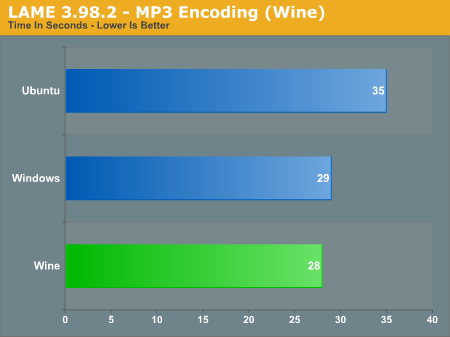

Meanwhile the situation for LAME is inverted. Vista outscores the Linux version by nearly 20%.

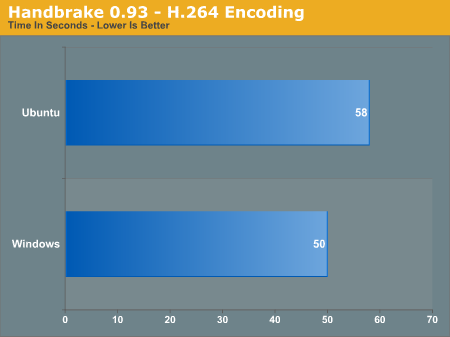

Using the cross-platform, x264-based HandBrake for our video encoding test, Vista once again pulls ahead of Linux.

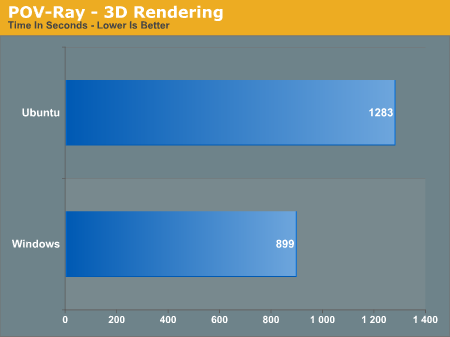

Once more Vista is ahead by a large margin.

From what we can tell, there’s little-if-any innate performance advantage to Vista or Linux in these benchmarks. Our working theory is that the performance difference comes down to the compiler used. Many Linux applications are compiled with GCC, while for Windows it’s either the Visual Studio compiler, or Intel’s own compiler (which is also available for Linux). There’s also a matter of compiler settings, as we saw in our quick breakout of Firefox benchmarks.

Meanwhile SuperPi uses a lot of hand-rolled code, although we’re still not sure why the Linux version is outperforming the Vista version by as much as it is.

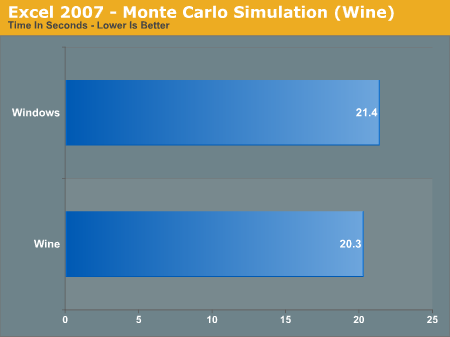

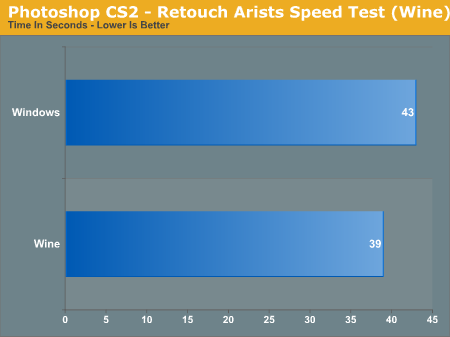

To shed a little more light on this idea of compiler performance, we have a few benchmarks of Windows application performance under Ubuntu through Wine.

Here we see a most amazing thing: Ubuntu is outperforming Windows at running Windows applications! As we’ve removed the influence of compilers, the Photoshop results are particularly interesting. From what we can tell it’s normally as fast under Linux as it is under Vista; however, there seems to be a short gap of low CPU usage when running it under Vista that doesn’t occur when running it under Ubuntu. As a result Ubuntu finishes a few seconds earlier.

There are a number of conditional cases that mean that applications running under Wine don’t always match or beat Windows performance, but in our tests there’s no performance hit to using Wine to run Windows applications.

These results also lend a great deal of support to the idea that there’s a significant difference in performance between the two operating systems due to their compilers. This goes particularly for the LAME benchmark, where the performance gap melts away under Wine. This is something we’re going to have to look into in the future.

195 Comments


apt1002 - Thursday, August 27, 2009

Excellent article, thank you. I will definitely be passing it on.

I completely agree with superfrie2 about the CLI. Why resist it?

Versions: I, like you, originally plumped for Hardy Heron because it is an LTS version. I recently changed my mind, and now run the latest stable Ubuntu. As a single user, at home, the benefits of a long-term unchanging OS are pretty small, and in the end it was more important to me to have more recent versions of software. Now if I were administering a network for an office, it would be a different matter...

Package management: Yes, this is absolutely the most amazing part of free software! How cool is it to get all your software, no matter who wrote it, from one source, which spends all its time diligently tracking its dependencies, checking it for compatibility, monitoring its security flaws, filtering out malware, imposing sensible standards, and resisting all attempts by big corporations to shove stuff down your throat that you don't want, all completely for free? And you can upgrade *everything* to the latest versions, at your own convenience, in a single command. I still don't quite believe it.

Unpackaged software: Yes, I agree, unpackaged software is not nearly as good as packaged software. It's non-uniform, may not have a good uninstaller, might require me to install something else first, might not work, and might conceal malware of some sort. That's no different from any other OS. However, it's not as bad as you make out. There *is* a slightly more old-fashioned way of installing software: tarballs. They're primitive, but they are standard across all versions of Unix (certainly all Linux distributions), they work, and pretty much all Linux software is available in this form. It never gets worse than that.

Games: A fair cop. Linux is bad for games.

GPUs: Another fair cop. I lived with manually installing binary nVidia drivers for five years, but life's too short for that kind of nonsense. These days I buy Intel graphics only.

40 second boot: More like 20 for me on my desktop machine, and about 12 on my netbook (which boots off SSD). After I installed, I spent a couple of minutes removing software I didn't use (e.g. nautilus, gdm, and most of the task bar applets), and it pays off every time I boot.

Separate menu bar and task bar: I, like you, prefer a Windows-ish layout with everything at the bottom, so after I installed I spent a minute or two dragging-and-dropping it all down there.

GregE - Wednesday, August 26, 2009

I use GNU/Linux for 100% of my needs, but then I have for years and my hardware and software reflect this. For example I have a Creative Zen 32GB SSD music player and only buy DRM-free MP3s. In Linux I plug it in and fire up Amarok and it automatically appears in the menus and I can move tracks back and forth. I knew this when I bought it; I would never buy an iPod as I know it would make life difficult.

The lesson here is that if you live in a Linux world you make your choices and purchases accordingly. A few minutes with Google can save you a lot of hassle when it comes to buying hardware.

There are three web sites any Ubuntu neophyte needs to learn.

1 www.medibuntu.org where multimedia hassles evaporate.

2 http://ubuntuguide.org/wiki/Ubuntu:Jaunty the missing manual where you will find the solution to just about any issue.

3 http://www.getdeb.net/ where new versions of packages are published outside of the normal repositories. You need to learn how to use the gdebi installer, but essentially you download a deb and double-click on it.

Then there are PPA repositories for the true bleeding edge. This is the realm of the advanced user.

For a home user it is always best to keep up to date. The software is updated daily; what did not work yesterday works today. Hardware drivers appear all the time, and by sticking with LTS releases you are frozen in time. Six months is a long time, a year is ancient history. An example is USB TV sticks: buy one and plug it into 8.10 and nothing happens; plug it into 9.04 and it either just works or still does not work, but will in 9.10.

Yes it is a wild ride, but never boring. For some it is an adventure, for others it is too anarchic.

I use Debian Sid which is a rolling release. That means that there are no new versions, every day is an update that goes on forever. Ubuntu is good for beginners and the experienced; the more you learn, the deeper you can go into a world of software that exceeds 30,000 programs that are all free in both senses.

I look forward to part 2 of this article, but remember that the author is a Linux beginner, clearly technically adept but still a Linux beginner.

It all comes down to choice.

allasm - Thursday, August 27, 2009

> I use Debian Sid which is a rolling release.
> That means that there are no new versions, every day is an update that goes on forever.

This is actually one of the best things about Ubuntu and Debian - you NEVER have to reinstall your OS.

With Windows you may live with one OS for years (few manage to do that without reinstalling, but it is definitely possible) - but you HAVE to wipe everything clean and install a new OS eventually. With Debian and Ubuntu you can simply constantly upgrade and be happy. At the same time no one forces you to upgrade ALL the time, or upgrade EVERYTHING - if you are happy with, say, Firefox v2 and don't want to go to v3 because your fav skin is not there yet - just don't upgrade that one app (and decide for yourself if you need the security fixes).

Some time ago I turned on a Debian box which had been offline/turned off for 2+ years and managed to update it (to a new release) with just two reboots (one for the new kernel to take effect). That was it, it worked right after that. To be fair, I did have to update a few config files manually after that to make it flawless, but even without manual updates the OS at least booted "into" the new release. Naturally, all my user data stayed intact, as did most of the OS settings. Most (99%) programs worked as expected as well - the problematic 1% being some GUI programs not dealing well with the new X/window manager. And I had no garbage files or whatever after the update (unlike what you get if you try to upgrade WinXP to, say, WinVista).

Having said all that, I 100% agree that linux has its problems as a desktop OS (I use windows more than linux day-to-day), but I totally disagree that using one OS for a long time is a weak point of Ubuntu.

P.S. One thing I never tried is upgrading a 32-bit distro to 64-bit - I wonder if this is possible on a live OS using a package manager.

wolfdale - Wednesday, August 26, 2009

A good article but I have a few pointers.

1) More Linux distros need to be reviewed. Your "out of the box" review was informational but seemed more in tune with commercial products aimed at making a profit (i.e., is this a good buy for your money?). I, for one, used to check AnandTech.com before making a big computer item purchase. However, Linux is free to the public, so the tradeoff for the user is now how much time to invest in learning and customizing a particular distro. Multi-distro comparisons along with a few customized snapshots would help the average user decide where to spend his valuable time and effort.

2) Include Linux compatibility in hardware reviews. Like I said earlier, I once used AnandTech.com as my guide for all PC-related purchases and I have to say about 80% of the time it was correct. But try to imagine my horror about 1.5 years ago when my brand spanking new HD4850 video card refused to do anything related to 3-D on Ubuntu. I spent weeks trying to get it to work but ended up selling it and going with Nvidia. Of course it was a driver issue, but nowhere did AnandTech.com mention this other than saying it was a best buy.

Thanks for listening, I feel better now. I'm looking forward to reading your Ubuntu 9.04 review and please keep adding more linux related articles.

ParadigmComplex - Wednesday, August 26, 2009

When I first saw that there was going to be a "first time with Linux" article on AnandTech, I have to admit I was a bit worried. While the hardware reviews here are excellent, it's already something you guys are familiar with - it's not new ground, you know what to look for. I sadly expected Ryan would enter with the wrong mindset, trip over something small and end up with an unfair review like almost all "first time with Linux" reviews end up being.

Boy, was I wrong.

With only one major issue (about APT, which I explained in another post) and only a handful of little things (which I expect will be largely remedied in Part 2), this article was excellent. Pretty much every major thing that needed to be touched on was hit, and most of Ubuntu's major pluses and minuses were fairly reviewed. It's evident you really did your homework, Ryan. Very well done. I should have known better than to doubt anyone from AnandTech, you guys are brilliant :D

Fox5 - Wednesday, August 26, 2009

One last thing I forgot to say....

Good job on the article. I (and many others) would have liked to see 9.04 instead (I don't know of anyone who uses the LTS releases; those seem to be aimed at system integrators, such as Dell's netbooks with Ubuntu), but the article itself was quality.

jasperjones - Wednesday, August 26, 2009

I'd like to make one last addition in similar spirit. I appreciate this article as a generally unbiased review that covers many important aspects of a general-purpose OS.

And just to be sure: I'm not a Linux fanatic, in fact, for some reason, I'm writing up this post on Vista x64 ;)

jasperjones - Wednesday, August 26, 2009

You're right that there are historical reasons that dictate that one Linux binary might be in /usr/bin, another in /sbin or /usr/sbin, yet another one in /usr/local/bin, etc.

However, you really couldn't care less as long as the binary is in your path. Running "which foo" will then tell you the location. Furthermore, there's hardly any need to manually configure something in the installation directory. Virtually anything that can be user-configured (and there's a lot more that can than on Windows) can be configured in a file below ~ (your home). The name of the config file is usually intuitive.

But yeah, for things that you configure as admin (think X11 in /etc/X11/xorg.conf or Postgres usually somewhere under /usr/local/pgsql) you might need to know the directory. However, the admin installs the app, so he should know. Furthermore, GUIs exist to configure most admin-ish things (I don't know what it is in Ubuntu for X, but it's sax2 in SUSE; and it's pgadmin for PostgreSQL in both Ubuntu and SUSE).
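As a hedged illustration of the path lookup described in this comment, the sketch below does roughly what "which foo" reports, using Python's standard `shutil.which`. The helper names `locate` and `search_path_dirs` are hypothetical and not part of any Ubuntu tool.

```python
import os
import shutil


def locate(command):
    """Return the full path of `command` if it is found on PATH, else None."""
    return shutil.which(command)


def search_path_dirs():
    """Return the directories listed in PATH, in the order they are searched."""
    return [d for d in os.environ.get("PATH", "").split(os.pathsep) if d]
```

For example, `locate("ls")` would typically return a path such as one under /bin or /usr/bin on a Linux system, though the exact location depends on the distribution.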

ParadigmComplex - Wednesday, August 26, 2009

Again, if I may extend from what you've said:

Even though it is technically possible to reorder the directory structure, Ubuntu isn't going to do it for a variety of reasons:

First and foremost, one must remember Ubuntu is essentially just a snapshot of Debian's in-development branch (unstable aka Sid) with some polish aiming towards user-friendliness and (paid) support. Other than the user-friendly tweaks and support, Ubuntu is whatever Debian is at the time of the snapshot. And while Debian has a lot of great qualities, user-friendliness isn't one of them (hence the need for Ubuntu). Debian focuses on F/OSS principles (DFSG), stability, security, and portability - Debian isn't going to reorder everything in the name of user-friendliness.

Second, it'd break compatibility with every other Linux program out there. Despite the fact that Ryan seemed to think it's a pain to install things that aren't from Ubuntu's servers, it's quite common. If Ubuntu rearranges things, it'd break everything else from everyone else.

Third, it would be a tremendous amount of work. I don't have a number off-hand, but Ubuntu has a huge number of programs available in its repos that would have to be changed. Theoretically it could be done with a script, but it risks breaking quite a lot for no real gain. And this would have to be done every six months from the latest Debian freeze.

jasperjones - Wednesday, August 26, 2009

I disagree with the evaluation of the package manager.

First, there's a repo for almost anything. I quickly got used to adding a repo containing newer builds of a desired app and then installing via apt-get.

Second, with a few exceptions, you can just download source code and then install via "./configure; make; sudo make install." This usually works very well if, before running those commands, you have a quick look at the README and install required dependencies via apt-get (the versions of the dependencies in the package manager almost always are fine).

Third, and most importantly, you can simply update your whole Ubuntu distribution via dist-upgrade. True, you might occasionally get issues from doing that (ATI/NVIDIA driver comes to mind) but think of the convenience. You get a coffee while "sudo apt-get dist-upgrade" runs and when you get back, virtually EVERYTHING is upgraded to a recent version. Compare that with Windows, where you might waste hours to upgrade all apps (think of coming back to your parent's PC after 10 months, discovering all apps are outdated).
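The source-build sequence from the second point can be sketched as a small script. One caveat worth noting: chaining the commands with ";" runs each step even if the previous one failed, so the sketch below stops at the first failure instead. The function name `run_steps` and the `BUILD_STEPS` list are hypothetical, and this is only an illustration, not a replacement for reading the project's README.

```python
import subprocess


def run_steps(steps, cwd=None):
    """Run each command in order and stop at the first non-zero exit status.

    Returns (completed_steps, failed_step); failed_step is None on success.
    """
    completed = []
    for argv in steps:
        result = subprocess.run(argv, cwd=cwd)
        if result.returncode != 0:
            return completed, argv
        completed.append(argv)
    return completed, None


# The sequence quoted in the comment, expressed as argument lists.
BUILD_STEPS = [
    ["./configure"],
    ["make"],
    ["sudo", "make", "install"],
]
```

Calling `run_steps(BUILD_STEPS, cwd=source_dir)`, where `source_dir` is wherever the tarball was unpacked, would run the build and report which step failed, if any.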