Parsing Input in Software and the CPU Limit

Before we get into software, for the sake of sanity, we are going to ignore context switching and pretend that only the operating system kernel and the game are running, and that each always gets processor time exactly when it needs it for as long as it's needed (and that they never need it at the same time). In real-life desktop operating systems, especially on single-core processors, there will be added delay from OS background tasks and from process scheduling between our game and other tasks (which is handled by the operating system). These delays (called starvation in extreme cases) can range from a handful of nanoseconds to the microsecond level on modern systems, depending on process prioritization, what else is happening, and how the scheduler is implemented.

Once the mouse has sent its report over USB to the PC and the USB root hub receives the data, it is up to the OS (for our purposes, MS Windows) to handle the data next. Our report travels from the USB root hub over the system bus (southbridge through the northbridge to the CPU, which takes on the order of nanoseconds depending on load), is put on an input stack (in this case the Human Interface Device, or HID, stack), and a Windows OS message (WM_INPUT) is generated to let any user space software monitoring raw mouse input know that new data has arrived. Software written to take full advantage of hardware will handle the WM_INPUT message by reading the appropriate data directly from the HID stack after it gets the message that data is waiting.

This particular part of the process (checking Windows messages and handling the WM_INPUT message) happens pretty fast and should be on the order of microseconds at worst. This is a hard delay to track down, as the real time it takes depends on what the programmer actually does. Latencies here are not guaranteed by either the motherboard chipset or Windows.

Once the software has the data (after at least 1ms and some microseconds and change), it needs to do something with it. This is hugely variable, as developers can choose to handle input at any of a number of points in the process of updating the game state for the next frame. The approach that makes the most sense to me would be to run your AI based on the previous input data, step through any scripted actions, update physics per object based on last state and AI decisions, then get user data and update player state/physics based on previous state and current input.
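As a rough sketch of that ordering (not taken from any real engine), the frame update below runs AI, scripts, and physics first and samples user input last. The names `GameState`, `update_frame`, and `read_input` are hypothetical, and the "AI" and "physics" are one-line stand-ins.

```python
from dataclasses import dataclass, field


@dataclass
class GameState:
    """Hypothetical, minimal game state used only for illustration."""
    player_x: float = 0.0
    npc_x: float = 10.0
    log: list = field(default_factory=list)


def update_frame(state, previous_input, read_input, dt):
    """Advance one frame, sampling user input as late as possible."""
    # 1. AI decides using the input from the previous frame.
    npc_step = -1.0 if previous_input != 0 else 0.0
    state.log.append("ai")

    # 2. Scripted actions would run here (none in this sketch).
    state.log.append("scripts")

    # 3. Physics for non-player objects, from last state and AI decisions.
    state.npc_x += npc_step * dt
    state.log.append("physics")

    # 4. Only now read the freshest input and apply it to the player.
    current_input = read_input()
    state.player_x += current_input * dt
    state.log.append("input")

    # The caller passes this back in as previous_input next frame.
    return current_input
```

Reading input in step 4 rather than step 1 means the player update uses data that is as fresh as possible, at the cost of the AI always reacting one frame late.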

There are cases or design decisions that may require getting user input before doing some of these other tasks, so the way I would want to do it might not be practical. This whole part of the pipeline can be quite long, as highly intelligent AI and immersive physics (along with other game scripting and state updates) can require massive amounts of work. At the least we have lots of sorting, branching, and necessarily serial computations to worry about.

Depending on when input is collected and the depth and breadth of the simulation, we could see input lag increase by up to several milliseconds. This is highly game dependent, but it isn't something the end user has any control over outside of getting the fastest possible CPU (and this still isn't likely to change things in a perceivable way, as there are memory and system latencies to consider and the GPU is largely the bottleneck in modern games). Some games are designed to be highly responsive and some games are designed to be highly accurate. While always having both cranked up to 11 would be great, there are trade-offs to be made.

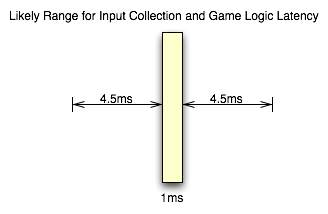

Unfortunately, that leaves us with a highly variable situation. The only way to really determine the input lag caused by game code itself is to profile the code (which requires access to the source to be done right) or ask a developer. But knowing the specifics isn't as necessary as knowing that there's not much the gamer can do to mitigate this issue. For the purposes of this article, we will consider game logic to typically add somewhere between 1ms and 10ms of input lag in modern games. This takes into account things like decoupling simulation and AI threads from rendering and having work done in parallel, among other things. If everything were done linearly, things would very likely take longer.

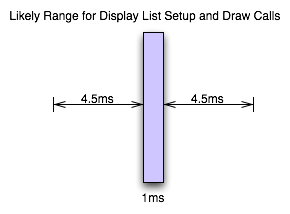

When we've got our game state updated, we then set up graphics for rendering. This involves using our game state to update geometry and display lists on the CPU side before the GPU can start work on the next frame. The speed of this step is again dependent on the implementation and can take up a good bit of time, depending on the complexity of the scene and the number of triangles required. Again, while this is highly dependent on the game and what's going on, we can typically expect something between 1ms and 10ms for this part of the process as well if we include the time it takes to upload geometry and other data to the GPU.

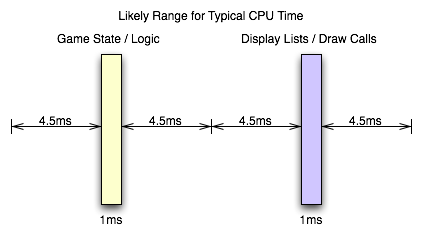

Now, all the issues we've covered on this page go into making up a key element of game performance: CPU time. The total latency from front to back in this stage of a game engine creates a CPU limit on performance. When what comes after this (rendering on the GPU) takes less time than everything up to this point, we have hit the CPU limit. We can typically see the CPU limit when we drop resolution down to something ridiculously low on a high end card without seeing any real performance gain between that and the next highest resolution.
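The resolution test described above can be written down as a small check. This is only a sketch: the function name `looks_cpu_limited` and the 5% `tolerance` are assumptions chosen for illustration, not a standard threshold, and the frame rates in the usage lines are made up.

```python
def looks_cpu_limited(fps_low_res, fps_high_res, tolerance=0.05):
    """Return True when dropping resolution gives no real FPS gain.

    tolerance is an assumed cutoff: a relative gain at or below it
    is treated as measurement noise rather than a real improvement.
    """
    if fps_low_res <= 0 or fps_high_res <= 0:
        raise ValueError("frame rates must be positive")
    relative_gain = (fps_low_res - fps_high_res) / fps_high_res
    return relative_gain <= tolerance


# Hypothetical measurements at two adjacent resolutions.
print(looks_cpu_limited(fps_low_res=101, fps_high_res=100))  # True
print(looks_cpu_limited(fps_low_res=200, fps_high_res=110))  # False
```

A result of False only says the GPU was the limit at the higher resolution; the lower resolution may or may not be CPU limited until a still lower one is tested.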

From the examples I've given here, if both the game logic and the graphics/geometry setup come in at the minimum latencies I've suggested should be typical, we could be CPU limited at as much as 500 frames per second. On the flip side, if both portions of this process push up to the 10ms level, we would never see a frame rate over 50 FPS no matter how fast the GPU rendered anything.
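The arithmetic behind those two figures is a sketch under one simplifying assumption: game logic and graphics setup run back to back on the CPU once per frame, with no overlap between them. The function name `cpu_limited_fps` is made up for this example.

```python
def cpu_limited_fps(game_logic_ms, render_setup_ms):
    """Frame rate ceiling when the two CPU stages run serially each frame."""
    cpu_frame_ms = game_logic_ms + render_setup_ms
    if cpu_frame_ms <= 0:
        raise ValueError("CPU time per frame must be positive")
    # 1000 ms in a second divided by the CPU time spent on each frame.
    return 1000.0 / cpu_frame_ms


print(cpu_limited_fps(1, 1))    # 500.0
print(cpu_limited_fps(10, 10))  # 50.0
```

If the two stages overlapped on separate cores, the ceiling would be set by the longer stage rather than the sum, which is one way multi-threading complicates the picture.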

Obviously there is variability in games, and sometimes we see a CPU limit at less than 60 FPS even at the lowest resolution on the highest end hardware. Likewise, we can see framerates hit over 2000 FPS when drawing a static image (where game logic and display lists don't need to be updated) with a menu in front of it (like when a user hits escape in Oblivion with vsync off). And, again, multi-threaded software design on multi-core CPUs really muddies up the situation. But this is near enough to illustrate the point.

And now it's on to the portion of realtime 3D graphics that typically incurs the most input lag before we leave the computer: the graphics hardware.

85 Comments

aguilpa1 - Thursday, July 16, 2009 - link

Lots of variables that we never consider when trying to do fast gaming. I would be curious how much lag is in a racing sim like GRID or Colin McRae DiRT. Those are intense graphics games and demand the fastest everything to keep you from going in the ditch. I have noticed I compensate at times by estimating when to start turning before the turn arrives.

crimson117 - Thursday, July 16, 2009 - link

Isn't part of that just intentional skidding / drift in racing games, to mimic the "lag" of rubber catching asphalt at high speeds?

hechacker1 - Thursday, July 16, 2009 - link

You say TF2 is GPU limited, but with my 4850 I find the first core is pegged at 100%. The same applies to my older 3850.

With Core i7 920 @ 166x20 = 3320MHz and +166 for Turbo mode, hyper threading on, I see TF2 using 6 cores. The first is pegged out at 100%, the second and third vary from 50-100% depending on the action (32 player server). The other three hover around 25%.

1920x1080. Benq 2400G (bought for its low input-lag)

All highest settings, 4xMSAA, Aniso 8x, Disable vsync, FOV 90

My framerate hovers around 100FPS for most Valve maps.

I use this autoexec to get more threading and higher quality textures:

r_lod 0
mat_picmip -10
cl_new_impact_effects 1
mp_usehwmmodels 1
mp_usehwmvcds 1
cl_burninggibs 1
mat_specular 1
mat_parallaxmap 1
r_threaded_particles 1
r_threaded_renderables 1
cl_ragdoll_collide "1"
jpeg_quality 100
r_threaded_client_shadow_manager 1

Most people say TF2 is a CPU limited game. Perhaps that only applies to ATI?

Even without the autoexec.cfg, I see the game use 100% on the first core.

Very good article though. I hope this shuts up the false info that 60fps is too fast for humans to notice.

DerekWilson - Thursday, July 16, 2009 - link

even if a core is pegged at 100% that doesn't mean the game's performance is CPU limited.

at 2560x1600 we were hovering around 110fps but at 1152x864 we were constantly well over 200 fps. As lowering resolution doesn't change the load on the CPU, this clearly indicates that we were GPU bound -- at least at 25x16.

For our 1152x864@120Hz test, we might have been CPU bound, but I don't have the data to know for sure here (I didn't test any near resolutions).

hechacker1 - Thursday, July 16, 2009 - link

Oh yeah.

Flip queue to 0.

ATI A.I. at Low or "standard" (I've read "high" mode can use more CPU?)

Latest driver. Windows 7 x64 7201.

Qiasfah - Thursday, July 16, 2009 - link

In the article you stated that TF2 was GPU limited (and it was in the situations you were testing), however you should find that in battle situations with other active characters present it becomes heavily CPU limited. It would be interesting to see if there was a difference in input lag due to this in the midst of battles rather than sitting idle.

I run an i7 920, and even with multicore enabled (an option which will very commonly double a person's TF2 framerate) i get the same dips in FPS regardless of graphical settings. It would be interesting to see how overclocking affects the performance of this game.

DerekWilson - Thursday, July 16, 2009 - link

More than likely, in TF2, you'll be bottlenecked at the network when it comes to performance ...

But the way Valve does things is with local prediction (running code on the client) and then checking predictions on the server. This should mean that our test shows what you can expect to actually /see/ whether or not what actually /happens/ is the same (if you are very laggy on the network or if there are lots of players or whatever).

codestrong - Thursday, July 16, 2009 - link

"Beyond that, GPU is the next most important faster (factor?), and a mouse that can do at least 500 reports per second is a good idea." Nice work by the way. I've been interested in this since Carmack mentioned input lag during his work on quake live.

DerekWilson - Thursday, July 16, 2009 - link

yeah, i meant factor. thanks.

SiliconDoc - Tuesday, July 21, 2009 - link

Yes, nice article and nice work on getting the job done without a super expensive camera, on an interesting subject for gamers.