Scanout and the Display

Alright. So depending on the game, we are up to somewhere between 13ms and 58ms after our mouse was moved. The GPU just finished rendering and swapped the finished frame to the front buffer. What happens next is called scanout: the frame is sent out the DVI-I port over the cable and to the monitor.

If our monitor's refresh rate is 60Hz (as is typical these days), it will actually take something like 16ms to send the full frame to the monitor (plus there's about half a millisecond of "blanking" between frames being sent) giving us 16.67ms of transmission delay. In this case we are limited by the bandwidth capabilities of DVI, HDMI and DisplayPort and the timing standards put forth by VESA. So to send a full frame of anything to the display we will have 16.67ms of input lag added. Some monitors will display this data as it is received, but others will latch input meaning the full frame must be sent before it can be displayed (but let's not get too far ahead of ourselves). Either way, we will consider the latency of this step to be at least one frame (as the monitor will still take 16ms to draw the image either way).
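As a rough illustration of the arithmetic above, the sketch below derives the refresh period from the refresh rate and subtracts blanking. The function names are made up for this example, and the 0.5ms blanking default is just the approximate 60Hz figure quoted above, not a value taken from any particular VESA timing.

```python
def frame_period_ms(refresh_hz: float) -> float:
    """Time from the start of one refresh to the start of the next."""
    return 1000.0 / refresh_hz


def active_scanout_ms(refresh_hz: float, blanking_ms: float = 0.5) -> float:
    """Part of the refresh period spent sending visible pixels.

    blanking_ms is an assumption: the article's rough 60Hz figure.
    The real value depends on the video timing in use.
    """
    return frame_period_ms(refresh_hz) - blanking_ms


if __name__ == "__main__":
    print(f"60Hz period: {frame_period_ms(60):.2f} ms")
    print(f"60Hz active scanout: about {active_scanout_ms(60):.2f} ms")
    print(f"120Hz period: {frame_period_ms(120):.2f} ms")
```

Note that resolution does not appear anywhere in the calculation: at a fixed refresh rate the frame takes the same time to send.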

So now we need to talk about vsync. Let's pretend we aren't using it. Let's pretend our game runs at a rock solid exact 60 FPS and our refresh rate is 60Hz, but the buffer swap happens halfway between each vertical sync. This means every frame being scanned out would be split down the middle. The top half of the frame will be an additional 16.67ms behind (for a total of 33.3ms of lag). Of course, the bottom half, while 16.67ms newer than the top, won't have its own top half sent until the next frame 16.67ms later.

In this particular case, if we average the latency of all the pixels on a split frame, we get the same average latency as if we had enabled vsync. Unfortunately, when framerate is either higher or lower than refresh rate, vsync can cause plenty of problems and this equivalence doesn't hold at all.
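The averaging can be checked with a small sketch. It uses the same simplified accounting as the text (transmission counts as one full frame, and the game runs at exactly the refresh rate); `swap_phase` and both function names are hypothetical, introduced only for this illustration.

```python
FRAME_MS = 1000.0 / 60.0  # one refresh period at 60Hz


def avg_latency_tearing_ms(swap_phase: float) -> float:
    """Average latency over all pixels with vsync off.

    swap_phase is the fraction of the frame already scanned out when the
    buffer swap happens (0.5 = halfway). That fraction of the screen shows
    the previous frame, which is one frame period older.
    """
    return FRAME_MS + swap_phase * FRAME_MS


def latency_vsync_ms(swap_phase: float) -> float:
    """Latency with vsync on: the finished frame waits for the next
    vertical sync, then takes one frame period to transmit."""
    return (1.0 - swap_phase) * FRAME_MS + FRAME_MS


if __name__ == "__main__":
    print(f"tearing, swap at midpoint: {avg_latency_tearing_ms(0.5):.2f} ms")
    print(f"vsync, finished at midpoint: {latency_vsync_ms(0.5):.2f} ms")
```

With the swap exactly halfway through scanout, both functions give one and a half frames, which is the equivalence described above. This is only a model of the stated scenario, not a measurement.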

If our frametime is just longer than 16.67ms with vsync enabled, we will add a full additional frame of latency (with no work being done on the GPU) before we are able to swap the finished buffer to the front for scanout. The wasted work can cause our next frame not to come in before the next vsync, giving us up to two frames of latency (one because we wait to swap and one because of the delay in starting the next frame). If our framerate is higher than 60 FPS, our GPU will have to stop working after rendering until the next vsync. This is a waste of resources and decreases overall performance, but definitely not by as much as if we use vsync at less than the monitor refresh. The upper limit of additional delay is 16.67ms minus frametime (less than one frame) rather than two full frames.
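A minimal sketch of the waiting cost, assuming plain double-buffered vsync at 60Hz and a frame that starts rendering exactly on a vertical sync (both are simplifying assumptions, and `vsync_wait_ms` is a name invented here). It only models the wait before the swap, not the knock-on delay to the following frame.

```python
import math

REFRESH_MS = 1000.0 / 60.0  # one refresh period at 60Hz


def vsync_wait_ms(frame_time_ms: float) -> float:
    """Idle time between finishing a frame and the vsync that shows it.

    Assumes rendering began exactly on a vertical sync and that the swap
    can only happen on a later vertical sync.
    """
    refreshes = max(1, math.ceil(frame_time_ms / REFRESH_MS))
    return refreshes * REFRESH_MS - frame_time_ms


if __name__ == "__main__":
    for frame_time in (10.0, 17.0):
        wait = vsync_wait_ms(frame_time)
        print(f"frametime {frame_time:.1f} ms: waits {wait:.2f} ms for vsync")
```

A 10ms frame waits 16.67ms minus its frametime, while a 17ms frame just misses the sync and sits idle for nearly a whole refresh.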

When framerate is lower than refresh rate, using either a 1 frame flip queue with vsync or triple buffering will allow the graphics hardware to continue doing rendering work while adding between 0 and 16.67ms of additional latency (the average will be between the two extremes). So you get the potential benefits of vsync (no tearing and synchronization) without the additional decrease in performance that occurs when no work gets done on the GPU. At framerates higher than refresh rate, when using a render queue, we do end up adding an additional frame of latency per number of frames we render ahead, so this solution isn't a very good one for mitigating input latency (especially in twitch shooters) in high framerate games.

Once the data is sent to the monitor, we've got more delay in store.

We've already mentioned that some LCDs latch the entire frame before display. Beyond this delay, some displays will perform image processing on the input (including scaling if this is not done on the graphics hardware). In some cases, monitors will save two frames to overdrive LCD cells to get them to respond faster. While this can improve the speed at which the picture on the monitor changes, it can add another 16.67ms to 33.3ms of latency to the input (depending on whether one frame is processed or two). Monitors with a game mode or true 120Hz monitors should definitely add less input lag than monitors that require this sort of processing.

Add, on top of all this, the fact that it will take between 2ms and 16ms for the pixels on the LCD to actually switch (response time varies between panels and depending on what levels the transition is between) and we are done: the image is now on the screen.

So what do we have total after the image is flipped to the front buffer?

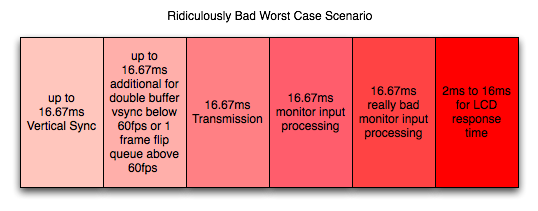

One frame of lag for transmission (to display a full frame); up to one frame of lag if we enable triple buffering (or use a one-frame render-ahead queue while running at less than refresh rate); up to two frames of lag if we just turn on vsync; an additional frame of lag for every frame we render ahead with vsync on at framerates higher than the refresh rate; and zero to two frames of lag for the monitor to display the image (if it does extensive image processing).

So after crazy speed from the mouse to the front buffer, here we are waiting ridiculous amounts of time to get the image to appear on the screen. We add at the very least 16.67ms of lag in this stage. At worst we're taking on between 66.67ms and 83.3ms, which is totally unacceptable. And that's after the computer is completely done working on the image.
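The best and worst cases can be tallied in frames and converted to milliseconds. The stage names and the grouping below are this sketch's own reading of the figures above (one frame of transmission, zero to two frames of vsync wait, zero to two frames of monitor processing), with pixel response time left out.

```python
FRAME_MS = 1000.0 / 60.0  # one refresh period at 60Hz

# (minimum frames, maximum frames) per stage; values are an interpretation
# of the article's summary, not measured data.
STAGES = {
    "transmission": (1, 1),
    "vsync wait": (0, 2),
    "monitor processing": (0, 2),
}

best_ms = sum(low for low, _ in STAGES.values()) * FRAME_MS
worst_ms = sum(high for _, high in STAGES.values()) * FRAME_MS

if __name__ == "__main__":
    print(f"best case:  {best_ms:.2f} ms")
    print(f"worst case: {worst_ms:.2f} ms")
```

Under this reading, the 66.67ms figure would correspond to a monitor that buffers one frame rather than two.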

This brings our totals up to about 33ms to 80ms input lag for typical cases. Our worst case for what we've outlined, however, is about 135ms of latency between mouse movement and final display which could be discernible and might start to feel mushy. Sometimes game developers stray a bit and incur a little more input lag than is reasonable. Oblivion and Fallout 3 come to mind.

But don't worry, we'll take a look at some specific cases next.

85 Comments


snarfbot - Friday, July 17, 2009 - link

i have a crt and a plasma tv, both essentially lag free. my friends have tn monitors, also no perceivable lag.

the worst offender is my friends samsung tv, its noticeably laggy.

but no where near as bad as wireless mice. they gotta be in the 30+ms region.

nevbie - Friday, July 17, 2009 - link

My mouse wire is about the same length as my monitor cable. It's too bad the latter doesn't have same relative bandwidth.

1. But 16ms? Is it still around 16ms if the resolution is halved, which would cut the amount of data per frame to 1/4, or is the 16ms some kind of worst case?

2. Does the "input lag"(I'd prefer calling it response time) change if you use VGA (analog) connection instead of DVI (digital)? That would be a funny workaround.

DerekWilson - Friday, July 17, 2009 - link

1) scanout is always around 16ms regardless of resolution. blanking is always around 0.5ms regardless of resolution as long as the refresh rate is set to 60Hz. it's the VESA standards that determine this. Higher refresh rates mean faster transmission times. cable bandwidth limits maximum resolution+refresh. LCD makers are really the ones to blame for higher refresh rates not being available with lower resolutions ... I believe the traditional limit to this was LCD response time.

2) i don't believe so.

bob4432 - Friday, July 17, 2009 - link

so how slow is my ps/2 trackman wheel connecting into a 4 port kvm? never thought about this area of gaming...interesting.

DerekWilson - Friday, July 17, 2009 - link

I'm not sure if the kvm adds significant delay or not ... that'd be an interesting question.

I do believe that ps/2 is 100Hz (10ms) though ... so ... ya know, slower :-)

anantec - Thursday, July 16, 2009 - link

i would love to see some analysis of wireless input devices, and keyboards.

anantec - Thursday, July 16, 2009 - link

for example, the wireless controller for playstation 3

and recently razer made a wireless gaming mouse

http://www.razerzone.com/gaming-mice/razer-mamba/

as the article mentioned, usb device is limited to 1000Hz, what about bluetooth devices?

Stas - Thursday, July 16, 2009 - link

Really appreciate the article. Even though I didn't learn much from it. It is VERY useful to point people to when the topic comes up. "Huh, Input lag? What's that? I'm on broadband, dude, and I have a QUAD-CORE!!!" <--- With ppl like that, I, now, have more than 2 options (murder them, or spend 30 min explaining input lag), I can send them to AnandTech. You guys, own.

marraco - Thursday, July 16, 2009 - link

Thanks for such useful article.

The tweaking on the mouse was one I never had seen before (and I search for tweaks regularly).

One thing that I would add, is that some games (as FEAR 2) include controls for mouse smoothing, and/or some way to increase mouse responsiveness. They really affect my input lag perception.

jkostans - Thursday, July 16, 2009 - link

I use a G5 mouse, and a CRT running at 100Hz. As long as my computer pushes 100+fps I don't notice any input lag at all.

This is what I've found to contribute to input lag in order of significance:

Framerate, refresh rate, mouse rate. Oh and I've never used an LCD I can't stand the ghosting.