Parsing Input in Software and the CPU Limit

Before we get into software, for the sake of sanity, we are going to ignore context switching and pretend that only the operating system kernel and the game are running, and that each always gets processor time exactly when it needs it for as long as it's needed (and that they never need it at the same time). In real-life desktop operating systems, especially on single-core processors, there will be added delay due to process scheduling between our game and other tasks (which is handled by the operating system) and OS background tasks. These delays (in extreme cases called starvation) can range from a handful of nanoseconds up to the microsecond level on modern systems, depending on process prioritization, what else is happening, and how the scheduler is implemented.

Once the mouse has sent its report over USB to the PC and the USB root hub receives the data, it is up to the OS (for our purposes, MS Windows) to handle the data next. Our report travels from the USB root hub over the system bus (southbridge through the northbridge to the CPU, which takes some nanoseconds, more or less depending on load), is put on an input stack (in this case the HID (Human Interface Device) stack), and a Windows OS message (WM_INPUT) is generated to let any user space software monitoring raw mouse input know that new data has arrived. Software written to take full advantage of hardware will handle the WM_INPUT message by reading the appropriate data directly from the HID stack after it gets the message that data is waiting.

This particular part of the process (checking Windows messages and handling the WM_INPUT message) happens pretty fast and should be on the order of microseconds at worst. This is a hard delay to track down, as the real time it takes depends on what the programmer actually does. Latencies here are not guaranteed by either the motherboard chipset or Windows.

Once the software has the data (after at least 1ms and some microseconds in change), it needs to do something with it. This is hugely variable, as developers can choose to implement doing something with input at any of a number of points in the process of updating the game state for the next frame. The thing that makes the most sense to me would be to run your AI based on the previous input data, step through any scripted actions, update physics per object based on last state and AI decisions, then get user data and update player state/physics based on previous state and current input.

There are cases or design decisions that may require getting user input before doing some of these other tasks, so the way I would want to do it might not be practical. This whole part of the pipeline can be quite long, as highly intelligent AI and immersive physics (along with other game scripting and state updates) can require massive amounts of work. At the least we have lots of sorting, branching, and necessarily serial computations to worry about.

Depending on when input is collected and the depth and breadth of the simulation, we could see input lag increase by up to several milliseconds. This is highly game dependent, but it isn't something the end user has any control over outside of getting the fastest possible CPU (and this still likely won't change things in a perceivable way, as there are memory and system latencies to consider and the GPU is largely the bottleneck in modern games). Some games are designed to be highly responsive and some games are designed to be highly accurate. While always having both cranked up to 11 would be great, there are trade-offs to be made.
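To make the effect of input placement concrete, here is a minimal sketch of a single-threaded frame update. The stage names and millisecond costs are made-up assumptions for illustration, not measurements from any real game, and `input_to_state_latency_ms` is a hypothetical helper. It simply adds up the CPU work that still has to run after the input sample is taken.

```python
# Hypothetical per-frame CPU stages and their costs in milliseconds.
# The names and numbers are illustrative assumptions, not measurements.
STAGES = [
    ("ai", 2.0),
    ("scripts", 0.5),
    ("physics", 2.5),
    ("player_update", 1.0),
]


def input_to_state_latency_ms(stages, sample_before):
    """Return the CPU time between reading input and finishing the update.

    `sample_before` names the stage that runs immediately after input is read;
    every stage from that one onward adds to the age of the input sample.
    """
    names = [name for name, _ in stages]
    start = names.index(sample_before)  # raises ValueError for unknown stages
    return sum(cost for _, cost in stages[start:])


early = input_to_state_latency_ms(STAGES, "ai")             # input read first
late = input_to_state_latency_ms(STAGES, "player_update")   # input read last
print(f"input read first: {early:.1f} ms, input read last: {late:.1f} ms")
```

With these assumed costs, reading input at the top of the frame leaves the sample 6ms old by the time the game state is final, while reading it just before the player update leaves it 1ms old. A real engine that runs AI and physics on separate threads would not reduce to a simple sum like this.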

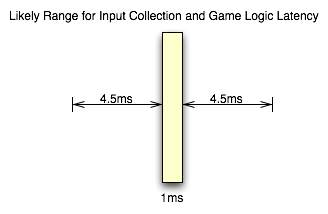

Unfortunately, that leaves us with a highly variable situation. The only way to really determine the input lag caused by game code itself is to profile the code (which requires access to the source to be done right) or ask a developer. But knowing the specifics isn't as necessary as knowing that there's not much the gamer can do to mitigate this issue. For the purposes of this article, we will consider game logic to typically add somewhere between 1ms and 10ms of input lag in modern games. This takes into account things like decoupling simulation and AI threads from rendering and having work done in parallel, among other things. If everything were done linearly, things would very likely take longer.

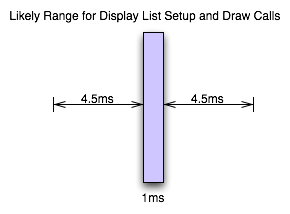

When we've got our game state updated, we then set up graphics for rendering. This will involve using our game state to update geometry and display lists on the CPU side before the GPU can start work on the next frame. The speed of this step is again dependent on the implementation and can take up a good bit of time. This will be dependent on the complexity of the scene and the number of triangles required. Again, while this is highly dependent on the game and what's going on, we can typically expect something between 1ms and 10ms for this part of the process as well if we include the time it takes to upload geometry and other data to the GPU.

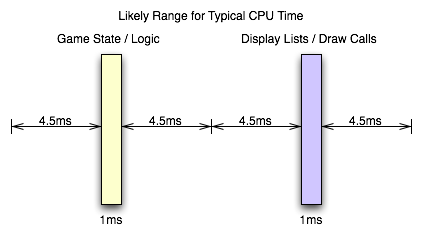

Now, all the issues we've covered on this page go into making up a key element of game performance: CPU time. The total latency from front to back in this stage of a game engine creates a CPU limit on performance. When what comes after this (rendering on the GPU) takes less time than everything up to this point, we have hit the CPU limit. We can typically see the CPU limit when we drop resolution down to something ridiculously low on a high end card without seeing any real performance gain between that and the next highest resolution.

From the examples I've given here, if both the game logic and the graphics/geometry setup come in at the minimum latencies I've suggested should be typical, we could be CPU limited at as much as 500 frames per second. On the flip side, if both portions of this process push up to the 10ms level, we would never see a frame rate over 50 FPS no matter how fast the GPU rendered anything.
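The arithmetic behind those two figures can be sketched as follows. This assumes the game logic and the graphics setup run back to back on the CPU with no overlap, which is a simplification; the function names are hypothetical and only serve the example.

```python
def cpu_limited_fps(logic_ms, setup_ms):
    """Frame-rate ceiling when CPU work per frame is logic plus render setup."""
    return 1000.0 / (logic_ms + setup_ms)


def is_cpu_limited(cpu_ms, gpu_ms):
    """True when the GPU finishes a frame faster than the CPU can prepare one."""
    return gpu_ms < cpu_ms


best = cpu_limited_fps(1.0, 1.0)     # both stages at the 1ms low end
worst = cpu_limited_fps(10.0, 10.0)  # both stages at the 10ms high end
print(best, worst)
```

With both stages at 1ms the CPU needs 2ms per frame, which caps the frame rate at 500 FPS; with both at 10ms the CPU needs 20ms per frame and the cap is 50 FPS, regardless of how quickly the GPU renders.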

Obviously there is variability in games, and sometimes we see a CPU limit at less than 60 FPS even at the lowest resolution on the highest end hardware. Likewise, we can see framerates hit over 2000 FPS when drawing a static image (where game logic and display lists don't need to be updated) with a menu in front of it (like when a user hits escape in Oblivion with vsync off). And, again, multi-threaded software design on multi-core CPUs really muddies up the situation. But this is near enough to illustrate the point.

And now it's on to the portion of realtime 3D graphics that typically incurs the most input lag before we leave the computer: the graphics hardware.

85 Comments


DerekWilson - Monday, July 20, 2009

This is how we disable vsync.

We got the same results in lag with present interval set to either 1 or 0 ... it really didn't make a measurable difference in our testing.

DerekWilson - Monday, July 20, 2009

to clarify a little, this is why i think that Gamebryo (or Bethesda) must do some sort of internal timing that strictly enforces framerate, CPU time, or something based on some other factor than present interval.

NetSoerfer - Monday, July 20, 2009

On page 5, the fifth paragraph begins with "If our frametime is just longer than 16.67ms...". The next paragraph is meant to describe the opposite but begins with "When framerate is lower than refresh rate...".

Longer frametime equals lower refresh rate. The second paragraph should read "When framerate is higher than refresh rate..." or "When frametime is shorter than refresh rate...".

DerekWilson - Monday, July 20, 2009

No, the next paragraph is not meant to describe the opposite case ...

The first paragraph you cite describes the effects of double-buffered vsync on framerates both lower than refresh (first half of the paragraph) and higher than refresh (second half of the paragraph).

The second paragraph you cite describes the effects of a 1 frame flip-queue with vsync or triple buffering on framerates that are lower than refresh.

Sorry if that wasn't clear.

Per Hansson - Sunday, July 19, 2009

Hi, I tried your recommendation with "overclocking" the mouse (erm, we are really just changing the speed of the USB port, not the mouse right?)

Anyway, I've got a MS IntelliMouse Explorer v3.0

When I run "Direct Input Mouse Rate" it shows my lag as 8ms at 125hz...

So I used the driver hidusbf and changed the frequency to 1000hz, this resulted in 1.4ms and 700hz with my mouse...

But now to begin with I had the mouse speed set to max in the Intellipoint mouse setup, and also "enhance pointer precision" enabled...

And at 125hz / 8ms lag that gave me a good speed, a bit slower than I had in Win2K but still acceptable (current os is XP x64)

But now with my "overclocked" mouse the movement is waaay to slow, I need a bigger mousepad to move the mousepointer all across my monitor

Is this intended or just due to MS drivers or whatever?

I was planning on getting the Microsoft Habu gaming mouse developed by Razer because the current iteration of the Explorer 3.0 is a POS with crap microbuttons that keep failing, think I've been through 3 of these in the last 2 years, even replaced them with ones bought at Elfa but they also failed after a couple months

Anyway, will all mouse have this speed issue at high mouse rates? (above 125hz)

MarktheC - Monday, July 27, 2009

Re: "But now with my "overclocked" mouse the movement is waaay to slow, I need a bigger mousepad to move the mousepointer all across my monitor. Is this intended or just due to MS drivers or whatever?"

Yes, this is "how it works" (but it can be fixed).

What's happening is this: At 125 Hz and a given on-the-pad mouse speed, each mouse report might be returning (say) 16 counts/report.

The XP/Vista/7 "Enhance pointer precision" code uses the "16" value to lookup an acceleration curve (SmoothMouseXCurve/SmoothMouseYCurve) and apply a scaling factor to the mouse input (approx x 1.4 when the mouse count is 16). The pointer moves ~1.4 * 16 = ~22 pixels.

If the report rate is changed to 1000 Hz, each mouse report returns 2, 2, 2, 2, 2, 2, 2, 2 instead (same gross movement of 16, but spread over 8 times as many reports). Now the XP/Vista/7 "Enhance pointer precision" code uses "2" to lookup the acceleration curve and returns a scaling factor (~0.6 when the mouse count is 2). The pointer moves ~0.6 * 2 * 8 = ~9 pixels and you perceive the mouse as slow.

This is (somewhat) described here:

http://www.codinghorror.com/blog/archives/000977.h...

http://www.microsoft.com/whdc/archive/pointer-bal....

BUT Microsoft made a silly design mistake!:

http://donewmouseaccel.blogspot.com/2009/06/out-of...

A solution is to tweak the Registry: the SmoothMouseXCurve and SmoothMouseYCurve values under HKEY_CURRENT_USER\Control Panel\Mouse.

Treat each group of 4 bytes as a 32-bit integer, and divide by 8 (for 1000 Hz). AFAIK, doing this for both SmoothMouseYCurve & SmoothMouseXCurve should return the acceleration back to normal.

A BETTER solution may be to stick with "Enhance pointer precision" and 125 Hz for normal Windows work, and use 1000 Hz only for gaming AND TURN OFF "Enhance pointer precision" when gaming (if required by the game: most modern games use DirectX to read the mouse, which ignores the "Enhance pointer precision" checkbox anyway).

Re: "I was planning on getting the Microsoft Habu ... will all mouse have this speed issue at high mouse rates? (above 125hz)"

I don't know: I expect the Habu driver will do the right thing and not need any fix as above, but I don't know...

DerekWilson - Monday, July 20, 2009

Actually ... the report / second rate should have zero impact on the speed of the pointer. I do say should -- something odd could be happening like it could be dropping counts in order to assemble reports that fast (i.e. your mouse could be too overclocked and might be doing things wrong). But I am not a hardcore mouse overclocker myself so I'd do a little research on it.

I would recommend, if your mouse can't actually hit 1000Hz, to drop it down to 500 reports/second instead of 1000 ... it should be more consistent that way, and maybe it will fix your pointer speed issue.

The CPI (reported as DPI) will have an impact on pointer speed. But so will things like setting mouse speed to maximum and using "enhance pointer precision" ... though these latter two don't really have desirable results.

I strongly recommend leaving mouse speed at the middle notch ... setting it higher actually skips pixels (though "enhance pointer precision" makes your mouse able to move one pixel at a time if you move it really slowly). And I also recommend not using "enhance pointer precision" ...

These MS pointer ballistics can cause problems in older games, but if the developer did the "right" thing and used either DirectInput or raw input devices then the pointer speed settings shouldn't affect games (only the sensitivity slider in the game should affect pointer speed if it's done right). In most cases going forward you should be able to use the OS to manipulate your pointer speed without negatively impacting your game ... but there is a chance that these settings could negatively impact your gaming experience if the developer used a less desirable way to access the mouse data.

Per Hansson - Monday, July 20, 2009

Thanks, the behaviour is the same at 250hz and 500hz

Those rates just slow down the mouse more...

There would be no way at all that I could set the mouse speed slider to the middle and get used to that, same for not having enhance pointer precision on

Guess sometimes you just can't win eh? ;)

In fact I was quite annoyed by the change in ballistics going from Win2K which supported acceleration which I used and really liked to WinXP which only has this "enhance pointer precision" option

Xcrypt - Thursday, November 20, 2014

You shouldn't enable enhance pointer precision, nor should you have your mouse speed set to maximum. Both will adversely affect your ability to aim, especially the acceleration will.

valnar - Sunday, July 19, 2009

"It is possible to overclock your mouse."

Now I've seen everything. :)