The SSD Anthology: Understanding SSDs and New Drives from OCZ

by Anand Lal Shimpi on March 18, 2009 12:00 AM EST - Posted in

- Storage

The Trim Command: Coming Soon to a Drive Near You

We run into these problems primarily because the drive doesn’t know when a file is deleted, only when one is overwritten. Thus we lose performance when we go to write a new file, in exchange for maintaining lightning-quick deletion speeds. The latter doesn’t really matter though, now does it?

There’s a command you may have heard of called TRIM. The command would require proper OS and drive support, but with it you could effectively let the OS tell the SSD to wipe invalid pages before they are overwritten.

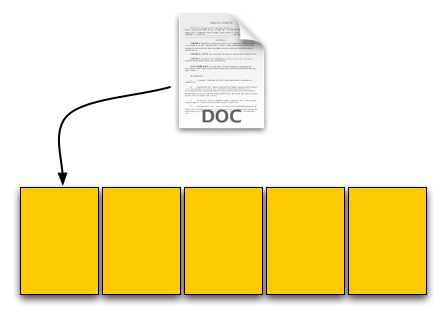

The process works like this:

First, a TRIM-supporting OS (e.g. Windows 7 will support TRIM at some point) queries the drive for its rotational speed. If the drive reports that it is non-rotating media (in effect, a rotational speed of 0), the OS knows it’s an SSD and turns off features like defrag. It also enables the use of the TRIM command.
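The detection step can be sketched in a few lines. This is a minimal sketch, not how Windows 7 or any other OS actually implements it: it assumes the ATA IDENTIFY DEVICE layout as later standardized in ACS-2, where word 217 holds the nominal media rotation rate (0001h means non-rotating media, 0000h means not reported, 0401h-FFFEh is the speed in RPM) and bit 0 of word 169 advertises TRIM support. `os_policy` and `fake_identify` are hypothetical helpers; how an OS obtains the raw 512-byte buffer from the drive is outside the sketch.

```python
import struct


def parse_identify(identify):
    """Read the rotation rate and TRIM bit from 512 bytes of ATA IDENTIFY DEVICE data."""
    if len(identify) != 512:
        raise ValueError("IDENTIFY DEVICE data must be 512 bytes")
    words = struct.unpack("<256H", identify)      # 256 little-endian 16-bit words
    rate = words[217]                             # nominal media rotation rate
    trim_supported = bool(words[169] & 0x0001)    # DATA SET MANAGEMENT TRIM supported
    if rate == 0x0001:
        kind = "ssd"                              # 0001h = non-rotating media
    elif 0x0401 <= rate <= 0xFFFE:
        kind = f"hdd ({rate} rpm)"                # nominal spindle speed in RPM
    else:
        kind = "unknown"                          # 0000h = not reported; other values reserved
    return kind, trim_supported


def os_policy(identify):
    """Hypothetical OS policy: skip defrag on SSDs, send TRIM only if the drive supports it."""
    kind, trim_supported = parse_identify(identify)
    is_ssd = kind == "ssd"
    return {"defrag": not is_ssd, "send_trim": is_ssd and trim_supported}


def fake_identify(rate, trim):
    """Build a synthetic IDENTIFY buffer for illustration; real data comes from the drive."""
    words = [0] * 256
    words[217] = rate
    words[169] = 0x0001 if trim else 0
    return struct.pack("<256H", *words)


print(os_policy(fake_identify(0x0001, True)))   # {'defrag': False, 'send_trim': True}
print(os_policy(fake_identify(7200, False)))    # {'defrag': True, 'send_trim': False}
```

Note that the sketch only sends TRIM when the drive both reports non-rotating media and sets the TRIM bit, since an SSD without TRIM support would reject the command.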

When you delete a file, the OS sends a TRIM command to the SSD controller for the LBAs covered by the file. The controller will then copy the block to cache, wipe the deleted pages, and write the new block with freshly cleaned pages back to the drive.

Now when you go to write a file to that block you’ve got empty pages to write to and your write performance will be closer to what it should be.
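The first half of that process, the OS telling the drive which LBAs are now free, can be made concrete. As a sketch under stated assumptions: in the ATA DATA SET MANAGEMENT command that carries TRIM (as later standardized in ACS-2), the payload is a list of 8-byte little-endian LBA range entries, each a 48-bit starting LBA in bits 47:0 plus a 16-bit sector count in bits 63:48, packed 64 to a 512-byte block; entries with a count of zero are treated as unused. The code assumes 512-byte sectors and that the file system has already mapped the deleted file to (start LBA, sector count) extents; `trim_payload` is a hypothetical helper, not an OS or driver API.

```python
import struct

MAX_SECTORS_PER_ENTRY = 0xFFFF      # 16-bit range length field
MAX_LBA = (1 << 48) - 1             # 48-bit starting LBA field
ENTRIES_PER_BLOCK = 512 // 8        # 64 eight-byte entries per 512-byte payload block


def trim_payload(extents):
    """Pack (start_lba, sector_count) extents into TRIM range entries.

    Extents longer than 65535 sectors are split across several entries, and the
    result is zero-padded to a whole number of 512-byte blocks.
    """
    entries = []
    for start, count in extents:
        while count > 0:
            n = min(count, MAX_SECTORS_PER_ENTRY)
            if start + n - 1 > MAX_LBA:
                raise ValueError("LBA range exceeds 48 bits")
            entries.append((n << 48) | start)   # length in the top 16 bits, LBA below
            start += n
            count -= n
    blocks = -(-len(entries) // ENTRIES_PER_BLOCK)   # ceiling division
    entries += [0] * (blocks * ENTRIES_PER_BLOCK - len(entries))
    return b"".join(struct.pack("<Q", e) for e in entries)


# A deleted 4KB file (8 sectors) plus a large fragment that needs two entries.
payload = trim_payload([(2048, 8), (100000, 70000)])
print(len(payload))  # 512
```

Only the LBAs go over the wire; the drive never learns anything about files, which is why the controller still has to work out which pages and blocks those LBAs live in before it can clean them.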

In our example from earlier, here’s what would happen if our OS and drive supported TRIM:

Our user saves his 4KB text file, which gets put in a new page on a fresh drive. No differences here.

Next was an 8KB JPEG. Two pages allocated; again, no differences.

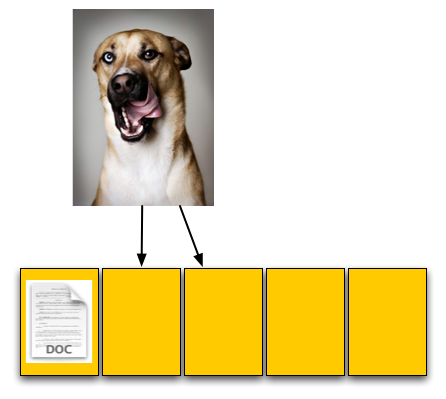

The third step was deleting the original 4KB text file. Since our drive now supports TRIM, when this deletion request comes down the drive will actually read the entire block, remove the first LBA and write the new block back to the flash:

The TRIM command forces the block to be cleaned before our final write. There's additional overhead but it happens after a delete and not during a critical write.

Our drive is now at 40% capacity, just like the OS thinks it is. When our user goes to save his 12KB JPEG, the write goes at full speed. Problem solved. Well, sorta.
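To make the walk-through concrete, here is a toy simulation of both cases. It assumes, as the 40% figure implies, a single block of five 4KB pages (20KB total), one LBA per page, and that the OS reuses the text file's freed LBA for the new JPEG. `ToyBlock` and `run` are hypothetical names, and a real controller maps, caches and erases far more cleverly than this; the point is only where the cleanup cost lands.

```python
PAGES_PER_BLOCK = 5  # assumption: one block of five 4KB pages (20KB), so 8KB of data = 40%


class ToyBlock:
    """One flash block: a page can only be written while empty, and erases
    happen a whole block at a time."""

    def __init__(self):
        # None = empty (writable), "stale" = invalid data, int = the LBA stored there
        self.pages = [None] * PAGES_PER_BLOCK
        self.slow_writes = 0  # writes that stalled on a read-erase-rewrite cycle

    def _empty(self):
        return [i for i, p in enumerate(self.pages) if p is None]

    def _invalidate(self, lbas):
        for i, p in enumerate(self.pages):
            if p in lbas:
                self.pages[i] = "stale"

    def _clean(self):
        # Read the block into cache, drop stale pages, erase the block,
        # then write the still-valid pages back, leaving the rest empty.
        valid = [p for p in self.pages if p is not None and p != "stale"]
        self.pages = valid + [None] * (PAGES_PER_BLOCK - len(valid))

    def used_fraction(self):
        return sum(p is not None and p != "stale" for p in self.pages) / PAGES_PER_BLOCK

    def write(self, lbas):
        self._invalidate(lbas)  # overwriting an LBA turns its old page stale
        if len(self._empty()) < len(lbas):
            self.slow_writes += 1  # not enough clean pages: the cleanup lands on this write
            self._clean()
        if len(self._empty()) < len(lbas):
            raise IOError("block is full")
        for i, lba in zip(self._empty(), lbas):
            self.pages[i] = lba

    def trim(self, lbas):
        self._invalidate(lbas)
        self._clean()  # the cleanup overhead is paid at delete time instead


def run(use_trim):
    drive = ToyBlock()
    drive.write([0])         # 4KB text file -> LBA 0
    drive.write([1, 2])      # 8KB JPEG -> LBAs 1-2
    if use_trim:
        drive.trim([0])      # text file deleted; OS sends TRIM for LBA 0
    # Without TRIM the delete never reaches the drive at all.
    drive.write([0, 3, 4])   # 12KB JPEG; the OS reuses the freed LBA 0
    return drive.slow_writes


print(run(use_trim=False))  # 1: the 12KB write stalls on a block cleanup
print(run(use_trim=True))   # 0: the cleanup already happened at delete time
```

In the TRIM case, the block holds only the JPEG's two valid pages right before the final write, so `used_fraction()` reports 0.4, matching the 40% the OS expects.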

While the TRIM command will alleviate the problem, it won’t eliminate it. The TRIM command can’t be invoked when you’re simply overwriting a file, for example when you save changes to a document. In those situations you’ll still have to pay the performance penalty.

Every controller manufacturer I’ve talked to intends to support TRIM whenever there’s an OS that takes advantage of it. The big unknown is whether or not current drives will be firmware-upgradeable to support TRIM, as no manufacturer has a clear firmware upgrade strategy at this point.

I expect that whenever Windows 7 supports TRIM we’ll see a new generation of drives with support for the command. Whether or not existing drives will be upgraded remains to be seen, but I’d highly encourage it.

To the manufacturers making these drives: your customers buying them today at exorbitant prices deserve your utmost support. If it’s possible to enable TRIM on existing hardware, you owe it to them to offer the upgrade. Their gratitude would most likely be expressed by continuing to purchase SSDs and encouraging others to do so as well. Upset them, and you’ll simply be delaying the migration to solid state storage.

250 Comments


Jamor - Wednesday, March 18, 2009

The best tech article I've ever read, and I've read a few.

haze4peace - Wednesday, March 18, 2009

Wow, excellent article, and so much useful information in an easy-to-understand way. I have just recently been paying attention to SSDs, and thanks to this article I am armed with the information to make the correct choice for my needs. Thanks AnandTech, it's deep and honest articles like these that keep me coming back for more.

Alseki - Wednesday, March 18, 2009

I just registered simply to say: great article. Really informative and enjoyable to read.

alexsch8 - Wednesday, March 18, 2009

Anand,

Thank you for this article, very informative.

Looking at the example you give with your self-manufactured SSD: if I save the DOC, I use up a page. Based on what you are saying, if I make a change to that DOC, would it then be saved in the next page instead of overwriting the existing page? If that is true, then the file allocation system (FAT or MFT) itself would contribute quite a bit to the 'filling up of pages' phenomenon. Could you elaborate on whether the proposed file system for SSDs addresses this?

Ytterbium - Wednesday, March 18, 2009

Fantastic article. Shame that the vendors blacklisted you for telling the truth, and OCZ rock for working so hard to address issues. I'll be ordering my Intel SSD soon, and I'll definitely consider the Summit when it comes out for my encoding rig, as sequential writes matter to me there.

mindless1 - Wednesday, March 18, 2009

Great even, but I have to disagree with the significance of the passage that suggested the Indilinx controller makes data loss as bad on those SSDs as on a conventional hard drive.

The primary cause of data loss is mechanical or component failure, not power loss. If we want to consider power loss, it's not just the drive which is prone to lose data; the entire system memory suffers far more data loss than that.

Further, a sufficiently sized supercapacitor should keep the drive operating for a period of time beyond when the rest of the system would be operational; it could be enough for the controller to finish writing all received data to flash (or just use a UPS, that's what they're for?).

Second, I can't believe that OCZ only tests designs with HDTach and Atto; I think it more likely they knew of the problem but didn't expect anyone to find it so quickly, and felt the higher sequential speeds made it more marketable. This makes me feel that manufacturers, and then online sellers, should differentiate their drives with a standardized random read/write score.

What would be really nice is if the Indilinx-based SSDs had an application available, similar to an app for changing an HDD's acoustic management setting, that lets the owner set their own preference for IO versus sequential read performance.

gomakeit - Wednesday, March 18, 2009

This is by far the BEST article on SSDs I've ever read! Great job Anand, and yes, I read every single word of it!

MagicPants - Wednesday, March 18, 2009

Don't they ever try using their own devices? One second of latency should slap any user in the face. It should be very easy for a manufacturer to build a system with their new technology, put it in front of people and see what happens, but apparently they're not doing this.

They wait for reviewers to do the work for them and then get upset when they find a problem.

What the manufacturers should be taking away from this article is:

1) Try your competitor's products

2) Try your own products

3) Try them in real life as opposed to synthetic tests

4) Compare everything you've tried and market the performance that matters

7Enigma - Thursday, March 19, 2009

But that would make sense... and we know marketing rarely does.

paulinus - Wednesday, March 18, 2009

That article is great. Finally someone has done SSD tests right and said out loud what we, the customers, can get for those hefty price tags. I had supposed that the only choices were Intel and the new OCZs. Now I know, and big kudos for that.

I just need a bit more $$ for the X25-M; it'll be ideal for heavy workstation use, and the biggest Vertex will replace the WD Black in my aging 6910p :)