NVIDIA GeForce GTS 250: A Rebadged 9800 GTX+

by Derek Wilson on March 3, 2009 3:00 AM EST. Posted in GPUs.

In the beginning there was the GeForce 8800 GT, and we were happy.

Then we got a faster version: the 8800 GTS 512MB. It was more expensive, but we were still happy.

And then it got complicated.

The original 8800 GT, well, it became the 9800 GT. Then they overclocked the 8800 GTS and it turned into the 9800 GTX. Now this made sense, but only if you ignored the whole "this was an 8800 GT to begin with" thing.

The trip gets a little more trippy when you look at what happened on the eve of the Radeon HD 4850 launch. NVIDIA introduced a slightly faster version of the 9800 GTX called the 9800 GTX+. Note that this was the smallest name change in the timeline up to this point, but it was the biggest design change; this mild overclock was enabled by a die shrink to 55nm.

All of that brings us to today, where NVIDIA is taking the 9800 GTX+ and calling it a GeForce GTS 250.

Enough about names, here's the card:

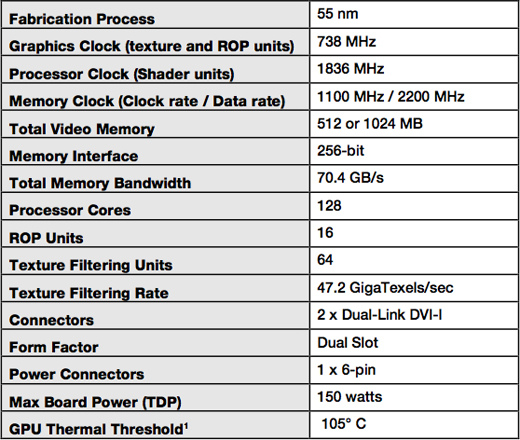

You can get it with either 512MB or 1GB of GDDR3 memory, both clocked at 2.2GHz. The core and shader clocks remain the same at 738MHz and 1.836GHz respectively. For all intents and purposes, this thing should perform like a 9800 GTX+.

If you get the 1GB version, it's got a brand new board design that's an inch and a half shorter than the 9800 GTX+:

GeForce GTS 250 1GB (top) vs. GeForce 9800 GTX+ (bottom)

The new board design isn't required for the 512MB cards unfortunately, so chances are that those cards will just be rebranded 9800 GTX+s.

The 512MB cards will sell for $129 while the 1GB cards will sell for $149.

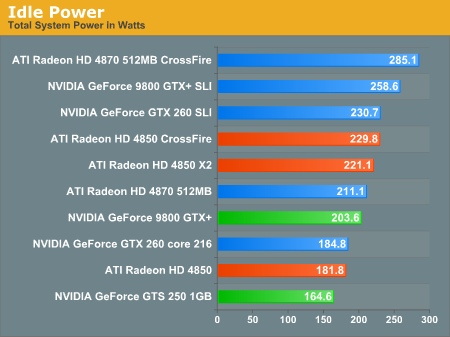

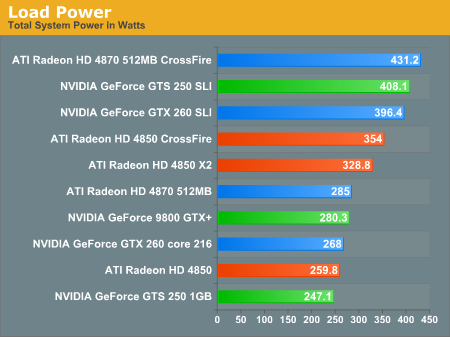

While the GPU is still a 55nm G92b, the chip yields much better now than it did when the 9800 GTX+ first launched, and thus power consumption is lower. With GPU and GDDR3 yields higher, power is lower and board costs can be driven down as well. The components on the board draw a little less power, all culminating in a GPU that will somehow contribute to saving the planet a little better than the Radeon HD 4850.

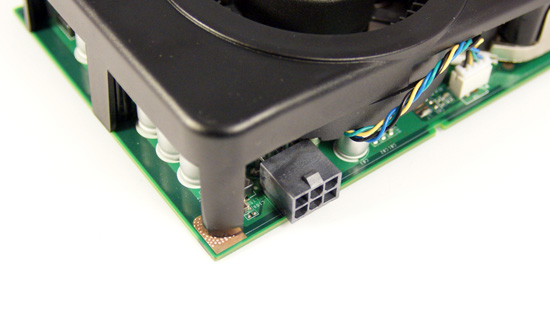

There's only one PCIe power connector on the new GTS 250 1GB boards

Note that you need to have the new board design to be guaranteed the power savings, so for now we can only say that the GTS 250 1GB will translate into power savings.

These are the biggest gains you'll see from this GPU today. It's still a 9800 GTX+.

103 Comments

Hrel - Thursday, April 9, 2009

you should specify when you're being sarcastic and when you're being serious. Also, all that red rooster loon red camp green goblin crap simply doesn't make any sense and makes you sound like a tin-foil hat wearing crazy person. Just sayin' dude, lighten up. Do you work for Nvidia? Or do you just really hate AMD?

Yes, they're both good cores, and yes, it'd be great if Nvidia used GDDR5, but they don't, so they don't get the performance boost from it; that's their fault too. And they DID make the GT200 core too big and expensive to produce, that's why the GTX260 is now being sold at a loss; just to maintain market share.

Hrel - Wednesday, March 4, 2009

Oh, also... I almost forgot: you still didn't include 3D Mark scores :( PLEASE start including 3D Mark scores in your reviews.

Also, I care WAY more about how these cards perform at 1440x900 and 1280x800 than I do about 2560x1600; I will NEVER have a monitor with a resolution that high. No point.

It's just, I'm more interested in seeing what happens when a card that's on the border of playable with max settings gets the resolution turned down some, than what happens when the resolution gets turned up beyond what my monitor can even display.

It's pretty simple really, more on board RAM means the card won't insta-tank at resolutions above 1680x1050; but the percent differences should be the same between the cards. Whereas, comparing a bunch of 512MB and 1GB cards at resolutions of 1680x1050 and lower, that extra RAM doesn't really matter; so all we're seeing is how powerful the cards are. It seems like a truer representation of the cards' performance to me.

Hrel - Wednesday, March 4, 2009

I really do mean to stop adding to this; just wanted to clarify.

When I say that the extra RAM doesn't matter, I mean that the extra RAM isn't necessary just to run the game at your chosen resolution. Of course some newer games will take advantage of that extra RAM even at resolutions as low as 1280x800. I'd just rather see how the card performs in the game based on its capabilities rather than seeing one card perform better than another simply because that "other" card doesn't have enough on board RAM; which has NOTHING to do with how much rendering power the card has and has only to do with on board RAM.

I think it would be good to just add a fourth resolution, 1280x800, just to show what happens when the cards aren't being limited by their on board RAM and are allowed to run the game to the best of their abilities; without superficial limitations. There, pretty sure I'm done. Please respond to at least some of this; it took me kind of a long time; relative to how long I normally spend writing comments.

SiliconDoc - Wednesday, March 18, 2009

Hmmm... you'd think you could bring yourself to apply that to the 4850 and the 4870 that have absolutely IDENTICAL CORES, and only have a RAM DIFFERENCE.

Yeah, one would think.

I suppose the red fan feverish screeding "blocked" your immensely powerful mind from thinking of that.

Hrel - Saturday, March 21, 2009

What are you talking about?

Hrel - Wednesday, March 4, 2009

I'm excited about this; I was kind of wondering what Nvidia was going to do, considering GT200 costs too much to make and isn't significantly faster than the last generation; and I knew there couldn't be a whole new architecture yet, even Nvidia doesn't have that much money.

However I'm excited because this is a 9800GTX+, still a very good performing part, made slightly smaller, more energy efficient and cooler running; not to mention offered at a lower price point! Yay, consumers win! (Why did Charlie at the Inquirer say it was MORE expensive but anandtech lists lower prices?) I really hope the 512MB version is shorter and only needs 1 PCI-E connector/lower power consumption; if not, that almost seems like intentional resistance to progress. However the extra RAM will be great now that the clocks are set right; and at $150, or less if rebates and bundles start being offered, that's a great deal.

On the whole, Nvidia trying to essentially screw the reviewers... I guess I don't have much to say; I'm disappointed. But Nvidia has shown this type of behavior before; it's a shame, but it will only change with new company leadership.

Anyway, from what I've read so far, it looks like the consumer is winning: prices are dropping, performance is increasing (before at an amazingly rapid rate, now at a crawl, but still increasing), power consumption is going down and manufacturing processes are maturing... consumers win!

san1s - Wednesday, March 4, 2009

365? are you sure about that?

"even when the 9800 was new... iirc the 4850 was already making it look bad"

google radeon 4850 vs 9800 GTX+ and see the benchmarks... IMO the 9800 was making the brand new 4850 look bad

"i'd doubt that anyone buying a 9800 today is planning to sli it later"

what if they already have a 9800? much cheaper to get another one for sli than a new gtx 260

"hahaha, less power useage relative to"

read the article

"name some mainstream cuda and physx uses"

ever heard of badaboom? folding@home? mirror's edge?

the gts 250 competes with the 4850, not 4870

"continually confusing their most loyal customers "

what's so confusing about reading a review and looking at the price?

The gts 250 makes perfect sense to me. Rather than spending $ on R&D for a downgraded GT200 (that will perform the same more or less), why not use an existing GPU that has the performance between the designated 240 and 260?

It's a no-win situation: option #1 will mean a waste of money for something that won't perform better than the existing product that can probably be made cheaper (the G92b is much smaller), and #2 will cause complaints with enthusiasts who are too lazy to read reviews.

Which option looks better?

kx5500 - Thursday, March 5, 2009

Shut the *beep* up f aggot, before you get your face bashed in and cut

to ribbons, and your throat slit.

SiliconDoc - Wednesday, March 18, 2009

I saw that more than once in Combat Arms - have you been playing too long on your computer?

rbfowler9lfc - Tuesday, March 3, 2009

Well, whatever it is, be it a rebadged this or that, it seems like it runs on par with the GTX260 in most of the tests. So if it's significantly cheaper than the GTX260, I'll take it, thanks.