NVIDIA GeForce GTS 250: A Rebadged 9800 GTX+

by Derek Wilson on March 3, 2009 3:00 AM EST. Posted in GPUs

In the beginning there was the GeForce 8800 GT, and we were happy.

Then we got a faster version: the 8800 GTS 512MB. It was more expensive, but we were still happy.

And then it got complicated.

The original 8800 GT, well, it became the 9800 GT. Then they overclocked the 8800 GTS and it turned into the 9800 GTX. Now this made sense, but only if you ignored the whole "this was an 8800 GT to begin with" thing.

The trip gets a little more trippy when you look at what happened on the eve of the Radeon HD 4850 launch. NVIDIA introduced a slightly faster version of the 9800 GTX called the 9800 GTX+. Note that this was the smallest name change in the timeline up to this point, but it was the biggest design change; this mild overclock was enabled by a die shrink to 55nm.

All of that brings us to today where NVIDIA is taking the 9800 GTX+ and calling it a GeForce GTS 250.

Enough about names, here's the card:

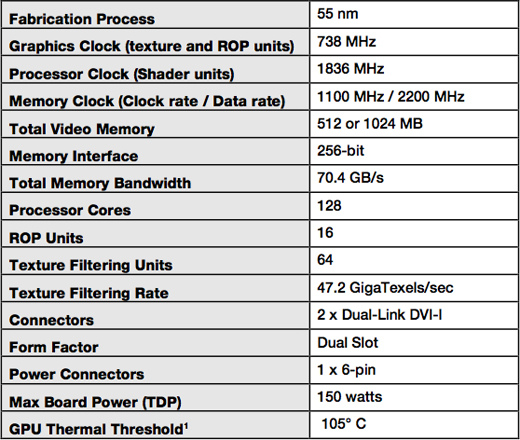

You can get it with either 512MB or 1GB of GDDR3 memory, both clocked at 2.2GHz. The core and shader clocks remain the same at 738MHz and 1.836GHz respectively. For all intents and purposes, this thing should perform like a 9800 GTX+.
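For a rough sense of what those clocks mean, here is a minimal sketch of the arithmetic. It assumes the quoted 2.2GHz is the effective GDDR3 data rate and that the card keeps the 256-bit memory bus of the 9800 GTX+; the bus width is not stated above, so treat it as an assumption, and the helper name is ours.

```python
def memory_bandwidth_gb_s(effective_rate_ghz: float, bus_width_bits: int) -> float:
    """Peak theoretical bandwidth in GB/s: transfers per second times bytes per transfer."""
    return effective_rate_ghz * bus_width_bits / 8


# From the article: GDDR3 at 2.2GHz, core at 738MHz, shaders at 1.836GHz.
MEMORY_RATE_GHZ = 2.2      # assumed to be the effective (double data rate) figure
CORE_CLOCK_MHZ = 738
SHADER_CLOCK_MHZ = 1836

# Assumption (not stated in the article): a 256-bit memory bus, as on the 9800 GTX+.
ASSUMED_BUS_WIDTH_BITS = 256

bandwidth = memory_bandwidth_gb_s(MEMORY_RATE_GHZ, ASSUMED_BUS_WIDTH_BITS)
shader_ratio = SHADER_CLOCK_MHZ / CORE_CLOCK_MHZ

print(f"Peak memory bandwidth: {bandwidth:.1f} GB/s")
print(f"Shader-to-core clock ratio: {shader_ratio:.2f}")
```

Under those assumptions the peak figure is the same for the 512MB and 1GB cards, since both run their memory at the same clock; the extra memory adds capacity, not bandwidth.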

If you get the 1GB version, it's got a brand new board design that's an inch and a half shorter than the 9800 GTX+:

GeForce GTS 250 1GB (top) vs. GeForce 9800 GTX+ (bottom)

The new board design isn't required for the 512MB cards unfortunately, so chances are that those cards will just be rebranded 9800 GTX+s.

The 512MB cards will sell for $129 while the 1GB cards will sell for $149.

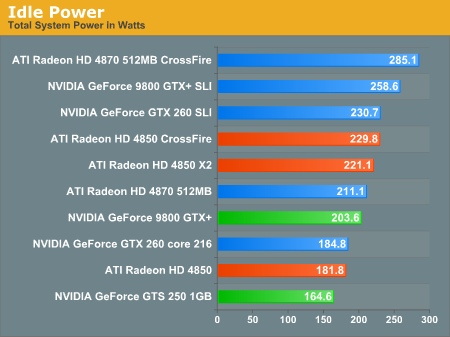

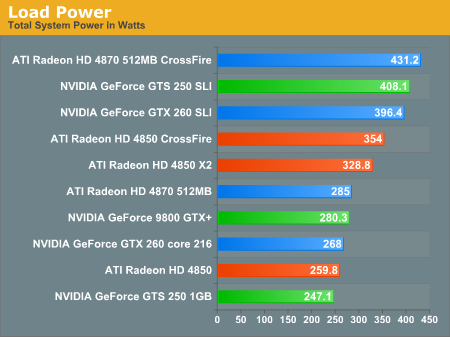

While the GPU is still a 55nm G92b, this is a much more mature, better-yielding chip now than when the 9800 GTX+ first launched, and thus power consumption is lower. With GPU and GDDR3 yields higher, power is lower and board costs can be driven down as well. The components on the board draw a little less power too, all culminating in a GPU that will somehow contribute to saving the planet a little better than the Radeon HD 4850.

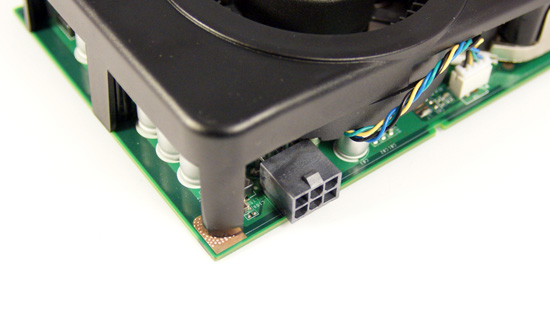

There's only one PCIe power connector on the new GTS 250 1GB boards

Note that you need to have the new board design to be guaranteed the power savings, so for now we can only say that the GTS 250 1GB will translate into power savings:

These are the biggest gains you'll see from this GPU today. It's still a 9800 GTX+.

103 Comments


SiliconDoc - Saturday, March 21, 2009

Thanks for going completely nutso (already knew you were anyway), and not having any real counterpoint to EVERYTHING I've said.

Face the truth, and stop spamming.

A two year old with a red diaper rash bottom can drool and scream.

Epic fail.

kx5500 - Thursday, March 5, 2009

Shut the *beep* up f aggot, before you get your face bashed in and cut

to ribbons, and your throat slit.

Baov - Thursday, March 5, 2009

Does this thing do hybridpower like the 9800gtx+? Will it completely power down?

san1s - Wednesday, March 4, 2009

"365 wwwwwelll no but how old is the g92 regardless of die size.. g80?lol?"

if you think all the changes that went from g80 to g92b were insignificant, then I guess you'll think that the difference between an intel x6800 and an E0 stepping e8400 is meaningless too. I mean, they are both around 3 GHz right? and they both say core 2, so that means that they're the same. /sarcasm off. I'm not going to continue with this any further. If you don't get it, then you never will. The gpu in the 9800 GTX+ was released last summer, over half a year ago, but not quite a year.

"at all resoutions?"

at all the resolutions that an educated person purchasing a midrange video card plays at. Midrange card = midrange monitor. You don't mix high end with low end or midrange components as that will result in bottlenecking. Anyway, the difference between 8 and 12 FPS @ 2560 by 1600 is meaningless as neither is playable anyway.

"i wouldnt say $50 would stop me from getting a 260 it is at least a newer arch. or ahem a 1gb 4870.

what if they do have a 9800/250... well if they look at the power #'s for sli in this article they'd definately reconsider"

not everyone has the luxury to overshoot their budget on a single component by $50 and call it insignificant.

"most people don't care enough to engage in this activity"

lol. How would they ever get their custom built PCs to work without knowing a bit of background info? Give a normal person a bunch of components and let's see how far they get without knowing anything about PCs. If you don't know your hardware you shouldn't be building computers anyway. I personally wouldn't go out and buy tires by myself without researching if I were going to change them myself. I don't have a clue about tire sizes, and I sure as hell won't buy new tires without researching just because I don't care for that activity.

"and what about option #3 buy ati?"

That's not what I was talking about. Consumers should support all the sides of competition to drive prices down, not just only ati or only nvidia. What I meant was people blaming nvidia for their own mistakes. There is a gap in the current line of nvidia gpus, and to fill it, what would be the best way while maintaining performance relative to the price and naming bracket?

SiliconDoc - Wednesday, March 18, 2009

Good response, you aren't a fanboy, but the idiots can't tell. You put the slap on the little fanboys COMPLAINT.

This is an article about the GTS250, and the whining little fanboy red wailers come on and whine and cry about it.

To respond to their FUD and straighten out their kookball distorted lies IS NOT BEING A FANBOY.

You did a good job trying to straighten out the poor ragers noggin.

As for the other whiners agreeing "fan boys go away" - if they DON'T LIKE the comments, don't read 'em. They both added ZERO to the discussion, other than being the easy, lame, smart aleck smarmers that pretended to be above the fray, but dove into the gutter whining not about the review, but about fellow enthusiasts commenting on it - and I'm GLAD to join them.

I hope "they go away" - and YOU, keep slapping the whiners about nvidia right where they need it - upside the yapping text lies and stupidity.

Thank you for doing it, actually, I appreciate it.

SiliconDoc - Wednesday, March 18, 2009

PS - as for the red fanboy that did the review, I guess he thought he was doing a "dual gpu review".

I suppose "the point" of having all the massive dual gpu scores above the GTS250 - was to show "how lousy it is" - and to COVER UP the miserable failure of the 4850 against it.

Keep the GTS250 at OR NEAR "the bottom of every benchmark"...

( Well, now there's another hint as to why Derek gets DISSED when it comes to getting NVidia cards from Nvidia - his bias is the same and WORSE than the red fan boy commenters - AND NVIDIA KNOWS IT AS WELL AS I DO.)

Thanks for the "dual gpu's review".

Totally - Thursday, March 5, 2009

Dear fanboys,

Go away.

Love,

Totally

Hxx - Thursday, March 5, 2009

lol best post gj Totally

Seriously, 3 main steps to buy the right card:

1. look at benchmarks

2. buy the cheapest card with playable fps in the games u play

3. don't think its future proof - none of them are.

Mikey - Wednesday, March 4, 2009

Is this even worth the money? In terms of value, would the 4870 be the one to get?

Nfarce - Wednesday, March 4, 2009

Mikey, the 4870 is the way to go in just about all scenarios. Search for AnandTech's report from last fall on the 4870 1GB under the Video/Graphics section. The GeForce 260/216 still costs more and performs worse. Normally I'm an Nvidia fanboy, but in this segment where I'm purchasing, it's ATI/AMD hands down, no questions asked.