NVIDIA Fall Driver Update (rel 180) and Other Treats

by Derek Wilson on November 20, 2008 8:00 AM EST

Posted in: GPUs

Let's Talk about PhysX Baby

When AMD and NVIDIA both started talking about GPU-accelerated physics, the holy grail was the following scenario:

You upgrade your graphics card, but instead of throwing your old one away, you simply use it for GPU-accelerated physics. Back then NVIDIA didn't own AGEIA, and this was its way of competing with AGEIA's PPU: why buy another card when you can simply upgrade your GPU and use your old one for pretty physics effects?

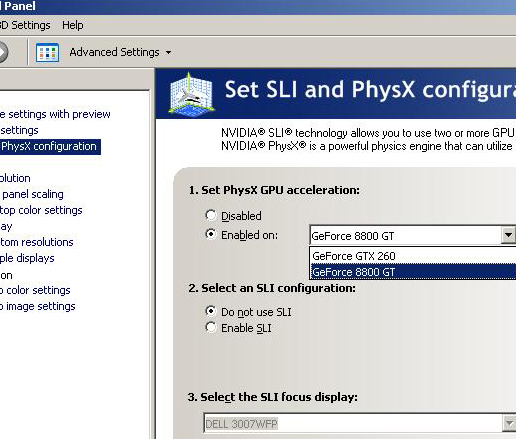

It was a great promise, but one that was never enabled in a usable way by either company until today. Previous NVIDIA drivers with PhysX support required that you hook a monitor up to the card that was to accelerate PhysX and extend the Windows desktop onto that monitor. With NVIDIA's 180.48 drivers you can now easily choose which NVIDIA graphics card you'd like to use for PhysX. Disabling PhysX, enabling it on the same GPU as the display, or enabling it on a different GPU is now as easy as picking the right radio button and selecting the card from a drop-down menu.

When we tested this, it worked. Which was nice. While it's not a fundamental change in what can be done, the driver has been refined to the point where it should have been in the first place. It is good to have an easy interface to enable and adjust the way PhysX runs on the system and to be able to pick whether PhysX runs on the display hardware (be it a single card or an SLI setup) or on a secondary card. But this really should have already been done.

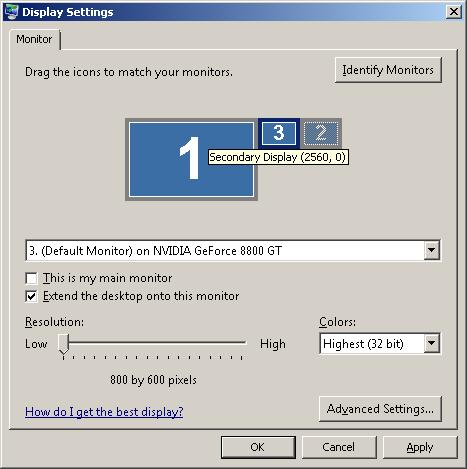

There is another interesting side effect. When we enabled PhysX on our secondary card, we noticed that the desktop had been extended onto a non-existent monitor.

Windows has a limitation of not allowing GPUs to be used unless they are enabled as display devices, which was the cause of the cumbersome issues with enabling PhysX on a secondary card in the first place. Microsoft hasn't fixed anything from their end, but NVIDIA has made all the mucking around with Windows transparent. It seems they simply tell Windows a display is connected when it actually is not. It's a cool trick, but hopefully future versions of Windows will not require such things.

Mirror's Edge: The First Title to Impress us with GPU PhysX?

Around every single GPU release, whether from AMD or NVIDIA, we get a call from NVIDIA telling us to remember that only on NVIDIA hardware can you get PhysX acceleration (not physics, but PhysX). We've always responded by saying that none of the effects enabled by PhysX in the games that support it are compelling enough for us to recommend an underperforming NVIDIA GPU over a more competitive AMD one. Well, NVIDIA promises that Mirror's Edge, upon its release in January for the PC, will satisfy our needs.

We don't have the game, nor do we have a demo for anything other than consoles, but NVIDIA promises it'll be good and has given us a video that we can share. To underline the differences between the PhysX and non-PhysX versions, here's what to look for: glass fragments are a particle system without PhysX and persistent objects with it (meaning they stick around and can be interacted with). Glass fragments are also smaller and more abundant with PhysX. Cloth is non-interactive and can't be ripped, torn, or shot through without PhysX (it will either not be there at all or it won't respond to interaction). Some of the things not shown really clearly are that smoke responds to and interacts with characters, and that leaves and trash will blow around to help portray wind and in response to helicopters.

Another aspect to consider is the fact that PhysX effects can be run without GPU acceleration at greatly reduced performance. This means that AMD users will be able to see what they're missing. Or maybe an overclocked 8-core (16-thread) Nehalem will do the trick? Who knows... we really can't wait to get our hands on this one to find out.

We'll let you be the judge: is this enough to buy an NVIDIA GPU over an AMD one? What if the AMD one were a little cheaper or a little faster, would it still be enough?

We really want to see what the same sequence would have looked like with PhysX disabled. Unfortunately, we don't have a side-by-side video, and that could also significantly impact our recommendation. We are very interested in what Mirror's Edge has to offer, but it looks like getting the full picture means waiting for the game to hit the streets.

63 Comments

superkdogg - Friday, November 21, 2008

Evidently they're were at least to errors with the grammer of the 3 their's in this article...."and decided that their never should"

MarcLeFou - Friday, November 21, 2008

I've always enjoyed coming here because you're not afraid to say your opinions and slam any wrongdoing/less than perfect situation by a company (such as ATI's notorious paper launches in the last few years and the situation of monthly driver releases that, you feel, are less than ideal).

On this one however, the author seems to be looking for excuses for why Nvidia is coming up short on its "huge performance increase" promises. I can't imagine Anandtech not slamming somebody hard for unproven/false claims but somehow, this didn't happen here.

Am I the only one who feels that the author of this article showed a bias toward Nvidia?

chizow - Friday, November 21, 2008

They're not covering up for Nvidia, they show clearly the driver does nothing for them in the games they tested. Derek leaves open the possibility that they didn't test the games where improvements were promised, or did not have the proper GPU configuration. If you look at the driver release it shows exactly which games, with a possible % increase dependent on system and GPU configuration. More specifically, improvements might have been for SLI, and AT did not test any of the games that were specifically covered in NV's reviewer package for the 180 driver (the new titles covered in other games like L4D, Fallout3, Dead Space, Far Cry 2 etc.).

Simply put, if Nvidia claims a % increase in certain titles, or better performance than the competition in specific titles and you go and test completely different titles that don't show any increase, what have you really proven?

GiantPandaMan - Friday, November 21, 2008

I'd agree. Both ATI and nVidia have problems. I'm actually happy to learn about the regression testing problem, and being given a final full explanation by Derek was enlightening. HOWEVER, to say nVidia's way of doing things is that much better is wrong. After all, didn't it cause the Vista crapup? What about what nVidia did with Assassin's Creed so ATI owners got screwed? This is how a company with a better driver program acts?

For the record I own both a 4870 and an 8800GT. Honestly? Barely ever had a problem with either company's drivers crashing. However, the 8800GT took ages to be compatible with my GatewayFHD24 in Vista, while it was fine with XP.

chizow - Friday, November 21, 2008

How is saying one way of doing things is better than the other wrong when Derek gives clear and concise examples of why? It really comes down to this: if the common solution for a bug or problem with the latest driver is to revert to prior driver revisions, the monthly update program has failed. If a monthly driver needs daily hot fixes and hot fixes for those hot fixes and hot fixes that break one thing that was just fixed, the system is clearly flawed. It's a freaking joke and now people are finally seeing how bad the situation really is. The scariest thing is it's only going to get worse until they make some fundamental changes.

Derek did also slam Nvidia (wrongly, imo) over Assassin's Creed losing DX10.1 support, but let's take another look at that situation. Ubisoft also developed Far Cry 2, another DX10.1 title, and we ONCE AGAIN have rendering errors, the reason cited for AC's 10.1 removal, with ATI hardware. Now I'm not sure how you can come to the conclusion that Nvidia is somehow conspiring, influencing or causing games to run buggy on ATI hardware without looking at the drivers or the hardware itself. ATI is now 2 for 2 with DX10.1 titles and rendering errors, so I think we can drop the conspiracy theory for now.

Instead of blaming Nvidia for ATI's problems or defending their broken driver model, you need to look at how ATI can improve support. Nvidia's TWIMTBP program has benefits beyond a sticker on a box. Working with developers and supplying them with free hardware does pay off in helping launch a well supported title with fewer problems at launch. Nvidia owners see this in the form of new drivers that coincide with big game launches or blurbs about how the game was developed on Nvidia hardware or how the game runs best on Nvidia hardware.

GiantPandaMan - Friday, November 21, 2008

Umm, there's far more than 2 DX10.1 games. Other than the two you cited, which ones have problems with ATI drivers?

The two games you cited with problems are from Ubisoft. Hint, HINT?

I've acknowledged in a previous post and in the last post that ATI does have problems with their drivers that they need to address. I suggested that ATI move to releasing drivers every other month. Defending their model? That's a serious error in your comprehension if that's what you think I did. I pointed out that their model isn't worse than nVidia's.

You need to take a closer look at what is being said. Also, it's pretty clear that you're heavily biased toward nVidia. Neither you nor I know the exact dealings with TWIMTBP, but the facts are the facts. AC worked great on ATI hardware, and then it didn't. Ubisoft, and Ubisoft alone out of current game publishers, has done a poor job getting their game to work properly for a significant portion of the computer gaming population. I've bought about 6 games (L4D, Fallout3, Supreme Commander, Witcher:EE, etc.) in the past few months, and none of them have had problems with my ATI video card.

That fact leads me to believe that it's fairly likely that Ubisoft is incompetent when it comes to properly ensuring their games work with ATI hardware. Either that or they have a sweetheart deal with nVidia, take your pick.

chizow - Friday, November 21, 2008

Name them. Oh right you can't. That's because there's only 2 DX10.1 titles, actually just 1 since AC no longer applies.

Yes, the only dev that actually bothers implementing DX10.1 can't seem to get it working on ATI hardware, so they just patch it out instead. The FC2 render errors were fixed by ATI in one of the myriad Hot Fixes btw, but I've heard the stuttering issue in DX10 is still around. Time to patch out DX10.1 support again?

Saying it's "wrong to say Nvidia's driver model is better than ATI" is defending ATI's driver model when it was so clearly detailed why Nvidia's driver model was better. If someone claims Nvidia's driver model is better and you say ATI's driver model isn't worse than Nvidia's, it's pretty clear you are defending ATI's driver model, just as you did again here.

No, I think you do, as it's clear you're willing to work off half-truths and rumors alone. I do prefer Nvidia cards but that doesn't mean I wouldn't buy an ATI card tomorrow if it suited my needs.

Not the exact dealings but the basics are in the public domain and easily referenced. Nvidia has said numerous times they supply hardware, engineering resources and driver support for TWIMTBP titles. This is easily verified when you open up the game manual and it says:

AN IMPORTANT NOTE REGARDING GRAPHICS AND HAVING THE BEST POSSIBLE EXPERIENCE

....The game was developed and tested using NVIDIA GeForce 8,9, and GTX 200 Series graphics cards and the intended experience can be more fully realized....etc etc.

Or when there's a blurb by the developers accompanying a driver update for a high profile title like Far Cry 2 about how the game was developed on NV hardware......

Getting hardware in the hands of devs during development is to me the most important difference, as it's clear NV is more proactive in their driver development, and that's why their drivers are consistently better than ATI's for new games.

Worked great? Hardly.

http://techreport.com/discussions.x/14707

That's about the best summary of events you'll find. The render errors were reproduced independently by numerous review sites. If you own an ATI card and want DX10.1 support that badly and the errors don't bother you, it's as simple as not patching the game.

But no Assassin's Creed....and no Far Cry 2 and certainly no other DX10.1 games (since there are none).

Or maybe ATI needs to take a hard look at their driver program and make some changes instead of hoping for the best and playing whack-a-mole when problems start popping up.

piroroadkill - Friday, November 21, 2008

"This means that AMD users will be able to see what their missing."

Oh lawd

rocky1234 - Friday, November 21, 2008

To the one who asked about ever owning an ATI card: you know what, I own both Nvidia & ATI & I must say the ATI card & drivers have been way better than what I have had with Novidia thus far. I spent 4 months waiting for Nvidia to fix the Vista problems they were having & finally gave up on them. I moved that card to a Windows XP machine & now only have a BSOD maybe once a week & yes, it's always Novidia related. Their Windows support simply sucks. They focus too much on the games, or on paying the game devs for a few more frames, rather than on having their drivers work with Windows itself.

With AMD/ATI I have not had a BSOD from their drivers yet & I have had this card for a while now. Yes, I have had some random game crashes here & there & yes, they could have been caused by video drivers or just crappy game code. My point here is all of my games work fine with the ATI card & drivers & I have just over 86 games installed & all work fine. Would I ever go back to Novidia? Frak no. If it took them this long to fix the Vista issues & some lingering XP problems, I'd hate to be a Novidia owner when Windows 7 comes out.. too funny. Even funnier is that now Apple owners are gonna see just how good Novidia drivers are as well.

Don't get me wrong, Novidia hardware is great, very well designed. It's just too bad that all they care about is getting those 3 extra frames per second in any given game, that they will pretty much do anything or pay anybody to get those frames, & that they forget about things like overall system compatibility.

So I will take the once a month driver updates just because I know that if there is a problem with a new game it may be addressed & I will not have to wait 5 or 6 months for a fix from Nvidia.

Just my thoughts on this & having owned both companies cards I have seen problems on both sides I just think that AMD/ATI is the lesser of the 2 evils at the moment.