The Dark Knight: Intel's Core i7

by Anand Lal Shimpi & Gary Key on November 3, 2008 12:00 AM EST - Posted in CPUs

Thread It Like It's Hot

Hyper Threading was a great technology, simply first introduced on the wrong processor. The execution units of any modern microprocessor are power hungry and consume a lot of die space; the last thing you want is for them to sit idle with nothing to do. So you implement various tricks to keep them fed and working as often as possible. You increase cache sizes so they rarely have to wait on main memory, you integrate a memory controller to ensure that trips to main memory are as speedy as possible, you prefetch data that you think you'll need in the future, you predict branches, and so on.

Enabling simultaneous multi-threaded (SMT) execution is one of the most power efficient uses of a microprocessor's transistor budget, as it requires a very minimal increase in die size but can significantly increase, in the best case nearly double, the utilization of a CPU's execution units. SMT, or as Intel calls it, Hyper Threading, does this by simply dispatching two threads of instructions to an individual processor core at the same time without increasing the available execution resources. Parallelism is paramount to extracting peak performance out of any out-of-order core. Double the number of instructions being examined for parallelism and you increase your likelihood of getting work done without waiting on other instructions to retire or data to come back from memory.
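To put a rough number on that intuition, here is a back-of-the-envelope sketch. It is a toy probability model, not anything Intel has published: it assumes each thread keeps the execution units busy for some fraction of cycles and that the two threads stall independently, with no contention for shared resources. The function name `smt_utilization` is made up for illustration.

```python
def smt_utilization(single_thread_utilization, threads=2):
    """Toy estimate of execution-unit utilization with SMT.

    Assumption (ours, not Intel's): each thread independently has work
    ready in a fraction `single_thread_utilization` of cycles, so the
    units sit idle only when every thread is stalled at the same time.
    """
    if not 0.0 <= single_thread_utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    idle_fraction = (1.0 - single_thread_utilization) ** threads
    return 1.0 - idle_fraction


if __name__ == "__main__":
    for u in (0.25, 0.5, 0.75):
        two_threads = smt_utilization(u)
        print(f"one thread: {u:.0%} busy, two threads: {two_threads:.1%} busy")
```

Under these assumptions a core that is busy half the time with one thread would be busy 75% of the time with two. Real gains depend on how much the threads fight over caches and execution ports, which this sketch ignores entirely.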

In the Pentium 4 days, enabling Hyper Threading required less than a 5% increase in die size but resulted in anywhere from a 0 to 35% increase in performance. On the desktop we rarely saw a big boost in performance except in multitasking scenarios, but these days multithreaded software is far more common than it was six years ago when Hyper Threading first made its debut.

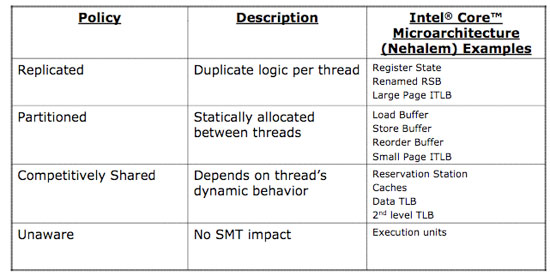

This table shows what needed to be added, partitioned, shared or unchanged to enable Hyper Threading on Intel's Core microarchitecture

When the Pentium 4 made its debut, however, all we really had to worry about was die size; power consumption had yet to become a big issue (which the P4 promptly changed). These days power efficiency, die size and performance all go hand in hand, and thus the benefits of Hyper Threading must also be looked at from the power perspective.

I took a small sampling of benchmarks ranging from things like POV-Ray, which scales very well with more threads, to iTunes, an application that couldn't care less if you had more than two cores. What we're looking at here is the performance and total system power impact of Hyper Threading:

| Intel Core i7-965 (Nehalem 3.2GHz) | POV-Ray 3.7 Beta 29 | POV-Ray System Power | Cinebench R10 1CPU | Cinebench System Power | Race Driver GRID | GRID System Power |
| --- | --- | --- | --- | --- | --- | --- |
| HT Disabled | 3239 PPS | 207W | 4671 CBMarks | 161.8W | 103 fps | 300.7W |
| HT Enabled | 4202 PPS | 233.7W | 4452 CBMarks | 159.5W | 102.9 fps | 302W |

Looking at POV-Ray we see a 30% increase in performance for a roughly 13% increase in total system power consumption, which comfortably exceeds Intel's 2:1 rule for performance improvement vs. increase in power consumption. The single threaded Cinebench test shows a slight decrease in both performance (under 5%) and power consumption (negligible), while Race Driver GRID is essentially unchanged on both counts.
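For anyone who wants to check the arithmetic, the percentages can be recomputed from the table above with a few lines of Python. This is only a sketch: the numbers are copied from the table, while the dictionary layout and the helper names `pct_change` and `ht_impact` are our own.

```python
# Figures copied from the table above: (score, total system power in watts).
results = {
    "POV-Ray 3.7 Beta 29 (PPS)": {"off": (3239.0, 207.0), "on": (4202.0, 233.7)},
    "Cinebench R10 1CPU (CBMarks)": {"off": (4671.0, 161.8), "on": (4452.0, 159.5)},
    "Race Driver GRID (fps)": {"off": (103.0, 300.7), "on": (102.9, 302.0)},
}


def pct_change(before, after):
    """Percentage change going from `before` to `after`."""
    return (after - before) / before * 100.0


def ht_impact(entry):
    """Return (performance change %, power change %) from enabling HT."""
    perf_off, power_off = entry["off"]
    perf_on, power_on = entry["on"]
    return pct_change(perf_off, perf_on), pct_change(power_off, power_on)


if __name__ == "__main__":
    for name, entry in results.items():
        perf, power = ht_impact(entry)
        print(f"{name}: performance {perf:+.1f}%, power {power:+.1f}%")
```

For POV-Ray this works out to about 29.7% more performance for about 12.9% more power, a ratio of roughly 2.3:1, which is above the 2:1 threshold.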

When Hyper Threading improves performance, it does so at a reasonable increase in power consumption. When performance isn't impacted, neither is power consumption. This time around Hyper Threading has no real drawbacks. Before, the only way to get it was with a processor that was too hot and barely competitive; today Intel offers it on an architecture that we actually like. Hyper Threading is actually the first indication of Nehalem's true strength: not performance, but rather power efficiency...

73 Comments


npp - Tuesday, November 4, 2008 - link

Well, the funny thing is THG got it all messed up, again - they posted a large "CRIPPLED OVERCLOCKING" article yesterday, and today I saw a kind of apology from them - they seem to have overlooked a simple BIOS switch that prevents the load through the CPU from rising above 100A. Having a month to prepare the launch article, they didn't even bother to tweak the BIOS a bit. That's why I'm not taking their articles seriously, not because they are biased towards Intel or AMD - they are simply not up to the standards (especially those here @anandtech).

gvaley - Tuesday, November 4, 2008 - link

Now give us those 64-bit benchmarks. We already knew that Core i7 will be faster than Core 2, we even knew how much faster.

Now, it was expected that 64-bit performance will be better on Core i7 than on Core 2. Is that true? Draw a parallel between the following:

Performance jump from 32- to 64-bit on Core 2

vs.

Performance jump from 32- to 64-bit on Core i7

vs.

Performance jump from 32- to 64-bit on Phenom

badboy4dee - Tuesday, November 4, 2008 - link

and what are those numbers on the charts there? Are they frames per second? Higher is better then, if that's what they are. Charts need more detail or explanation to them dude!

TSM

MarchTheMonth - Tuesday, November 4, 2008 - link

I don't believe I saw this anywhere else, but the spots for the cooler on the mobo, are they the same as on LGA 775, i.e. can we use (non-Intel) coolers that exist now for the new socket?

marc1000 - Tuesday, November 4, 2008 - link

no, the new socket is different. the holes are 80mm apart, on socket 775 they were 72mm apart.

Agitated - Tuesday, November 4, 2008 - link

Any info on whether these parts provide an improvement on virtualized workloads or maybe what the various vm companies have planned for optimizing their current software for nehalem?

yyrkoon - Tuesday, November 4, 2008 - link

Either I am not reading things correctly, or the 130W TDP does not look promising for the end user such as myself that requires/wants a low powered high performance CPU.

The future in my book is using less power, not more, and Intel does not right now seem to be going in this direction. To top things off, the performance increase does not seem to be enough to justify this power increase.

Being completely off grid (100% solar / wind power), there seem to be very few options . . . I would like to see this change. Right now as it stands, sticking with the older architecture seems to make more sense.

3DoubleD - Tuesday, November 4, 2008 - link

130W TDP isn't much worse than previous generations of quad core processors, which were ~100W TDP. Also, TDP isn't a measure of power usage, but of the required thermal dissipation of a system to maintain an operating temperature below a set value (e.g. Tjmax). So if Tjmax is lower for i7 processors than it is for past quad cores, it may use the same amount of power, but have a higher TDP requirement. The article indicates that power draw has increased, but usually with a large increase in performance. Page 9 of the article has determined that this chip has a greater performance/watt than its predecessors by a significant margin.

If you are looking for something that is extremely low power, you shouldn't be looking at a quad core processor. Go buy a laptop (or an EeePC-type laptop with an Atom processor). Intel has kept true to its promise of a 2% performance increase for every 1% power increase (i.e. a higher performance per watt value).

Also, you would probably save more power overall if you just hibernate your computer when you aren't using it.

Comdrpopnfresh - Monday, November 3, 2008 - link

Do differing cores have access to one another's L2? Is it directly, through QPI, or through L3?

Also, is the L2 inclusive in the L3; does the L3 contain the L2 data?

xipo - Monday, November 3, 2008 - link

I know games are not the strong area of nehalem, but there are 2 games i'd like to see tested: Unreal T. 3 and Half Life 2 E2.. just to know how nehalem handles those 2 engines ;D