AMD Radeon HD 4830: Affordable Performance And Heavy Competition

by Derek Wilson on October 23, 2008 12:01 AM EST - Posted in GPUs

The Card and The Test

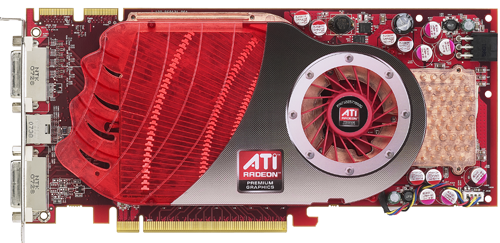

The Radeon HD 4830 reference board we tested is based on a revised design put together for this part, but AMD also built this chip to fit into existing 4850 board designs. The maximum power envelope is the same, but actual power usage will be lower. AMD has informed us that initial cards based on the 4830 will use 4850 boards, but that down the line we should start seeing cards based on the more compact 4830 reference design.

As for how the GPU stacks up against some of the other offerings from AMD, here's a handy chart:

| | ATI Radeon HD 4870 | ATI Radeon HD 4850 | ATI Radeon HD 4830 | ATI Radeon HD 4670 |
| --- | --- | --- | --- | --- |
| Stream Processors | 800 | 800 | 640 | 320 |
| Texture Units | 40 | 40 | 32 | 32 |
| ROPs | 16 | 16 | 16 | 8 |
| Core Clock | 750MHz | 625MHz | 575MHz | 750MHz |
| Memory Clock | 900MHz (3600MHz data rate) GDDR5 | 993MHz (1986MHz data rate) GDDR3 | 900MHz (1800MHz data rate) GDDR3 | 1000MHz (2000MHz data rate) GDDR3 |
| Memory Bus Width | 256-bit | 256-bit | 256-bit | 128-bit |
| Frame Buffer | 512MB/1GB | 512MB | 512MB | 512MB |
| Transistor Count | 956M | 956M | 956M | 514M |
| Manufacturing Process | TSMC 55nm | TSMC 55nm | TSMC 55nm | TSMC 55nm |
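The memory clock and bus width rows can be combined into a peak memory bandwidth figure for each card. The sketch below is illustrative rather than part of the original test data: it assumes the usual formula (effective data rate times bus width, divided by 8 bits per byte), and the function and dictionary names are made up for this example.

```python
# Peak theoretical memory bandwidth from the chart's data rate and bus width.
# Assumption: bandwidth = data rate (MT/s) * bus width (bits) / 8 bits per byte.
# CARDS and peak_bandwidth_gb_s are hypothetical names used only for this sketch.

CARDS = {
    "HD 4870": (3600, 256),  # (data rate in MHz, bus width in bits)
    "HD 4850": (1986, 256),
    "HD 4830": (1800, 256),
    "HD 4670": (2000, 128),
}


def peak_bandwidth_gb_s(data_rate_mhz: float, bus_width_bits: int) -> float:
    """Return peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return data_rate_mhz * 1e6 * bytes_per_transfer / 1e9


if __name__ == "__main__":
    for name, (rate, width) in CARDS.items():
        print(f"{name}: {peak_bandwidth_gb_s(rate, width):.1f} GB/s")
```

Under these assumptions the 4830 has roughly 57.6 GB/s to work with, a little below the 4850 and about half of what the GDDR5-equipped 4870 gets from the same 256-bit bus.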

Based on the information we know about the GPU, the 4830 is clearly just an RV770 with two SIMDs disabled. While AMD does have safeguards built into their GPUs to help improve yield, nothing is perfect. There will be ICs that come off the line that simply can't function properly at the desired speed, or with all the hardware enabled, to make it onto a higher end card. Chip makers will save these parts and bin them for possible use in lower end products later. We also sometimes see higher end binned chips released as special edition overclocked models, so it does work both ways.
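The "two SIMDs disabled" figure can be checked against the chart with a little arithmetic. This sketch assumes the commonly cited RV770 layout, which is not stated in this article: 10 SIMD cores, each with 80 stream processors and 4 texture units. The constant and function names are hypothetical.

```python
# Infer how many SIMD cores are disabled from the published unit counts.
# Assumptions (not from this article): a full RV770 has 10 SIMD cores,
# each contributing 80 stream processors and 4 texture units.

RV770_SIMDS = 10
SP_PER_SIMD = 80
TEX_PER_SIMD = 4


def disabled_simds(stream_processors: int, texture_units: int) -> int:
    """Return the number of disabled SIMDs implied by the unit counts."""
    active_by_sp, sp_rem = divmod(stream_processors, SP_PER_SIMD)
    active_by_tex, tex_rem = divmod(texture_units, TEX_PER_SIMD)
    if sp_rem or tex_rem or active_by_sp != active_by_tex:
        raise ValueError("unit counts do not match a whole number of SIMDs")
    if not 0 <= active_by_sp <= RV770_SIMDS:
        raise ValueError("more SIMDs than a full RV770 provides")
    return RV770_SIMDS - active_by_sp


if __name__ == "__main__":
    print("HD 4850:", disabled_simds(800, 40))  # full chip
    print("HD 4830:", disabled_simds(640, 32))  # the part reviewed here
```

With those assumptions, 640 stream processors and 32 texture units correspond to 8 active SIMDs, which is consistent with two being disabled.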

The price of the 4830 means that it will see higher volume sales than either the 4850 or the 4870. That's just how it works: more people buy cheaper parts. The interesting twist here is that the RV770 is being used in 3 different parts ranging from $130 to $300 with very little time lapse between the initial release and the current situation.

While we still would really love to see a top to bottom launch on day one of a new architecture some time, this is very impressive in its own right. The delay between the launch of the 4870 and the 4830 is likely due to the fact that AMD needed to maintain enough supply to meet demand for its two higher end parts while steadily building up a supply of chips for use in the 4830. As demand will be higher, stockpiling chips that can only run at 4830 specification for a few months will certainly help meet the needs of the market.

Now that we know what we're testing, let's take a look at our test platform.

| Test Setup | |
| --- | --- |
| CPU | Intel Core 2 Extreme QX9770 @ 3.20GHz |
| Motherboard | EVGA nForce 790i SLI |
| Video Cards | ATI Radeon HD 4870, ATI Radeon HD 4850, ATI Radeon HD 4830, ATI Radeon HD 4670, NVIDIA GeForce GTX 260 core 216, NVIDIA GeForce GTX 260, NVIDIA GeForce 9800 GTX+, NVIDIA GeForce 9800 GT |
| Video Drivers | Catalyst 8.11 Beta, ForceWare 178.24 |
| Hard Drive | Seagate 7200.9 120GB 8MB 7200RPM |
| RAM | 4 x 1GB Corsair DDR3-1333 7-7-7-20 |
| Operating System | Windows Vista Ultimate 64-bit SP1 |
| PSU | PC Power & Cooling Turbo Cool 1200W |

56 Comments

mczak - Thursday, October 23, 2008

While the text says 1 simd disabled (which is clearly wrong), it seems AMD sent out review samples with 3 simds disabled instead of 2, hence the review samples being slower than they should be (http://www.techpowerup.com/articles/other/155). So did you also test such a card?

7Enigma - Thursday, October 23, 2008

Where is the Assassin's Creed data? Where is the broken line graph for The Witcher showing performance at different resolutions? I've commented on the last several articles on your data analysis and frequently dislike the chosen resolution you use for the horizontal bar graph, but at least you had the broken line graph to compare with.

With the vast majority of people using 17-19" LCDs with 1280X1024 (especially in the price range of the card being reviewed), it seems kind of strange to me the higher resolutions for 20-22" LCDs are the ones being selected for the large bar graphs. I know the playability difference between 60fps and 70fps is moot, but the trend it shows is very important for those that do not plan on upgrading to a larger monitor and want to know which is the better card.

For instance the only data we see for The Witcher is at 20-22" resolutions. This single data point shows the AMD card 9% faster than the Nvidia at a just playable (IMO) framerate. As that is likely the average framerate, a 9% difference could be huge when you get into a minimum situation. If I have a 17-19" monitor this data is worthless. Does the trend of AMD being 9% faster hold true at the lower resolution, or does the Nvidia card pull closer?

And while I'm repeating myself from previous articles, I beg of you, PLEASE PLEASE PLEASE try to keep the colors of the bar graphs the SAME as in the broken line graphs. It is very frustrating to follow the wrong card from bar graph to line graph because the colors do not match up between them.

Overall good review, there are just these nagging issues that would make the article great.

strikeback03 - Thursday, October 23, 2008

I only know two people using 1280x1024 LCDs, and both would consider $130 way too much to spend on any computer component. I'd guess there are more people these days using 17-19" widescreens with 1280x800 or 1440x900 resolution than the 1280x1024 screens, as these widescreens are what has been common in packages at B&M stores for a while now.

7Enigma - Thursday, October 23, 2008

And your point is? Those resolutions you listed are right around the 1280X1024 resolution I was referring to. It's not the height/width of the monitor that matters with these cards, it is the overall pixel resolution that can have differences between them. A 1280X1024 uses 1.3 million pixels per screen. A 1440X900 uses almost exactly the same number of total pixels, so you could directly compare the results unless the video card had some weird resizing issues. A 1280X800 uses 1.0 million or about a quarter less so this difference could be even larger between the cards than in my original example.

GlItCh017 - Thursday, October 23, 2008

For $130.00 I would not be disappointed with that card. Then you consider it to be somewhat cheaper on newegg maybe as low as $100.00 and you got yourself a bargain, overclock it a little or buy an OC version even, not too shabby.

Butterbean - Thursday, October 23, 2008

I jumped to "Final Words" and boom - no info on 4830 but right into steering people to an Nvidia card. That's so Anandtech.

mikepers - Thursday, October 23, 2008

Actually what Derek says is to shop around and get the best price between the two. If there is in fact a $20 to $30 difference then get the 9800gt. If not then get the 4830.

Performance is about the same and right now you can get a 9800gt for $100 after rebate. (for $110 get it delivered with a copy of COD4 included)

This doesn't even consider the power advantage of the 9800gt. Assuming you leave your PC on all the time then that little 14 watt idle difference adds up. At the 20 cents per kWh I pay here in Long Island, NY the 9800gt would save me about $24.50 a year. So personally, I would definitely get the 9800gt unless I could find the 4830 for a decent amount less than the 9800gt.
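The yearly figure quoted above can be reproduced with a short calculation. This is only a sketch of the arithmetic, assuming the PC idles 24 hours a day for 365 days; the function name is made up for illustration.

```python
# Yearly electricity cost of a constant power difference.
# Assumption: the machine idles around the clock, 365 days a year.
# annual_cost_usd is a hypothetical helper, not from the article.

HOURS_PER_YEAR = 24 * 365  # 8760 hours


def annual_cost_usd(watts: float, usd_per_kwh: float,
                    hours: float = HOURS_PER_YEAR) -> float:
    """Cost in dollars of drawing `watts` continuously for `hours`."""
    kwh = watts / 1000 * hours
    return kwh * usd_per_kwh


if __name__ == "__main__":
    # 14 W idle difference at $0.20 per kWh
    print(f"${annual_cost_usd(14, 0.20):.2f} per year")
```

A 14 W difference works out to about 122.6 kWh per year, or roughly $24.53 at $0.20 per kWh, in line with the "about $24.50" estimate. A machine that is switched off overnight would see proportionally less.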

Spoelie - Thursday, October 23, 2008

Didn't really notice it the first read through and most of the time I don't concur with bias allegations.

However it seems in this case the paragraphs could have been rearranged to 1-4-5-2-3-6 (no rewording necessary) and the conclusion would have said the same thing, only focusing a bit more obviously on the product at hand than what a great deal the nvidia card is.

DerekWilson - Friday, October 24, 2008

that's actually a good suggestion. done.

Hanners - Thursday, October 23, 2008

"Based on the information we know about the GPU, the 4830 is clearly just an RV770 with one SIMD disabled."

Don't you mean two SIMD cores?