Nehalem - Everything You Need to Know about Intel's New Architecture

by Anand Lal Shimpi on November 3, 2008 1:00 PM EST. Posted in CPUs

...and Now We Understand Why: Hyper Threading

Years ago I asked Pat Gelsinger what he was most excited about in the microprocessor industry. He answered: threading. This was during the early days of Hyper Threading, but since the demise of the Pentium 4 we never really got another look at HT on any of the subsequent microprocessors based on Core.

Hyper Threading is Intel’s marketing name for simultaneous multi-threading (SMT), the ability to fetch instructions from two threads at the same time instead of just a single one. The OS sees an HT-enabled processor as multiple processors, in this case two, and sends two threads of instructions to the CPU.
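To make the "OS sees multiple processors" point concrete, here is a minimal Python sketch. The helper `logical_processors` is a hypothetical name introduced for illustration; the only real API used is `os.cpu_count()`, which reports logical (not physical) processors and may return `None` on some platforms.

```python
import os


def logical_processors(physical_cores: int, threads_per_core: int = 2) -> int:
    """Processors the OS would enumerate for a chip with SMT enabled.

    Hypothetical helper: threads_per_core=2 matches the two-thread
    Hyper Threading described in the text; 1 models HT disabled.
    """
    if physical_cores < 1 or threads_per_core < 1:
        raise ValueError("counts must be positive")
    return physical_cores * threads_per_core


if __name__ == "__main__":
    # os.cpu_count() counts logical processors, so on an HT-enabled
    # machine it is typically twice the number of physical cores.
    print("logical processors on this machine:", os.cpu_count())
    print("one core with HT looks like:", logical_processors(1))
```

The scheduler treats each logical processor as a place to run a thread, which is why a single HT-enabled core can be handed two threads at once.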

Nehalem sees the return of Hyper Threading and it is met with much, much better performance than we ever saw with Pentium 4 for a number of reasons:

1) Nehalem has much more memory bandwidth and larger caches than Pentium 4, giving it the ability to get data to the core faster and more predictably.

2) Nehalem is a much wider architecture than Pentium 4; taking advantage of it demands the use of multiple threads per core.
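The second point can be illustrated with a toy model, not a simulation of Nehalem itself. It assumes a core that can issue up to `width` operations per cycle and threads that each have some number of ready operations in a given cycle. The function name and the per-cycle numbers are invented for illustration only.

```python
def slots_filled(width, ready_per_thread):
    """Count issue slots used over a run of cycles.

    ready_per_thread: one list per thread, giving how many operations
    that thread has ready in each cycle (made-up illustrative data).
    Each cycle the core issues at most `width` operations in total.
    """
    filled = 0
    for ready_this_cycle in zip(*ready_per_thread):
        filled += min(width, sum(ready_this_cycle))
    return filled


if __name__ == "__main__":
    width = 4                  # assumed 4-wide core
    thread_a = [2, 0, 1, 3]    # hypothetical ready ops per cycle
    thread_b = [1, 2, 0, 2]
    total_slots = width * len(thread_a)

    alone = slots_filled(width, [thread_a])
    together = slots_filled(width, [thread_a, thread_b])
    print(f"one thread:  {alone}/{total_slots} slots used")
    print(f"two threads: {together}/{total_slots} slots used")
```

In this toy run a single thread leaves most of the issue slots empty, and a second thread fills some of the gaps. The wider the core, the more empty slots one thread tends to leave, which is the intuition behind pairing a wide design with SMT.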

Just as the first Pentium 4 didn’t ship with Hyper Threading support, the architectures that Nehalem was derived from didn’t have HT either. The time is right with Nehalem for the reasons mentioned above and because there are simply many more applications that can take advantage of HT today than ever before.

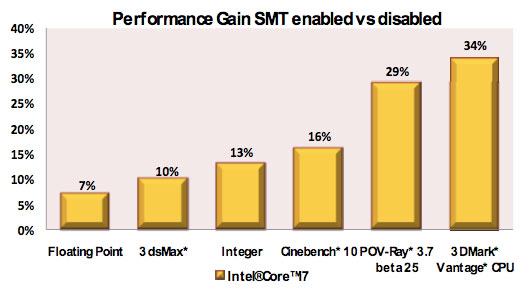

The chart below shows the performance gain from enabling HT on a Nehalem processor; no other variables were changed:

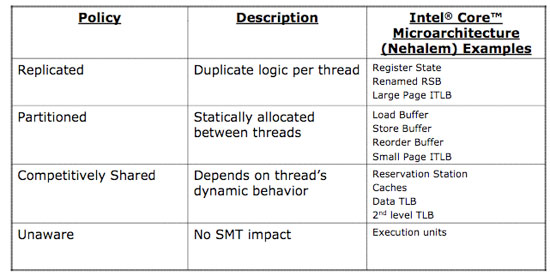

Just like on Atom, enabling HT on Nehalem required very little die area. Only the register state, renamed return stack buffer and large page instruction TLBs are duplicated. The rest of the data structures are either partitioned in half when HT is enabled or are “competitively” shared, in that they dynamically determine how much of each resource is allocated per thread.
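The difference between partitioned and competitively shared structures can be sketched in a few lines of Python. This is a simplified model under stated assumptions: the function names, the 128-entry capacity and the proportional split used when the buffer is oversubscribed are all illustrative choices, not Intel's actual allocation policy.

```python
def partitioned(capacity, demands):
    """Static split: each thread gets an equal fixed share, used or not."""
    share = capacity // len(demands)
    return [min(demand, share) for demand in demands]


def competitive(capacity, demands):
    """Dynamic split: threads take what they need while room remains.

    When total demand exceeds capacity, entries are divided in
    proportion to demand (an arbitrary policy chosen for illustration).
    """
    total = sum(demands)
    if total <= capacity:
        return list(demands)
    return [capacity * demand // total for demand in demands]


if __name__ == "__main__":
    capacity = 128          # hypothetical buffer size
    demands = [100, 10]     # one busy thread, one mostly idle thread
    print("partitioned:", partitioned(capacity, demands))
    print("competitive:", competitive(capacity, demands))
```

With a static split the busy thread is capped at half the buffer even though the other half sits mostly idle, while the competitive scheme lets it use the spare entries. The trade-off is that partitioning guarantees each thread a minimum share.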

Enabling HT was a very power efficient move for Nehalem, and you can expect that it will improve performance in a number of applications - far more regularly than it ever did with the Pentium 4.

Now it should also make sense why Intel increased buffer sizes in Nehalem, because many of those buffers now have to deal with ops coming from two threads instead of just one. That’s more instructions to decode, better utilization of the front end, more micro-ops in the pipeline, more micro-ops in the re-order engine and more micro-ops executing concurrently.

35 Comments


defter - Friday, August 22, 2008 - link

Links are 20 bits wide, regardless of encoding or whether 1, 2, 8, 16 or 20 bits are used to transmit the data. I wonder who is flamebaiting here; a previous poster just mentioned the correct link width, he wasn't talking about "usable speed".

rbadger - Thursday, August 21, 2008 - link

"Each QPI link is bi-directional supporting 6.4 GT/s per link. Each link is 2-bytes wide..."

This is actually incorrect. Each link is 20 bits wide, not 16 (2 bytes). This information is on the slide posted directly below the paragraph.

JarredWalton - Thursday, August 21, 2008 - link

It's 20 bits, but using a standard 8/10 encoding mechanism, so of the 20 bits only 16 are used to transmit data and the other four bits are (I believe) for clock signaling and/or error correction. It's the same thing we see with SATA and HyperTransport.

ltcommanderdata - Thursday, August 21, 2008 - link

Since the PCU has firmware, I wonder if it will be updatable? It would be useful if lessons learned in the power management logic of later steppings and in Westmere could be brought back to all Nehalems through a firmware update, for lower power consumption or even better performance with better Turbo mode application. Although a failed or corrupt firmware update on a CPU could be very problematic.

wingless - Thursday, August 21, 2008 - link

I thought about this when I read about it the first time too. Flashing your CPU could kill the power management or the whole CPU in one fell swoop!