AMD's Radeon HD 4870 X2 - Testing the Multi-GPU Waters

by Anand Lal Shimpi & Derek Wilson on August 12, 2008 12:00 AM EST - Posted in GPUs

These Aren't the Sideports You're Looking For

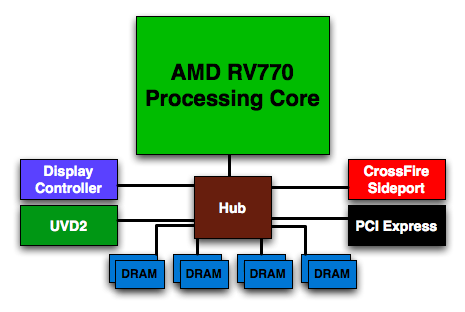

Remember this diagram from the Radeon HD 4850/4870 review?

I do. It was one of the last block diagrams I drew for that article, and I did it at the very last minute and wasn't really happy with the final outcome. But it was necessary because of that little red box labeled CrossFire Sideport.

AMD made a huge deal out of making sure we knew about the CrossFire Sideport, promising that it meant something special for single-card, multi-GPU configurations. It also made sense that AMD would do something like this. After all, the whole point of AMD's small-die strategy is to exploit the benefits of pairing multiple small GPUs. That approach is supposed to be more efficient than designing a single large GPU, and if you're going to build your entire GPU strategy around it, you had better design your chips from the start to be used in multi-GPU environments, even more so than your competitors.

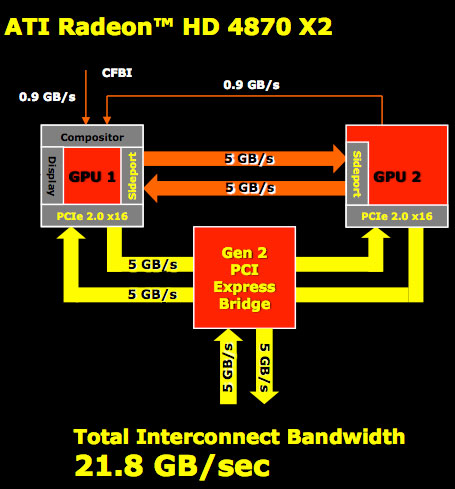

AMD wouldn't tell us much initially about the CrossFire Sideport other than that it meant some very special things for CrossFire performance. We were intrigued, but before we could get excited AMD let us know that its beloved Sideport didn't work. Here's how it would work if it were enabled:

The CrossFire Sideport is simply another high-bandwidth link between the GPUs. Data can be sent between them via a PCIe switch on the board, or via the Sideport. The two aren't mutually exclusive: using the Sideport alongside the PCIe switch doubles the amount of GPU-to-GPU bandwidth on a single Radeon HD 4870 X2. So why disable it?

According to AMD, the performance impact is negligible: average frame rates don't see a gain, although every now and then you'll see a boost in minimum frame rates. There's also an issue where power consumption could go up enough that you'd run out of power on the two PCIe power connectors on the board. Board manufacturers also have to lay out the additional lanes on the graphics card connecting the two GPUs, which does increase board costs (although ever so slightly).

AMD decided that since there's relatively no performance increase yet there's an increase in power consumption and board costs that it would make more sense to leave the feature disabled.

The reference 4870 X2 design includes hardware support for the CrossFire Sideport, in case AMD ever wants to enable it via a software update. However, there's no hardware requirement that the GPU-to-GPU connection be included on partner designs. My concern is that, in an effort to reduce costs, we'll see some X2s ship without the Sideport traces laid out on the PCB, and then if AMD happens to enable the feature in its drivers later on, some X2 users will be left in the dark.

I pushed AMD for a firm commitment on how it was going to handle future support for Sideport and honestly, right now, it's looking like the feature will never get enabled. AMD should never have mentioned that it existed, especially if there was a good chance that it wouldn't be enabled. AMD (or more specifically ATI) does have a history of making a big deal of GPU features that never get used (TruForm, anyone?), so it's not too unexpected, but it is still annoying.

The lack of anything special on the 4870 X2 to make the two GPUs work better together is bothersome. You would expect a company that has built its GPU philosophy on going after the high-end market with multi-GPU configurations to have done something more than NVIDIA when it comes to actually shipping a multi-GPU card. AMD insists that a unified frame buffer is coming; it just needs to make economic sense first. The concern here is that NVIDIA could just as easily adopt AMD's small-die strategy going forward if AMD isn't investing more R&D dollars than NVIDIA into enabling multi-GPU specific features.

The lack of CrossFire Sideport support or any other AMD-only multi-GPU specific features reaffirms what we said in our Radeon HD 4800 launch article: AMD and NVIDIA don't really have different GPU strategies, they simply target different markets with their baseline GPU designs. NVIDIA aims at the $400 - $600 market while AMD shoots for the $200 - $300 market. And both companies have similar multi-GPU strategies; AMD simply needs to rely on its own more.

93 Comments


pattycake0147 - Tuesday, August 12, 2008 - link

I've noticed the same bias recently. I've only been a member for a little over a year now and even in the short time the site has gone downhill.

sweetsauce - Tuesday, August 12, 2008 - link

Translation: I like ATI and you don't so im going to bitch. Even though my name is tech guy, i obviously have ovaries. I'm going to go cry now on ATI's behalf.

jnmfox - Tuesday, August 12, 2008 - link

Get over yourself. Pointing out facts isn't taking pot-shots.

This is just what I was looking for in a review of the X2. The numbers tell the story. In the majority of cases the X2 isn't worth it, and until AMD & NVIDIA get proper hardware implementation of multi-GPU solutions it will most likely continue to be the case.

Too little performance increase for the large increase in cost.

skiboysteve - Tuesday, August 12, 2008 - link

i completely agree with anand on this article. the lack of innovation from a company supposedly focusing on multi chip solutions is stupid

although yes, it is really fast.

and why cant they clock it lower at idle?

astrodemoniac - Tuesday, August 12, 2008 - link

... reviews I have ever seen here @ Anands. I am extremely disappointed with this so called "Review" ... hell, I have seen PREVIEWS that would put it to shame.

Oh, and what in the hell did AMD do to you that you're so obviously pissed off at them?... are you annoyed they didn't give you preferential treatment to release the review earlier? man just go back to the unbiased reviews, we're buying graphic cards, not brands.

It's like the guys writing the reviews are not gamers any more o_0

/rant

Halley - Wednesday, August 13, 2008 - link

It's no secret that AnandTech is "managed by Intel" as a user put it. Of course every one must have some source of income to support their families and themselves but it's pathetic to show such blatant biasedness.

TheDoc9 - Tuesday, August 12, 2008 - link

Anandtech isn't about gaming anymore, it's about photography and home theater. And the occasional newest intel extreme cpu.

I think Dailytech and the forums carry Anandtech these days...

DigitalFreak - Tuesday, August 12, 2008 - link

"AMD decided that since there's relatively no performance increase yet there's an increase in power consumption and board costs that it would make more sense to leave the feature disabled."

In other words, it's broken in hardware and we couldn't get it working, so we "disabled" it.

NullSubroutine - Tuesday, August 12, 2008 - link

You didn't even include test system specs or driver versions.

CreasianDevaili - Tuesday, August 12, 2008 - link

I wanted to know why you didnt retest the 4870CF setup when you obviously had some issues with it before in GRID. I noticed the 280gtx setup was retested which resulted in higher FPS. I feel that after running the game at 2560x1600 on my FW900 and also from other reviews that you had a issue with crossfire not working at that resolution. The single 4870 shouldnt be getting better FPS by that degree at 2560x1600 because it also has 512mb of vram.

So I just wanted to know why the 280gtx was special enough to retest when this review was about the 4870X2. If it is to show a good comparison then why wasnt the 4870CF, which many have and want to see, not retested as well.