NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST. Posted in GPUs

Power and Power Management

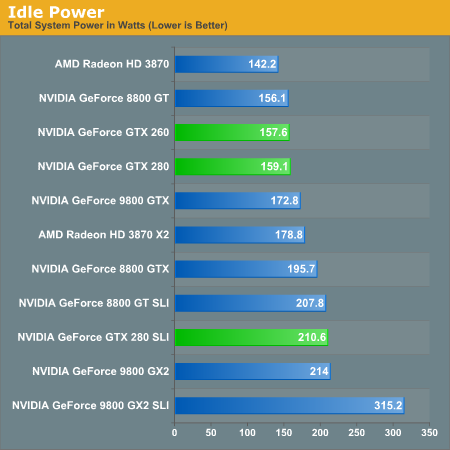

Power is a major concern of many tech companies going forward, and just adding features "because we can" isn't the modus operandi anymore. Now it's cool (pardon the pun) to focus on power management, performance per watt, and similar metrics. To that end, NVIDIA has beaten the GT200 into such submission that its 2D power consumption can reach as low as 25W. As we will show below, this can have a very positive impact on idle power for a very powerful bit of hardware.

These enhancements aren't breakthrough technologies: NVIDIA is just using clock gating and dynamic voltage and clock speed adjustment to achieve these savings. There is hardware on the GPU to monitor utilization and automatically set the clock speeds to one of several performance modes (off for hybrid power, 2D/idle, HD video, or 3D/performance). Mode changes take on the order of milliseconds. This is very similar to what AMD has already implemented.
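To make the idea of utilization-driven mode switching concrete, here is a minimal Python sketch. It is only an illustration: the four mode names come from the list above, but the function `select_mode`, its input signals, and the 30% utilization threshold are hypothetical. The real policy lives in GT200's hardware and drivers and is not described here.

```python
from enum import Enum


class PowerMode(Enum):
    """The four performance modes named in the article."""
    HYBRID_OFF = "off (hybrid power)"
    IDLE_2D = "2D/idle"
    HD_VIDEO = "HD video"
    PERF_3D = "3D/performance"


# Hypothetical threshold: above this shader utilization we assume 3D work.
PERF_3D_UTILIZATION = 0.30


def select_mode(shader_util: float,
                video_decode_active: bool,
                hybrid_igp_active: bool) -> PowerMode:
    """Pick a power mode from sampled utilization (illustrative policy only).

    shader_util         -- fraction of shader time busy, 0.0 to 1.0 (assumed signal)
    video_decode_active -- True while the video engine is decoding (assumed signal)
    hybrid_igp_active   -- True if an integrated GPU can drive the display (assumed signal)
    """
    # With nothing to do and an IGP available, the discrete GPU can be switched off.
    if hybrid_igp_active and shader_util == 0.0 and not video_decode_active:
        return PowerMode.HYBRID_OFF
    # Heavy shader load wins over everything else.
    if shader_util >= PERF_3D_UTILIZATION:
        return PowerMode.PERF_3D
    # Light load plus video decode maps to the intermediate HD video mode.
    if video_decode_active:
        return PowerMode.HD_VIDEO
    return PowerMode.IDLE_2D


if __name__ == "__main__":
    # Re-evaluating this every few milliseconds would mimic the fast mode changes.
    for sample in [(0.0, False, True), (0.05, False, False),
                   (0.10, True, False), (0.95, False, False)]:
        print(sample, "->", select_mode(*sample).value)
```

The ordering of the checks is the only real design choice in this sketch: load always takes priority over power savings, so the card never stays in a low-power mode while 3D work is pending.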

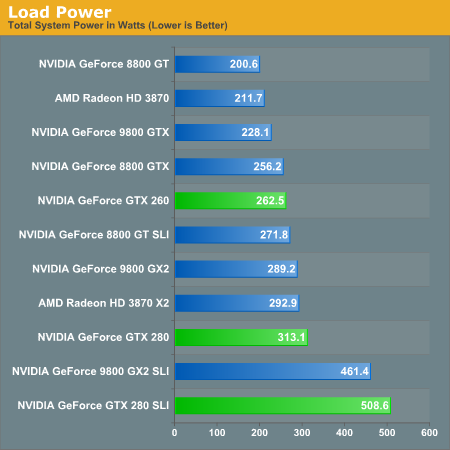

With increasing transistor count and huge GPU sizes with lots of memory, power isn't something that can stay low all the time. Eventually the hardware will actually have to do something and then voltages will rise, clock speed will increase, and power will be converted into dissipated heat and frames per second. And it is hard to say which is more impressive: the power saving features at idle, or the power draw at load.

There is an in-between stage for HD video playback that runs at about 32W, and it is good to see some attention paid to this issue specifically. This bodes well for mobile chips based on the GT200 design, but on the desktop this isn't as mission critical. Yes, reducing power (and thus what I have to pay my power company) is a good thing, but plugging a card like this into your computer is like driving an exotic car: if you want the experience you've got to pay for the gas.
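For a rough sense of what those figures mean on a power bill, the short Python sketch below converts a steady power draw into a yearly cost. The 25W and 32W numbers are the card-level figures quoted above; the electricity rate and the daily usage hours are assumptions chosen purely for illustration, and the helper name `annual_cost_usd` is our own.

```python
def annual_cost_usd(watts: float, hours_per_day: float, usd_per_kwh: float) -> float:
    """Yearly cost of a constant power draw: W -> kWh per year -> dollars."""
    kwh_per_year = watts / 1000.0 * hours_per_day * 365
    return kwh_per_year * usd_per_kwh


IDLE_2D_WATTS = 25   # 2D/idle figure from the article
HD_VIDEO_WATTS = 32  # HD video playback figure from the article

ASSUMED_RATE = 0.10          # USD per kWh, an assumption
ASSUMED_IDLE_HOURS = 8       # hours per day on the desktop, an assumption
ASSUMED_VIDEO_HOURS = 2      # hours per day of HD playback, an assumption

if __name__ == "__main__":
    idle = annual_cost_usd(IDLE_2D_WATTS, ASSUMED_IDLE_HOURS, ASSUMED_RATE)
    video = annual_cost_usd(HD_VIDEO_WATTS, ASSUMED_VIDEO_HOURS, ASSUMED_RATE)
    print(f"idle:     ${idle:.2f} per year")
    print(f"HD video: ${video:.2f} per year")
```

Under these assumptions the low-power modes cost only a few dollars a year, which is why the load figures matter far more to the bill than the idle ones.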

Idle power this low is definitely nice to see. Having high-end cards idle near midrange solutions from previous generations is a step in the right direction.

But as soon as we open up the throttle, that power miser is out the door and joules start flooding in by the bucket.

Cooling NVIDIA's hottest card isn't easy and you can definitely hear the beast moving air. At idle, the GPU is as quiet as any other high-end NVIDIA GPU. Under load, as the GTX 280 heats up, the fan spins faster and moves much more air, which quickly becomes audible. It's not GeForce FX annoying, but it's not as quiet as other high-end NVIDIA GPUs; then again, there are 1.4 billion transistors switching in there. If you have a silent PC, the GTX 280 will definitely un-silence it and put out enough heat to make the rest of your fans work harder. If you're used to a GeForce 8800 GTX, GTS or GT, the noise will bother you. The problem is that after a couple of hours of gaming, the fan doesn't want to spin back down at idle to the level it ran at when you first turned your machine on.

While it's impressive that NVIDIA built this chip on a 65nm process, it desperately needs to move to 55nm.

108 Comments

junkmonk - Monday, June 16, 2008 - link

I can has vertex data? LMFAO, hahha that was a good laugh.

PrinceGaz - Monday, June 16, 2008 - link

When I looked at that, I assumed it must be a non-native English speaker who put that in the block. I'm still not entirely sure what it was trying to convey other than that the core will need to be fed with lots of vertices to keep it busy.

Spoelie - Tuesday, June 17, 2008 - link

http://icanhascheezburger.com/

http://icanhascheezburger.com/tag/cheezburger/

chizow - Monday, June 16, 2008 - link

It's going to take some time to digest it all, but you two have done it again with a massive but highly readable write-up of a new complex microchip. You guys are still the best at what you do, but a few points I wanted to make:

1) THANK YOU for the clock-for-clock comparo with G80. I haven't fully digested the results, but I disagree with your high-low increase thresholds being dependent solely on TMU and SP. You don't mention GT200 has 33% more ROPs as well, which I think was the most important addition to GT200.

2) The SP pipeline discussion was very interesting, I read through 3/4 of it and glanced over the last few paragraphs and it didn't seem like you really concluded the discussion by drawing on the relevance of NV's pipeline design. Is that why NV's SPs are so much better than ATI's, and why they perform so well compared to deep piped traditional CPUs? What I gathered was that NV's pipeline isn't nearly as rigid or static as traditional pipelines, meaning they're more efficient and less dependent on other data in the pipe.

3) I could've lived without the DX10.1 discussion and more hints at some DX10.1 AC/TWIMTBP conspiracy. You hinted at the main reason NV wouldn't include DX10.1 on this generation (ROI) then discount it in the same breath and make the leap to conspiracy theory. There's no doubt NV is throwing around market share/marketing muscle to make 10.1 irrelevant but does that come as any surprise if their best interest is maximizing ROI and their current gen parts already outperform the competition without DX10.1?

4) CPU bottlenecking seems to be a major issue in this high-end of GPUs with the X2/SLI solutions and now GT200 single-GPUs. I noticed this in a few of the other reviews where FPS results were flattening out at even 16x12 and 19x12 resolutions with 4GHz C2D/Qs. You'll even see it in a few of your benches at those higher (16/19x12) resolutions in QW:ET and even COD4 and those were with 4x AA. I'm sure the results would be very close to flat without AA.

That's all I can think of for now, but again another great job. I'll be reading/referencing it for the next few days I'm sure. Thanks again!

OccamsAftershave - Monday, June 16, 2008 - link

"If NVIDIA put the time in (or enlisted help) to make CUDA an ANSI or ISO standard extention to a programming language, we would could really start to get excited."

Open standards are coming. For example, see Apple's OpenCL, coming in their next OS release.

http://news.yahoo.com/s/nf/20080612/bs_nf/60250

ltcommanderdata - Monday, June 16, 2008 - link

At least AMD seems to be moving toward standardizing their GPGPU support.

http://www.amd.com/us-en/Corporate/VirtualPressRoo...

AMD has officially joined Apple's OpenCL initiative under the Khronos Compute Working Group.

Truthfully, with nVidia's statements about working with Apple on CUDA in the days leading up to WWDC, nVidia is probably on board with OpenCL too. It's just that their marketing people probably want to stick with their own CUDA branding for now, especially for the GT200 launch.

Oh, and with AMD's launch of the FireStream 9250, I don't suppose we could see benchmarks of it against the new Tesla?

paydirt - Monday, June 16, 2008 - link

Tons of people are reading this article and thinking "well, performance per cost, it's underwhelming (as a gaming graphics card)." What people are missing is that GPUs are quickly becoming the new supercomputers.

ScythedBlade - Monday, June 16, 2008 - link

Lol ... anyone else catch that?

Griswold - Monday, June 16, 2008 - link

Too expensive, too power hungry and, according to other reviews, too loud for too little gain.

The GT200 could become Nvidia's R600.

Bring it on AMD, this is your big chance!

mczak - Monday, June 16, 2008 - link

G92 does not have 6 rop partitions - only 4 (this is also wrong in the diagram). Only G80 had 6.

And please correct that history rewriting - that the FX failed against radeon 9700 had NOTHING to do with the "powerful compute core" vs. the high bandwidth (ok the high bandwidth did help), in fact quite the opposite - it was slow because the "powerful compute core" was wimpy compared to the r300 core. It definitely had a lot more flexibility but the compute throughput simply was more or less nonexistent, unless you used it with pre-ps20 shaders (where it could use its fx12 texture combiners).