NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST - Posted in GPUs

Overclocked and 4GB of GDDR3 per Card: Tesla 10P

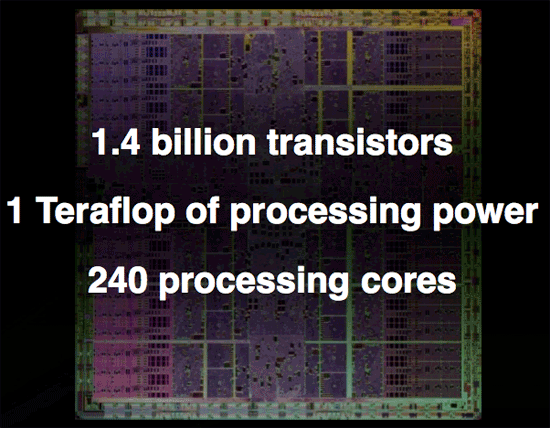

Now let's say that you want to get some real work done with NVIDIA's GT200 GPU but that 1.4 billion transistor chip just isn't enough. NVIDIA does have an answer for you, in the form of an overclocked GT200 with the 240 SPs running at 1.5GHz (up from 1.3GHz in the GTX 280) and with a full 4GB of GDDR3 memory on-board.

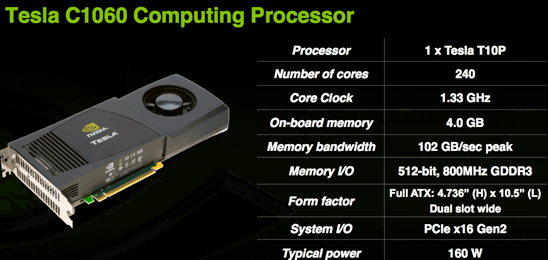

Today NVIDIA is also announcing their next generation Tesla product based on GT200 (called a T10P when used on Tesla for some reason). The workstation graphics guys will have to wait a while for a GT200 Quadro unfortunately. This new Tesla is similar to the older model in that it has much more RAM and no IO ports. The server version is also clocked higher than the desktop part because fan noise isn't an issue and data centers have lower ambient temperatures than some corner of an office under a desk.

The Tesla C1060 has a full 4GB of RAM on board. That is a lot of memory for a single card, and it should comfortably accommodate the large-scale scientific computing apps it is targeted at. This card is designed for use in workstations and is the little brother to the new monster server that is also being announced today.

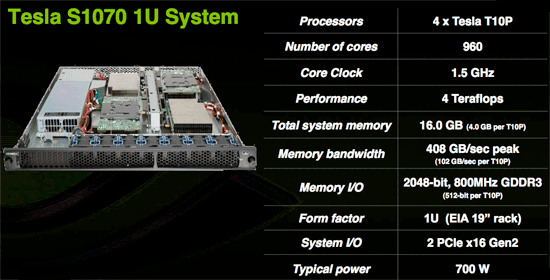

The Tesla S1070 is a 1U server containing essentially 4 C1060 cards, for a total of 16GB of RAM and 960 SPs. This unit, like the older version, connects to a host server via a PCIe cable and is designed to run code written for CUDA at incredible speeds. With 120 double precision IEEE 754r floating point units in combination with the 960 single precision IEEE 754 units, this server is a viable option for many more projects than the previous Tesla hardware, which was only capable of single precision floating point.
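To illustrate why double precision support widens the range of projects a GPU can take on, here is a minimal Python sketch of one well-known single precision limit: above 2^24, a 32-bit float can no longer represent every integer, so adding 1 can silently do nothing. The helper names (`to_float32`, `add_one`) are ours, and the code runs on the CPU using the standard `struct` module to emulate single precision rounding; it is not GT200 code.

```python
import struct


def to_float32(x: float) -> float:
    """Round a Python float (an IEEE 754 double) to the nearest single precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def add_one(x: float, single: bool) -> float:
    """Add 1.0 to x, rounding the inputs and the result to float32 when single=True."""
    if single:
        return to_float32(to_float32(x) + 1.0)
    return x + 1.0


# 2**24 is the point past which float32 cannot represent every integer.
LIMIT = float(2 ** 24)

print("single precision:", add_one(LIMIT, single=True))
print("double precision:", add_one(LIMIT, single=False))
```

In single precision the increment is lost to rounding, while the double precision sum is exact. Long-running accumulations in scientific code can hit this kind of limit, which is one reason 64-bit units matter for that market.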

Though we don't have an application to benchmark the double precision floating point hardware on GT200 yet, NVIDIA states that a GT200 can roughly match an 8-core Xeon system in DP performance. This would put the four-GPU S1070 on par with a 32-way Xeon setup at less than 700W. Needless to say, single precision code runs much, much faster and can outpace hundreds of traditional CPUs in parallel.
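The S1070 totals and the Xeon comparison are simple multiplication from the per-GPU figures, which the sketch below makes explicit. The per-GPU numbers come from this page (30 DP units per GPU is the 120-unit total divided by 4). The `Gpu` class and function names are ours, and the peak throughput estimate assumes 2 floating point operations per SP per clock (one multiply-add), which is our assumption rather than a figure quoted here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gpu:
    sps: int                 # single precision stream processors
    dp_units: int            # double precision units
    memory_gb: int           # on-board GDDR3
    shader_clock_ghz: float  # SP clock


# Tesla-class GT200 as described in the article; dp_units = 120 / 4 GPUs.
T10P = Gpu(sps=240, dp_units=30, memory_gb=4, shader_clock_ghz=1.5)


def aggregate(gpu: Gpu, count: int) -> dict:
    """Total resources for a box holding `count` identical GPUs."""
    return {
        "sps": gpu.sps * count,
        "dp_units": gpu.dp_units * count,
        "memory_gb": gpu.memory_gb * count,
    }


def xeon_cores_matched(count: int, cores_per_gpu: int = 8) -> int:
    """NVIDIA's claim: one GT200 roughly matches an 8-core Xeon system in DP."""
    return count * cores_per_gpu


def peak_sp_gflops(gpu: Gpu, count: int, flops_per_sp_per_clock: int = 2) -> float:
    """Theoretical single precision peak; flops_per_sp_per_clock is an assumption."""
    return gpu.sps * gpu.shader_clock_ghz * flops_per_sp_per_clock * count


print(aggregate(T10P, 4))
print(xeon_cores_matched(4), "Xeon cores matched in DP (per NVIDIA's claim)")
print(peak_sp_gflops(T10P, 4), "GFLOPS theoretical SP peak (assumed 2 flops/clock)")
```

These are theoretical peaks and vendor claims, not measurements; real applications will land below them.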

While these servers are expensive (though we don't have pricing), they are cheap compared to the alternatives currently out there. The fact that CUDA code can be implemented and tested on any of the 70 million NVIDIA G80+ GPUs currently in people's hands means that developers already have a platform to test and debug code on before committing to the Tesla solution. On top of that, schools are beginning to adopt CUDA as a teaching tool for parallel computing. As CUDA gains acceptance and the benefits of GPU computing are realized, more and more major markets will take interest.

The graphics card is no longer a toy. The combination of CUDA's academic acceptance as a teaching tool and the availability of 64-bit floating point in GT200 makes GPUs a mission-critical computing tool that will act as a truly disruptive technology. Not only will many major markets that depend on high performance floating-point processing realize this, but every consumer with an NVIDIA graphics card will be able to take advantage of hundreds of gigaflops of performance from CUDA-based consumer applications.

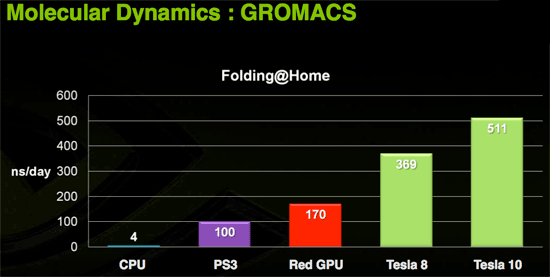

Today we have Folding@home and soon we'll have Elemental's transcoder. Imagine the audio and video processing capabilities of a PC if the GPU were actively used in software like Pro Tools and Premiere. Open source programs could easily best the processing capabilities of many solutions with dedicated hardware for these types of applications.

Of course, the major limiter to the adoption of this technology is that it is vendor specific. If NVIDIA put the time in (or enlisted help) to make CUDA an ANSI or ISO standard extension to a programming language, we could really start to get excited. Beyond that, the holy grail would be a unification of virtualized instruction sets, creating a standard low level "assembly" interface for GPU computing that would allow CUDA to compile to one target and run on any graphics card. Sort of an x86 for massively parallel work.

Right now CUDA compiles to PTX, NVIDIA's virtual instruction set, and there is no reason someone couldn't write a CUDA compiler that targets AMD's equivalent, CAL (or even develop a PTX-to-CAL wrapper that lets AMD GPUs run compiled CUDA code). Unfortunately, NVIDIA doesn't want to invest money and resources in extending functionality to AMD, and AMD doesn't want to invest money and resources in bolstering an NVIDIA-owned technology (one that could, in theory, change radically in future versions to cripple support for AMD's hardware). While standards and cooperation are a great idea, the competition in this market is such that neither NVIDIA nor AMD is willing to take a chance on benefiting the consumer if there is any risk of strengthening the competition (even at the cost of weakening the industry).
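To make the "compile to one target, run on any card" idea concrete, here is a toy Python sketch. Everything in it is hypothetical: the two-opcode instruction format is invented for illustration and is neither PTX nor CAL, and the two "backends" are ordinary CPU functions standing in for two vendors' drivers. The point is only that one compiled program can be executed by different implementations, however each chooses to schedule the work.

```python
from typing import List, Tuple

# A toy virtual instruction: (opcode, constant operand). Invented for
# illustration; this is not PTX or CAL syntax.
Instruction = Tuple[str, float]
Program = List[Instruction]

# "Compiled" once: computes y = 2 * x + 1 for every input element.
SCALE_AND_OFFSET: Program = [("mul", 2.0), ("add", 1.0)]


def apply(op: str, x: float, k: float) -> float:
    """Shared semantics of the toy instruction set."""
    if op == "mul":
        return x * k
    if op == "add":
        return x + k
    raise ValueError(f"unknown opcode: {op}")


def backend_a(program: Program, data: List[float]) -> List[float]:
    """Hypothetical vendor A: runs the whole program on one element at a time."""
    out = []
    for x in data:
        for op, k in program:
            x = apply(op, x, k)
        out.append(x)
    return out


def backend_b(program: Program, data: List[float]) -> List[float]:
    """Hypothetical vendor B: runs one instruction across all elements at a time."""
    out = list(data)
    for op, k in program:
        out = [apply(op, x, k) for x in out]
    return out


inputs = [0.0, 1.0, 2.0]
print(backend_a(SCALE_AND_OFFSET, inputs))
print(backend_b(SCALE_AND_OFFSET, inputs))
```

Both backends produce the same results from the same program, which is the property a standardized low level interface would have to guarantee across vendors.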

108 Comments


junkmonk - Monday, June 16, 2008 - link

I can has vertex data? LMFAO, hahha that was a good laugh.

PrinceGaz - Monday, June 16, 2008 - link

When I looked at that, I assumed it must be a non-native English speaker who put that in the block. I'm still not entirely sure what it was trying to convey other than that the core will need to be fed with lots of vertices to keep it busy.

Spoelie - Tuesday, June 17, 2008 - link

http://icanhascheezburger.com/

http://icanhascheezburger.com/tag/cheezburger/

chizow - Monday, June 16, 2008 - link

It's going to take some time to digest it all, but you two have done it again with a massive but highly readable write-up of a new complex microchip. You guys are still the best at what you do, but a few points I wanted to make:

1) THANK YOU for the clock-for-clock comparo with G80. I haven't fully digested the results, but I disagree with your high-low increase thresholds being dependent on solely TMU and SP. You don't mention GT200 has 33% more ROP as well which I think was the most important addition to GT200.

2) The SP pipeline discussion was very interesting, I read through 3/4 of it and glanced over the last few paragraphs and it didn't seem like you really concluded the discussion by drawing on the relevance of NV's pipeline design. Is that why NV's SPs are so much better than ATI's, and why they perform so well compared to deep piped traditional CPUs? What I gathered was that NV's pipeline isn't nearly as rigid or static as traditional pipelines, meaning they're more efficient and less dependent on other data in the pipe.

3) I could've lived without the DX10.1 discussion and more hints at some DX10.1 AC/TWIMTBP conspiracy. You hinted at the main reason NV wouldn't include DX10.1 on this generation (ROI) then discount it in the same breath and make the leap to conspiracy theory. There's no doubt NV is throwing around market share/marketing muscle to make 10.1 irrelevant but does that come as any surprise if their best interest is maximizing ROI and their current gen parts already outperform the competition without DX10.1?

4) CPU bottlenecking seems to be a major issue in this high-end of GPUs with the X2/SLI solutions and now GT200 single-GPUs. I noticed this in a few of the other reviews where FPS results were flattening out at even 16x12 and 19x12 resolutions with 4GHz C2D/Qs. You'll even see it in a few of your benches at those higher (16/19x12) resolutions in QW:ET and even COD4 and those were with 4x AA. I'm sure the results would be very close to flat without AA.

That's all I can think of for now, but again another great job. I'll be reading/referencing it for the next few days I'm sure. Thanks again!

OccamsAftershave - Monday, June 16, 2008 - link

"If NVIDIA put the time in (or enlisted help) to make CUDA an ANSI or ISO standard extention to a programming language, we would could really start to get excited."

Open standards are coming. For example, see Apple's OpenCL, coming in their next OS release.

http://news.yahoo.com/s/nf/20080612/bs_nf/60250

ltcommanderdata - Monday, June 16, 2008 - link

At least AMD seems to be moving toward standardizing their GPGPU support.

http://www.amd.com/us-en/Corporate/VirtualPressRoo...

AMD has officially joined Apple's OpenCL initiative under the Khronos Compute Working Group.

Truthfully, with nVidia's statements about working with Apple on CUDA in the days leading up to WWDC, nVidia is probably on board with OpenCL too. It's just that their marketing people probably want to stick with their own CUDA branding for now, especially for the GT200 launch.

Oh, and with AMD's launch of the FireStream 9250, I don't suppose we could see benchmarks of it against the new Tesla?

paydirt - Monday, June 16, 2008 - link

Tons of people are reading this article and thinking "well, performance per cost, it's underwhelming (as a gaming graphics card)." What people are missing is that GPUs are quickly becoming the new supercomputers.

ScythedBlade - Monday, June 16, 2008 - link

Lol ... anyone else catch that?

Griswold - Monday, June 16, 2008 - link

Too expensive, too power hungry and according to other reviews, too loud for too little gain.

The GT200 could become Nvidia's R600.

Bring it on AMD, this is your big chance!

mczak - Monday, June 16, 2008 - link

G92 does not have 6 rop partitions - only 4 (this is also wrong in the diagram). Only G80 had 6.

And please correct that history rewriting - that the FX failed against radeon 9700 had NOTHING to do with the "powerful compute core" vs. the high bandwidth (ok the high bandwidth did help), in fact quite the opposite - it was slow because the "powerful compute core" was wimpy compared to the r300 core. It definitely had a lot more flexibility but the compute throughput simply was more or less nonexistent, unless you used it with pre-ps20 shaders (where it could use its fx12 texture combiners).