NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST. Posted in GPUs

GT200 vs. G80: A Clock for Clock Comparison

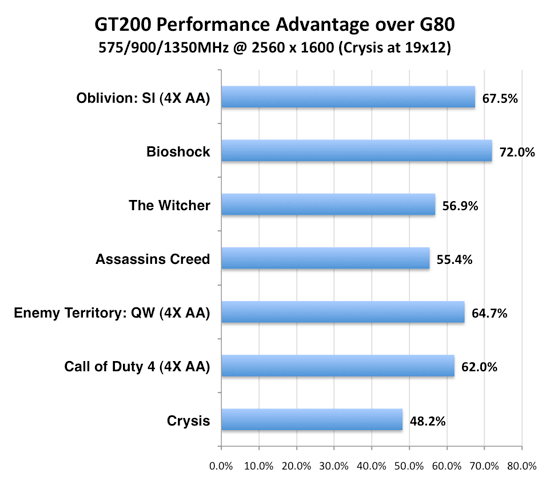

The GT200 architecture isn't tremendously different from G80 or G92; it just has a lot more processing power. The comparison below highlights the clock for clock difference between GT200 and its true predecessor, NVIDIA's G80. We clocked both GPUs at 575MHz core, 900MHz memory and 1350MHz shader, so this is a look at the hardware's architectural enhancements combined with the pipeline and bus width increases. The graph below shows the performance advantage of GT200 over G80 at the same clock speeds:

Clock for clock, just due to width increases, GT200 should be at the very worst 25% faster than G80. This would be the case where we are texture bound. It is unlikely that an entire game will be blend rate bound to the point where we see greater than 2x speedups; test cases could show this, but real world apps just aren't blend bound. More realistically, the 87.5% increase in SPs will be the upper limit on performance improvements at the same clock rate. Our tests behave within these predicted ranges.
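The two bounds quoted above follow directly from the ratio of unit counts. The sketch below reproduces that arithmetic; the unit counts (128 vs. 240 SPs, 64 vs. 80 texture filter units) are assumptions taken from the public spec sheets rather than from this page, and the helper names are purely illustrative.

```python
# Unit counts are ASSUMPTIONS from public spec sheets, not from this page.
G80 = {"shader_processors": 128, "texture_filter_units": 64}
GT200 = {"shader_processors": 240, "texture_filter_units": 80}


def width_gain(unit: str) -> float:
    """Fractional clock-for-clock gain from GT200 having more units of one kind."""
    return GT200[unit] / G80[unit] - 1.0


# Texture-bound workloads can only gain as much as the texture hardware grew.
texture_floor = width_gain("texture_filter_units")
# Compute-bound workloads can gain at most as much as the SP count grew.
compute_ceiling = width_gain("shader_processors")


def within_predicted_range(observed_gain: float) -> bool:
    """True if an observed same-clock speedup sits between the two bounds."""
    return texture_floor <= observed_gain <= compute_ceiling


print(f"texture-bound floor:   {texture_floor:.1%}")
print(f"compute-bound ceiling: {compute_ceiling:.1%}")
```

A measured gain close to the ceiling suggests a mostly compute-bound game, while one near the floor points to texturing or some other bottleneck.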

Based on this, it appears that Bioshock is quite compute bound and doesn't run into many other bottlenecks when the burden is eased. Crysis, on the other hand, seems to be limited by more than just compute, as it didn't benefit quite as much.

The way compute has been rebalanced does affect the conditions under which performance will benefit from the additional units. More performance will be available in the case where a game didn't just need more compute, but needed more compute per texture. The converse is true when a game could benefit from more compute, but only if there were more texture hardware to feed it.

108 Comments

gigahertz20 - Monday, June 16, 2008 - link

I think these ridiculous prices and lackluster performance is just a way for them to sell more SLI motherboards, who would buy a $650 GTX 280 when you can buy two 8800GT's with a SLI mobo and get better performance? Especially now that the 8800GT's are approaching around $150.

crimson117 - Monday, June 16, 2008 - link

It's only worth riding the bleeding edge when you can afford to stay there with every release. Otherwise, 12 months down the line, you have no budget left for an upgrade, while everyone else is buying new $200 cards that beat your old $600 card. So yeah you can buy an 8800GT or two right now, and you and me should probably do just that! But Richie Rich will be buying 2x GTX 280's, and by the time we could afford even one of those, he'll already have ordered a pair of whatever $600 cards are coming out next.

7Enigma - Tuesday, June 17, 2008 - link

Nope, the majority of these cards go to Alienware/Falcon/etc. top of the line, overpriced pre-built systems. These are for the people that blow $5k on a system every couple years, don't upgrade, might not even seriously game, they just want the best TODAY. They are the ones that blindly check the bottom box in every configuration for the "fastest" computer money can buy.

gigahertz20 - Monday, June 16, 2008 - link

Very few people are richie rich and stay at the bleeding edge. People that are very wealthy tend not to be computer geeks and purchase their computers from Dell and what not. I'd say at least 96% of gamers out there are value oriented, these $650 cards will not sell much at all. If anything, you'll see people claim to have bought one or two of these in forums and other places, but their just lying.

perzy - Monday, June 16, 2008 - link

Well I for one is waiting for Larabee. Maybee (probably) it isen' all that its cranked up to be, but I want to see. And what about some real powersaving Nvidia?

can - Monday, June 16, 2008 - link

I wonder if you can just flash the BIOS of the 260 to get it to operate as if it were a 280...

7Enigma - Tuesday, June 17, 2008 - link

You haven't been able to do this for a long time....they learned their lessons the hard way. :)

Nighteye2 - Monday, June 16, 2008 - link

Is it just me, or does this focus on compute power mean Nvidia is starting to get serious about using the GPU for physics, as well as graphics? It's also in-line with the Ageia acquisition.

will889 - Monday, June 16, 2008 - link

At the point where NV has actually managed to position SLI mobos and GPU's where you actually need that much power to get decent FPS (above 30 average) from games gaming on the PC will be entirely dead to all those but the most esoteric. It would be different if there were any games worth playing or as many games as the console brethren have. I thought GPU's/cases/power supplies were supposed to become more efficient? EG smaller but faster sort of how the TV industry made TV's bigger yet smaller in footprint with way more features - not towering cases with 1200KW PSU's and 2X GTX 280 GPU's? All this in the face o drastically raised gas prices? Wanna impress me? How about a single GPU with the PCB size of a 7600GT/GS that's 15-25% faster than a 9800GTX that can fit into a SFF case? needing a small power supply AND able to run passively @ moderate temps. THAT would be impressive. No, Seargent Tom and his TONKA_TRUCK crew just have to show how beefy his toys can be and yank your wallet chains for said. Hell, everyone needs a Boeing 747 in their case right? cause' that's progress for those 1-2 gaming titles per years that give you 3-4 hours of enjoyable PC gaming.....

/off box

ChronoReverse - Tuesday, June 17, 2008 - link

The 4850 might actually hit that target...