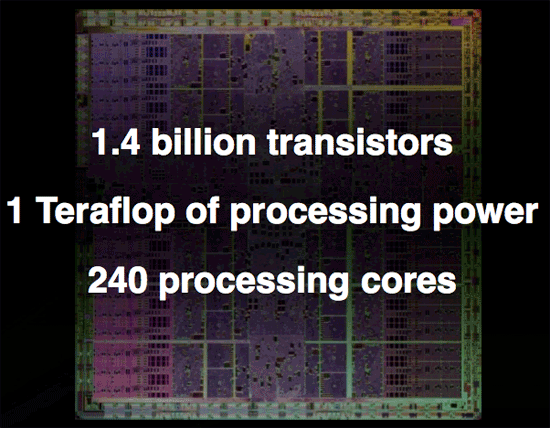

NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST - Posted in GPUs

Overclocked and 4GB of GDDR3 per Card: Tesla 10P

Now let's say that you want to get some real work done with NVIDIA's GT200 GPU but that 1.4 billion transistor chip just isn't enough. NVIDIA does have an answer for you, in the form of an overclocked GT200 with the 240 SPs running at 1.5GHz (up from 1.3GHz in the GTX 280) and with a full 4GB of GDDR3 memory on-board.

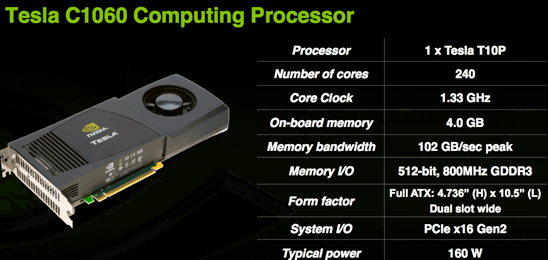

Today NVIDIA is also announcing their next generation Tesla product based on GT200 (called a T10P when used on Tesla for some reason). The workstation graphics guys will have to wait a while for a GT200 Quadro unfortunately. This new Tesla is similar to the older model in that it has much more RAM and no IO ports. The server version is also clocked higher than the desktop part because fan noise isn't an issue and data centers have lower ambient temperatures than some corner of an office under a desk.

The Tesla C1060 has a full 4GB of RAM on board. That is a very large amount for a single card, and it should comfortably accommodate the large-scale scientific computing apps it is targeted at. This card is designed for use in workstations and is the little brother to the new monster server that is also being announced today.

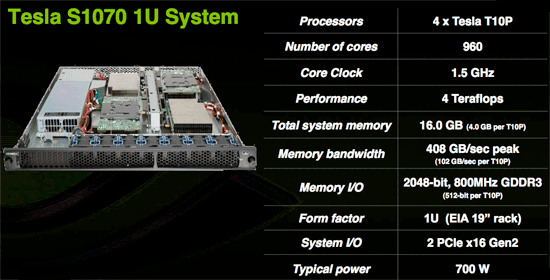

The Tesla S1070 is a 1U server containing essentially 4 C1060 cards for a total of 16GB of RAM and 960 SPs. This unit, like the older version, connects to a host server via a PCIe cable and is designed to run code written for CUDA at incredible speeds. With 120 double precision IEEE 754r floating point units in combination with the 960 single precision IEEE 754 units, this server is a viable option for many more projects than the previous Tesla hardware, which was only capable of single precision floating point.
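The S1070 totals follow directly from the per-card figures quoted above. As a rough sketch (the variable names are ours, and the per-GPU double precision count is simply derived by dividing the quoted server total by four, not a separately published figure):

```python
# Aggregate resources of a Tesla S1070, using only figures quoted in the article.
GPUS_PER_S1070 = 4      # "essentially 4 C1060 cards"
SPS_PER_GPU = 240       # single precision SPs per GT200
RAM_GB_PER_GPU = 4      # GDDR3 per C1060
DP_UNITS_TOTAL = 120    # double precision units across the whole server

total_sps = GPUS_PER_S1070 * SPS_PER_GPU
total_ram_gb = GPUS_PER_S1070 * RAM_GB_PER_GPU

# Derived, not quoted: DP units per GPU and the SP-to-DP unit ratio.
dp_units_per_gpu = DP_UNITS_TOTAL // GPUS_PER_S1070
sps_per_dp_unit = SPS_PER_GPU // dp_units_per_gpu

print(f"SPs: {total_sps}, RAM: {total_ram_gb}GB")
print(f"DP units per GPU: {dp_units_per_gpu} (1 per {sps_per_dp_unit} SPs)")
```

The ratio is worth noting: if the quoted totals hold, each GPU has one double precision unit for every eight single precision SPs, which is consistent with single precision code running so much faster on this hardware.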

Though we don't have an application to benchmark the double precision floating point hardware on GT200 yet, NVIDIA states that a GT200 can roughly match an 8-core Xeon system in DP performance. With four GPUs, this would put the S1070 on par with a 32-way Xeon setup at less than 700W. Needless to say, single precision code runs much, much faster and can outpace hundreds of traditional CPUs in parallel.
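The 32-way comparison is just NVIDIA's per-GPU claim multiplied out. A back-of-the-envelope sketch, assuming the claim scales linearly across the four GPUs and treating 700W as an upper bound (both are our simplifications, not measured results):

```python
# Scale NVIDIA's stated DP claim from one GT200 to a full S1070.
XEON_CORES_PER_GT200 = 8       # NVIDIA's claim: one GT200 ~ an 8-core Xeon system in DP
GPUS_PER_S1070 = 4             # four GT200-based cards per 1U server
S1070_POWER_CEILING_W = 700    # "less than 700W", used here as an upper bound

# Assumes perfect linear scaling across GPUs, which real workloads may not reach.
xeon_core_equivalent = XEON_CORES_PER_GT200 * GPUS_PER_S1070
max_watts_per_core_equivalent = S1070_POWER_CEILING_W / xeon_core_equivalent

print(f"Xeon core equivalent: {xeon_core_equivalent}")
print(f"Power per core equivalent: under {max_watts_per_core_equivalent:.1f}W")
```

This only restates a vendor claim in different units; until the double precision hardware can be benchmarked, the per-core power figure is a ceiling on paper rather than a measurement.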

While these servers are expensive (though we don't have pricing), they are cheap compared to the alternatives currently out there. The fact that CUDA code can be implemented and tested on any of the 70 million NVIDIA G80+ GPUs currently in people's hands means that developers already have a platform to test and debug code on before committing to the Tesla solution. On top of that, schools are beginning to adopt CUDA as a teaching tool for parallel computing. As CUDA gains acceptance and the benefits of GPU computing are realized, more and more major markets will take interest.

The graphics card is no longer a toy. The combination of CUDA's academic acceptance as a teaching tool and the availability of 64-bit floating point in GT200 makes GPUs a mission-critical computing tool that will act as a truly disruptive technology. Not only will many major markets that depend on high performance floating-point processing realize this, but every consumer with an NVIDIA graphics card will be able to take advantage of hundreds of gigaflops of performance from CUDA based consumer applications.

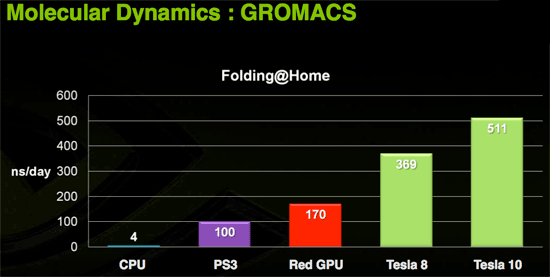

Today we have Folding@home and soon we'll have Elemental's transcoder. Imagine the audio and video processing capabilities of a PC if the GPU were actively used in software like Pro Tools and Premiere. Open source programs could easily best the processing capabilities of many solutions with dedicated hardware for these types of applications.

Of course, the major limiter to the adoption of this technology is that it is vendor specific. If NVIDIA put the time in (or enlisted help) to make CUDA an ANSI or ISO standard extension to a programming language, we could really start to get excited. Beyond that, the holy grail would be a unification of virtualized instruction sets creating a standard low level "assembly" interface for GPU computing, allowing CUDA to compile to one target and run on any graphics card. Sort of an x86 for massively parallel work.

Right now CUDA compiles to PTX, NVIDIA's virtual instruction set, and there is no reason someone couldn't write a CUDA compiler to target AMD's equivalent, CAL (or even develop a PTX to CAL wrapper that allowed AMD GPUs to run compiled CUDA code). Unfortunately, NVIDIA doesn't want to invest money and resources in extending functionality to AMD, and AMD doesn't want to invest money and resources into bolstering an NVIDIA owned technology (that could theoretically change radically to cripple AMD's hardware support in future versions). While standards and cooperation are a great idea, the competition in this market is such that neither NVIDIA nor AMD is looking to take a chance on benefiting the consumer if there is any risk of strengthening the competition (even at the cost of weakening the industry).

108 Comments

gigahertz20 - Monday, June 16, 2008 - link

I think these ridiculous prices and lackluster performance is just a way for them to sell more SLI motherboards, who would buy a $650 GTX 280 when you can buy two 8800GT's with a SLI mobo and get better performance? Especially now that the 8800GT's are approaching around $150.

crimson117 - Monday, June 16, 2008 - link

It's only worth riding the bleeding edge when you can afford to stay there with every release. Otherwise, 12 months down the line, you have no budget left for an upgrade, while everyone else is buying new $200 cards that beat your old $600 card.

So yeah you can buy an 8800GT or two right now, and you and me should probably do just that! But Richie Rich will be buying 2x GTX 280's, and by the time we could afford even one of those, he'll already have ordered a pair of whatever $600 cards are coming out next.

7Enigma - Tuesday, June 17, 2008 - link

Nope, the majority of these cards go to Alienware/Falcon/etc. top of the line, overpriced pre-built systems. These are for the people that blow $5k on a system every couple years, don't upgrade, might not even seriously game, they just want the best TODAY.

They are the ones that blindly check the bottom box in every configuration for the "fastest" computer money can buy.

gigahertz20 - Monday, June 16, 2008 - link

Very few people are richie rich and stay at the bleeding edge. People that are very wealthy tend not to be computer geeks and purchase their computers from Dell and what not. I'd say at least 96% of gamers out there are value oriented, these $650 cards will not sell much at all. If anything, you'll see people claim to have bought one or two of these in forums and other places, but their just lying.

perzy - Monday, June 16, 2008 - link

Well I for one is waiting for Larabee. Maybee (probably) it isen' all that its cranked up to be, but I want to see.

And what about some real powersaving Nvidia?

can - Monday, June 16, 2008 - link

I wonder if you can just flash the BIOS of the 260 to get it to operate as if it were a 280...

7Enigma - Tuesday, June 17, 2008 - link

You haven't been able to do this for a long time....they learned their lessons the hard way. :)

Nighteye2 - Monday, June 16, 2008 - link

Is it just me, or does this focus on compute power mean Nvidia is starting to get serious about using the GPU for physics, as well as graphics? It's also in-line with the Ageia acquisition.

will889 - Monday, June 16, 2008 - link

At the point where NV has actually managed to position SLI mobos and GPU's where you actually need that much power to get decent FPS (above 30 average) from games gaming on the PC will be entirely dead to all those but the most esoteric. It would be different if there were any games worth playing or as many games as the console brethren have. I thought GPU's/cases/power supplies were supposed to become more efficient? EG smaller but faster sort of how the TV industry made TV's bigger yet smaller in footprint with way more features - not towering cases with 1200KW PSU's and 2X GTX 280 GPU's? All this in the face o drastically raised gas prices?

Wanna impress me? How about a single GPU with the PCB size of a 7600GT/GS that's 15-25% faster than a 9800GTX that can fit into a SFF case? needing a small power supply AND able to run passively @ moderate temps. THAT would be impressive. No, Seargent Tom and his TONKA_TRUCK crew just have to show how beefy his toys can be and yank your wallet chains for said. Hell, everyone needs a Boeing 747 in their case right? cause' that's progress for those 1-2 gaming titles per years that give you 3-4 hours of enjoyable PC gaming.....

/off box

ChronoReverse - Tuesday, June 17, 2008 - link

The 4850 might actually hit that target...