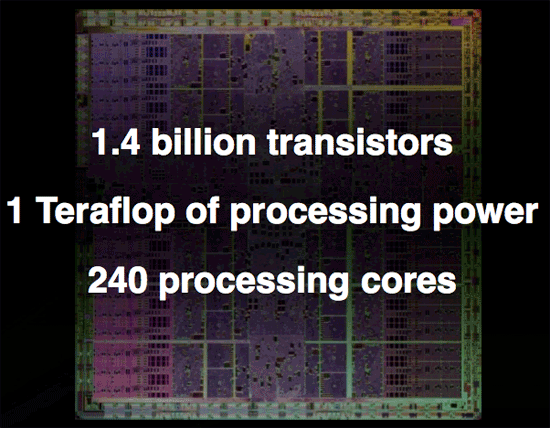

NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST - Posted in GPUs

Overclocked and 4GB of GDDR3 per Card: Tesla 10P

Now let's say that you want to get some real work done with NVIDIA's GT200 GPU but that 1.4 billion transistor chip just isn't enough. NVIDIA does have an answer for you, in the form of an overclocked GT200 with the 240 SPs running at 1.5GHz (up from 1.3GHz in the GTX 280) and with a full 4GB of GDDR3 memory on-board.

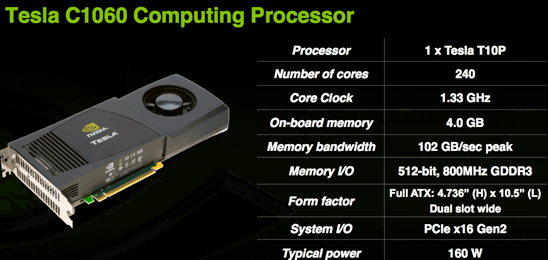

Today NVIDIA is also announcing their next generation Tesla product based on GT200 (called a T10P when used on Tesla for some reason). The workstation graphics guys will have to wait a while for a GT200 Quadro unfortunately. This new Tesla is similar to the older model in that it has much more RAM and no IO ports. The server version is also clocked higher than the desktop part because fan noise isn't an issue and data centers have lower ambient temperatures than some corner of an office under a desk.

The Tesla C1060 has an entire 4GB of RAM on board. This is obviously very large and should easily accommodate the large scale scientific computing apps it is targeted at. This card is designed for use in workstations and is the little brother to the new monster server that is also being announced today.

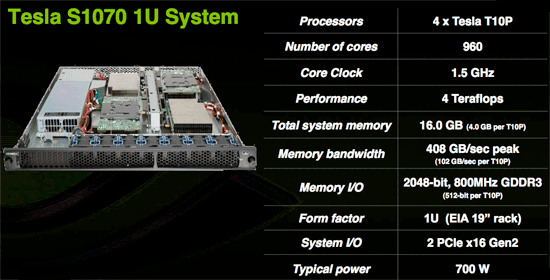

The Tesla S1070 is a 1U server containing essentially four C1060 cards, for a total of 16GB of RAM and 960 SPs. This unit, like the older version, connects to a host server via a PCIe cable and is designed to run code written for CUDA at incredible speeds. With 120 double precision IEEE 754r floating point units in combination with the 960 single precision IEEE 754 units, this server is a viable option for many more projects than the previous Tesla hardware, which was only capable of single precision floating point.
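To see why double precision opens the door to more projects, consider a long running sum. The sketch below is not GT200 or CUDA code. It is a CPU-side Python illustration that emulates single precision by rounding every intermediate result through `struct`. The helper names (`to_f32`, `accumulate`) and the choice of adding 0.1 one million times are assumptions made purely for illustration.

```python
import struct


def to_f32(x: float) -> float:
    """Round a Python float (IEEE 754 double) to the nearest single precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def accumulate(step: float, count: int, single: bool) -> float:
    """Add `step` to a running total `count` times.

    When `single` is True, the step and every partial sum are rounded to
    single precision, mimicking hardware that only has 32-bit float units.
    """
    increment = to_f32(step) if single else step
    total = 0.0
    for _ in range(count):
        total += increment
        if single:
            total = to_f32(total)
    return total


if __name__ == "__main__":
    exact = 100_000.0  # 0.1 * 1,000,000 in exact arithmetic
    for label, single in (("single", True), ("double", False)):
        result = accumulate(0.1, 1_000_000, single)
        print(f"{label} precision: {result!r} (error {abs(result - exact):.6g})")
```

The single precision total drifts far from 100,000 because each addition is rounded to a 24-bit significand, while the double precision total stays within a tiny fraction of the exact answer. Long reductions of this kind are common in scientific code, which is one reason 64-bit units matter for the workloads Tesla targets.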

Though we don't have an application to benchmark the double precision floating point hardware on GT200 yet, NVIDIA states that a GT200 can roughly match an 8 core Xeon system in DP performance. With four GPUs, this would put the S1070 on par with a 32 way Xeon setup at less than 700W. Needless to say, single precision code runs much, much faster and can outpace hundreds of traditional CPUs in parallel.
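The S1070 totals follow directly from the per-GPU figures. The sketch below just spells out that arithmetic. The `GpuSpec` type and its field names are hypothetical, the 30 double precision units per GPU is derived by dividing the quoted 120 by four, and the 8 core Xeon equivalence is NVIDIA's claim rather than something measured here. The 700W figure is treated as an upper bound for the whole server.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSpec:
    """Illustrative container for the figures quoted in the article."""
    stream_processors: int      # single precision SPs
    memory_gb: int              # on-board GDDR3
    dp_units: int               # double precision units
    xeon_core_equivalent: int   # NVIDIA's claimed DP equivalence, in Xeon cores


def scale(spec: GpuSpec, gpu_count: int) -> GpuSpec:
    """Aggregate the figures for a chassis holding `gpu_count` identical GPUs."""
    return GpuSpec(
        stream_processors=spec.stream_processors * gpu_count,
        memory_gb=spec.memory_gb * gpu_count,
        dp_units=spec.dp_units * gpu_count,
        xeon_core_equivalent=spec.xeon_core_equivalent * gpu_count,
    )


# One T10P as used on the Tesla C1060 (dp_units derived as 120 / 4).
T10P = GpuSpec(stream_processors=240, memory_gb=4, dp_units=30, xeon_core_equivalent=8)

# The S1070 holds essentially four of them.
S1070 = scale(T10P, gpu_count=4)

# Power is quoted as "less than 700W", so this is only an upper bound.
SERVER_POWER_LIMIT_W = 700
watts_per_core_upper_bound = SERVER_POWER_LIMIT_W / S1070.xeon_core_equivalent

if __name__ == "__main__":
    print(S1070)
    print(f"Under {watts_per_core_upper_bound} W per Xeon-core equivalent")
```

Under these assumptions the server works out to 960 SPs, 16GB, 120 double precision units and a 32 core Xeon equivalent, at under roughly 22W per core equivalent.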

While these servers are expensive (though we don't have pricing), they are cheap compared to the alternatives currently out there. The fact that CUDA code can be implemented and tested on any of the 70 million NVIDIA G80+ GPUs currently in people's hands means that developers already have a platform to test and debug code on before committing to the Tesla solution. On top of that, schools are beginning to adopt CUDA as a teaching tool for parallel computing. As CUDA gains acceptance and the benefits of GPU computing are realized, more and more major markets will take interest.

The graphics card is no longer a toy. The combination of CUDA's academic acceptance as a teaching tool and the availability of 64-bit floating point in GT200 makes GPUs a mission-critical computing tool that will act as a truly disruptive technology. Not only will many major markets that depend on high performance floating-point processing realize this, but every consumer with an NVIDIA graphics card will be able to take advantage of hundreds of gigaflops of performance from CUDA based consumer applications.

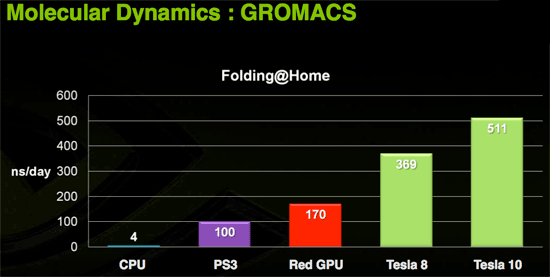

Today we have Folding@home and soon we'll have Elemental's transcoder. Imagine the audio and video processing capabilities of a PC if the GPU were actively used in software like Pro Tools and Premiere. Open source programs could easily best the processing capabilities of many solutions with dedicated hardware for these types of applications.

Of course, the major limiter to the adoption of this technology is that it is vendor specific. If NVIDIA put the time in (or enlisted help) to make CUDA an ANSI or ISO standard extension to a programming language, we could really start to get excited. Beyond that, the holy grail would be a unification of virtualized instruction sets, creating a standard low level "assembly" interface for GPU computing that would allow CUDA to compile to one target and run on any graphics card. Sort of an x86 for massively parallel work.

Right now CUDA compiles to PTX, NVIDIA's virtual instruction set, and there is no reason someone couldn't write a CUDA compiler to target AMD's equivalent, CAL (or even develop a PTX to CAL wrapper that allowed AMD GPUs to run compiled CUDA code). Unfortunately, NVIDIA doesn't want to invest money and resources in extending functionality to AMD, and AMD doesn't want to invest money and resources in bolstering an NVIDIA owned technology (one that could theoretically change radically to cripple AMD's hardware support in future versions). While standards and cooperation are a great idea, the competition in this market is such that neither NVIDIA nor AMD is looking to take a chance on benefiting the consumer if there is any risk of strengthening the competition (even at the cost of weakening the industry).

108 Comments

Chaser - Monday, June 16, 2008 - link

Maybe I'm behind the loop here. The only competition this article refers to is some up coming new INTEL product in contrast to an announced hard release of the next AMD GPU series a week from now?

BPB - Monday, June 16, 2008 - link

Well nVidia is starting with the hi end, hi proced items. Now we wait to see what ATI has and decide. I'm very much looking forward to the ATI release this week.

FITCamaro - Monday, June 16, 2008 - link

Yeah but for the performance of these cards, the price isn't quite right. I mean you can get two 8800GTs for under $400 and they typically outperform both the 260 and the 280. Yes if you want a single card, these aren't too bad a deal. But even the 9800GX2 outperforms the 280 normally.

So really I have to question the pricing on them. High end for a single GPU card yes. Better price/performance than last generations card, no. I just bought two G92 8800GTSs and now I don't feel dumb about it because my two cards that I paid $170 for each will still outperform the latest and greatest which cost more.

Rev1 - Monday, June 16, 2008 - link

Maybe lack of any real competition from ATI?

hadifa - Monday, June 16, 2008 - link

No, The reason is high cost to produce. over a Billion transistors, low yields, 512 bit bus ...

Unfortunately the high cost and the advance tech doesn't translate to equally impressive performance at this stage. For example, if the card had much lower power usage under load, still it would have been considered a good move forward for having comparable performance to a dual GPU solution but with much cooler running and less demanding hardware.

As the review mentions, this card begs for a die shrink. It will make it use less power, be cheaper, run cooler and even have a higher clock.

Warren21 - Monday, June 16, 2008 - link

That competition won't come for another two weeks, but when it does -- rumour has it NV plan to lower their prices. Most preliminary info has HD 4870 at 299-329 and pretty much GTX 260 performance, if not, then biting at it's heels.

smn198 - Tuesday, June 17, 2008 - link

You haven't seen anything yet. check out this picture of the GTX2 290!! http://tinypic.com/view.php?pic=350t4rt&s=3

Mr Roboto - Wednesday, June 18, 2008 - link

Soon it will be that way if Nvidia has their way.