AMD 780G: Preview of the Best Current IGP Solution

by Gary Key on March 10, 2008 12:00 PM EST - Posted in CPUs

We normally do not get giddy at the thought of reviewing another low-budget integrated graphics platform. All right, some of us do, as we are eternal optimists who believe that a manufacturer will eventually get it right. Guess what: AMD got it right - not exactly right, but for the first time we actually have an IGP solution that comes very close to satisfying everyone’s requirements in a low-cost platform. Why are we suddenly excited about an integrated graphics platform again?

The legacy of integrated graphics platforms has historically been one of minimum functionality. With Intel as the number one graphics provider in the world, this can pose a problem for application developers looking to take advantage of the widest user base possible. Designing for the lowest common denominator can be a frustrating task when the minimum feature set and performance are so incredibly low compared to current discrete solutions.

The latest sales numbers indicate that about nine out of every ten systems sold have integrated graphics. We cannot overstate the importance of a reasonable performance IGP solution in order to have a pleasurable all-around experience on the PC. IGP performance might not be as important on a business platform relegated to email and office applications. However, it is important for a majority of home users who expect a decent amount of performance in a machine that typically will be a jack-of-all-trades, handling everything from email to office applications, heavy Internet usage, audio/video duties, and casual gaming.

Our opinions about the basic performance level of current IGP solutions have not always been kind. We felt that the introduction of Vista last year would ultimately benefit consumers and developers alike, as it forces a certain base feature set and performance requirements for graphics hardware. However, even with full DX9 functionality required, the performance and compatibility of recent games under Vista are dismal at best. This, along with borderline multimedia performance, has left us with a sour taste in our mouths when using current IGP solutions from AMD, NVIDIA, and Intel for anything but email, Internet, basic multimedia, and Word; a few upgrades are inevitably required.

We are glad to say that this continual pattern of "mediocrity begets mediocrity" is finally ending, and we have AMD to thank for it. Yes, the same AMD that since the ATI merger has seemingly tripped over itself with questionable, failed, or very late product launches - depending upon your perspective. We endured the outrageous power requirements of the HD 2900 XT series and the constant K10 delays that turned into the underwhelming Phenom release; meanwhile, we watched Intel firing on all cylinders and NVIDIA upstaging AMD on the GPU front.

Thankfully, over the past few months we have seen AMD clawing its way back to respectability with the release of the HD 3xxx series of video cards, the under-appreciated 790/770 chipset release, and what remains a very competitive processor lineup in the budget sector. True, they have not been able to keep up with Intel or NVIDIA in the midrange to high-end sectors, but things are changing. While we wish AMD had an answer to Intel and NVIDIA in these more lucrative markets - for the sake of competition and the benefits that brings to the consumer - that is not where the majority of desktop sales occur.

Most sales occur in the $300~$700 desktop market dominated by IGP based solutions and typically targeted at the consumer as an all-in-one solution for the family. Such solutions up until now have caused a great deal of frustration and grief for those who purchased systems thinking they would be powerful enough to truly satisfy everyone in the household, especially those who partake in games or audio/video manipulation.

With that in mind, we think AMD has a potential hit on its hands with its latest and greatest product. No, the product is still not perfect, but it finally brings a solution to the table that can at least satisfy the majority of needs in a jack-of-all-trades machine. What makes this possible and why are we already sounding like a group of preteens getting ready for a Hannah Montana concert?

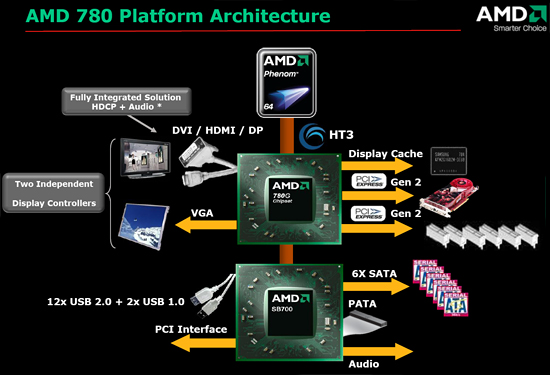

Enter Stage Left; it’s not Hannah, but AMD’s latest addition to its ever-growing chipset portfolio, the 780G/780V chipset. The chipset nomenclature might make one think the 780G/780V is just an update to the successful 690G/690V product family. While the 780G product replaces the 690G, it is much more than just an update. In fact, the 780G is an all-new chipset that features a radically improved Northbridge and a slightly improved Southbridge.

So let’s take a look at the chipset specifications and delve into the multimedia output qualities of the 780G chipset.

49 Comments

- Monday, March 10, 2008 - link

Where is the discussion of this chipset as an HTPC? Just a tidbit here and there? I thought that was a major selling point here. With a single core sempron 1.8ghz being enough for an HTPC which NEVER hits 100% cpu usage (see tomshardware.com) you don't need a dual core and can probably hit 60w in your HTPC! Maybe less. Why was this not a major topic in this article? With you claiming the E8300/E8200 in your last article being a HTPC dreamers chip shouldn't you be talking about how low you could go with a sempron 1.8ghz? Isn't that the best HTPC combo out there now? No heat, super low cost running it all year long etc (NOISELESS with a proper heatsink).

Are we still supposed to believe your article about the E8500? While I freely admit chomping at the bit to buy an E8500 to Overclock the crap out of it (I'm pretty happy now with my e4300@3.0 and can't wait for 3.6ghz with e8500, though it will go further probably who needs more than 3.6 today for gaming), it's a piece of junk for an HTPC. Overly expensive ($220? for e8300 that was recommended) compared to a lowly Sempron 1.8 which I can pick up for $34 at newegg. With that kind of savings I can throw in a 8800GT in my main PC as a bonus for avoiding Intel. What's the point in having an HTPC where the cpu utilization is only 25%? That's complete OVERKILL. I want that as close to 100% as possible to save me money on the chip and then on savings all year long with low watts. With $200 savings on a cpu I can throw in an audigy if needed for special audio applications (since you whined about 780G's audio). A 7.1channel Audigy with HD can be had for $33 at newegg. For an article totally about "MULTIMEDIA OUTPUT QUALITIES" where's the major HTPC slant?

sprockkets - Thursday, March 13, 2008 - link

Dude, buy a 2.2ghz Athlon X2 chip for like $55. You save what, around $20 or less with a Sempron nowadays?

QuickComment - Tuesday, March 11, 2008 - link

It's not 'whining' about the audio. Sticking in a sound card from Creative still won't give 7.1 sound over HDMI. That's important for those that have a HDMI-amp in a home theatre setup.

TheJian - Tuesday, March 11, 2008 - link

That amp doesn't also support digital audio/Optical? Are we just talking trying to do the job within 1 cable here instead of 2? Isn't that kind of being nit picky? To give up video quality to keep in on 1 cable to me is unacceptable (hence I'd never "lean" towards G35 as suggested in the article). I can't even watch if the video sucks.

QuickComment2 - Tuesday, March 11, 2008 - link

No, its not about 1 cable instead of 2. SPDIF is fine for Dolby digital and the like, ie compressed audio, but not for 7.1 uncompressed audio. For that, you need HDMI. So, this is a real deal-breaker for those serious about audio.

JarredWalton - Monday, March 10, 2008 - link

I don't know about others, but I find video encoding is something I do on a regular basis with my HTPC. No sense storing a full quality 1080i HDTV broadcast using 16GB of storage for two hours when a high quality DivX or H.264 encode can reduce disk usage down to 4GB, not to mention ripping out all the commercials. Or you can take the 3.5GB per hour Windows Media Center encoding and turn that into 700MB per hour.

I've done exactly that type of video encoding on a 1.8GHz Sempron; it's PAINFUL! If you're willing to just spend a lot of money on HDD storage, sure it can be done. Long-term, I'm happier making a permanent "copy" of any shows I want to keep.

The reality is that I don't think many people are buying HTPCs when they can't afford more than a $40 CPU. HTPCs are something most people build as an extra PC to play around with. $50 (only $10 more) gets you twice the CPU performance, just in case you need it. If you can afford a reasonable HTPC case and power supply, I dare say spending $100-$200 on the CPU is a trivial concern.

Single-core older systems are still fine if you have one, but if you're building a new PC you should grab a dual-core CPU, regardless of how you plan to use the system. That's my two cents.

TheJian - Tuesday, March 11, 2008 - link

I guess you guys don't have a big TV. With a 65in Divx has been out of the question for me. It just turns to crap. I'd do anything regarding editing on my main PC with the HTPC merely being a cheap player for blu-ray etc. A network makes it easy to send them to the HTPC. Just set the affinity on one of your cores to vidcoding and I can still play a game on the other. Taking 3.5GB to 700MB looks like crap on a big tv. I've noticed it's watchable on my 46in, but awful on the 65. They look great on my PC, but I've never understood anyone watching anything on their PC. Perhaps a college kid with no room for a TV. Other than that...

JarredWalton - Tuesday, March 11, 2008 - link

SD resolutions at 46" (what I have) or 65" are always going to look lousy. Keeping it in the original format doesn't fix that; it merely makes it use more space.

My point is that a DivX, x64, or similar encoding of a Blu-ray, HDTV, or similar HD show loses very little in overall quality. I'm not saying take the recording and make it into a 640x360 SD resolution. I'm talking about converting a full bitrate 1080p source into a 1920x1080 DivX HD, x64, etc. file. Sure, there's some loss in quality, but it's still a world better than DVD quality.

It's like comparing a JPEG at 4-6 quality to the same image at 12 quality. If you do a diff, you will find lots of little changes on the lower quality image. If you want to print up a photo, the higher quality is desirable. If you're watching these images go by at 30FPS, though, you won't see much of a loss in overall quality. You'll just use about 1/3 the space and bandwidth.

Obviously, MPEG4 algorithms are *much* more complex than what I just described - which is closer to MPEG2. It's an analogy of how a high quality HD encode compares to original source material. Then again, in the case of HDTV, the original source material is MPEG2 encoded and will often have many artifacts already.

yehuda - Monday, March 10, 2008 - link

Great article. Thanks to Gary and everyone involved! The last paragraph is hilarious.

One thing that bothers me about this launch is the fact that board vendors do not support the dual independent displays feature to full extent.

If I understand the article correctly, the onboard GPU lets you run two displays off any combination of ports of your choice (VGA, DVI, HDMI or DisplayPort).

However, board vendors do not let you do that with two digital ports. They let you use VGA+DVI or VGA+HDMI, but not DVI+HDMI. At least, this is what I have gathered reading the Gigabyte GA-MA78GM-S2H and Asus M3A78-EMH-HDMI manuals. Please correct me if I'm wrong.

How come tier-1 vendors overlook such a worthy feature? How come AMD lets them get away with it?

Ajax9000 - Tuesday, March 11, 2008 - link

They are appearing. At CeBIT Intel showed off two mini-ITX boards with dual digital.

DQ45EK DVI+DVI

DG45FC DVI+HDMI

http://www.mini-itx.com/2008/03/06/intels-eaglelak...