Intel's 8-core Skulltrail Platform: Close to Perfecting the Niche

by Anand Lal Shimpi on February 4, 2008 5:00 AM EST - Posted in CPUs

The CPUs

Late last year Intel announced the Core 2 Extreme QX9770, a 3.2GHz quad-core desktop processor with a 1.6GHz FSB. We'll finally see availability of that beast this quarter, but you'll notice that the Skulltrail platform uses a slightly different CPU: the Core 2 Extreme QX9775. The 5 indicates that, unlike the QX9770, this chip fits in an LGA-771 socket, just like Intel's Xeon processors. The different pinout is necessary because only Xeon chipsets support multiple CPU sockets, and for a handful of reasons that means we're limited to LGA-771.

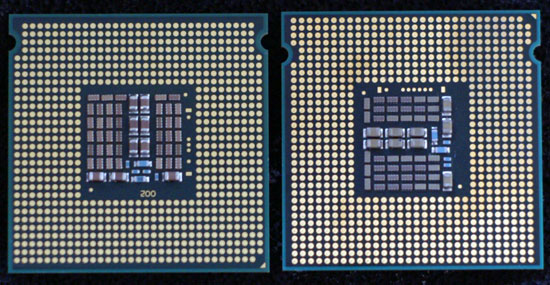

LGA-771 QX9775 (left), LGA-775 QX9770 (right) - Can you spot the four missing pins?

The specs on the QX9775 are otherwise identical to its LGA-775 counterpart. The 45nm Yorkfield (Penryn-family) chip runs all four of its cores at 3.2GHz and is fed by a 1.6GHz FSB. Each pair of cores on the CPU package shares a 6MB L2 cache, for 12MB total on each CPU.
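Since Skulltrail takes two of these processors, the per-chip figures multiply out to platform totals. A quick sketch of that arithmetic, assuming both sockets hold identical QX9775s (the function name is ours, purely for illustration):

```python
def platform_totals(sockets=2, cores_per_cpu=4, l2_per_core_pair_mb=6):
    """Return (total cores, L2 per CPU in MB, total L2 in MB)."""
    # Each QX9775 package groups its cores in pairs; each pair shares one L2.
    pairs_per_cpu = cores_per_cpu // 2
    l2_per_cpu = pairs_per_cpu * l2_per_core_pair_mb
    return sockets * cores_per_cpu, l2_per_cpu, sockets * l2_per_cpu


print(platform_totals())  # (8, 12, 24)
```

Note that the 24MB is not one unified pool: each 6MB slice is shared only by its own pair of cores.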

Despite using a Xeon socket, the Core 2 Extreme QX9775 isn't a Xeon. It turns out there are some very subtle differences between Core 2 and Xeon processors, even when they're based on the same core. Intel tunes the prefetchers on Xeon and Core 2 CPUs differently, so unlike the Xeon 5365 used in its V8 platform, the QX9775 is identical in every way to desktop Core 2 processors; the only difference is the pinout.

Intel wouldn't give us any more information on how the prefetchers differ, but we suspect the algorithms are tuned to the typical applications Xeons find themselves running versus the workloads most Core 2s end up with.

Like all other Extreme Edition processors, the QX9775 ships completely unlocked, making overclocking unbelievably easy, as you'll soon see.
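On these chips the core clock is the bus clock times the multiplier. The 1.6GHz FSB is quad-pumped from a 400MHz base clock, so stock speed is 400MHz x 8 = 3.2GHz; an unlocked multiplier lets you raise that multiplier directly without touching the bus. A minimal sketch (the 10x multiplier below is a hypothetical example, not a tested overclock):

```python
def core_clock_mhz(fsb_mt_s, multiplier, pumping=4):
    """Core clock in MHz from the FSB's quoted transfer rate and the CPU multiplier."""
    # The FSB is quoted as an effective (quad-pumped) rate, so the
    # actual bus clock is a quarter of the quoted figure.
    bus_clock = fsb_mt_s / pumping
    return bus_clock * multiplier


stock = core_clock_mhz(1600, 8)          # 3200.0 MHz, the QX9775's rated speed
hypothetical = core_clock_mhz(1600, 10)  # 4000.0 MHz from the multiplier alone
```

Because only the multiplier changes, the FSB and memory side of the platform stay at stock settings in this kind of overclock.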

Cooling the beast: if you think those fins will cut you... they will.

The price is going to be the worst part of the QX9775. Intel isn't publicly announcing the price per processor, but expect it to start above $1,000. It looks like QX9770 preorders are going for over $1,500, so we'd expect the QX9775 to be priced similarly, possibly settling at $1,200 eventually.
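Because Skulltrail needs two of these chips, the processor bill doubles. A back-of-the-envelope sketch using the price points above (a $1,000 floor, around $1,500 for preorders, possibly $1,200 later); the motherboard, FB-DIMMs, and everything else are deliberately left out:

```python
def cpu_cost_for_platform(price_per_cpu, sockets=2):
    """Processor cost alone for a fully populated dual-socket board."""
    return price_per_cpu * sockets


for price in (1000, 1200, 1500):
    print(f"${price:,} per chip -> ${cpu_cost_for_platform(price):,} for two")
```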

30 Comments


chizow - Monday, February 4, 2008

Not sure how you could come to that conclusion unless you posted some caveats, like 1) you're getting it for free from Intel or 2) you're not paying for it yourself or have no concern about costs.

Besides the staggering price tag associated with it ($500 + 2 x QX9775 at $1,300-1,500 each + the FB-DIMM premium), there are some real concerns about how much benefit this setup would yield over the best-performing single-socket solutions. In games, there's no support for Tri-SLI or beyond with NVIDIA parts, although three or four cards may be an option with ATI. Three seems more realistic, as the last slot will be unusable with dual-slot cards.

Then there's the actual benefit gained on a practical basis. In games, it looks like it's not even worth bothering with, as you'd most likely see a bigger boost from buying another card for SLI or CrossFire. Everything else is highly input-intensive, so you spend most of your workday preparing data to shave a few seconds off compute time, just so you can go to lunch 5 minutes sooner or catch an earlier train home.

I guess in the end there's a place for products like this, to show off what's possible, but recommending it without a few hundred caveats makes little sense to me.

chinaman1472 - Monday, February 4, 2008

The systems are made for an entirely different market, not the average consumer or the hardcore gamer. Shaving off a few minutes really adds up. You think people only compile or render one time per project? Big projects take time to finish, and if you can shave off 5 minutes every single time, across several computers, the thousands of dollars invested pay for themselves. Time is money.
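The "time is money" argument is easy to quantify. A sketch with made-up inputs; the minutes saved, jobs per day, machine count, working days, and hourly rate are all hypothetical, not measured:

```python
def annual_savings(minutes_saved_per_job, jobs_per_day, machines,
                   working_days=250, hourly_rate=50.0):
    """Return (hours saved per year, dollar value of those hours)."""
    minutes = minutes_saved_per_job * jobs_per_day * machines * working_days
    hours = minutes / 60
    return hours, hours * hourly_rate


# Hypothetical shop: 5 minutes saved per job, 6 jobs a day, 4 workstations.
hours, value = annual_savings(minutes_saved_per_job=5, jobs_per_day=6, machines=4)
```

With those assumed numbers, 5 minutes per job adds up to 500 hours a year, worth $25,000 at the assumed rate; plug in your own figures to see whether the premium pays back.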

chizow - Monday, February 4, 2008

I didn't focus on real-world applications because the benefits are even less apparent. Save 4s of calculation time in Excel? Spend an hour formatting records and spreadsheets to save 4s... yeah, that's money well spent. The same is true for many real-world applications. The sad reality is that for the same money you could buy two to three times as many single-CPU rigs and, in that case, gain more performance and productivity.

Cygni - Monday, February 4, 2008

As we both noted, 'real world' isn't just Excel. It's also AutoCAD and 3ds Max. These are arenas where we aren't talking about shaving 4 seconds; we're talking about shaving whole minutes, and in extreme cases even hours, off renders. This isn't an office computer, and this isn't a casual gamer's machine. This is a serious workstation or extreme enthusiast rig, and you are going to pay the price premium to get it. Like I said, this is a CAD and 3D artist's dream machine... not for your secretary to make phone trees on. ;)

In this arena? I can't think of any machines that come close to it in performance.

chizow - Monday, February 4, 2008

Again, in both AutoCAD and 3ds Max, you'd be better served putting that extra money into another GPU, or even another workstation, for a fraction of the cost. It's 2-3x the cost for uncertain gains over a single-CPU solution, versus a second or third workstation for the same price. For a real-world example, ILM said it took ~24 hours or something ridiculous to render each Transformers frame. Say it took 24 hours with a single quad-core and 2 x Quadro FX. Say Skulltrail cut that down to 20 or even 18 hours. Sure, that's a nice improvement, but you'd still be better off with two or even three single-CPU workstations for the same price. If it offered more GPU support and unbuffered DIMM support along with dual-CPU support, it might be worth it, but it doesn't, and it actually offers less scalability than cheaper enthusiast chipsets for NVIDIA parts.

martin4wn - Tuesday, February 5, 2008
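chizow's hypothetical boils down to throughput. A sketch using only his illustrative figures (24 hours per frame on a single quad-core box, 18-20 hours on Skulltrail, two to three workstations for the price of one Skulltrail), assuming frames are independent and each machine renders one frame at a time:

```python
def frames_per_day(hours_per_frame, machines=1):
    """Independent frames finished per day across a group of identical machines."""
    return machines * 24 / hours_per_frame


skulltrail = frames_per_day(20)               # 1.2 frames/day
two_quads = frames_per_day(24, machines=2)    # 2.0 frames/day
three_quads = frames_per_day(24, machines=3)  # 3.0 frames/day
```

For batch rendering, the extra boxes win on his numbers. The model says nothing about how long a single frame takes to come back, which is the point martin4wn raises next.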

You're missing the point. Some people need all the performance they can get on one machine. Sure, for batch rendering a movie you just do each frame on a separate core and buy roomfuls of blade servers to run them on. But think of an individual artist on their own workstation. They are trying to get a perfect rendering of a scene, so they are constantly tweaking attributes and re-rendering. They want all the power they can get in their own box; it's more efficient than trying to distribute it across a network. Other examples include particle or fluid simulations. They are done best on a single shared-memory system, where you can load the particles or fluid elements into a block of memory and let all the cores in your system loose on evaluating separate chunks of it.

I write this sort of code for a living, and we have many customers buying up 8-core machines for individual artists doing exactly this kind of thing.
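The shared-memory pattern described above (one block of particle data, every core working on its own slice) can be sketched as follows. This is a generic illustration, not the commenter's code: the particle layout and function names are hypothetical, and in CPython the global interpreter lock keeps this pure-Python loop from truly running on eight cores at once, so real simulators do the same split in native code.

```python
from concurrent.futures import ThreadPoolExecutor


def chunk_bounds(n_items, n_workers):
    """Split range(n_items) into n_workers contiguous [start, end) slices."""
    base, extra = divmod(n_items, n_workers)
    bounds, start = [], 0
    for w in range(n_workers):
        # The first `extra` workers take one additional item each.
        end = start + base + (1 if w < extra else 0)
        bounds.append((start, end))
        start = end
    return bounds


def advance_chunk(pos, vel, dt, start, end):
    """Move particles start..end-1 forward one time step (simple Euler update)."""
    # Each worker writes only to its own slice of the shared lists,
    # so no two workers touch the same element and no locks are needed.
    for i in range(start, end):
        pos[i] += vel[i] * dt


def step(pos, vel, dt, n_workers=8):
    """Advance every particle by dt, one chunk per worker, all sharing the same lists."""
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        futures = [pool.submit(advance_chunk, pos, vel, dt, s, e)
                   for s, e in chunk_bounds(len(pos), n_workers)]
        for f in futures:
            f.result()  # surface any exception raised inside a worker


pos = [0.0] * 10
vel = [float(i) for i in range(10)]
step(pos, vel, dt=0.5)
```

The design point is that the data never moves: every worker reads and writes the same in-memory arrays, which is exactly what splitting the job across networked machines would give up.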

Chaotic42 - Tuesday, February 5, 2008

Anyone can come up with arbitrary workflows that don't use all of the power of this system. There are, however, some workflows that would use it.

I'm a cartographer, and I deal with huge amounts of data being processed at the same time. I have a mapping program cutting imagery on one monitor, Photoshop performing image manipulation on a second, Illustrator doing TIFF separations on a third, and in the background I have four Excel tabs and enough IE tabs to choke a horse.

Multiple systems make no sense because you need so much extra hardware to run them (in the case of this system, two motherboards, two cases, etc.), and you'll also need space to put the workstations (assuming you aren't using a KVM). You would also need to clog the network with your multi-gigabyte files to transfer them from one system to another for different processing.

That seems a bit more of a hassle than a system like the one featured in the article.
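The network cost of shuttling files between machines is easy to put numbers on. A rough sketch, assuming an idealized link with no protocol overhead (real transfers are slower); the 4GB file size is a hypothetical example:

```python
def transfer_seconds(size_gb, link_mbps):
    """Ideal time to move size_gb gigabytes over a link of link_mbps megabits/s."""
    # Decimal units: 1 GB = 8,000 megabits.
    return size_gb * 8000 / link_mbps


gigabit = transfer_seconds(4, 1000)       # 32.0 seconds
fast_ethernet = transfer_seconds(4, 100)  # 320.0 seconds
```

Every hand-off between machines pays that cost again, which is the overhead a single workstation avoids.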

Cygni - Monday, February 4, 2008

I don't see any problem with what he said there. All you talked about was gaming, but let's be honest here: this is not a system that's going to appeal to gamers, and this isn't a system set up for anyone with price concerns.

In reality, this is a CAD/CAM dream machine, and that's a market where $4,000-5,000 rigs are the low end. In the long run, for even small design or production firms, 5 grand is absolute peanuts and WELL worth spending twice a year to have happy engineers banging away. The inclusion of SLI/CrossFire is going to make these things sell like hotcakes in this sector. There is nothing that will be able to touch it. And that's not even mentioning its uses for rendering...

I guess what I'm saying is: try to realize the world is a little bit bigger than gaming.

Knowname - Sunday, February 10, 2008

On that note, are there any studies on the gains you get in CAD applications by upgrading your video card? How big a role does the GPU really play in the process? The only significant gain I can think of for CAD is quad desktop monitors per card with Matrox cards. I don't see how the GPU (beyond the RAMDAC or whatever it's called) really makes a difference. Please tell me, because I keep wasting my money on ATI cards (not to mention my G550, which I like, but it wasn't worth the money I spent on it when I could have gotten a 6600 GT...) just on the hunch that they'd be better than NVIDIA's due to the 2D filtering and such (not really a big deal now, but...).

HilbertSpace - Monday, February 4, 2008

A lot of the Intel 5000-series chipsets let you use riser cards for more memory slots. Is that possible with Skully?