ASUS ROG Rampage Formula: Why we were wrong about the Intel X48

by Kris Boughton on January 25, 2008 4:30 AM EST - Posted in Motherboards

The Clock Crossing Procedure and MCH Read Delay (tRD)

Have you ever stopped to wonder how the Memory Controller Hub (MCH) manages to transfer data from the system bus (FSB) to the memory bus and vice versa? Maybe you questioned why it was not possible to run your memory at any speed you desired, independent of the selected FSB. While some chipsets have provided this capability, performance was rather spotty at times depending on the particular settings in use. In fact, these systems often exhibited rather large ranges of bus speed and memory speed ratios that refused to function, stable or otherwise. The concept was certainly fair enough, but at the time a well-engineered implementation turned out to be far more difficult than anyone may have been willing to concede. It should not come as a surprise that Intel chipsets have only ever allowed the use of certain validated memory ratios - those same numbers we have grown to love (or hate) like 1:1, 5:4, 3:2, and so forth.

Back in the days of relatively slow-paced memory performance improvements, Intel had no problems pumping out processors with default FSB values that made running memory at synchronous speeds an expensive proposition. Thus they created the "down" divider that allowed the memory subsystem to run at a bus speed below that of the system bus. This allowed for a period of innovation where processor bus architecture was able to improve without placing an undue burden on the memory industry to create reasonably priced modules capable of these increased speeds.

In a lot of ways this also prevented cost-prohibitive aspects of validating higher data transfer rates from ultimately affecting the market as a whole. Because Intel was not obligated to allocate additional funding for the completion of this expensive process, the savings (however small) could be passed on to the consumer. When coupled with the added benefit of allowing for the purchase and use of lower-binned memory, we can easily see why a move such as this helped stimulate the industry into a period of unprecedented growth.

Today's market is much different. Older signaling technologies like Advanced Gunning Transceiver Logic (AGTL+), used almost exclusively by Intel in their monolithic CPU-to-MCH interface architecture, have just about reached their practical limits. As such, "up" dividers, those that allow for memory bus speeds in excess of the system bus speed, are used almost exclusively by high-performance systems. Indeed, it would be hard to imagine ever being satisfied with a system able to make use of memory operating at transfer rates of up to DDR2-667, and no higher. As odd as it may seem, the next generation of memory (DDR3) is based entirely on the concept of running at speeds mandating the use of at least a 2:1 memory bus to system bus frequency ratio.
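To make the divider arithmetic concrete, here is a minimal Python sketch. It assumes that ratios are written DRAM:FSB (as in the 4:3 example used elsewhere in this article), that the FSB figure is the base clock rather than the quad-pumped rating, and that DDR memory transfers data twice per memory clock. The function name `memory_speed` is ours and purely illustrative.

```python
def memory_speed(fsb_mhz, dram, fsb):
    """Return (memory clock in MHz, DDR data rate in MT/s).

    fsb_mhz   -- FSB base clock in MHz (assumed: not the quad-pumped rating)
    dram, fsb -- the two halves of a DRAM:FSB ratio, e.g. 3 and 2 for 3:2
    """
    mem_clock = fsb_mhz * dram / fsb   # the divider scales the FSB base clock
    data_rate = 2 * mem_clock          # double data rate: two transfers per clock
    return mem_clock, data_rate


if __name__ == "__main__":
    for fsb_clock, (d, f) in [(400, (1, 1)), (400, (2, 1)), (450, (3, 2))]:
        clock, rate = memory_speed(fsb_clock, d, f)
        print(f"FSB {fsb_clock} MHz at {d}:{f} -> memory clock {clock:.0f} MHz, "
              f"about DDR-{rate:.0f}")
```

Under these assumptions a 400MHz FSB at 1:1 lands on DDR2-800 territory, while the same FSB needs a 2:1 divider to reach DDR3-1600 speeds.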

When examined from this aspect it becomes clear what DDR3 really is - an early look at the next generation's mainstream memory component. Only when Intel has removed the performance barrier of their antiquated FSB and loosely-coupled MCH will we be fully able to finally experience the real improvements that DDR3 promises to provide. But we digress - speculating about what could have been done better or things to come is trite. Instead, let's concentrate on what can be done now to improve the collective performance of our systems.

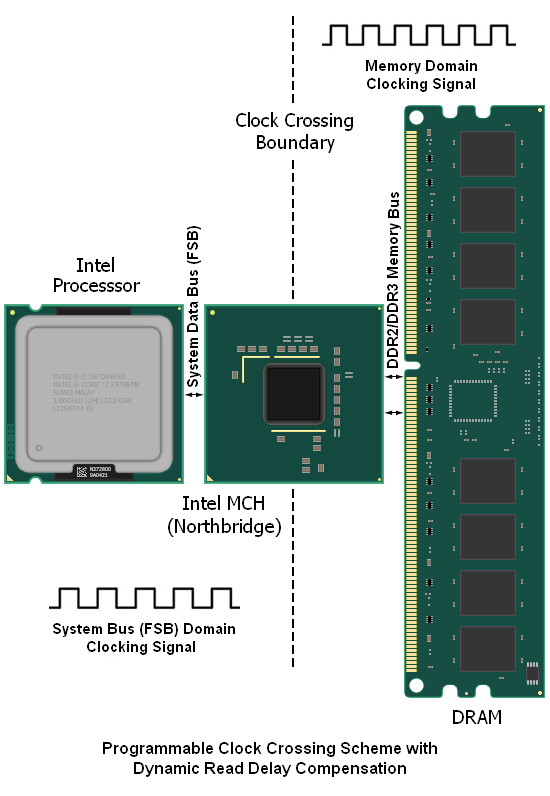

Below is a somewhat basic representation of the problem at hand (and later we will introduce the fairly elegant solution that Intel has crafted in order to tackle said problem). Are you still wondering what the problem is? Put briefly: designing an effective means for transferring data from one bus "domain" to the other, while still respecting the timing requirements for each bus.

We made mention earlier that we believe Intel's solution to be quite elegant. While this is true, the system is still far from perfect as it introduces a significant amount of extra data access latency to each and every memory read request. It might seem ironic that the design initially constructed in order to allow for higher memory speeds ends up being the very reason for the additional latency penalty incurred, but this is the case. Coincidentally, we can apply a similar accounting of the system's flaws when explaining why actualized system performance improvements are always far less than predicted when making use of the larger dividers.

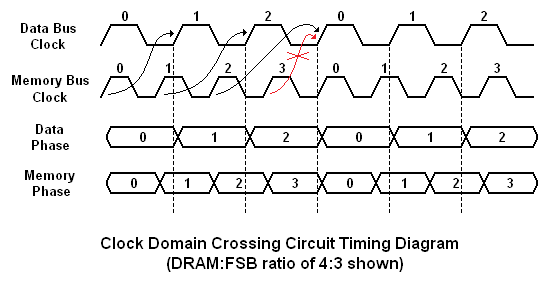

Moving data between two simultaneously running parallel buses requires a fine level of timing control and a fair amount of programmable logic to be successful, especially if the scheme is to remain flexible. The graphic below should help to illustrate the concept. Four memory data clock cycles for every three system data bus cycles (a 4:3 DRAM:FSB memory ratio) means that the MCH can do little more than queue up data requested from the memory for transport to the system bus.

This process works to ensure that no FSB cycles are left unused, but it does little to improve actual memory throughput. Because of this, the gains seen are largely due to the increased transfer efficiency. For the same reason, sustained data rates, while improved, come at the expense of the added latency associated with the transfer scheme. This has the effect of retarding the responsiveness of short bursts of random read activity. Some users [Ed: Androids in disguise?] even swear that they can tell when they are using an Intel system because of the suppression of the "snappy" experience that comes when using true low-latency systems.
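The queueing behavior can be pictured with a toy model. The Python sketch below is not Intel's actual clock crossing logic; it simply assumes that both clocks start aligned and that data arriving on a memory clock edge has to wait for the next FSB clock edge before it can move on. The function name `crossing_waits` is hypothetical.

```python
from fractions import Fraction
from math import ceil


def crossing_waits(dram, fsb):
    """Wait, in FSB clock periods, from each DRAM clock edge to the next FSB edge.

    Covers one repeating window of a dram:fsb (DRAM:FSB) ratio, i.e. `dram`
    memory cycles spread over `fsb` system bus cycles. Toy model only.
    """
    waits = []
    for k in range(dram):
        t = Fraction(k * fsb, dram)  # time of DRAM edge k, measured in FSB periods
        next_fsb_edge = ceil(t)      # first FSB edge at or after that moment
        waits.append(next_fsb_edge - t)
    return waits


if __name__ == "__main__":
    for ratio in [(1, 1), (4, 3)]:
        waits = ", ".join(str(w) for w in crossing_waits(*ratio))
        print(f"{ratio[0]}:{ratio[1]} -> waits of {waits} FSB periods")
```

In this simplified view a 1:1 ratio never has to hold data, whereas at 4:3 only one memory edge in four lines up with an FSB edge and the rest must be buffered for a fraction of an FSB cycle. A real MCH adds its own pipeline stages on top of this, so the sketch shows only why buffering is unavoidable, not how much latency results.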

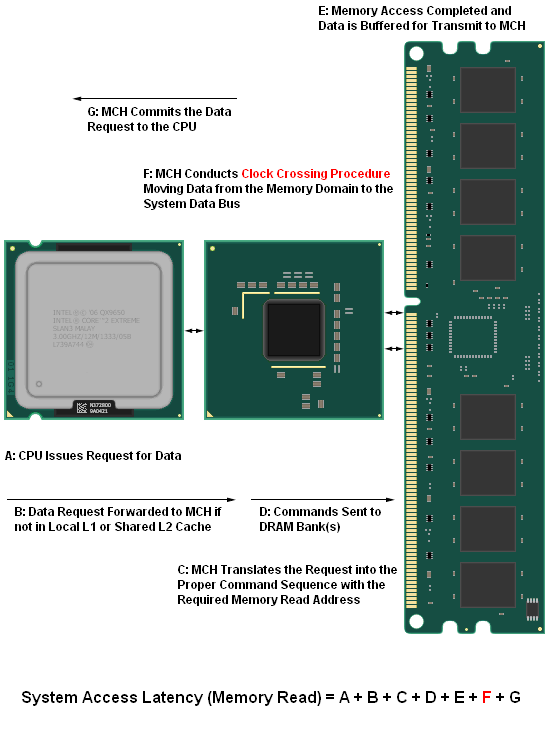

In order to better quantify exactly how the MCH clock crossing procedure influences system performance, it might be helpful to take a step back and examine the different component parameters that contribute to a system's overall memory read (access) latency. The diagram below helps to illustrate just this point. We have identified each distinct operation that takes place during a CPU data request; the sum of the individual latencies involved in each part of the process makes up the total system memory access latency, as measured. As we will see, this time - expressed in nanoseconds (ns) - is critical to overall system performance.

Although the user has at least minor control over just about every aspect of the system as modeled above, there are a few settings that when altered have the potential to drastically impact performance for the better (or worse, depending on how well you understand what you are doing). One of the most well known is the selection of primary memory timings (tCL-tRCD-tRP-tRAS). You may be surprised to learn that the effect of properly setting these values, represented by part "E", is quite insignificant when compared to your choice of tRD (the singular consideration in developing the latency associated with part "F"). This will be our focus for the remainder of our discussion. We have already lightly touched on this mysterious setting on the prior page.
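Since every part of the latency chain is ultimately a count of clock cycles in some domain, a small conversion helper makes the comparison easier. The Python sketch below assumes that tRD is counted in FSB (MCH) base clock cycles; treat that as an assumption of the sketch rather than a statement of how any particular BIOS reports the value. The name `cycles_to_ns` is ours.

```python
def cycles_to_ns(cycles, clock_mhz):
    """Convert a number of clock cycles at clock_mhz into nanoseconds."""
    return cycles * 1000.0 / clock_mhz


if __name__ == "__main__":
    # tRD contribution to part "F", assuming tRD counts FSB base clocks.
    for fsb_mhz, trd in [(400, 6), (450, 5)]:
        print(f"tRD {trd} at {fsb_mhz} MHz FSB -> {cycles_to_ns(trd, fsb_mhz):.2f} ns")

    # The same helper works for a fixed cycle count in the CPU clock domain.
    delta = cycles_to_ns(16, 3000) - cycles_to_ns(16, 4000)
    print(f"16 CPU clocks cost {delta:.2f} ns more at 3GHz than at 4GHz")
```

The second calculation mirrors the figure the author quotes in the comments: 16 CPU clocks take about 1.33ns longer at 3GHz than at 4GHz.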

73 Comments


kjboughton - Sunday, January 27, 2008

The rules as defined may not apply exactly as provided for P35. The equations have been tested to be true for X38/X48 but additional testing is still needed on P35 in order to validate the results.

Super Nade - Saturday, January 26, 2008

Hi,

I love the technical depth of the article. Outstanding writeup! I hope you will NOT dumb down future articles as this is how, IMO, a review should be written.

S-N

Eric Rekut - Saturday, January 26, 2008

Great article! I have a question, is x48 faster in super-pi than p35/x38?

Rajinder Gill - Saturday, January 26, 2008

Hi,

In general the X38/X48 chipset outscores the P35 in Super Pi. The X48 can/will pull ahead of the X38 very marginally IF it can handle a lower overall tRD with a higher FSB combination and tighter memory sub-timing ranges - within an available level of Northbridge voltage.

regards

Raja

Rob94hawk - Saturday, January 26, 2008

I would love to see you guys do benchmarking and overclocking with the QX9770+DDR3 1800 with this mobo.

Rajinder Gill - Saturday, January 26, 2008

Hi Rob,

Kris will be testing the Rampage Extreme soon (with DDR3). The 9770's only show a little more prowess than QX9650's under LN2 cooling (in some instances - not always). With cascade/water/air cooling there's little to separate the QX9650 from the QX9770 (at least in my experience with both processors thus far).

regards

Raja

enigma1997 - Saturday, January 26, 2008

Another excellent article after the QX9650 O/C one. Congratulations!!

I have a few questions: What ram did you use to achieve the amazingly high bandwidth result (the one that goes with the 450FSB and tRD 5)? I understand you are using a divider of 3:2 and CAS5, so I expect the DDR2 speed should be at 10800!!

Also, I am not sure how you can get a memory read of >9000MB/s with tRD 5. I have a pair of G.Skill F2-8000PHU2-2GBHZ 4-4-4-5 and a DFI X38-T2R motherboard. I set it up with a QX9650 with tRD/FSB/ram timing identical to yours, but I only get around 8800MB/s. Note that the CPU runs at 3000Mhz.

Thanks for the article and your answers to my questions :)

kjboughton - Sunday, January 27, 2008

Memory used for the incredible 450FSB/tRD 5 result was OCZ DDR2 PC-9200 Reaper (2GB kit).

Regarding the testing you did at equivalent speeds, contrary to popular belief, CPU speed does influence both system memory read latency and bandwidth (add 16 clocks of whatever the CPU's Tcycle is to total system latency - about an extra 1.33ns going from 4GHz, where I tested, down to the 3GHz used in your system). This is certainly enough to reduce your BW results down below 9GB/s.

Jodiuh - Saturday, January 26, 2008

"we feel there is nothing that needs modification by the end user as long as overclocking aspirations are within reason."

The current Maximus series requires a bit of work (heatgun, fridge) to pull this off and replace with TIM of choice. Also I noticed a 7C drop on the bench when adding a 5CFM 40mm to the NB. Would you mind fleshing out the comment a bit more?

Thanks for the very thorough information in the article!

jedisoulfly - Friday, January 25, 2008

there is a patriot viper ddr3 1600 cl7 kit at newegg for $295 (out of stock at time of this post) that is dramatically higher than good 800 ddr2 or even 1066 but just over a year ago ddr2 800 2gb kits were going for that price. I think once NV and AMD start making chip sets that support ddr3 the prices will start to come down...hopefully